What You’ll Learn

why tests fail

Key Takeaways

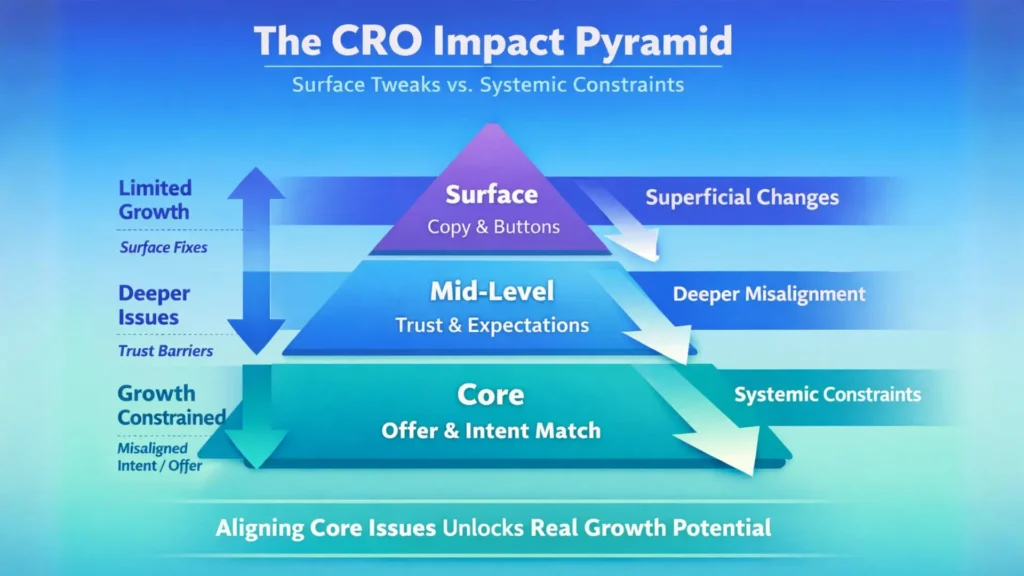

- Conversion tests often fail because they address superficial elements, not underlying offer-intent misalignment, which limits impact from the start.

- Trust deficits and unclear expectations add statistical noise that can obscure true conversion gains, leading to false positives and wasted effort.

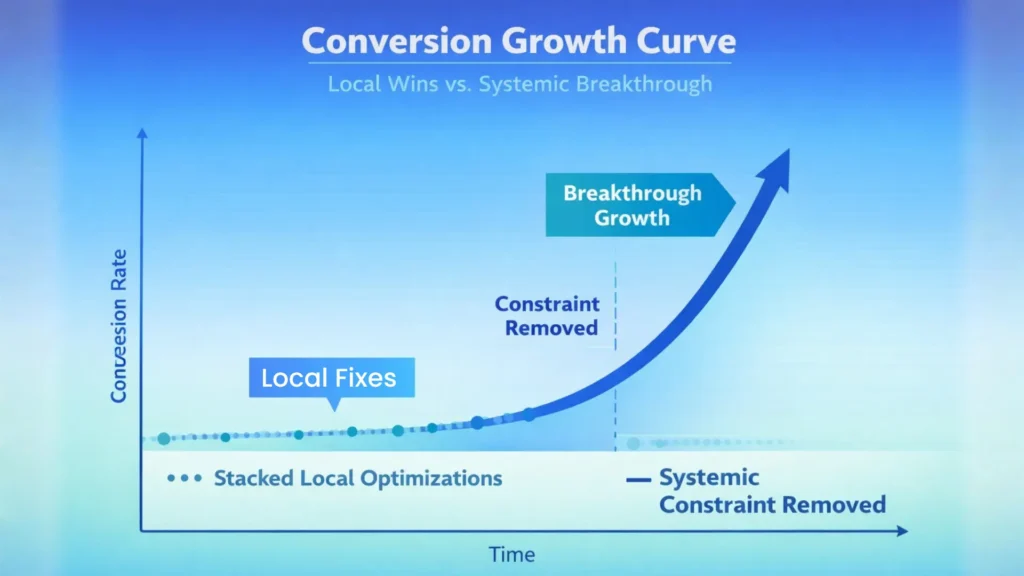

- Small, local optimizations rarely add up to meaningful growth if the core systemic constraints are not uncovered and solved.

- The moment incremental test lifts disappear or aren’t reflected in business KPIs, it’s a signal to focus upstream on repositioning or rethinking the offer.

Most teams blame the page when conversion rates stall, but there’s a deeper truth: the real constraint is rarely on the surface.

Pouring another A/B test onto a misaligned offer is like swapping headlights on a car with no engine – you get a change, but you never move forward.

When testing doesn’t move the needle, what layer is broken?

Diagnostic Questions to Differentiate Strategy vs Testing Problems

| Diagnostic Question | Purpose | Indicator of |
|---|---|---|
| Has your ‘winning’ variation driven leadership-relevant metrics (pipeline, deals)? | Check if tests impact business-critical outcomes | Strategy problem if no; Testing problem if yes |
| Are learnings leading to revisiting product, pricing, or targeting instead of cosmetics? | Identify whether insights push strategic changes | Strategy problem if no; Testing problem if yes |
| Would doubling traffic with your best variation move profit? | Assess potential business impact beyond traffic volume | Strategy problem if no; Testing problem if yes |
| If all tests stopped for 60 days, would the business feel it? | Evaluate whether tests affect core business health | Strategy problem if no (symptom management); Testing problem if yes |

How misaligned intent or offer limits every page tweak

Here’s why most CRO tests fail: every optimization runs head-first into invisible headwinds if the prospect’s intent and the offer don’t match.

You can test button colors, copy, or forms all year, but if someone lands expecting a checklist and finds a sales pitch, even the cleverest tweak can’t save the session.

We’ve seen SaaS clients obsess over micro-copy changes, yet their traffic came from blog searches, not buying intent.

Lifts were marginal at best, because the match between user intent and core offer was off – no page element can conjure urgency where motivation or need is missing.

Ask yourself: what percentage of visitors actually see your offer as a fit for their problem?

If that number is low, every test is capped before it runs.
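To make that cap concrete, here is a minimal arithmetic sketch. It assumes, purely for illustration, that visitors who do not see the offer as a fit never convert; `conversion_ceiling` and every number in it are hypothetical, not client data.

```python
def conversion_ceiling(fit_share: float, rate_among_fit: float) -> float:
    """Overall conversion rate if only `fit_share` of visitors can convert.

    Simplifying assumption: visitors who see no fit convert at 0%.
    """
    return fit_share * rate_among_fit


# Hypothetical baseline: 20% of visitors see a fit, 10% of those convert.
baseline = conversion_ceiling(0.20, 0.10)            # about 2.0% overall

# A strong page test: +15% relative lift among visitors who already see a fit.
after_page_test = conversion_ceiling(0.20, 0.115)    # about 2.3% overall

# Same page, better intent-offer match: 30% of visitors now see a fit.
after_better_match = conversion_ceiling(0.30, 0.10)  # about 3.0% overall

print(f"baseline:           {baseline:.2%}")
print(f"after page test:    {after_page_test:.2%}")
print(f"after better match: {after_better_match:.2%}")
```

Under these assumptions, a page win that would headline any test report adds about 0.3 percentage points, while widening the share of visitors who see a fit adds a full point without touching the page.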

Here’s the myth: “Fix the page and you fix the funnel”.

In reality, the funnel is only as strong as its tightest intent-offer match.

This is a classic example of systemic CRO failure patterns – problems that repeat not because of surface-level execution, but because the true blocker is never surfaced or solved.

When intent and offer align, even average pages perform above baseline.

When they don’t, improvement is an illusion.

Tuning the page while the fundamental connection is missing is chasing shadows.

Why trust and expectation gaps act like noise on conversions

Imagine running a test where half your visitors believe your promise and half don’t.

The gap in trust introduces so much noise that meaningful signals get lost in the static.

We once watched a fintech client’s signup rate bounce up and down week after week, only to discover their main call-to-action raised more questions than it answered.

The real problem wasn’t the design; it was missing credibility and unclear expectations that created friction invisible in analytics.

This is the CRO testing blind spot: small trust deficits flood your data with false positives and ambiguous trends.

Every unaddressed doubt compounds, making it impossible to identify a true winner, let alone replicate it at scale.

It’s like trying to pick out a single voice in a crowded airport – you don’t need a louder microphone, you need fewer distractions.

Executive takeaway: If your tests plateau, suspect the upstream.

When intent and trust go unchecked, every new test runs into the same hidden wall, no matter how creative the page tweaks.

Why small wins on pages rarely add up over time

Most CRO wins feel bigger than they are.

Stack a dozen local improvements, and you expect your conversion graph to arc gracefully upward – until reality intervenes, and total growth barely stirs.

What gives?

Local gains failing to scale: the illusion of progress

The CRO illusion: fix enough small things, and surely the numbers must rise.

But reality cuts sharper – while individual tweaks occasionally move a micro-metric, those wins almost never aggregate into real, lasting growth.

We’ve seen teams log a dozen site improvements, only to discover their main conversion rates are flat.

Most ‘lifts’ quietly offset or overlap, with underlying constraints – like offer mismatch – erasing systemic progress.

The trap: When local experiments masquerade as momentum, teams risk overselling incremental progress.

True compounding only happens when you solve the right systemic bottleneck.

Traffic volatility and sample noise hide real trends

Here’s the trap: believing short-term changes in conversion rate actually reflect improvements, when more often you’re just seeing echoes of volatility.

Daily swings in traffic sources, targeting algorithms, or external events can disguise test outcomes.

We’ve seen clients celebrate a 7% lift, only to watch it shrink to statistical insignificance once data stabilized.

What’s happening?

Noise, not signal, is driving the result.

Consider sample size the way you’d view weather: a sunny morning doesn’t mean the climate is changing.

Drawing conclusions on thin data or variable traffic periods can warp your perception of impact, and a string of false positive “wins” only compounds confusion.

Pause to ask: is that uptick real, or just a temporary artifact of randomness?
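One way to answer that question is a standard two-proportion z-test plus a rough sample-size estimate. The sketch below uses only the Python standard library; the traffic and conversion figures are invented for illustration, and the function names are our own.

```python
from math import ceil, sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def visitors_per_arm(base_rate: float, relative_lift: float,
                     alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per variant to detect a given relative lift."""
    p1 = base_rate
    p2 = base_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical test: 5,000 visitors per arm, 150 vs 161 conversions (~7% lift).
p_value = two_proportion_p_value(150, 5000, 161, 5000)
needed = visitors_per_arm(base_rate=0.03, relative_lift=0.07)

print(f"p-value: {p_value:.2f}")           # about 0.53, far from significant
print(f"visitors needed per arm: {needed}")
```

With these made-up numbers, the "7% lift" is indistinguishable from chance, and reliably detecting a lift that small at a 3% base rate would take on the order of 100,000 visitors per variant.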

Local fixations drain energy.

Sustainable growth requires surfacing the systemic constraint, not treating every page bump as gold.

The teams that escape this trap see through the illusion, focus on causal bottlenecks, and save their effort for changes that echo across the entire funnel.

What structural testing missteps obscure your true constraints

Most conversion wins are declared in a rush – sometimes after a few days, sometimes after a week – because the uplift looks enticing on the chart.

But here’s the surprise: claiming victory too early is the fastest way to chase your own tail.

Winning tests that don’t reflect real, systemic change create more noise than clarity.

It’s like celebrating a coin flip that landed heads, then betting the business on a pattern that doesn’t exist.

Calling winners too early or on false positives

There’s a persistent myth that fast wins from CRO tests mean you’ve identified a true constraint.

In practice, we’ve seen the opposite: more tests are called “winners” on a wave of luck or seasonal blips than on durable insight.

Clients often celebrate a 7% uplift, only to watch performance fade when traffic volume returns to baseline.

The emotional whiplash is real – one client burned through three homepage iterations, each with short-term gains, none with year-end impact.

Much of this comes down to statistical volatility.

A/B tests on low sample sizes, or run for too short a window, are breeding grounds for false signals.

The excitement of an upward blip masks the reality: the supposed breakthrough was just noise dressed as progress.
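A small simulation shows how checking results daily and stopping at the first "significant" reading manufactures winners. This is a sketch under stated assumptions: both variants are identical (an A/A test), the traffic and conversion numbers are arbitrary, and the helper names are ours.

```python
import random
from math import sqrt
from statistics import NormalDist


def is_significant(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   alpha: float = 0.05) -> bool:
    """Two-proportion z-test; True if the difference looks 'significant'."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return False
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z))) < alpha


def simulate_aa_tests(runs: int = 400, days: int = 14, daily_visitors: int = 200,
                      rate: float = 0.05, seed: int = 7) -> tuple[float, float]:
    """Return (share of runs 'won' at any daily peek, share significant on the last day)."""
    rng = random.Random(seed)
    won_at_any_peek = 0
    significant_at_end = 0
    for _ in range(runs):
        conv_a = conv_b = visitors = 0
        peeked_winner = False
        final = False
        for _day in range(days):
            visitors += daily_visitors
            # Both arms share the same true rate, so any "win" is a false positive.
            conv_a += sum(rng.random() < rate for _ in range(daily_visitors))
            conv_b += sum(rng.random() < rate for _ in range(daily_visitors))
            final = is_significant(conv_a, visitors, conv_b, visitors)
            peeked_winner = peeked_winner or final
        won_at_any_peek += peeked_winner
        significant_at_end += final
    return won_at_any_peek / runs, significant_at_end / runs


peeking_rate, fixed_rate = simulate_aa_tests()
print(f"false winners when peeking daily:   {peeking_rate:.0%}")
print(f"false winners at the planned end:   {fixed_rate:.0%}")
```

Because nothing actually differs between the variants, every declared winner here is noise. Waiting for the planned end date should keep the false-winner rate near the nominal 5%, while stopping at the first significant daily reading typically pushes it several times higher.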

How many CRO teams are stuck on this carousel – confident they’re compounding value, but endlessly circling the same few basis points?

Repeatable insight: The more eager you are to find a “win”, the less likely you are to actually move the business.

Testing superficial elements instead of leverage points

It’s tempting – sometimes irresistible – to focus on testing color, button size, or headline order because they’re easy levers to pull.

But the hard observation is this: superficial changes rarely touch the real constraint.

Testing low-impact elements feels productive but leaves upstream bottlenecks – like offer clarity, segment targeting, or risk reversal – untouched.

We’ve watched teams run dozens of headline tests while ignoring that their buyers never understood the offer in the first place.

The page changes, but the fundamental question goes unanswered: are you actually testing the variable that will unlock growth?

Think of it like inflating a balloon with pinholes.

No matter how much you pump, real lift escapes elsewhere.

If conversion rate plateaus persist, it’s often proof that the surface is not the lever.

So, next time you plan a test, ask: Is this adjustment going to break through a constraint, or just rearrange the deck chairs?

Calling test wins before they’re validated – or optimizing trivial elements – keeps most teams running in circles.

Progress starts when you spot the true lever and ignore the crowd noise.

What to consider next: are you testing symptoms or constraints?

Most teams pour time into optimizing a page element, convinced it’s a breakthrough – only to see the graph flatline.

It’s a setup: the visible issue is almost never the constraint that’s quietly capping growth.

The real challenge isn’t finding another test; it’s knowing whether your focus is an itch or the infection.

Questions to decide if this is a strategy or testing problem

Common Reasons CRO Tests Fail Due to Intent-Offer Misalignment

| Reason | Description | Impact on CRO Tests |
|---|---|---|
| Misaligned User Intent | Visitors expecting specific content (e.g., a checklist) find a sales pitch instead | Reduces session engagement and conversion lift |
| Offer Does Not Fit Problem | Offer doesn’t address the visitor’s core problem or motivation | Caps possible conversion rate improvements |
| Traffic Source Mismatch | Traffic comes from non-buying intent (e.g., blog search vs buying intent) | Limits impact of page element optimizations |
| Low Percent of Visitors Seeing Fit | Few visitors perceive the offer as relevant | Prevents tests from reaching full potential |

If your tests win but revenue doesn’t budge, you’re not alone – one client cycled through fifteen headline fixes before we realized the offer itself put buyers on pause, not the copy.

The myth: every friction can be resolved with iterations.

The reality: if you’re mending symptoms, you prolong diagnosis and rack up opportunity costs.

Here’s how we diagnose the line between testing problem and strategy problem:

- Has your “winning” variation ever driven a metric that leadership actually cares about: not just CTR, but pipeline or closed deals?

- Are your learnings leading you to revisit product, pricing, or target segment, or just cycling cosmetics?

- Would doubling traffic – even with your best version – actually move profit?

If you answer no or hesitate, the test is probably a bandage over a pressure leak upstream.

Think of it like adjusting stage lights when the sound system is dead – the show won’t improve.

There’s one question we repeat in client rooms: “If you stopped all tests for 60 days, would the business feel it?”

If the answer is no, you’re not in the testing business – you’re in the symptom management business.
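If it helps to run this as a repeatable review, the four questions can be written down as a simple checklist. The sketch below is only one way to encode them; the class, field names, and labels are our own, and a hesitation (`None`) is treated the same as a no.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DiagnosticAnswers:
    """Answers to the four questions; None means the team hesitated."""
    winner_moved_leadership_metric: Optional[bool]
    learnings_reach_product_pricing_or_segment: Optional[bool]
    doubling_traffic_would_move_profit: Optional[bool]
    business_would_feel_60_day_pause: Optional[bool]


def diagnose(answers: DiagnosticAnswers) -> str:
    """Any 'no' or hesitation points upstream; all 'yes' points at test execution."""
    checks = [
        answers.winner_moved_leadership_metric,
        answers.learnings_reach_product_pricing_or_segment,
        answers.doubling_traffic_would_move_profit,
        answers.business_would_feel_60_day_pause,
    ]
    if all(check is True for check in checks):
        return "testing problem"
    return "strategy problem"


# Hypothetical team: tests win on CTR, but nothing reaches pipeline or profit.
example = DiagnosticAnswers(
    winner_moved_leadership_metric=False,
    learnings_reach_product_pricing_or_segment=None,  # hesitated
    doubling_traffic_would_move_profit=False,
    business_would_feel_60_day_pause=False,
)
print(diagnose(example))
```

The point of writing it down is not automation; it is forcing an explicit yes or no on each question before the next test gets scheduled.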

When page-level iterating is still valid – and when to call it quits

Yet, not every stalled test points to a strategic overhaul.

Sometimes, the right move is another round of iteration, provided two signals hold:

- evidence of life, like a test nearly shifting a lagging metric, and

- a clear hypothesis that still ties directly to an unresolved user objection or friction.

What’s not valid?

Endless button color tweaks or copy edits that move micro-conversions but never cross over into actual transaction volume or qualified leads.

We’ve seen companies triple experiment counts but paddle in place on topline KPIs.

That’s when iterating is just noise dressed as motion.

Think of iterating at this stage like sharpening a pencil that’s already broken in half: no matter how sharp the lead, the line won’t get longer.

Unless you see accumulating impact, continued testing is just ritual.

The strongest signal to call it quits: the lift from each test gets smaller, and insights blur into confirmation of what you already know.

That’s when you pivot focus upstream – offer, targeting, or value proposition – where constraints are hiding.

The core idea: If your tests only diagnose symptoms, a breakthrough won’t come from more tweaks.

But if each test chips away at the real constraint, you’ll see momentum spill from the page into the business.

Time to choose: Are you treating pain, or curing the disease?

Scientific context and sources

The sources below provide foundational context for how decision-making, attention, and performance dynamics evolve under scaling and constraint conditions.

- Cognitive biases undermine decision accuracy

“The Impact of Cognitive Biases on Professionals’ Decision-Making: A Review of Four Occupational Areas” – V. Berthet – Frontiers in Psychology

Shows that decision-making is systematically distorted by biases such as overconfidence, confirmation bias, and anchoring, leading to predictable errors in judgment that affect outcomes even in structured environments. This helps explain why CRO changes often fail when underlying bias-driven behavior is not addressed.

https://pmc.ncbi.nlm.nih.gov/articles/PMC8763848/

- Matching offers with intent in digital marketing

“Consumer Decision Making in Online Shopping Environments: The Effects of Interactive Decision Aids” – Häubl & Trifts – Marketing Science

Demonstrates that alignment between user intent and presented options significantly affects decision quality and purchase likelihood, showing that mismatched structures reduce conversion even when usability is high.

https://pubsonline.informs.org/doi/10.1287/mksc.19.1.4.15178

- Statistical volatility in A/B testing

“Trustworthy Online Controlled Experiments: A Practical Guide to A/B Testing” – Ron Kohavi et al. – Cambridge University Press / Microsoft Research

Shows that many A/B test results are unreliable due to sample noise, insufficient power, and misinterpretation, leading to false positives and incorrect optimization decisions in CRO programs.

https://experimentguide.com

- Trust, signal detection, and conversion performance

“Dispositional Trust and Distrust Distinctions in Predicting High- and Low-Risk Internet Expert Advice Site Perceptions” – McKnight, Choudhury, Kacmar – e-Service Journal

Provides empirical evidence that trust is a primary driver of online transaction behavior, and that weak or inconsistent signals reduce conversion by increasing perceived uncertainty and decision hesitation.

https://www.jstor.org/stable/10.2979/esj.2004.3.2.35

- Breakdowns in scaling: Local vs systemic optimization

“Complexity and the Limits of Optimization” – W. Brian Arthur – Santa Fe Institute / Complexity Economics

Shows that in complex systems, local optimizations often fail to scale because system-wide constraints dominate performance, explaining why CRO improvements plateau when upstream constraints are not addressed.

https://sites.santafe.edu/~wbarthur/complexityeconomics.htm

Questions You Might Ponder

Why do most conversion tests fail to improve profit?

Most conversion tests fail because they target surface-level elements rather than addressing misalignments between user intent and the product offer. Without correcting these upstream constraints, any observed uplift is temporary or illusory and rarely results in real revenue growth.

How does intent-offer misalignment affect conversion rates?

Intent-offer misalignment means visitors’ expectations do not match what the page offers. This disconnect caps conversion from the start, making even well-designed pages ineffective if the core promise and the target audience’s needs aren’t properly matched.

What are false positives in A/B testing, and why are they risky?

False positives occur when a test result shows improvement due to statistical noise, insufficient sample size, or traffic volatility rather than real change. Relying on these misleading signals can lead teams to repeat the same mistakes, wasting resources and masking the real constraints.

How can trust and expectation gaps undermine conversion optimization?

Gaps in trust or unclear expectations introduce noise into test data, making it difficult to discern what truly works. This can result in inconsistent user responses and unreliable data trends, often leading teams to chase irrelevant tweaks rather than fix the trust deficit.

When should businesses stop page-level iterating and refocus strategy?

If test improvements plateau, new tweaks produce diminishing returns, or ‘winning’ variations don’t move business KPIs, it’s time to pause. Businesses should then assess offer, targeting, and value proposition – areas where real constraints and growth opportunities likely lie.