What You’ll Learn

Why CRO wins don’t repeat

Key Takeaways

- Not all CRO “wins” repeat; many are driven by fleeting novelty or only affect narrow audience segments, causing gains to evaporate at scale.

- Changes in traffic mix, device, or channel context can undo past conversion gains, exposing the risk of relying on historic test results for future forecasts.

- Statistical noise and impatience (like early test stopping) inflate false positives, so many perceived wins are not truly sustainable or scalable.

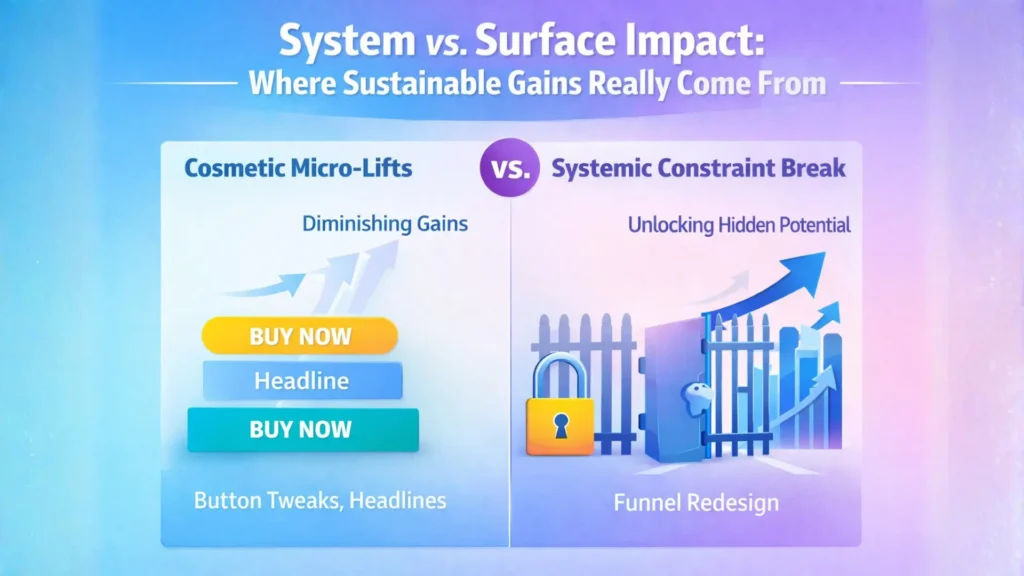

- Lasting business results come from fixing system-level constraints, not stacking superficial test lifts; actionable learning often comes from losing tests.

Most executives celebrate a strong A/B test lift – until the real numbers hit the quarterly report and the gain has vanished.

If your latest “winning” experiment doesn’t move the monthly dashboard, the problem isn’t bad luck.

It’s a blindness to repeatability risk built into most conversion programs.

When a ‘winning’ test doesn’t scale – what that means for outcomes

Picture this: a design tweak, fresh headline, or bold button color suddenly spikes conversions.

It feels like striking oil.

But here’s the catch – many of these wins aren’t real shifts in user decision-making.

They’re short-term boosts, driven by novelty bias.

People notice what’s new, not necessarily what’s better.

How novelty effects can create temporary lifts that fade

Comparison of Novelty-Driven Lifts vs. Sustainable Lifts

| Aspect | Novelty-Driven Lift | Sustainable Lift |
|---|---|---|
| Duration | Short-term, fades in weeks | Long-lasting, stable over time |
| User Behavior | Driven by initial curiosity or surprise | Reflects lasting value and improved decision-making |
| Effect on Metrics | Spikes during launch, then flatlines | Consistent positive impact on business metrics |
| Scalability | Often does not scale beyond test audience | Scales reliably across entire user base |

We’ve seen cases where teams celebrated a 15% jump, only to watch it flatline once users adjusted and the “new” became normal.

It’s like switching the store entrance – shoppers pause, react, maybe even try the new path.

After a week, traffic patterns revert because the move didn’t match how people actually shop.

The initial excitement simply fades.

A common myth: If it worked this week, it’ll keep working at scale.

In reality, novelty wears off fast, especially in high-frequency digital environments.

One repeatable insight: Lasting change is the exception, not the rule, when lifts depend on surprise more than value.

What’s worse, these fleeting “wins” look excellent in post-test reports but disappear in actual business metrics.

How do you spot the difference?

Watch for lifts that coincide only with the launch window and erode in weeks.

Sustainable conversion gains outlive their debut.

Why segmented success may not reflect your entire audience

Impact of Segment-Level CRO Wins vs. Overall Audience Effect

| Segment | Example Outcome | Effect on Overall Business |
|---|---|---|
| Segment with Strong Lift | Desktop users +10% sales | Positive contribution, but limited to segment |
| Segment with No Change | Mobile users 0% change | No impact, dilutes overall lift |
| Segment with Negative Impact | Tablet users -5% sales | Offsets gains, reducing total uplift |
| Overall Audience | Aggregate of all segments | Lift approaches zero or very low when combined |

You may see a strong bump… but only for one slice of your market.

That might feel like progress, but segmentation wins carry hidden risks.

Patchwork improvements in a test-friendly segment (say, mobile-first visitors or email repeaters) can be offset by no movement – or even losses – elsewhere.

One client watched a checkout experiment boost sales by 10% among desktop users.

Mobile? Flat.

Tablet? Negative.

When rolled out across the entire audience, the overall lift faded to nearly zero.

Think of it like reconfiguring aisles for morning shoppers but making evening traffic worse – your total sales end up unchanged.

The core myth: All audience wins are additive.

The reality: Success in one group might mask stagnation, or even cannibalization, in another.
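To make the dilution concrete, here is a minimal sketch of how per-segment lifts roll up into one overall number. The +10%, 0%, and -5% lifts mirror the client example above; the traffic volumes and baseline conversion rates are invented for illustration, so treat the output as an example of the arithmetic, not a benchmark.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    name: str
    visitors: int         # hypothetical traffic volume
    baseline_rate: float  # hypothetical control conversion rate
    relative_lift: float  # e.g. 0.10 means +10% versus control

def overall_lift(segments):
    """Relative lift across all segments, weighted by baseline conversions."""
    base = sum(s.visitors * s.baseline_rate for s in segments)
    variant = sum(
        s.visitors * s.baseline_rate * (1 + s.relative_lift) for s in segments
    )
    return variant / base - 1

segments = [
    Segment("desktop", 15_000, 0.040, 0.10),
    Segment("mobile", 70_000, 0.020, 0.00),
    Segment("tablet", 15_000, 0.030, -0.05),
]

print(f"overall lift: {overall_lift(segments):.1%}")
```

With these assumed volumes, the headline +10% on desktop shrinks to roughly 1.5% overall, because each segment only counts in proportion to the conversions it already contributes.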

Are you tracking cumulative impact across every segment, or just spotlighting wins where the light is best?

Executives need more than celebratory test results – they need the full map, showing where gains come at a hidden cost elsewhere.

Focusing on universal outcome, not just isolated lifts, turns CRO from a slot machine to a business lever.

Short-term wins that vanish and segmented lifts that don’t add up can quietly drain momentum from your growth engine.

Sustainable outcomes come from understanding – and correcting – these hidden repeatability traps.
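One rough way to check whether a lift outlives its launch window is to compare the lift in the first week with the average lift in the weeks that follow. The sketch below assumes weekly conversion rates for control and variant are available; the function names, the one-week launch window, and the 50% retention threshold are all illustrative choices, not an established standard.

```python
def weekly_lifts(control_rates, variant_rates):
    """Relative lift of variant over control for each week."""
    return [v / c - 1 for c, v in zip(control_rates, variant_rates)]

def looks_like_novelty(lifts, launch_weeks=1, retention_floor=0.5):
    """Flag a lift whose post-launch average keeps less than
    `retention_floor` of the launch-window average (assumed heuristic)."""
    later = lifts[launch_weeks:]
    if not later:
        return False  # nothing to compare against yet
    launch = sum(lifts[:launch_weeks]) / launch_weeks
    if launch <= 0:
        return False  # no initial lift to fade
    return sum(later) / len(later) < retention_floor * launch

# Hypothetical four-week readout: a 15% launch-week lift that erodes.
control = [0.030, 0.030, 0.031, 0.030]
variant = [0.0345, 0.0318, 0.0316, 0.0303]

lifts = weekly_lifts(control, variant)
print([f"{x:.1%}" for x in lifts])
print("novelty suspected:", looks_like_novelty(lifts))
```

A check like this cannot prove a novelty effect, since weekly numbers are noisy too, but it gives reviewers a reason to keep a test running before the result is written into a forecast.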

How shifts in context undo past gains

Most leaders treat a CRO win as if it’s baked into tomorrow’s forecast.

The reality: what moved the needle last quarter might fade to background noise today – simply because your audience, channel, or device mix changed under your feet.

Imagine relying on last year’s weather to plan next week’s event.

The same blind spot applies to digital tests.

Five forces drive most context drift: changes in traffic mix, intent maturity, device use, channel source, and seasonality.

If any of these variables shift after a winning test, your apparent gain can erode – or even reverse – regardless of your page or process.

Traffic mix and intent drift erode consistent performance

A CRO win is never insulated from market shifts.

If your traffic swings from organic to paid, or buyers turn into window-shoppers, even last month’s big win can fade out overnight.

User context – who arrives, on what device, and with what intent – will quietly rewrite your results.

For one client, a homepage redesign spiked conversions – until a surge in mobile searchers with lower buying intent flooded in post-campaign.

The lift didn’t vanish because the test was flawed.

The profile of visitors transformed.

This is the silent risk: conversion rate is not a fixed property of your page, but a moving average of ever-shifting people and purposes.

Are you measuring a page, or waves of intent washing through your funnel?

That distinction decides if your last win sticks – or disappears at scale.
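The "moving average" framing can be written as a traffic-weighted sum. In this minimal sketch the page and the per-segment conversion rates stay exactly the same; only the visitor mix changes. The segment names, rates, and mix percentages are hypothetical.

```python
def blended_rate(mix, rates):
    """Traffic-weighted conversion rate; `mix` shares must sum to 1."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * rates[name] for name, share in mix.items())

# Hypothetical per-segment rates that do not change between periods.
rates = {"organic_desktop": 0.045, "paid_mobile": 0.015}

before = blended_rate({"organic_desktop": 0.6, "paid_mobile": 0.4}, rates)
after = blended_rate({"organic_desktop": 0.3, "paid_mobile": 0.7}, rates)

print(f"before: {before:.2%}, after: {after:.2%}")
print(f"relative change: {after / before - 1:.1%}")
```

Under these assumptions the blended rate falls from 3.3% to 2.4% without any change to the page, which is why a forecast built on last quarter's mix can miss.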

Device and channel shifts change how variants behave

Test results look persuasive – until you slice them by device or source.

A layout that streamlines desktop journeys may choke on a small smartphone screen.

Similarly, the message that hooked email subscribers will feel generic to hurried ad traffic.

We’ve seen variations deliver a 20% lift on desktop, only to dip negative on mobile, nullifying gains in aggregate.

Channels introduce their own friction and assumptions; a test run in direct traffic might fail outright among first-time paid users arriving mid-scroll or mid-commute.

In CRO, context is king and it changes every week.

What if your winning design is just the right answer for yesterday’s mix of devices and sources?

Treating all user flows as interchangeable is a costly myth.

The same page is often a different experience depending on where – and how – someone arrives.

The threads holding a CRO lift together are thinner than most teams realize.

Wins unravel when context – traffic, intent, channel, or device – moves outside the narrow frame of your original test.

The next section reveals what statistical signals are, and aren’t, telling you about the durability of those results.

What statistical noise tells us – and what it doesn’t

That “statistically significant” result might just be statistical trickery.

Here’s the catch: even a flawless-looking test can deliver a false win – or worse, create false hope for a result that never materializes outside your data set.

Most executives don’t realize how noise, sampling quirks, and human impatience quietly risk their next big bet.

Early stopping and sample noise inflate false positives

There’s a hidden danger in watching a test dashboard too closely: the urge to call a winner before the story is finished.

Most teams, when they see a sudden spike, want to lock in gains quickly.

But acting on this is like judging a football game at halftime because your team’s ahead – it ignores all the future plays and reversals.

In day-to-day practice, we see teams get excited the moment significance turns green, pausing a test days or even weeks before it stabilizes.

The result?

False positives – not true improvements, just random fluctuations dressed up as insight.

It’s easy to forget that in conversion testing, every refresh brings new traffic flavors, intent mixes, and device randomness.

A test that looks golden on Monday can look average or even negative by Friday.

This is not a theoretical risk.

In one executive review, a supposed “35% lift” vanished when the test ran just one week longer – revealing it was random chance.

One decision, rushed by dashboard optimism, cost months of inaccurate projections downstream.

Imagine making a growth bet based on weather that changes minute by minute.

When it comes to noise, ask yourself: what’s the cost of being wrong, not just the benefit of being right?
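The effect of calling a winner early can be illustrated with a small simulation of A/A tests, where both arms share the same true conversion rate and every "winner" is a false positive by construction. This is a sketch under stated assumptions: a two-proportion z-test at the usual 1.96 threshold, 20 evenly spaced peeks, and an arbitrary 10% conversion rate. It is not how any particular testing tool computes significance.

```python
import math
import random

def z_stat(conv_a, conv_b, n):
    """Two-proportion z statistic for equal-sized arms of n visitors each."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = math.sqrt(2 * pooled * (1 - pooled) / n)
    return 0.0 if se == 0 else (conv_b / n - conv_a / n) / se

def simulate(n_sims=500, peeks=20, batch=200, rate=0.10, z_crit=1.96, seed=7):
    """Return (false-positive rate with peeking, rate at fixed horizon)."""
    rng = random.Random(seed)
    peek_hits = final_hits = 0
    for _sim in range(n_sims):
        conv_a = conv_b = n = 0
        flagged = False
        for _peek in range(peeks):
            # Both arms draw from the same rate, so there is no real effect.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            if abs(z_stat(conv_a, conv_b, n)) > z_crit:
                flagged = True  # a peeking team would have stopped here
        peek_hits += flagged
        # The statistic after the last batch is the fixed-horizon decision.
        final_hits += abs(z_stat(conv_a, conv_b, n)) > z_crit
    return peek_hits / n_sims, final_hits / n_sims

peeking_fp, fixed_fp = simulate()
print(f"false positives when stopping at first significance: {peeking_fp:.1%}")
print(f"false positives when judged once at the end: {fixed_fp:.1%}")
```

Because the final look is also one of the peeks, the peeking rate can never be lower than the fixed-horizon rate; in runs like this it typically lands well above the nominal 5%. Sequential methods such as the always-valid inference approach cited in the sources below are designed to allow repeated looks without that inflation.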

Test results aren’t additive – small gains don’t compound reliably

Picture a team stacking five 5% wins and expecting a 25% leap.

In practice, small gains rarely add up so neatly.

Hidden interactions, user adaptations, and shifting constraints mean most individual lifts overlap or even cancel each other out.
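The gap between the forecast and the outcome is easy to see in numbers. The sketch below compounds five 5% wins and then applies a single, purely hypothetical "realized share" to stand in for overlap, adaptation, and shifting constraints. The 30% figure is an assumption chosen for illustration, not a measured value.

```python
from math import prod

def stacked_lift(lifts, realized_share=1.0):
    """Compound relative lifts, keeping only `realized_share` of each one."""
    return prod(1 + lift * realized_share for lift in lifts) - 1

wins = [0.05] * 5

naive = stacked_lift(wins)            # every win holds in full, independently
discounted = stacked_lift(wins, 0.3)  # hypothetical: 30% of each lift survives

print(f"forecast if all wins compound: {naive:.1%}")
print(f"if only 30% of each persists:  {discounted:.1%}")
```

Full compounding would give about 27.6%, slightly more than the 25% from simple addition. Once each lift is discounted, the total drops to under 8%, which is closer to the "fraction of expected" pattern described here.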

Our team has run portfolios of dozens of winning experiments, only to discover the aggregate sitewide lift is a fraction of what was expected.

Why?

Bottlenecks move.

Visitor behavior adapts.

Sometimes, a change helps Segment A but degrades the experience for Segment B.

It’s like tuning a race car engine – add power to one cylinder, and the extra strain exposes weaknesses everywhere else.

If executives cling to the idea that small test lifts always combine for compounding profit, they’ll be confused when big-picture growth stalls.

A repeatable insight: In optimization, not every ‘win’ is a brick in the wall – some are just smoke.

Business decisions shouldn’t rest on statistical signals alone.

Clarity comes from understanding what those numbers actually mean for sustainable momentum.

Reframing CRO decisions through system-level impact, not isolated tests

Most teams chase CRO “wins” like bonus points – racking up minor boosts and celebrated tweaks.

But here’s the disconnect: a stream of test victories doesn’t always create business momentum.

What if those shiny metrics are distractions, keeping decision-makers busy while true constraints remain untouched?

Executives expecting a parade of compounding lifts end up disappointed as growth plateaus despite a history of winning experiments.

Prioritize changes that challenge system constraints, not cosmetic tweaks

Most growth plateaus aren’t triggered by neglected button colors – they’re the result of unaddressed, system-level bottlenecks.

It doesn’t matter how many A/B tweaks patch the surface if a single step upstream loses users en masse.

Clients often ask why multiple micro-lifts never add up.

The answer: if a process bottleneck is unaddressed, every downstream win gets capped by the same ceiling.

One retail client saw twenty separate page tweaks have little effect – until we re-engineered the account creation step that killed 40% of intent.

Not all wins are equal in the system view: big, constraint-releasing moves shift outcomes; superficial tweaks get lost in statistical noise.
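A simple multiplicative funnel shows why a constraint fix tends to outweigh a cosmetic tweak. In this sketch the 40% loss at account creation comes from the client example above; every other step rate, the 3% tweak, and the improved 80% pass-through are hypothetical numbers chosen to illustrate the comparison.

```python
from math import prod

def orders_per_1000(step_rates):
    """Orders from 1,000 visitors, given the pass-through rate of each step."""
    return 1000 * prod(step_rates.values())

# Hypothetical funnel; account creation passes only 60% (loses 40%).
funnel = {
    "product_page": 0.50,
    "cart": 0.40,
    "account_creation": 0.60,
    "payment": 0.80,
}

baseline = orders_per_1000(funnel)
# Cosmetic tweak: a 3% relative improvement on the cart step.
tweak = orders_per_1000({**funnel, "cart": 0.40 * 1.03})
# Constraint fix: account creation now passes 80% instead of 60%.
fix = orders_per_1000({**funnel, "account_creation": 0.80})

print(f"baseline: {baseline:.1f} orders per 1,000 visitors")
print(f"cart tweak: {tweak:.1f} ({tweak / baseline - 1:+.1%})")
print(f"account-creation fix: {fix:.1f} ({fix / baseline - 1:+.1%})")
```

Under these assumptions the tweak adds about 3 orders per 1,000 visitors while the constraint fix adds 32. A lift of the tweak's size is also small enough to be hard to distinguish from ordinary week-to-week variation.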

Look for learning value in losing tests, not just wins

A failed experiment isn’t a sunk cost – it’s a compass.

The best-performing digital growth teams don’t just count lifts; they count lessons.

It’s a myth that only “winning” tests pay off.

In reality, a well-run losing test tells you what your users won’t do, under what conditions, and why.

That learning paves the way for strategic bets instead of incremental pokes in the dark.

We’ve seen clients frustrated by a string of negatives, overlooking behavioral patterns hiding in the data – like repeated friction on mobile or persistent abandonment at a single step.

Every null result sharpens the map, revealing where attention, resources, and strategic change could generate real impact.

What’s the repeatable insight?

Treat each test not as an attempt to collect trophies, but as a flashlight – it either finds a lever to pull or exposes what effort to skip.

In system-level CRO, surface improvements and test-for-the-sake-of-testing thinking hit a wall quickly.

Business momentum comes from diagnosing and dislodging constraints, and from using losing tests as high-beam headlights, not stop signs.

Scientific context and sources

The sources below provide foundational context for how decision-making, attention, and performance dynamics evolve under scaling and constraint conditions.

- Effect of Novelty on User Engagement

“States of curiosity modulate hippocampus-dependent learning via the dopaminergic circuit” – Gruber, M. J., Gelman, B. D., & Ranganath, C. – Neuron, A Cell Press journal

This study provides evidence on the role of novelty in capturing attention and temporarily boosting engagement, supporting the article’s discussion of short-term novelty effects in CRO tests.

https://pubmed.ncbi.nlm.nih.gov/25284006/

- Repeatability and Scalability in Experiments

“A Comparison of Approaches to Advertising Measurement: Evidence from Big Field Experiments at Facebook” – Gordon, B. R., Zettelmeyer, F., Bhargava, N., & Chapsky, D. – Marketing Science

This study compares measurement approaches against large field experiments, linking to the article’s focus on “winning” CRO results that don’t repeat at scale.

https://pubsonline.informs.org/doi/10.1287/mksc.2018.1135

- Segmentation and Cannibalization Risk

“Marketing Segmentation, Target Market Selection, and Positioning” – Kotler, P., & Armstrong, G. – Principles of Marketing, Pearson

The text explores how segment-targeted strategies may underperform or produce losses outside the focal segment, aligning with risks of patchwork CRO wins not adding up.

https://www.pearson.com/en-us/subject-catalog/p/principles-of-marketing/P200000013361/9780135413524

- Context Effects in Performance Measurement

“Multiple uses of performance appraisal: Prevalence and correlates.” – Murphy, K. R., & Cleveland, J. N. – Journal of Applied Psychology

Discusses how shifts in context (traffic, device, channel) affect repeatability and relevance of performance tests, reinforcing the article’s claims.

https://psycnet.apa.org/doiLanding?doi=10.1037%2F0021-9010.74.1.130

- Statistical Significance and False Positives in A/B Testing

“Always Valid Inference: Bringing Sequential Analysis to A/B Testing” – Johari, R., Pekelis, L., & Walsh, D. J. – arXiv: Statistics Theory

Analyzes how premature test decisions and sampling fluctuations produce unreliable results, matching the article’s warnings about erroneous inferences from ephemeral significance.

https://arxiv.org/abs/1512.04922

Questions You Might Ponder

Why do most conversion tests fail to improve profit?

Most conversion tests fail because they target surface-level elements rather than addressing misalignments between user intent and the product offer. Without correcting these upstream constraints, any observed uplift is temporary or illusory and rarely results in real revenue growth.

How does intent-offer misalignment affect conversion rates?

Intent-offer misalignment means visitors’ expectations do not match what the page offers. This disconnect caps conversion rates from the start, making even well-designed pages ineffective if the core promise and target audience needs aren’t properly matched.

What are false positives in A/B testing, and why are they risky?

False positives occur when a test result shows improvement due to statistical noise, sample size errors, or volatility rather than real change. Relying on these misleading signals can lead teams to repeat the same issues, wasting resources and masking the real constraints.

How can trust and expectation gaps undermine conversion optimization?

Gaps in trust or unclear expectations introduce noise into test data, making it difficult to discern what truly works. This can result in inconsistent user responses and unreliable data trends, often leading teams to chase irrelevant tweaks rather than fix the trust deficit.

When should businesses stop page-level iterating and refocus strategy?

If test improvements plateau, new tweaks produce diminishing returns, or ‘winning’ variations don’t move business KPIs, it’s time to pause. Businesses should then assess offer, targeting, and value proposition – areas where real constraints and growth opportunities likely lie.