What You’ll Learn

CRO diminishing returns

Key Takeaways

- CRO improvements become harder to achieve as friction points are removed, leading to optimization decay and diminishing returns.

- Context shifts – like changes in audience or traffic sources – can quickly erode previous conversion gains, requiring constant adaptation.

- Declining conversion rates despite on-site stability often signal upstream problems, such as intention dilution or trust gaps.

- The most effective response to stalled growth often involves refining audience intent or trust signals, not just site-level tweaks.

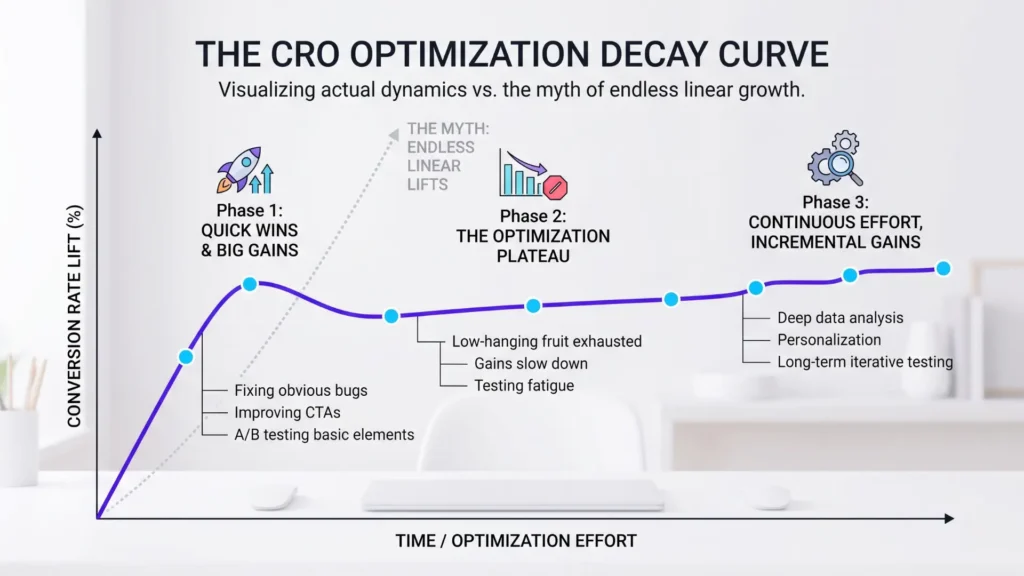

Most teams expect the next test to deliver another jump in conversion – until the graph flattens and every win shrinks.

The myth is that optimization is a smooth, endless climb.

In reality, “CRO diminishing returns” sets in the moment you clear the last obvious friction point, and what happens next catches most execs off guard.

When CRO improvements taper off, what’s really happening

The first rounds of conversion work often feel like magic.

Strip out a checkout form field, simplify an offer, or fix a broken CTA, and you can watch lifts of 20%, 30%, sometimes even more.

But after these hurdles are gone, many assume more tweaks will drive more revenue.

That’s the trap.

Removing friction is like clearing rocks from a stream – once the big ones are gone, the water can only move so much faster, no matter how many pebbles you pull.

When friction removal yields its last big bump

Here’s what we see repeatedly: early-stage CRO projects produce headline stats, then subsequent tests barely nudge the baseline.

This isn’t a failure of creativity; it’s the expected shape of an optimization curve.

One global ecommerce client saw their initial edits double conversion in six weeks, yet every fix after that delivered a lift of less than 5%.

Why?

Easy problems get solved first.

Once you’ve squashed the bugs, you’re left seeking advantage at the margin, not the core.

Most teams keep tuning, believing another big breakthrough is just one split-test away.

It rarely is.

The repeatable insight: each friction fix delivers less ROI than the last, and eventually, the curve plateaus no matter how many ideas you throw at it.
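To make the shape of that curve concrete, here is a minimal Python sketch. The 2% baseline and the sequence of lifts are hypothetical numbers chosen to echo the pattern above (one doubling, then lifts under 5%), and `cumulative_rates` is an illustrative helper, not a standard function.

```python
def cumulative_rates(baseline, lifts):
    """Apply relative lifts in sequence and return the rate after each one."""
    rates = []
    rate = baseline
    for lift in lifts:
        rate *= 1 + lift
        rates.append(rate)
    return rates


# Hypothetical inputs: 2% baseline, one doubling, then four lifts under 5%.
baseline = 0.02
lifts = [1.00, 0.04, 0.03, 0.02, 0.01]

rates = cumulative_rates(baseline, lifts)
total_gain = rates[-1] - baseline

# Share of the total gain contributed by each successive fix.
shares = []
for before, after in zip([baseline] + rates, rates):
    shares.append((after - before) / total_gain)

for lift, rate, share in zip(lifts, rates, shares):
    print(f"lift {lift:>5.0%} -> rate {rate:.4f} ({share:.0%} of total gain)")
```

Under these assumed numbers, the first fix accounts for the large majority of the total gain, and each later fix contributes a shrinking slice of it.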

How context shifts rewrite improvement curves

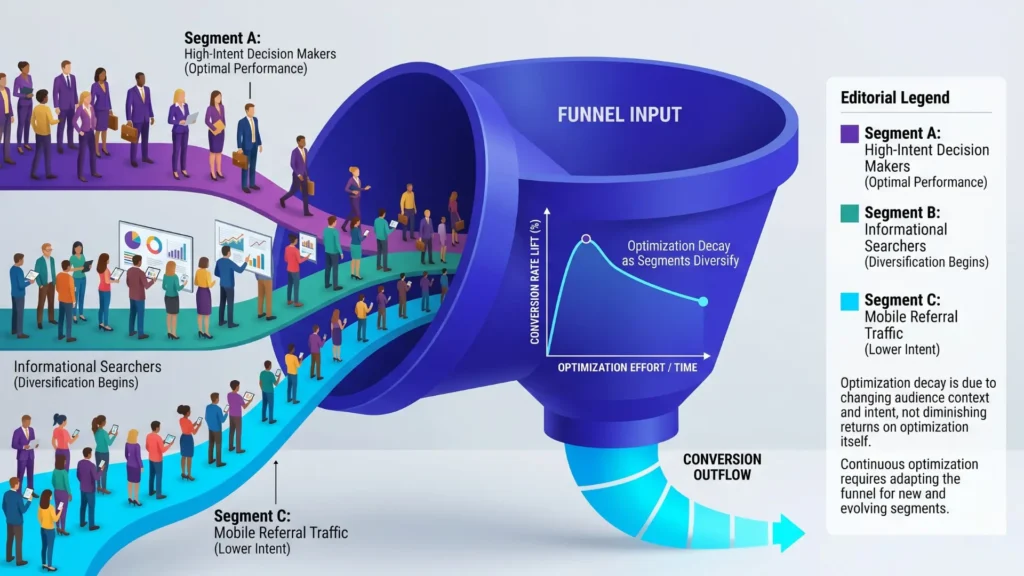

But here’s the twist: even plateaus don’t stay put.

The reason?

Context is always moving.

Change your traffic sources – say, by starting paid social after thriving on intent-driven organic search – and your shiny “optimized” page can suddenly underperform.

The uplift you squeezed from one audience can vanish with the next wave of visitors who care about different details, have less urgency, or never saw your brand before.

We once sent a newsletter blast to a landing page that had been beating industry averages for months.

Conversion dropped by a third – not because the page design weakened, but because the new audience didn’t match the assumptions baked into the copy and flow.

Intent maturity changes the curve: newcomers or low-trust visitors have different friction points than repeat buyers or warm leads.

Like tuning a car for street driving, then entering a rally – without adjusting, performance dips, sometimes sharply.

What’s rarely discussed is how quickly context drift, traffic mix, and intent shift can erode gains that seemed rock-solid.

Are you optimizing for today’s users or yesterday’s?

Have you checked if new campaigns are bringing in the same caliber of traffic as before?

These shifts don’t announce themselves; instead, they slowly flatten improvement curves and make old wins obsolete.

After the honeymoon phase of friction removal, progress stalls for a reason: both your page and your audience are moving targets.

Recognizing which lever – the site, the offer, or the context – needs attention is the difference between ongoing growth and a slowly fading edge.

Why your conversion peak won’t stay – decay drivers you can’t ignore

Key Drivers of Conversion Rate Decay

| Symptom | Common Misinterpretation | True Meaning | Recommended Action |
|---|---|---|---|
| Shrinking Test Wins (e.g. 0.3% uplift) | Every statistically significant test still improves results | Marginal lifts are often economically irrelevant | Shift focus from micro-optimizations to structural levers |
| Conversion Drop Without Page Changes | Faulty page or design issues | Traffic quality or upstream audience changes causing decay | Diagnose traffic mix and upstream signals immediately |
| Small, Noisy Gains After Initial Wins | Keep doubling down on more tests and volume | Optimization plateau and diminishing returns set in | Evaluate traffic intent quality and upstream trust gaps |

Most teams hit a record conversion rate and act as if the job is done – but what actually happens is more like a market’s tide shifting beneath their feet.

The page that once printed wins now shows smaller, noisier gains – or small, unexplained drops.

What drives this slow leak of progress?

It’s often less about the testing process and more about what’s changing in the audience and the psychology behind each click.

Audience mix and intention dilution as conversion killers

Imagine you’ve just opened the doors on a newly optimized funnel: the eager early users arrive, hungry to convert, and the metrics climb.

Yet, once you push harder – launching new campaigns, expanding to colder lookalikes, or diversifying channels – the numbers look less predictable.

The reason?

The equation quietly changes: traffic volume increases, but intent quality and segment purity degrade.

Instead of a steady flow of high-fit prospects, broader audiences dilute the pool, stirring in more window-shoppers than buyers.

One client briefly doubled top-line traffic, only to watch conversion rates slide solely because the new visitors arrived with weaker motivation and lower trust.

Most think more visitors mean more sales; in reality, growth often brings noisy variance and declining conversion stability.

The broader your audience, the more diluted intent quality becomes – reducing your ability to maintain high conversion gains.

What percentage of your recent tests reflect true improvement versus the swirling mix of changing intent, geographies, and psychological profiles?

CRO diminishing returns aren’t just about optimization ceiling effects – they’re just as much about traffic mix impact and the silent spread of intention dilution.
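The dilution effect is plain arithmetic: a blended conversion rate is a traffic-weighted average of segment rates. The sketch below assumes two hypothetical segments (warm traffic converting at 4%, cold lookalikes at 1%); the visitor counts, rates, and the `blended_rate` helper are illustrative assumptions, not figures from any client.

```python
def blended_rate(segments):
    """Weighted-average conversion rate for a list of (visitors, rate) pairs."""
    visitors = sum(v for v, _ in segments)
    conversions = sum(v * r for v, r in segments)
    return conversions / visitors


# Hypothetical traffic: warm audience only, then the same audience plus cold lookalikes.
before = [(10_000, 0.04)]
after = [(10_000, 0.04), (10_000, 0.01)]

rate_before = blended_rate(before)
rate_after = blended_rate(after)
conversions_before = sum(v * r for v, r in before)
conversions_after = sum(v * r for v, r in after)

print(f"rate: {rate_before:.2%} -> {rate_after:.2%}")
print(f"conversions: {conversions_before:.0f} -> {conversions_after:.0f}")
```

In this assumed scenario traffic doubles and total conversions rise, yet the blended rate falls, even though the page and the warm segment's own rate never changed.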

Emotional risk and uncertainty that sneak back in

Winning A/B tests can mask a subtle decay underneath: as new audiences arrive, unresolved doubts and friction points re-emerge.

Old anxieties – “Is this legit? What’s the real price? Will this work for me?” – creep back into the buying journey, especially when campaigns reach colder or less-primed users.

The reasons conversion gains decay don’t vanish with a slicker page design; fears and hesitations mutate, adapting to the fresh context.

We’ve watched promising variants slowly lose ground, not because the experience got worse, but because the visitor’s uncertainty outpaced the clarity gains.

Every new visitor segment brings shadow worries you didn’t have to address before – changing the conversion equation overnight.

Emotional risk is never fully solved; it only retreats until context drift revives it.

The strongest CRO work anticipates these cycles, not just technical obstacles.

Conversion stability deteriorates quietly, then all at once.

The warning sign?

When confidence sags and underlying doubt grows, even the sharpest optimizations won’t protect your peak for long.

How to spot diminishing returns before they cost momentum

Diagnostic Signs of CRO Diminishing Returns

| Driver | Description | Effect on Conversion | Example Scenario |
|---|---|---|---|
| Audience Mix and Intention Dilution | Broader, less-targeted audiences dilute intent and reduce conversion quality. | Noisier metrics and lower conversion rates | Doubling traffic with colder lookalikes caused conversion drop |
| Emotional Risk and Uncertainty | Old anxieties and doubts re-emerge with new or colder visitors. | Subtle decay in conversion despite design improvements | New visitor segments bring fresh hesitations that slow conversions |
| Context Drift and Traffic Mix | Changes in traffic sources and visitor intent alter previously optimized contexts. | Sudden underperformance despite no page changes | Introducing newsletter traffic caused a 33% conversion drop |

The warning signs aren’t hidden – they’re just easy to rationalize away.

When conversion lifts get smaller no matter how bold the test, it’s not bad luck.

It’s economics: once the heavy friction is gone, what remains is increasingly resistant to change.

Yet most teams misinterpret these patterns as a cue to double down on volume or micro-tweaks, chasing the ghosts of past success.

The real danger?

By the time you notice, momentum is already leaking away.

When test wins shrink and statistical significance loses meaning

Here’s where it gets deceptive: your next A/B test flickers to life, hits statistical significance, but delivers a 0.3% uplift.

Sounds scientific.

But is your bottom line actually moving?

The myth is that as long as a test “wins”, it’s worth shipping.

In reality, marginal lifts – especially when traffic or sample size is large – can shine on a dashboard but be economically irrelevant, even negative after costs and regression to the mean.

We once saw a SaaS firm invest months into micro-optimizations: button shade, headline variants, form labels.

Wins kept coming, but revenue stagnated.

Why?

Statistical significance became a vanity metric.

It told them change happened, not that any change mattered.

This is the conversion equivalent of wringing water from a rock: the effort far outweighs the return.

If your best win lately is barely funding coffee for the team, you’ve hit the local maximum.

This is when executive attention should shift from micro-lifts to larger, structural levers.
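A rough way to see the gap between "significant" and "worth it" is to run the numbers side by side. The sketch below uses a standard pooled two-proportion z-test; every input (20 million visitors per arm, a 5% baseline, a 0.3% relative uplift, 1 million monthly visitors, $40 per conversion) is a hypothetical assumption for illustration.

```python
import math


def two_proportion_z(conv_a, n_a, conv_b, n_b):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: very large sample, 0.3% relative uplift (5.000% -> 5.015%).
n = 20_000_000
conv_a = 1_000_000
conv_b = 1_003_000
z, p = two_proportion_z(conv_a, n, conv_b, n)

# Hypothetical economics: what the uplift is worth per month.
monthly_visitors = 1_000_000
value_per_conversion = 40.0
extra_revenue = monthly_visitors * (conv_b / n - conv_a / n) * value_per_conversion

print(f"z = {z:.2f}, p = {p:.3f}")
print(f"extra monthly revenue: ${extra_revenue:,.0f}")
```

With these assumed inputs the test clears the conventional 0.05 threshold, while the monthly value of the win is modest. Whether that covers design, engineering, and analysis time depends on your own cost figures, which is the comparison the dashboard never shows.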

Shifting signals: conversion drops without page changes

What if metrics slide but nothing has changed on-site?

That’s your diagnostic goldmine.

Deterioration here rarely means a technical or design flaw.

Instead, it’s a symptom of upstream issues – changes in traffic quality, audience mix, or market context that now shape your conversion ceiling behind the scenes.

Think of it as driving with clean glass but increasingly foggy headlights: the road, your page, remains steady, but your ability to see results degrades due to outside factors.

We saw this with a DTC client: their conversion rate dropped 18% quarter over quarter, but user sessions, design, and flow were unchanged.

The culprit?

A surge in paid traffic with lower purchase intent diluted the pool.

No amount of on-page tests could compensate for that blend.

Small, repeated test wins may indicate you’ve hit a growth ceiling; if unexplained drops appear, immediate upstream diagnostics are critical.

Catching these cues early lets you pivot before you lose momentum.
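One way to run that upstream diagnostic is to split the change in blended conversion rate into a mix effect (traffic shares shifted) and a rate effect (segments themselves converted differently). The sketch below is a simple decomposition under assumed numbers; the segment names, shares, rates, and the `decompose_change` helper are hypothetical.

```python
def blended(segments):
    """Blended rate from a dict of segment -> (traffic_share, conversion_rate)."""
    return sum(share * rate for share, rate in segments.values())


def decompose_change(before, after):
    """Split the change in blended rate into (mix_effect, rate_effect).

    Assumes both dicts contain the same segment names.
    mix_effect: change caused by shifting traffic shares at the old rates.
    rate_effect: change caused by segments converting differently at the new shares.
    """
    mix_effect = sum((after[s][0] - before[s][0]) * before[s][1] for s in before)
    rate_effect = sum(after[s][0] * (after[s][1] - before[s][1]) for s in before)
    return mix_effect, rate_effect


# Hypothetical quarters: segment rates are unchanged, but paid traffic surges.
last_quarter = {"organic": (0.70, 0.04), "paid": (0.30, 0.02)}
this_quarter = {"organic": (0.40, 0.04), "paid": (0.60, 0.02)}

mix_effect, rate_effect = decompose_change(last_quarter, this_quarter)
total_change = blended(this_quarter) - blended(last_quarter)

print(f"blended: {blended(last_quarter):.2%} -> {blended(this_quarter):.2%}")
print(f"mix effect: {mix_effect:+.4f}, rate effect: {rate_effect:+.4f}")
```

In this assumed case the blended rate falls from 3.4% to 2.8%, a drop of about 17.6%, and the entire change comes from the mix effect. A rate effect near zero is the signal that on-page tests are aimed at the wrong lever.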

If CRO growth stalls, what should you evaluate next

Most teams assume another round of headline tests or button tweaks will reignite growth.

The reality: once you hit the CRO ceiling, every incremental change gets less cost-effective – and at some point, you’re optimizing fragments while missing the real leverage.

What if the true constraint is no longer on your page at all?

Comparing incremental CRO against upgrading intent quality or clarity

Chasing single-digit conversion bumps can feel productive.

But after dozens of tests, there’s a hard truth: the biggest wins often happen by leveling up your audience or sharpening your value signal – not by shaving milliseconds or rewriting microcopy again.

We’ve seen clients pour months into granular tweaks, only to realize their top-of-funnel messaging confused more than it clarified.

No pixel-perfect page can compensate for misaligned expectations or muddy positioning.

Sometimes the biggest shift comes not from further page improvements, but from upgrading the intent quality or clarity at the top of the funnel.

If your last five CRO projects netted smaller and smaller lifts, the question isn’t “What haven’t we tried yet?” but “Are we solving the right problem now?”

Is it time to address upstream signals or trust gaps instead?

There’s a myth: only page-level friction slows growth.

But after the easy gains, real bottlenecks hide upstream – subtle trust gaps, mismatched pre-click promises, or silent concerns nobody voices in exit polls.

We’ve watched stubbornly flat conversion rates unfreeze only when brands addressed a lingering doubt or clarified an offer that sounded too good to be true.

Ask: do visitors truly believe your claims, or are they hedging with questions you never answer?

Sometimes, conversion decay isn’t about message clarity but emotional risk.

When intent signals dim or brand relevance slips, no A/B test can patch the leak.

The best time to ask “Where are we losing confidence?” is before the plateau hardens into decline.

Here’s the move: when every page tweak feels incremental, evaluate the entire chain – ad promise, onboarding flow, and underlying trust.

CRO isn’t a slot machine.

Sometimes, stepping back from the screen reveals the growth window you couldn’t see before.

When growth stalls, the next big lift rarely looks like the last one.

Sharper context and restored trust set a new, higher ceiling.

Don’t wait for decay to force your hand – decide where better leverage lives, now.

Scientific context and sources

The sources below provide foundational context for how decision-making, attention, and performance dynamics evolve under scaling and constraint conditions.

- Cognitive limits in performance optimization

“Attention and Cognitive Control” – Michael I. Posner, C.R.R. Snyder – Information Processing and Cognition (Academic volume)

Establishes that cognitive control operates under strict capacity limits and is only engaged in complex or novel situations, meaning that as “easy” friction is removed, further improvements require overcoming deeper cognitive constraints that are less responsive to optimization.

https://philpapers.org/rec/POSAAC

- Diminishing returns in optimization under constraint

“Cognitive Control as a Multivariate Optimization Problem” – Harrison Ritz et al. – arXiv / Cognitive Science

Frames performance improvement as an optimization problem under constraints, showing that additional effort yields smaller gains as systems approach optimal configurations, directly mirroring diminishing returns in CRO after initial wins.

https://arxiv.org/pdf/2110.00668

- Audience segmentation, intention dilution, and conversion

“Market Segmentation: Conceptual and Methodological Foundations” – Wedel, Kamakura – Springer / Marketing Science

Demonstrates that broader segmentation reduces predictive precision and increases heterogeneity in user intent, leading to weaker behavioral signals and lower conversion efficiency as targeting expands.

https://link.springer.com/book/10.1007/978-1-4615-4651-1

- Context effects and dynamic market adaptation

“Choice in Context: Tradeoff Contrast and Extremeness Aversion” – Tversky, Simonson – Journal of Marketing Research

Shows that consumer decisions are highly sensitive to surrounding context and available alternatives, meaning that conversion gains from optimization are unstable as competitive or environmental conditions shift.

https://journals.sagepub.com/doi/abs/10.1177/002224379202900301

- Economics of marginal utility in business processes

“Intermediate Microeconomics” – Hal R. Varian, Marc Melitz – W.W. Norton

Explains that each additional unit of effort produces smaller incremental benefit, providing a formal economic foundation for why optimization gains decrease over time in conversion systems.

https://wwnorton.com/books/9781324034292

Questions You Might Ponder

What causes optimization decay in CRO, and how can it be identified?

Optimization decay in CRO occurs when initial improvements deliver strong results, but subsequent changes lead to smaller gains. It’s identified by a flattened improvement curve and minimal test impact, signaling the exhaustion of easy solutions and the onset of diminishing returns.

How does audience mix impact optimization decay during CRO efforts?

A shift or broadening in audience mix often leads to lower-intent traffic and diluted conversion potential. When new segments with different motivations and trust levels enter the funnel, prior optimizations for warmer audiences may become ineffective, accelerating optimization decay.

Why do early CRO changes show big conversion lifts, but later tests show less impact?

Early CRO changes typically remove major friction points, revealing significant performance gains. Once these are addressed, further improvements only address marginal barriers, resulting in minimal lifts as the system reaches its local maximum optimization level.

What warning signs indicate your CRO gains are plateauing?

Key warning signs include shrinking A/B test uplifts, statistical significance without real business impact, unexplained conversion drops despite no on-site changes, and increasing difficulty finding meaningful optimization levers – each hinting at approaching or reaching optimization decay.

What should teams do when further CRO tests fail to deliver strong results?

When CRO results stall, teams should shift focus upstream – evaluating traffic quality, pre-click messaging, and trust elements. Improvements in audience targeting or clarifying product value often provide greater returns than continued site micro-optimizations in a mature testing environment.