Key Takeaways

- Redesign shock disrupts user habits and trust, often causing conversion drops despite visual or UX improvements.

- Trust signals are subtle and easily broken after redesign, impacting the subconscious comfort of returning users.

- Optimization success is fragile and highly context-dependent; changes in traffic or audience profile can negate previous gains.

- Before reverting a redesign, analyze metrics deeply to distinguish between behavioral lag, technical errors, and true rejection.

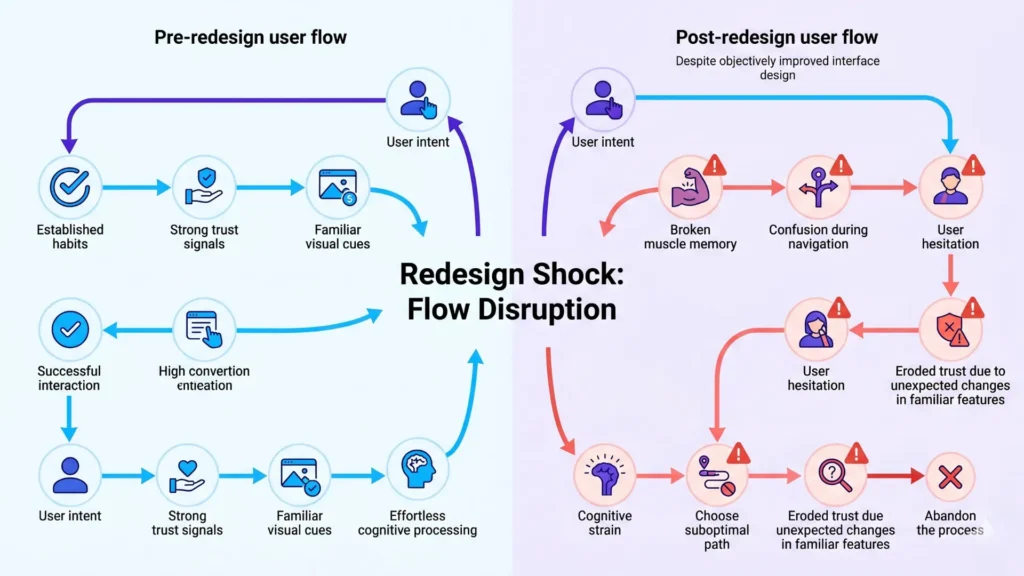

Most teams expect an improved design to lift results – yet the surprise is how often a “better” redesign triggers a conversion drop.

The cause isn’t the visual upgrade itself, but a hidden reset: redesigns quietly erase the trust, habits, and mental shortcuts your best users rely on.

If you’ve ever watched returning customers freeze on a familiar page that now looks “better”, you’ve seen redesign shock in action.

That friction is measurable – and it often explains why conversion drops after an improvement.

Why a ‘Better’ Redesign Can Suddenly Undermine Performance

Our oldest client, an e-commerce brand, learned the cost of breaking muscle memory the hard way: users who once breezed through checkout suddenly paused, searched, and abandoned.

The culprit?

Subtle, well-intentioned shifts in navigation and button placement.

People underestimate just how deeply returning users operate on autopilot.

When their habitual paths disappear, momentum evaporates and cognitive load rises sharply.

The experience is like rearranging furniture in a dark room – users stumble where they once walked with confidence.

How recognition and habit disruption slow decision momentum

Executives often expect users to “figure it out” fast.

But every deviation from memory forces micro-decisions (“Where’s that add-to-cart?”) that add cognitive load and slow the very flows that used to convert effortlessly.

A practitioner tip: we’ve seen even minor label changes, for example “My Account” to “Profile”, dent metrics for weeks.

The myth is that more intuitive always means quicker to adopt; in reality, intuition depends on recognition, not just logical clarity.

This is why conversion decay after a redesign is so common: returning users analyze instead of acting.

What are they really hesitating over?

Familiarity, more than function.

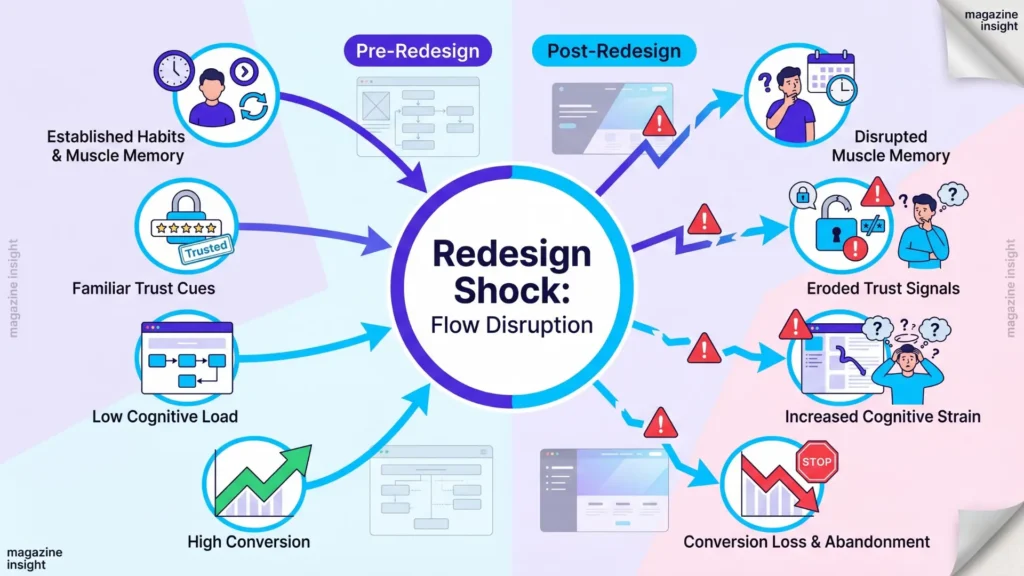

Why trust elements and cues are fragile assets

Trust resets aren’t always loud – they’re often invisible until numbers fall.

In one diagnostic for a SaaS platform, we found that moving a trust badge below the fold cut signups by 18%.

The loss wasn’t the badge itself, but the subconscious cue it provided to returning visitors.

Disrupted recognition is especially hazardous to trust: users scan for signals (“Is this the same company? Is it safe?”) and react to even small discrepancies.

Most decision-makers assume trust comes from big declarations – testimonials, badges, guarantees.

Yet in practice, trust cues are surprisingly brittle.

Change the tone of a headline or the wording of a button (“Start Free” vs. “Try Now”) and users hesitate, often without noticing why.

It’s like changing the keys on a piano – muscle memory fails, and the tune no longer flows.

If your redesign hides or changes trust signals, expect a behavioral lag – or outright resistance.

Here’s the enduring insight: what feels like an aesthetic upgrade to your team may signal risk or “unknown territory” to repeat users.

Invisible trust is an asset that’s slow to build, quick to disturb, and essential to conversion stability.

Teams that anticipate this shock avoid the trap of blaming design quality for lost performance.

Instead, they recognize that habit and trust disruptions – not design flaws – often trigger the real conversion drop after a redesign.

This is the essence of “trust reset after redesign” – even well-intentioned changes can dismantle the subconscious trust your repeat users rely on.

When Context Shifts Reveal Optimization Fragility

Picture this: your design relaunch goes live and – like clockwork – conversion rates start slipping, but it isn’t the interface that’s changed most.

The real disruptor?

Your traffic mix just shifted under your feet.

Most teams don’t realize that optimization is fragile because it’s tuned to a specific context, not a universal ideal.

Every change to who’s visiting, or why they’re showing up, can quietly unwind hard-won gains.

How new traffic sources dilute conversion consistency

Impact of Traffic Sources on Conversion Consistency

| Traffic Source | Typical Intent Level | Conversion Impact | Common Risk |
|---|---|---|---|
| Paid Social | Often low to medium | Lower average conversion | High bounce, weak intent |
| Partner Campaigns | Variable, often lower | Diluted conversion consistency | Misaligned messaging |
| Organic Search | High, intent-driven | Stable conversion | SEO changes can affect volume |
| Direct/Brand | Highest, loyal users | Highest conversion | Loss signals bigger issues |
| Referral | Medium intent | Moderate conversion | Dependent on partner quality |

Adding a new channel can feel like growth, but brings a hidden risk: weaker intent.

We’ve seen teams scale spend on paid social or partner campaigns, only to discover those visitors bounce at twice the usual rate.

What feels like more volume is often just more noise.

Our clients are often shocked when a campaign that delivers impressive traffic fails to generate quality leads – what’s missing is intent alignment with your optimized flow.

Scaling rarely preserves the “perfect” buyer profile that early successes rely on.

Instead, it pulls in new segments whose goals and urgency aren’t a fit for finely tuned messaging or flows.

It’s like racing a car tuned for dry pavement onto a muddy track; the same machine, totally different grip.

Are you measuring conversion by total visitors, or by the right visitors?

That single distinction explains why growth surges often conceal fragility, not strength.
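To make the distinction concrete, here is a minimal Python sketch of how a blended conversion rate can fall purely because the traffic mix changed. The segment names and all visitor and conversion counts are hypothetical illustration values, not figures from any client account.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One traffic source with its visitor and conversion counts."""
    name: str
    visitors: int
    conversions: int

    @property
    def rate(self) -> float:
        # Conversion rate for this segment alone.
        return self.conversions / self.visitors if self.visitors else 0.0


def blended_rate(segments: list[Segment]) -> float:
    """Conversion rate across all segments combined."""
    visitors = sum(s.visitors for s in segments)
    conversions = sum(s.conversions for s in segments)
    return conversions / visitors if visitors else 0.0


# Hypothetical data: per-segment rates are identical before and after,
# but paid social volume grows sixfold.
before = [Segment("direct", 8000, 400), Segment("paid_social", 2000, 20)]
after = [Segment("direct", 8000, 400), Segment("paid_social", 12000, 120)]

for label, segments in (("before", before), ("after", after)):
    per_segment = ", ".join(f"{s.name}={s.rate:.1%}" for s in segments)
    print(f"{label}: blended={blended_rate(segments):.1%} ({per_segment})")
```

In this made-up example every segment converts exactly as it did before (5% for direct, 1% for paid social), yet the blended rate falls from 4.2% to 2.6% because the lower-intent segment now dominates the denominator.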

Why what once worked stops working over time

It’s tempting to blame the site when performance decays, but optimization’s real enemy is diminishing returns.

As new audiences cycle in, even the best-performing offers or layouts begin to decay – not because they’re worse, but because the environment shifted.

We’ve seen optimized forms that worked wonders with long-term subscribers start failing with fresh, colder traffic.

CRO is like a medicine with an expiration date: its effects wane as context changes.

The biggest myth?

Past wins are “locked in” after deployment.

In reality, every context shift – new ad, channel, or partnership – forces old optimizations to compete in a new game.

The more you chase scale, the more susceptible you are to conversion shock and decay.

Most conversion drops after redesign are not design failures – they’re context shifts exposing just how brittle even well-optimized systems actually are.

Success depends not just on the quality of your changes, but on the stability of what feeds your funnel.

The next section addresses which signals to trust, and which to doubt, when the data clouds the cause.

What Changes Visibility, and What Should Trigger Reversal

Most conversion drops after a redesign aren’t caused by the interface – but blaming the UI is often the knee-jerk reflex.

The real trap?

Executives lock onto the most visible change, while invisible culprits go unnoticed.

If you think every post-launch dip means the new design failed, that belief will cost you – sometimes twice: first in wasted rework, next in lost signal about what’s truly broken.

Which signals point to tracking, SEO or behavioral misattributions

Analytics errors and attribution drift lurk in the background of every post-redesign conversion drop.

We’ve seen campaigns where conversions vanished overnight, only to discover that a misplaced tag or a conflicting tracking script, not the design, was quietly erasing “wins”.

Ignore these signals, and you risk chasing ghosts in your UI while hidden technical faults quietly compound.

Here’s the myth: a conversion graph’s downturn always means your users didn’t like what you shipped.

But sometimes, it’s the measurement that’s failed – not the experience.

Look for sharp drops tightly aligned to a deployment window, then cross-check for changes in tag infrastructure or SEO logic.

For example: organic visibility might have cratered due to a missed redirect, or tracking parameters might be stripped from new templates.

If your session counts, device distributions, or major sources fluctuate sharply, the root problem probably isn’t your redesign itself.

It’s a background glitch – one that can destroy trust in your data before you ever reach a real CRO hypothesis.
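One way to run that cross-check is to compare each source's share of sessions before and after the deployment. The sketch below is an illustration only: the function name `flag_share_shifts`, the 10-percentage-point threshold, and the session counts are all assumptions, and a real check would use whatever export your analytics tool provides.

```python
def session_shares(counts: dict[str, int]) -> dict[str, float]:
    """Convert raw session counts per source into shares of total sessions."""
    total = sum(counts.values())
    return {source: n / total for source, n in counts.items()} if total else {}


def flag_share_shifts(
    before: dict[str, int],
    after: dict[str, int],
    threshold: float = 0.10,  # assumed cutoff: 10 percentage points
) -> dict[str, float]:
    """Return sources whose share of sessions moved by at least `threshold`."""
    b, a = session_shares(before), session_shares(after)
    flagged = {}
    for source in sorted(set(b) | set(a)):
        delta = a.get(source, 0.0) - b.get(source, 0.0)
        if abs(delta) >= threshold:
            flagged[source] = round(delta, 3)
    return flagged


# Hypothetical weekly sessions around a launch: organic volume collapses,
# which would be consistent with a missed redirect rather than a UI problem.
pre_launch = {"organic": 5000, "direct": 3000, "paid_social": 2000}
post_launch = {"organic": 2000, "direct": 3000, "paid_social": 2000}

print(flag_share_shifts(pre_launch, post_launch))
```

A flagged source is a prompt to inspect redirects, tags, and campaign parameters before judging the design. It does not prove a tracking fault on its own.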

Think of it like looking through a cracked lens: if you misread the distortion as real-world change, your interventions all target the wrong risk.

Executive teams who recognize these tells separate the noise from the pattern.

When to reverse changes versus reinforce stabilization

Conditions Checklist for Reversing vs. Stabilizing Post-Redesign Conversion Drops

| Condition | Description | Action If True | Action If False |
|---|---|---|---|
| Catastrophic and sustained drop | Is the conversion drop severe and lasting? | Consider reversal | Monitor and investigate |
| External factors ruled out | Have other causes like traffic or tracking issues been excluded? | Consider reversal | Investigate external causes further |
| User feedback shows active rejection | Are users explicitly indicating issues with the redesign? | Consider reversal | Collect more user insights |
| Direct link between friction and design choices | Can friction be traced directly to redesign elements? | Consider reversal | Explore non-design causes |
| None or partial conditions met | Less definitive signs of failure | Reinforce stabilization and test | Continue to monitor |

Deciding to hit “undo” on a redesign isn’t about blame – it’s about pattern recognition under uncertainty.

The real question: is this drop part of the normal convulsion after any major release, or is something systemically broken?

We advise clients to resist the reflex to roll back unless four conditions hold:

- the drop is catastrophic and sustained,

- external factors have been ruled out,

- user feedback signals active rejection, and

- you can directly tie friction to design choices – not just correlation.
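The four conditions above work as an all-or-nothing gate, which can be sketched in a few lines of Python. The class and field names here are hypothetical. Judging whether each condition is actually true still requires the analysis described in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RollbackEvidence:
    """The four rollback conditions, each answered yes or no."""
    drop_is_catastrophic_and_sustained: bool
    external_factors_ruled_out: bool
    feedback_shows_active_rejection: bool
    friction_traced_to_design: bool


def recommend(evidence: RollbackEvidence) -> str:
    """Suggest reversal only when every condition holds."""
    checks = (
        evidence.drop_is_catastrophic_and_sustained,
        evidence.external_factors_ruled_out,
        evidence.feedback_shows_active_rejection,
        evidence.friction_traced_to_design,
    )
    return "consider reversal" if all(checks) else "stabilize and keep testing"


# Hypothetical case: a severe drop, but tracking has not been audited yet.
partial = RollbackEvidence(True, False, True, True)
print(recommend(partial))
```

Encoding the gate this way makes the default explicit: a single unmet condition keeps the team in stabilization mode rather than triggering a rollback.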

Sometimes, what looks like conversion decay after a redesign is just habit disruption, with conversion settling at a new baseline.

Stabilization means running additional split or holdout tests, investigating new vs. repeat visitor behavior, and dissecting how traffic mix affects conversion.
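For a holdout comparison, a two-proportion z-test is one common way to judge whether a gap between the old and new design is larger than chance would explain. The sketch below uses only the Python standard library. The counts are hypothetical, and the approach assumes visitors were randomly assigned to the holdout and the redesign.

```python
import math


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p_value


# Hypothetical returning-visitor counts: holdout (old design) vs. redesign.
z, p = two_proportion_z(conv_a=500, n_a=10_000, conv_b=400, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Running the same test separately for new and returning visitors helps show whether the drop is concentrated among repeat users, which is where habit disruption would be expected to appear.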

The best executive move?

Codify a wait-and-watch period, flag all tracking or SEO anomalies, and use instrumentation to pinpoint whether you’re facing a redesign shock or an external misfire.

If the signal points to invisible context shifts, don’t reverse – adapt, patch, and let the new patterns reveal themselves.

But if a trust reset or broken recognition disrupts high-value funnel steps, be ready to revert with precision, not ego.

Conversion volatility doesn’t always mean failure – sometimes, it just means your measurement is finally honest.

The discipline lies in knowing which signals deserve reaction, and which demand patience.

Scientific context and sources

The sources below provide foundational context for how decision-making, attention, and performance dynamics evolve under scaling and constraint conditions.

- Habit Disruption and Cognitive Load

“Human Navigation in Electronic Environments” – Luciano Gamberini, MS, and Stefano Bussolon, MS – Cyberpsychology, Behavior, and Social Networking

This paper analyzes the impact of interface redesigns on user behavior, focusing on muscle memory, cognitive load, and conversion disruptions.

https://journals.sagepub.com/doi/10.1089/10949310151088398?cf-mal-redirected=true&

- Trust and Risk Perception Online

“Trust between patients and health websites: a review of the literature and derived outcomes from empirical studies” – Laurian C Vega, Enid Montague, Tom DeHart – Health Technol (Berl)

Examines how subtle shifts in design elements affect users’ subconscious trust signals, paralleling findings in health websites.

https://pmc.ncbi.nlm.nih.gov/articles/PMC3266366/

- Context Shifts and Optimization Fragility

“Developing a Conversion Rate Optimization Framework for Digital Retailers” – R. Zimmermann, A. Auinger – Journal of Marketing Analytics

Shows that conversion performance depends on multiple interconnected touchpoints and traffic sources, where changes in input conditions (e.g., traffic mix, device, intent) alter outcomes even without UI changes—demonstrating that optimized systems are fragile to contextual variation.

https://pmc.ncbi.nlm.nih.gov/articles/PMC8864459/

- Attribution and Measurement Errors

“Conversion Rate Optimization through Evolutionary Computation” – R. Miikkulainen et al. – arXiv / computational marketing systems

Demonstrates that CRO operates in a high-dimensional design space with interacting variables, where limited testing and measurement constraints lead to incomplete evaluation and misattribution of performance changes, especially when systems evolve or inputs shift.

https://arxiv.org/abs/1703.00556

Questions You Might Ponder

Why does a redesign that looks better sometimes hurt conversion rates?

A visually improved redesign can disrupt user habits and break recognition, leading seasoned users to hesitate or drop off. This ‘redesign shock’ occurs when trust and muscle memory are reset, increasing the cognitive load and slowing decisions despite the appearance of improvement.

How do trust signals impact user behavior after a redesign?

Trust signals like familiar badges or language reassure users subconsciously. When a redesign hides, removes, or alters these cues, repeat visitors may feel uncertain, lowering their confidence in taking action. Recognizing and preserving trust signals during redesigns helps maintain conversion rates.

What is optimization fragility and how does it affect conversion?

Optimization fragility describes how conversion-focused improvements are vulnerable to context changes such as new traffic sources or user intent shifts. Gains achieved in one environment can vanish if the audience or acquisition channels change, exposing weaknesses in prior optimization strategies.

When should you reverse a redesign after a conversion drop?

Reversal is recommended only if four conditions are met: the decline is severe and ongoing, external factors are excluded, user feedback signals rejection, and friction is directly linked to new design features. Often, careful measurement and analysis should precede any rollback decision.

Can analytics or tracking issues cause apparent conversion drops after redesign?

Absolutely. Analytics misconfigurations, lost tags, or SEO errors during a redesign can misrepresent user behavior by undercounting conversions. Always verify your measurement infrastructure before blaming the interface – addressing tracking faults often resolves ‘phantom’ conversion declines.