What You’ll Learn

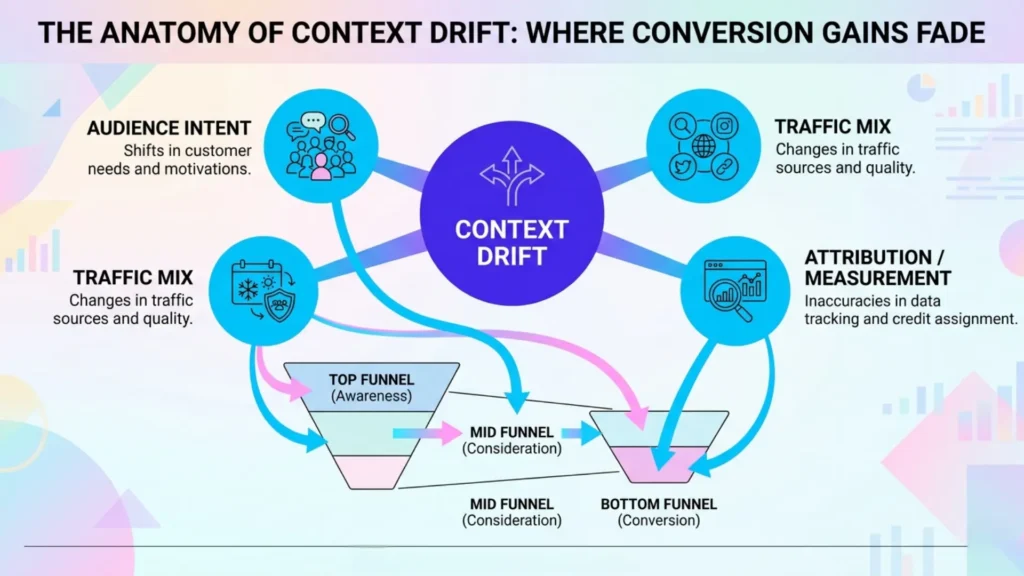

context drift

Key Takeaways

- Conversion gains often erode due to context drift, not on-page issues, as audiences, traffic sources, and measurement environments evolve.

- Major conversion instability can result from shifts in traffic intent, audience mix, seasonal demand, or attribution accuracy.

- Many teams misdiagnose conversion drops by focusing on design fixes instead of validating upstream context or tracking changes.

- Sustainable conversion optimization requires continuous context monitoring, adaptive metrics, and recognition that success is temporary and conditional.

Surprise: your best-converting page can quietly lose its edge – without anyone changing a single word, color, or button.

Most executives expect conversion rate instability after a redesign or campaign flop, not during a growth phase when the funnel “should” be humming.

So why do hard-won improvements so often fade out even as you scale?

When conversion improvements fade: what exactly changes behind the scenes?

The answer: context drift in conversion optimization is the silent reset button.

The very conditions that drove those big early wins don’t stand still – they shift underneath you, rewriting what’s possible no matter how pristine your design stays.

Let’s look at exactly what changes, and why so many marketers miss the early clues.

How shifts in audience intent and traffic mix silently undermine past wins

When your traffic mix shifts – even with zero page changes – conversion rates can slip.

Scaling spend or adding new channels often brings in less motivated, lower-intent segments.

For example: a client tripled their ad budget expecting more of the same buyers, but the new channels swamped high-intent users with colder traffic, eroding prior gains.

Here’s the repeatable insight: optimizing for yesterday’s audience won’t save you from today’s traffic mix shift.

When your traffic recipe changes, past conversion gains can erode almost overnight.

Picture a chef swapping premium ingredients for bulk alternatives – you might follow the same recipe, but the flavor just doesn’t land.

Are you still serving the same meal, or have you diluted what made it powerful?

The decay is subtle at first.

Retargeting audiences fatigue.

New sources bring higher bounce rates and more window shoppers.

Suddenly, what looked like optimization fragility is actually just your funnel telling you that intent has shifted.

If conversion rate instability sets in right after scaling, ask: did we filter for motivation or just fill the funnel?

Why measurement gaps and drift in tracking hide true performance

What if your numbers lie?

As tracking standards shift and privacy updates break attribution, the picture gets muddy.

A sudden drop in reported conversions may have nothing to do with user experience or page failure – sometimes it’s measurement drift, not market rejection.

We’ve seen this firsthand while auditing client data.

One enterprise lost track of 15% of its mobile conversions after a cookie law change – panic ensued, but the “decline” was purely technical.

Another client noticed a conversion slump that mapped perfectly to a broken pixel, not a broken offer.

It’s like your car’s dashboard showing the gas tank as empty, while the fuel line is actually fine – diagnosing a breakdown when the instrumentation is off leads to wasted spend and false alarms.

Is the conversion decline real, or did tracking decay mask your true performance?
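
One way to separate a real decline from tracking decay is to compare what analytics reports against a source of truth the business already holds. The sketch below assumes you can pull conversion counts from a backend system (an order database or CRM, for example) next to the counts your analytics tool reports. The segment names, the numbers, and the 5-point tolerance are all hypothetical, chosen to mirror the 15% mobile gap described above.

```python
def capture_rate(tracked, backend):
    """Share of backend-recorded conversions that analytics also reported."""
    if backend == 0:
        return None  # no backend conversions, so nothing to compare against
    return tracked / backend


def flag_tracking_gaps(baseline, current, tolerance=0.05):
    """Return segments whose capture rate fell more than `tolerance` below baseline.

    Both arguments map a segment name to a (tracked, backend) pair of counts.
    """
    flagged = {}
    for segment, (tracked, backend) in current.items():
        now = capture_rate(tracked, backend)
        before = capture_rate(*baseline[segment])
        if now is None or before is None:
            continue
        if before - now > tolerance:
            flagged[segment] = round(before - now, 3)
    return flagged


# Hypothetical counts: (conversions seen by analytics, conversions in the backend)
baseline = {"mobile": (980, 1000), "desktop": (1470, 1500)}
current = {"mobile": (830, 1000), "desktop": (1465, 1500)}

gaps = flag_tracking_gaps(baseline, current)
print(gaps)  # only mobile is flagged; desktop capture is nearly unchanged
```

If backend conversions hold steady while the capture rate falls, the "decline" lives in the instrumentation, not in the offer.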

The difference between optimization decay and contextual conversion decline is often hidden behind the scenes – until someone asks the right question.

Effort spent blaming the page obscures the real story: context is always shifting.

The most profitable conversion strategy is knowing what changed, not just what broke.

Why success isn’t permanent: expectations, seasonality, and optimization decay

Most companies treat a bump in conversion rate as a trophy – locked in, celebrated, banked for the year.

But those wins have an expiration date that almost nobody budgets for.

The real surprise?

Your conversion “high score” is less about digital page design and more a product of external shifting tides – seasonal markets, audience expectations, and the invisible pull of optimization decay.

Why seasonal and competitive forces reset the conversion baseline

Imagine launching a campaign in Q4 and watching performance soar, only to see that same landing page stall in February – even before you touch spend or creative.

That’s not sudden failure; it’s context drift in conversion optimization at work.

Seasonal conversion shifts behave like ocean tides – your baseline rises and falls beneath you, even as you stand still.

We’ve seen B2B SaaS teams panic when post-holiday leads dry up, convinced a funnel update or competitor poaching is to blame.

In reality, the whole market cycle has shifted: budgets reset, intent drops, priorities move elsewhere.

What about years when macro headwinds or a competitor’s market entry quietly stifle the entire sector’s appetite?

That baseline slips, eroding even top-performing pages.

A myth persists: “If it worked last quarter, it should work now”.

But unlike a static asset, your conversion opportunity is perpetually renegotiated by forces outside your control.

Are you benchmarking against your genuine addressable market, or a memory from peak season?
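
A quick way to answer that question is to compare the current period against the same period last year rather than against last year's peak. The sketch below is illustrative only: the monthly rates are invented, and `benchmark` is a hypothetical helper, not part of any analytics tool.

```python
def relative_change(current, reference):
    """Relative change of `current` versus `reference` (e.g. -0.05 means 5% lower)."""
    return (current - reference) / reference


def benchmark(history, year, month):
    """Compare one month against the same month last year and last year's peak.

    `history` maps (year, month) to a conversion rate.
    """
    current = history[(year, month)]
    same_month_last_year = history[(year - 1, month)]
    prior_year_peak = max(rate for (y, _), rate in history.items() if y == year - 1)
    return {
        "vs_same_month_last_year": relative_change(current, same_month_last_year),
        "vs_prior_year_peak": relative_change(current, prior_year_peak),
    }


# Hypothetical conversion rates: a Q4 peak and two Februaries
history = {
    (2023, 2): 0.042,
    (2023, 11): 0.065,
    (2024, 2): 0.040,
}

result = benchmark(history, 2024, 2)
for label, change in result.items():
    print(f"{label}: {change:+.1%}")
```

With these numbers, February looks like a collapse when measured against the Q4 peak, yet it sits within a few percent of the previous February. Which comparison you choose decides whether the team sees a crisis or a normal seasonal trough.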

How optimization gains wear out and require shifting evaluation standards

Most optimization gains fade for reasons no A/B test will reveal.

Initial improvements often impress because everyone’s paying attention – a narrative fueled by novelty and unmet demand.

But as fatigue sets in, the same offer or message loses its punch.

Scaling effects on conversion show up as diminishing returns, not sudden collapse; the applause grows quieter, even if the stage looks the same.

We’ve watched high-performing e-commerce promos lose impact over a few cycles, prompting teams to chase tactical tweaks when the real issue was simple: people get used to what was once new.

Worse, growth targets stay anchored to a fading success, so leaders miss the signs of optimization fragility.

Think of conversion gains like the freshness of produce: yesterday’s peak becomes today’s average as soon as external context shifts.

Measurement standards must adapt too.

What counted as a win at launch might be mediocrity in a new quarter, or after a competitor mimics your move.

Are you evolving your evaluation – or calcifying around old highs?

Success, in conversion optimization, is only ever rented.

Seasonal waves, shifting expectations, and optimization decay mean the conversion rate you celebrate is temporary by design.

Next: Let’s shift the blame from the page itself – and decode when and how context drift is actually the hidden culprit.

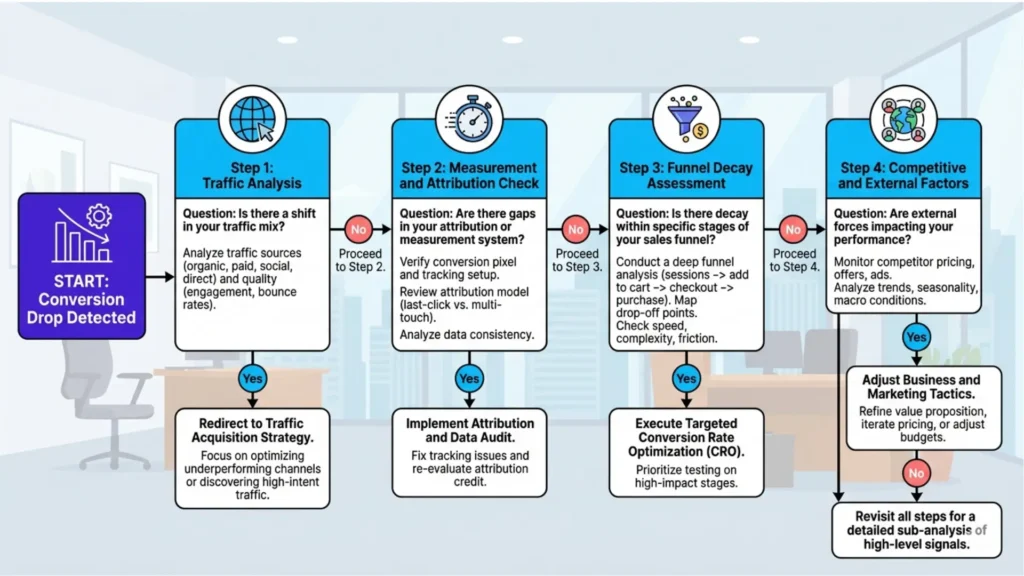

How to stop blaming the page – and start diagnosing context-driven conversion shifts

Most teams chase redesigns the moment conversions slip – when the real problem is rarely on the page.

The toughest truth: conversion rate instability is often triggered before a visitor ever lands, and changing copy or layouts won’t fix what started upstream.

If your instinct is to tweak a CTA, ask: what if nothing visible on the page actually broke?

Evaluating if the root cause lies upstream, not on the page

Here’s the myth: “Our conversion fell, so our landing page failed”.

In practice, repeated client audits reveal a different culprit nearly every time.

Picture a high-performing landing page converting at 8%.

Traffic scales, budgets increase, and – despite zero updates – the rate slides to 5%.

We’ve seen executive teams waste quarters optimizing color, copy, even testimonials, while missing the silent swap: channel sources changed, intent dropped, or tracking degraded.

Think of it like an engine running on different fuel.

The page could be flawless, but weaker intent, broader targeting, or inaccurate measurement will sabotage outcomes.
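
The arithmetic behind that 8% to 5% slide is worth seeing once. The sketch below uses invented numbers and assumes just two traffic segments, "search" and "display", whose own conversion rates never change. Only the share of visitors coming from each segment moves.

```python
# Hypothetical segment data: visitors and conversions per traffic source.
# Each segment converts at the same rate before and after scaling.
before = {
    "search": {"visitors": 7_500, "conversions": 750},     # 10% segment rate
    "display": {"visitors": 2_500, "conversions": 50},     # 2% segment rate
}
after = {
    "search": {"visitors": 11_250, "conversions": 1_125},  # still 10%
    "display": {"visitors": 18_750, "conversions": 375},   # still 2%
}


def blended_rate(segments):
    """Overall conversion rate across all segments combined."""
    visitors = sum(s["visitors"] for s in segments.values())
    conversions = sum(s["conversions"] for s in segments.values())
    return conversions / visitors


def segment_rates(segments):
    """Conversion rate of each segment on its own."""
    return {name: s["conversions"] / s["visitors"] for name, s in segments.items()}


def traffic_share(segments):
    """Share of total visitors contributed by each segment."""
    total = sum(s["visitors"] for s in segments.values())
    return {name: s["visitors"] / total for name, s in segments.items()}


print(f"blended before: {blended_rate(before):.1%}")  # 8.0%
print(f"blended after:  {blended_rate(after):.1%}")   # 5.0%
print(traffic_share(before), traffic_share(after))
```

Nothing on the page changed and neither segment converts worse than before, yet the headline rate drops by three points because display traffic grew from a quarter of visitors to well over half. Segmenting before redesigning is what makes that visible.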

Has your new campaign blended cold display traffic with what was once high-intent search?

Are referral partners sending less qualified visitors?

Even privacy tweaks or attribution lags can sabotage what looks like a stable conversion asset.

When diagnosing conversion decline, the first move must be to test your upstream assumptions.

Are you measuring the same audience, with the same level of awareness – and with true performance signals, not just defaults pulled from analytics dashboards?

Ask: what changed off the page?

Key signals that tell you whether this is context drift, not design failure

Key Signals Indicating Context Drift vs. Design Failure

| Decline Pattern | Key Metrics to Monitor | Escalation Criterion |
|---|---|---|
| Channel Shift | Sustained drop in CTR from new sources | Multiple weeks of continuous CTR decline post-launch |
| Measurement Changes | Rise in unattributed or inconsistent conversions | Clear tracking changes confirmed in analytics logs |
| Funnel Drop-off | Gradual conversion decay at specific funnel stages | Consistent drop-off observed across reporting periods |
| Cross-Channel Patterns | Declines across several unrelated channels | Multi-channel signals align before action taken |

Key signals for context drift:

- Sudden drop in click-through rates or conversions after a traffic source shift.

- Attribution discrepancies or conversions reporting inconsistently across analytics tools.

- Increased bounce rate or sudden audience behavior changes without onsite changes.

- Loss of quality leads or sales despite stable page experience.

- Tracking code issues, form drop-off, or latency that mimic conversion decline.

Review these before diagnosing page failure.

A classic practitioner pattern: hot traffic cools.

Cost per acquisition inches up.

Lead quality tanks without warning, even when heatmaps and engagement metrics look stable.

If your funnel used to convert high-intent segments and now sees generic or impulse visitors, expect optimization fragility – no page rewrite will revive rates until the context shifts back.

Ignoring context drift when diagnosing low conversion is like updating your storefront while traffic patterns outside divert your main customers.

The environment matters as much as the page.

Stop hunting for flaws in the visible.

The strongest teams validate context shifts before they move a pixel.

The next section breaks down which signals actually confirm drift, so you know if the root problem is on the surface – or hiding upstream.

Don’t overlook operational noise either – sometimes tracking code breaks, user interface tweaks, or slow load times can mimic context drift.

Distinguish external context factors from technical or form-based causes for full clarity.

When context drift is diagnosed: what to look at next

Most teams diagnose context drift, then instantly lunge for surface tweaks – missing the hard truth: diagnosing drift is a fork, not a fix.

You’re not facing a simple repair; you’re entering a decision maze with more dead ends than shortcuts.

The problem isn’t “something broke” – it’s, “What exactly shifted underneath, and which lever even matters now?”

Distinguishing between traffic mix, attribution, and funnel drift

Not all context drift is created equal.

Diagnosing the root is like separating three lookalike actors – each plays their part, but only one is stealing the scene.

One of our clients saw conversion rate instability every time they ramped up spend on a new channel.

On inspection, the traffic mix had shifted toward lower-intent audiences, quietly sabotaging the conversion baseline.

Chasing attribution fixes there would have wasted cycles – because it wasn’t a measurement issue after all.

In another case, a sudden dip looked like classic optimization decay.

But the real culprit was attribution drift: tracking changes meant new touchpoints were miscounted, and the team was optimizing for a mirage.

One simple analogy – the difference between traffic drift, attribution gaps, and funnel decay is like three leaks in a boat: only one floods the hull, but you need the right toolkit to find which.

So, is your conversion drop driven by weaker traffic, tracking misfires, or a deeper funnel shift?

Each route demands a different analysis – traffic segmentation tools, attribution audits, or deep funnel forensics.

Try to treat them the same, and you guarantee misdiagnosis.

When to confirm drift before escalating – metrics and decision checkpoints

Metrics and Decision Checkpoints for Confirming Context Drift

| Signal Category | Example Indicator | Diagnostic Insight |
|---|---|---|
| Traffic Behavior | Sudden drop in CTR or conversions after traffic source shift | Indicates likely context drift via change in visitor mix |
| Attribution Metrics | Conversions reporting inconsistently across tools | Suggests attribution or tracking issues causing misinterpretation |
| User Engagement | Increased bounce rate without onsite changes | Signals decline caused by audience behavior shifts, not page failure |
| Lead Quality | Loss of quality leads despite stable page experience | Points to upstream intent or traffic quality changes |

Here’s where most decision-makers get tripped up: acting too soon and waiting too long both cause more damage than the drift itself.

The temptation is to escalate after a bad week, but context drift reveals itself in patterns, not blips.

Set clear checkpoints – are decline patterns tied to channel shifts, seasonality, or measurement changes?

For one client, we held escalation until three separate signals aligned: sustained CTR drop from a new source, a rise in unattributed conversions, and clear funnel drop-off after a traffic pivot.

Only then did we greenlight a full diagnostic cycle.
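
That "wait until three signals align" rule can be written down so nobody escalates on a single bad week. The sketch below is one possible encoding, not the process used with that client: the metric names, the weekly values, and the three-week window are all assumptions for illustration.

```python
def is_sustained(values, direction, min_weeks=3):
    """True if the last `min_weeks` week-over-week changes all move in `direction`."""
    if len(values) < min_weeks + 1:
        return False  # not enough history to call it a pattern
    recent = values[-(min_weeks + 1):]
    steps = [later - earlier for earlier, later in zip(recent, recent[1:])]
    if direction == "down":
        return all(step < 0 for step in steps)
    return all(step > 0 for step in steps)


def drift_signals(weekly):
    """Evaluate the three signals separately so each can be reviewed on its own."""
    return {
        "ctr_decline": is_sustained(weekly["ctr_new_source"], "down"),
        "unattributed_rise": is_sustained(weekly["unattributed_share"], "up"),
        "funnel_dropoff": is_sustained(weekly["funnel_stage_rate"], "down"),
    }


def should_escalate(weekly):
    """Escalate only when all three signals hold at the same time."""
    return all(drift_signals(weekly).values())


# Hypothetical weekly metrics, oldest first
aligned = {
    "ctr_new_source": [0.031, 0.029, 0.026, 0.024],
    "unattributed_share": [0.08, 0.10, 0.13, 0.15],
    "funnel_stage_rate": [0.42, 0.40, 0.37, 0.35],
}
one_bad_week = {
    "ctr_new_source": [0.031, 0.032, 0.031, 0.027],
    "unattributed_share": [0.08, 0.08, 0.09, 0.08],
    "funnel_stage_rate": [0.42, 0.43, 0.42, 0.41],
}

print(should_escalate(aligned))       # True
print(should_escalate(one_bad_week))  # False
```

Keeping the three checks separate means the output also shows which drift type is leading, and that tells you which analysis to run next.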

What should you look for?

Consistent, multi-metric signals.

Does the drop show up only after a campaign launch, or across several unrelated channels?

Are tracking changes visible in your analytics logs?

Or is the decay gradual and specific to one funnel stage?

The right threshold isn’t about perfection – it’s about stacking enough evidence to avoid wild goose chases.

Recognize that a context drift diagnosis isn’t the end – it’s the handoff.

The moment that pattern locks in, the right next step is targeted, not vague: investigate which drift type dominates, confirm with converging metrics, and funnel your team’s effort with intent.

Stay surgical.

Guesswork here is the costliest mistake.

Scientific context and sources

The sources below provide foundational context for how decision-making, attention, and performance dynamics evolve under scaling and constraint conditions.

- Attention, Intent, and Decision-Making

“Selection for Action: Some Behavioral and Neurophysiological Considerations of Attention and Action” – Allport, A. – Attention and Performance Journal

Explores how shifts in attention and intention directly shape user actions and outcomes, underlying the mechanism behind changing performance under shifting conditions.

https://www.taylorfrancis.com/chapters/edit/10.4324/978131562799-18/selection-action-behavioral-neurophysiological-considerations-attention-action-alan-allport

- Optimization Decay and Novelty Effects

“Dissociating Mere Exposure and Repetition Priming” – Butler & Berry – Memory & Cognition

Demonstrates that repeated exposure changes processing and preference, but effects are non-linear and can plateau or reverse, explaining why repeated optimization or repeated stimuli lose effectiveness over time.

https://link.springer.com/article/10.3758/BF03195866

- Attribution and Measurement Drift

“Attribution Strategies and Return on Keyword Investment in Paid Search Advertising” – Hongshuang (Alice) Li, P. K. Kannan, Siva Viswanathan, Abhishek Pani – Marketing Science

Provides a technical foundation for understanding attribution breakdowns, tracking drift, and regulatory impact on data reliability and performance measurement.

https://pubsonline.informs.org/doi/10.1287/mksc.2016.0987

- Contextual Variation in Online Decision Outcomes

“Choice in Context: Tradeoff Contrast and Extremeness Aversion” – Simonson & Tversky – Journal of Marketing Research

Demonstrates that consumer decisions are highly sensitive to contextual framing and surrounding options, meaning that identical offers can perform differently depending on environmental signals and comparison sets.

https://journals.sagepub.com/doi/10.1177/002224379202900301

Questions You Might Ponder

Why do conversion rates drop even when the website stays the same?

Conversion rates can decline when external factors change, like shifts in audience intent, traffic sources, or market conditions. Even without page edits, context drift can undermine past optimizations, revealing that performance is shaped by broader ecosystem shifts – not just page design.

How does context drift impact digital marketing ROI?

Context drift alters the effectiveness of existing conversion assets. As audience characteristics, intent, or attribution accuracy change, previously successful tactics may generate weaker ROI, forcing marketers to adopt continuous measurement and refresh their strategies to sustain performance.

What are the main signs that conversion decline is caused by context, not design?

Key signals include sudden drops after traffic source changes, inconsistent reporting across analytics tools, stable on-site engagement but lower lead quality, or unexplained metric shifts following compliance or tracking updates. These indicate issues upstream of design.

How should teams diagnose conversion drops before redesigning pages?

Teams should first analyze traffic mix, attribution pathways, and seasonal or competitive changes before making design changes. Validating whether measurement or external context shifted prevents wasted effort fixing the page when the real root lies offsite or upstream.

Can seasonal and competitive shifts erase previous conversion success?

Yes, seasonality and competitive forces continually reset conversion baselines. Performance highs set during peak season may disappear as demand wanes or new competitors enter, making ongoing context analysis essential for sustaining digital gains.