Key Takeaways

- Scaling traffic shifts audience intent, causing blended conversion rates to drop even if core segments remain strong.

- True insights come from segment-level metrics – blended averages often hide high-performing channels and emerging friction.

- Traffic surges expose hidden technical and attribution weaknesses, amplifying even minor performance problems.

- Persistent conversion volatility signals structural frailty; rapid recovery within key segments suggests healthy adaptation.

Most teams celebrate surging visitor numbers – until the conversion rate chart nose-dives.

The real surprise?

That drop doesn’t mean your site suddenly “got worse”.

It means the people arriving changed – and their intent diluted.

Understanding this traffic mix effect on conversion is where real growth conversations start, not end.

How scaling traffic changes who visits – and why that matters

Why average conversion drops when cold traffic grows

Impact of Adding Cold Traffic on Overall Conversion Rate

| Visitor Segment | Number of Visitors | Conversion Rate | Conversions |
|---|---|---|---|
| High-Intent Visitors | 1,000 | 8% | 80 |
| New Paid (Cold) Visitors | 1,000 | 2% | 20 |
| Total (Blended) | 2,000 | 5% | 100 |

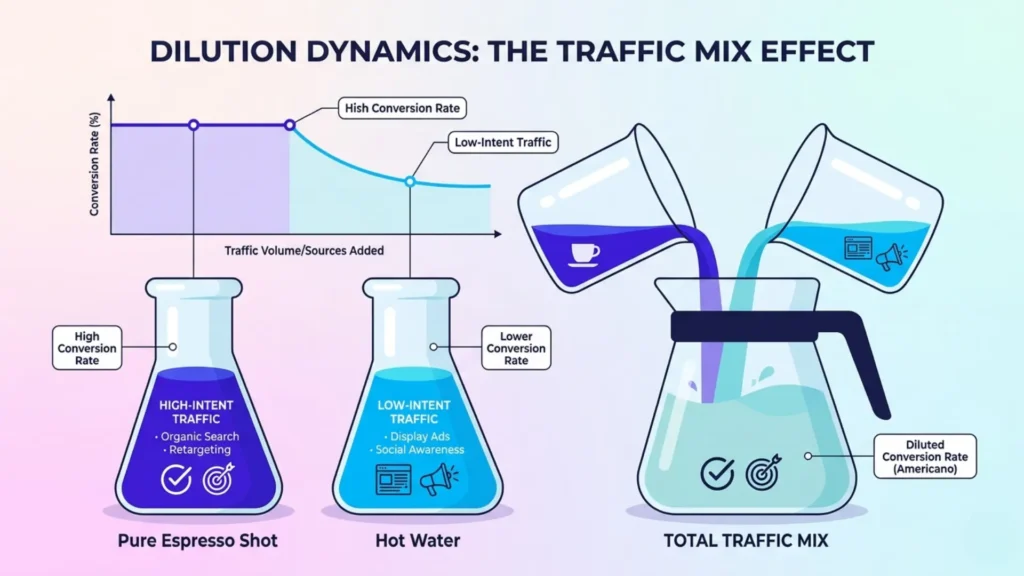

The difference between doubling total traffic and doubling qualified leads is simple math, but almost no one actually runs it.

Adding more visitors rarely boosts sales in lockstep.

Instead, as ad budgets increase or platforms broaden targeting, you pull in colder, less purchase-ready segments alongside your original high-intent audience.

In client audits, we see this nearly every time: legacy channels keep converting at the same clip, but diluted averages make it look like the whole engine lost power.

That’s the blended conversion rate decline – the “watered-down” effect that creates panic where precision is needed.

For example, if 1,000 high-intent visitors convert at 8% (80 sales) and you add 1,000 new paid visitors converting at 2% (20 sales), your total conversion rate drops from 8% to 5% – even though your core audience hasn’t changed at all.
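To make that arithmetic concrete, here is a minimal Python sketch. The function name `blended_rate` and the `(visitors, rate)` input shape are illustrative assumptions, not part of any analytics tool.

```python
def blended_rate(segments):
    """Blended conversion rate for a list of (visitors, conversion_rate) pairs."""
    total_visitors = sum(visitors for visitors, _ in segments)
    total_conversions = sum(visitors * rate for visitors, rate in segments)
    return total_conversions / total_visitors


core = (1000, 0.08)  # high-intent visitors from the example above
cold = (1000, 0.02)  # newly added paid visitors from the example above

print(f"{blended_rate([core]):.1%}")        # 8.0%
print(f"{blended_rate([core, cold]):.1%}")  # 5.0%
```

The core segment's own rate never changes in this sketch. Only the weighting does, which is why the blended number falls.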

Picture pouring a shot of espresso (high-intent search) into a mug of hot water (cold social traffic).

The more water, the weaker each sip tastes – no matter how strong the original shot.

Conversions work the same way: your blended metrics hide which sources are pulling down the average.

The biggest myth is that scaling will automatically lift topline results.

In reality, average conversion rate decay during scale is almost always a sign you’re collecting more “maybe” traffic, not more buyers.

Smart teams measure conversion sensitivity to traffic quality at the segment level, so declining averages don’t obscure segments that are still working.

Here’s the line to remember: Blended metrics describe reality, but only segmented performance diagnoses it.

When increased visitor volume creates misleading downward drag

Here’s where the problem doubles back: as volume increases, overall conversion often drops – but your hero segments may be untouched, or even improved.

We’ve witnessed paid campaigns where high-intent audiences held steady at 8%+ conversion, while the expanded mix slumped to 2% or less.

The new, colder cohort exerts a misleading downward drag, masking where momentum remains strong.

Why does this confuse so many teams?

Because dashboards aren’t built to separate signal from noise.

They flatten nuanced patterns into one blended metric, so sharp momentum in one area becomes invisible under a tsunami of tepid clicks elsewhere.

Ask yourself: if you suddenly lost all low-intent paid traffic, would your real customer volume drop – or would your perceived “problem” with conversion vanish?

Real diagnosis means pulling the layers apart, not just watching the surface number slide.
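One way to run that thought experiment is to split session-level data by segment and then recompute the totals with the cold segment removed. The sketch below assumes a simple list of `(segment, converted)` rows. The segment names, the counts, and both function names are hypothetical.

```python
from collections import defaultdict


def rates_by_segment(sessions):
    """Map each segment to its own conversion rate.

    sessions: iterable of (segment, converted) pairs, one per visit.
    """
    visits = defaultdict(int)
    conversions = defaultdict(int)
    for segment, converted in sessions:
        visits[segment] += 1
        conversions[segment] += int(converted)
    return {seg: conversions[seg] / visits[seg] for seg in visits}


def summary_without(sessions, excluded):
    """Return (conversions, rate) after dropping the excluded segments."""
    kept = [converted for segment, converted in sessions if segment not in excluded]
    if not kept:
        raise ValueError("no sessions left after exclusion")
    conversions = sum(kept)
    return conversions, conversions / len(kept)


# Illustrative data only: segment names and counts are made up.
sessions = (
    [("search", True)] * 80 + [("search", False)] * 920
    + [("paid_social", True)] * 20 + [("paid_social", False)] * 980
)

print(rates_by_segment(sessions))                  # {'search': 0.08, 'paid_social': 0.02}
print(summary_without(sessions, set()))            # (100, 0.05)
print(summary_without(sessions, {"paid_social"}))  # (80, 0.08)
```

In this made-up data, dropping the cold segment costs 20 sales while the rate returns to 8%. The "problem" lived in the mix, not in the core audience.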

This early dip isn’t a sign of failure.

It’s a sign to tune your lens and segment hard – for clarity, not comfort.

If your conversion rate changes as your visitor story changes, you’ve found leverage for smarter optimization, not evidence you’re failing to scale.

What traffic mix shifts say about on-page decision friction

The stubborn truth is that most “conversion rate drops” during scale don’t signal broken pages – they expose growing decision friction from the inside out.

If you treat every dip as a UX flaw, you’ll waste quarters chasing ghosts.

The real story?

Scaling shifts your traffic mix, and with every new segment, invisible context gaps and expectation mismatches multiply.

Suddenly, once-reliable paths stall, and conversion rates seem to unravel for no obvious reason.

How intent dilution reveals uncaptured context and expectations

A B2B software team we advised had their demo signups drop sharply as they scaled paid social, despite search-driven conversion holding firm.

The culprit: an influx of visitors with different priorities and context – asking questions the site had never needed to address before.

These new segments surfaced cold intent and expectation gaps invisible to their old, high-intent base.

Think of it like building a product for runners, then advertising to “people interested in exercise” at scale.

Expectation gaps explode: Why this? Why now?

That’s the context your high-converting segments never forced you to articulate.

Conversion decay during scale isn’t a sign your offer failed; it’s a spotlight on the swaths of context and readiness your site ignores.

Here’s the insight most miss: If decision friction goes up as the mix changes, your optimization gap isn’t on the surface.

It’s buried in unread signals and mismatched mental states.

How confident are you, really, that your current page answers the questions your new segments are quietly asking?

Why conversion variance spikes under scale reflect unstable decision systems

Once volume rises, conversion doesn’t just decay – it swings.

The volatility isn’t about error bars; it shows the decision system underneath your site is fragile under stress.

Myth: big data smooths out the noise.

In reality, traffic surges amplify every small misfit between visitor intent and page proposition.

A client once ramped spend aggressively to chase Q4 goals.

Their peak day saw conversion jump, then crater, then yo-yo for a week.

Why?

The incoming crowd brought new biases, device habits, and attention patterns.

The underlying decision process wasn’t built for that spread – like widening a funnel’s top without reinforcing the spout.

Conversion instability after scaling is a system alarm, not random luck.

If conversion feels like a rollercoaster the moment you broaden the mix, you haven’t fixed on-page barriers – you’ve just exposed their reach.

Are you seeing a normal adjustment, or has scale exposed that your decision structure collapses under load?

That’s where optimization fragility really bites.

Conversion turbulence at scale isn’t random.

It’s the system reporting its weakest seams, waiting for you to see what friction is quietly driving profit off the table.
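To check whether daily swings exceed what ordinary sampling noise would produce, one common approach is a dispersion ratio: the Pearson chi-square statistic of daily conversions against the pooled rate, divided by its degrees of freedom. This is a rough sketch, not a full statistical test. The function name and the sample weeks are assumptions for illustration.

```python
def dispersion_ratio(days):
    """Pearson chi-square statistic divided by its degrees of freedom.

    days: list of (visitors, conversions) tuples, one per day.
    Near 1: swings are about what binomial sampling noise would produce.
    Well above 1: the underlying rate itself is moving from day to day.
    """
    if len(days) < 2:
        raise ValueError("need at least two days")
    total_visitors = sum(v for v, _ in days)
    total_conversions = sum(c for _, c in days)
    p = total_conversions / total_visitors  # pooled rate across the window
    if not 0 < p < 1:
        raise ValueError("pooled rate must be strictly between 0 and 1")
    chi2 = sum((c - v * p) ** 2 / (v * p * (1 - p)) for v, c in days)
    return chi2 / (len(days) - 1)


# Illustrative weeks only: both pool to a 5% rate, but behave very differently.
stable_week = [(1000, 52), (1000, 48), (1000, 50), (1000, 51), (1000, 49)]
volatile_week = [(1000, 90), (1000, 20), (1000, 70), (1000, 15), (1000, 55)]

print(round(dispersion_ratio(stable_week), 2))    # well below 1
print(round(dispersion_ratio(volatile_week), 2))  # far above 1
```

Running it per segment rather than on the blended series helps show which audience is driving the instability.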

When site and platform capacity worsen conversion outcomes under load

Most conversion rate headaches at scale aren’t about your offer or your message – they’re about what breaks when velocity hits your infrastructure.

That celebratory traffic spike?

It often hides a bottleneck: technical systems not designed for sudden surges, silently bleeding conversions.

Few teams see it coming until cart abandonment, payment failures, and subtle lags spiral out of control.

So why do small problems become fatal at scale?

How infrastructure lag and performance decay magnify small problems

Traffic scale doesn’t just reveal cracks – it widens them.

A half-second delay in load time that went unnoticed at 1,000 visitors becomes a revenue-draining roadblock at 10,000.

Checkout steps that worked perfectly in staging can buckle under true demand, turning micro-glitches into macro-losses.

We’ve seen brands lose six figures in weekend flash sales not because demand vanished, but because a split-second timeout killed high-intent orders.

Backend reliability isn’t just a technical metric; it’s a decision accelerator or a roadblock to revenue.

One time, a B2C client’s site slowed by just 18% under load – conversion rates quietly halved, even as intent remained sky-high.

It’s like forcing thousands through a doorway that sticks just enough to kill the mood.

Most users won’t wait – they’ll give up, never flagging the true source of frustration.

So, is your real bottleneck a funnel step, or is it the system failing to deliver on demand?
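A quick way to start answering that is to bucket sessions by page-load time and compare conversion across buckets. The sketch below assumes you can export `(load_ms, converted)` pairs from your own logs. The bucket edges and the function name are hypothetical choices, not recommended thresholds.

```python
from bisect import bisect_right


def conversion_by_load_time(sessions, edges_ms=(1000, 2000, 3000)):
    """Conversion rate per page-load bucket.

    sessions: iterable of (load_ms, converted) pairs.
    edges_ms: ascending bucket boundaries in milliseconds (assumed values).
    Returns {label: rate}, with None for buckets that saw no visits.
    A label like "1000-2000ms" covers 1000 <= load_ms < 2000.
    """
    n = len(edges_ms) + 1
    visits = [0] * n
    conversions = [0] * n
    for load_ms, converted in sessions:
        bucket = bisect_right(edges_ms, load_ms)  # index of the bucket this load falls in
        visits[bucket] += 1
        conversions[bucket] += int(converted)

    labels = [f"<{edges_ms[0]}ms"]
    labels += [f"{lo}-{hi}ms" for lo, hi in zip(edges_ms, edges_ms[1:])]
    labels.append(f">={edges_ms[-1]}ms")
    return {
        label: (conversions[i] / visits[i] if visits[i] else None)
        for i, label in enumerate(labels)
    }


# Illustrative rows only.
sample = [(500, True), (800, False), (1000, False), (3500, True)]
print(conversion_by_load_time(sample))
```

If the slow buckets convert far worse within the same traffic segment, the bottleneck is more likely the system than the funnel step.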

When tracking dilution and attribution shifts mask true performance

Myth: what gets measured at scale tells the pure story.

Reality: more visitors create more noise, not more clarity.

The flood of extra data can dilute the signal, causing attribution models and analytics to short-circuit just when you need truth most.

We’ve watched source tracking fracture once traffic diversified – suddenly, conversions were scattered across channels, with reported drops that didn’t match actual sales.

Attribution systems can’t keep up, making real performance tough to decipher.

Algorithms misassign credit, campaign optimizations chase the wrong targets, and executive dashboards broadcast fiction as fact.

Think of your tracking like a clear window – every new bulk visitor is another smudge on the glass.

The clearer the window, the more precisely you diagnose problems.

But during scale, glare and grime obscure everything.
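A simple check on how clean the window is: reconcile what analytics attributes to channels against what the order system actually recorded. This sketch assumes you have daily per-channel tracked conversions and a backend order count. The data shape, the 0.9 floor, and the function name are all illustrative assumptions.

```python
def capture_rates(daily, floor=0.9):
    """Compare tracked conversions with backend orders, day by day.

    daily: {day: (tracked_by_channel, backend_orders)} where tracked_by_channel
           maps channel name -> conversions the analytics tool attributed.
    floor: assumed minimum share of real orders that tracking should account for.
    Returns {day: (capture_rate, flagged)}.
    """
    report = {}
    for day, (tracked_by_channel, backend_orders) in daily.items():
        if backend_orders == 0:
            continue  # nothing to reconcile on days without orders
        rate = sum(tracked_by_channel.values()) / backend_orders
        report[day] = (rate, rate < floor)
    return report


# Illustrative numbers only.
daily = {
    "day_1": ({"search": 60, "paid_social": 35}, 100),
    "day_2": ({"search": 58, "paid_social": 70}, 180),
}
for day, (rate, flagged) in capture_rates(daily).items():
    print(day, f"{rate:.0%}", "CHECK TRACKING" if flagged else "ok")
```

A falling capture rate during a ramp suggests the reported conversion drop is partly a measurement problem. A rate above 100% points to double counting.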

The system problem isn’t just technical debt or analytics misfires.

It’s the multiplier effect: every tiny delay or data error snowballs into strategic misreads.

Conversion drops under load rarely have a single cause, but one repeatable truth is clear: small technical issues become business-critical failures at scale.

If conversion losses feel mysterious as traffic ramps, look hard at the structure beneath the surge.

Under scale, infrastructure performance and measurement integrity often make or break profitable growth.

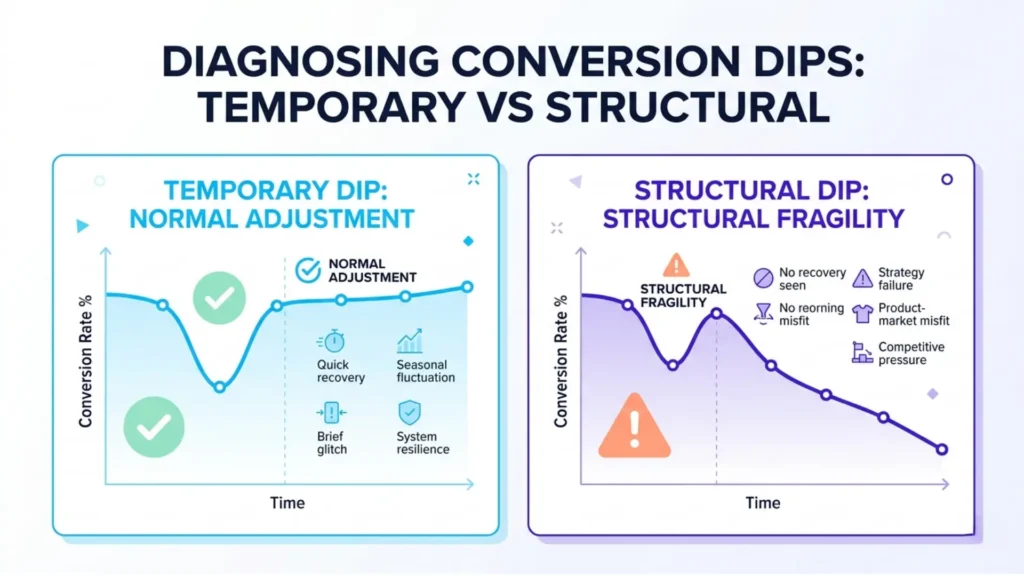

What constitutes a normal temporary dip – and when fragility becomes a structural failure

Most scaling initiatives trigger an immediate panic when conversion rates slide – but half those dips are illusions.

The real danger isn’t the first drop; it’s how quickly, or if, you recover.

The difference between a healthy adjustment and looming structural decay hides in the details executives are trained to ignore: timelines, patterns, and the consistency of outcomes.

What short-term conversion dips that “resolve themselves” look like

Characteristics of Temporary Conversion Dips vs Structural Failures

| Aspect | Temporary Dip | Structural Failure |
|---|---|---|
| Duration | Days to weeks | Months or longer |
| Recovery Pattern | Rapid bounce-back, stable patterns | Compounding variance, no stable baseline |
| Segment Performance | High-value cohorts recover first | Persistent loss across cohorts |

There’s a myth that every post-scale dip spells disaster.

The reality: most temporary declines follow predictable learning-curve mechanics.

When campaigns expand, fresh audience segments introduce a rush of weak intent, dragging the blended conversion rate down.

But if your system is fundamentally sound, you’ll see a bounce-back within days to weeks – not months.

What does resolution look like?

Patterns stabilize fast.

Acquisition costs settle.

High-intent segments recover performance first.

In our client data, we’ve seen dips as steep as 22% snap back to par within two weeks after dialing in message-audience fit.

Small iterative tweaks – like refining creative by segment or repositioning lead magnets – often restore rates without major intervention.

Think of a site under scale like a restaurant staffed for lunch, suddenly getting a noisy dinner crowd.

For a short time, service quality craters.

But a good team regroups quickly – identifying bottlenecks, updating routines, smoothing flow.

The best signal that the operation is resilient?

Rapid adjustment without systemic breakdown.

Is your dip resolving, or still deepening?

Watch whether your highest-value cohorts return to baseline.

If the worst of the decline corrects within a typical sales cycle, you’ve got normal fragility – not structural failure.
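That rule of thumb can be sketched as a small check on your highest-value cohort's daily conversion rate. The tolerance, the three-day hold, and both function names below are assumptions to tune, not fixed standards.

```python
def days_to_recover(daily_rates, baseline, tolerance=0.05, hold_days=3):
    """Index of the first day that starts `hold_days` consecutive days
    at or above baseline * (1 - tolerance); None if that never happens."""
    floor = baseline * (1 - tolerance)
    for start in range(len(daily_rates) - hold_days + 1):
        if all(rate >= floor for rate in daily_rates[start:start + hold_days]):
            return start
    return None


def classify_dip(daily_rates, baseline, sales_cycle_days):
    """Label a dip by whether the cohort recovers within one sales cycle."""
    recovered_on = days_to_recover(daily_rates, baseline)
    if recovered_on is not None and recovered_on < sales_cycle_days:
        return "temporary dip"
    return "needs system-level review"


# Illustrative series only: daily rates for a high-intent cohort after scaling.
rebounding = [0.062, 0.065, 0.070, 0.077, 0.079, 0.081, 0.080]
flat = [0.060] * 20

print(classify_dip(rebounding, baseline=0.08, sales_cycle_days=14))  # temporary dip
print(classify_dip(flat, baseline=0.08, sales_cycle_days=14))        # needs system-level review
```

Requiring several consecutive days near baseline keeps a single lucky day from being read as recovery.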

What patterns signal deeper decay that needs system-level attention

Not all drops are recoverable.

The warning signs start subtle: conversion bounces back in fits and starts, or flattens at a new, lower average.

Instead of volatility giving way to a new baseline, you see compounding variance – yesterday’s fixes don’t stick, and the path to recovery is always “just one change away”.

One hard lesson from scaling B2B SaaS clients: the structural failures rarely come from obvious oversights.

They surface as persistent soft spots – channels that never regain ground, or automation sequences that produce more drop-offs as volume grows.

If your analytics show a slow bleed across every cohort rather than a sharp correction, that’s the early signal of system-level fragility.

Ask yourself: are you seeing repeated problems with session loss during checkout, wild conversion swings tied to campaign timing, or offers that used to win now landing flat?

These patterns point to an unstable foundation – optimization fragility exposed by scale.

Quick fixes stall, troubleshooting feels endless, and the conversion rate charts begin to show more noise than meaning.

Temporary dips are normal turbulence.

But if your “storm” turns into a climate, the diagnosis changes.

The line between normal and structural is visible in consistent recovery.

If that never arrives, it’s not a dip – it’s a signal your system needs an intervention.

Scientific context and sources

The sources below provide foundational context for how decision-making, attention, and performance dynamics evolve under scaling and constraint conditions.

- Human Cognitive Limits in Decision Making

“Thinking, Fast and Slow” – Daniel Kahneman – Farrar, Straus and Giroux

Kahneman’s work on dual-system thinking explains why new visitor segments introduce unpredictable friction, impacting conversion rates as cognitive load and decision variables multiply.

https://en.wikipedia.org/wiki/Thinking,_Fast_and_Slow

- Impact of Choice Overload on Engagement

“When Choice is Demotivating: Can One Desire Too Much of a Good Thing?” – Sheena S. Iyengar, Mark R. Lepper – Journal of Personality and Social Psychology

Seminal study on how increased options (akin to diverse traffic mix) can decrease engagement and conversion due to decision fatigue.

https://psycnet.apa.org/record/2000-16701-012

- Technical Bottlenecks and Web Performance

“On Latency of E-Commerce Platforms” – Marcus Basalla et al. – Journal of Organizational Computing and Electronic Commerce

Shows that even small increases in latency significantly reduce conversion rates and user satisfaction, with stronger effects for mobile and high-intent users, demonstrating that technical infrastructure (not CRO) directly drives conversion loss.

https://doi.org/10.1080/10919392.2021.1882240

- Attribution Modeling and Analytics Accuracy

“Attribution Modelling in Marketing: Literature Review and Research Agenda” – A. Dalessandro et al. – Journal of Business Research / academic review corpus

Synthesizes decades of attribution research and shows that multi-channel attribution suffers from model bias, incomplete data, and incorrect credit allocation, leading to systematic misinterpretation of what drives conversions.

https://www.abacademies.org/articles/attribution-modelling-in-marketing-literature-review-and-research-agenda-9492.html

- Variation in Conversion as a Signal of System Fragility

“Big Data in Consumer Behavior Research: A Systematic Review” – Q. Liu et al. – Journal of Marketing Analytics / Springer

Shows that large-scale behavioral systems exhibit data quality issues, instability, and interpretability limits, where outcome variability often reflects underlying system complexity and fragility rather than surface-level performance changes.

https://link.springer.com/article/10.1057/s41270-026-00470-6

Questions You Might Ponder

Why does conversion rate typically decrease when scaling website traffic?

Conversion rates decrease as a site attracts more diverse visitor segments with lower purchase intent. This ‘traffic mix effect’ means higher volumes dilute original high-intent audiences, revealing new context gaps and decision friction – key drivers of declining average conversion.

How do traffic mix effects impact on-page optimization strategies?

Traffic mix effects expose previously unseen friction points by attracting colder audiences with new questions and needs. This requires segmenting data and tailoring on-page experiences to match each segment’s expectations, rather than relying on generic optimizations.

What technical issues worsen conversion decline during high-traffic events?

Sudden surges reveal website performance bottlenecks – such as server lag, timeouts, and tracking dilution – that may not impact small audiences but cause major abandonment at scale. Proactively stress-testing infrastructure helps keep conversion rates stable during growth.

How can teams differentiate between normal conversion dips and structural failures?

Temporary conversion dips typically resolve within a sales cycle as systems adapt and high-intent segments rebound. Persistent, non-recovering dips – or widening variance across all segments – signal deeper systemic issues requiring architecture or operational changes.

What’s the most effective way to measure conversion volatility under scaling?

Segmenting conversion data by traffic source and visitor intent provides visibility into which segments are stable or weakening. Monitoring recovery timelines and consistent cohort performance helps diagnose whether volatility is normal turbulence or deeper fragility.