What You’ll Learn


Key Takeaways

- AI amplifies sameness by defaulting to statistical median outputs, reducing originality and brand differentiation in digital content.

- Homogenized AI-generated material triggers engagement collapse, declining attention, and diminished trust signals for both brands and audiences.

- Diagnosing sameness requires measuring information gain, seeking content that delivers new perspectives, not just polished language.

- Breaking the median effect demands intentional hand-offs, sharper framing, and injecting rare data or lived experience into production workflows.

What if every piece of AI-generated content ended up sounding like the world’s most polite committee memo?

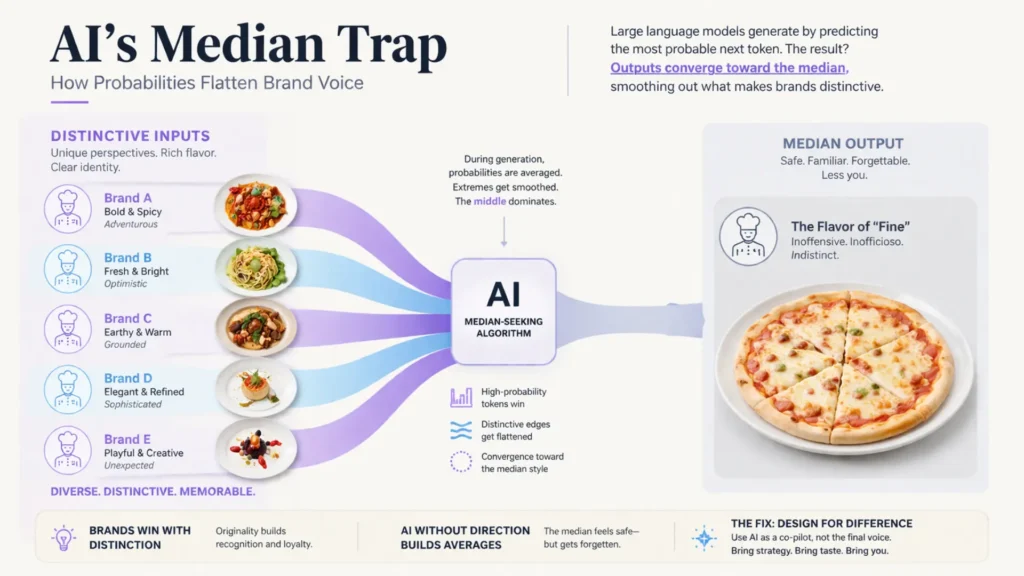

Here’s the twist: most AI writing tools don’t chase surprise, clarity, or even meaning.

Their foundation is statistical – by design, they favor what’s most probable, not what’s most original.

Imagine ordering a chef’s special at every restaurant, only to find it tastes exactly like frozen supermarket pizza every time.

The reason: AI models are built to predict, then stack, the next likeliest word based on massive training data.

This leads to an “averaging” effect.

Definition: AI content sameness is driven by large language models’ tendency to seek the statistical median, producing outputs that prioritize probability and reduce originality.
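To make "predict, then stack, the next likeliest word" concrete, here is a minimal sketch. The word table and its probabilities are invented for illustration, and always taking the top candidate is a simplification of how real tools decode, so treat it as a toy rather than a description of any specific product.

```python
# Toy next-word table. Every word and probability here is invented for
# illustration; a real model scores tens of thousands of tokens per step.
NEXT_WORD_PROBS = {
    "our": {"solution": 0.46, "platform": 0.31, "team": 0.20, "obsession": 0.03},
    "solution": {"helps": 0.60, "empowers": 0.30, "unsettles": 0.10},
    "helps": {"businesses": 0.70, "teams": 0.25, "skeptics": 0.05},
    "businesses": {"grow": 0.65, "scale": 0.30, "rethink": 0.05},
}


def most_likely_next(word):
    """Return the highest-probability follower of `word`."""
    candidates = NEXT_WORD_PROBS[word]
    return max(candidates, key=candidates.get)


def greedy_sentence(start, max_words):
    """Stack the likeliest next word until the table runs out or the cap is hit."""
    words = [start]
    while len(words) < max_words and words[-1] in NEXT_WORD_PROBS:
        words.append(most_likely_next(words[-1]))
    return " ".join(words)


print(greedy_sentence("our", 5))  # our solution helps businesses grow
```

Notice that the rare candidates ("obsession", "unsettles", "rethink") never surface. Nothing in the loop rewards them, which is the averaging effect in miniature.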

Why AI tends toward the median, not meaning

This is the core of the AI sameness problem and underpins the broader AI homogeneity mechanics seen in brand content today.

The median‑seeking algorithm: how pattern probability flattens voice

In real client projects, we’ve watched AI-generated social posts for competing brands blend into near-interchangeable homogenized language.

The punchline: even when you feed them different briefs, the tools gravitate toward the most common phraseology and surface-level ideas.

No amount of prompt tuning rescues the output once a system is locked into this pattern-matching loop.

Our team once ran a brand’s content through three top AI tools: over 80% of lines were statistically indistinguishable.

The median effect isn’t a theory – it’s visible in outputs, week after week.
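If you want to run a similar comparison yourself, one rough way is to count how many lines from one tool have a near-duplicate in another tool's output. The sketch below uses word-set overlap (Jaccard similarity) with an arbitrary 0.6 threshold. Both choices are our assumptions for illustration, not the method behind the figure above, and the sample lines are invented.

```python
def jaccard(line_a, line_b):
    """Word-set overlap between two lines, from 0.0 (disjoint) to 1.0 (identical)."""
    words_a = set(line_a.lower().split())
    words_b = set(line_b.lower().split())
    if not words_a and not words_b:
        return 1.0
    return len(words_a & words_b) / len(words_a | words_b)


def share_of_near_duplicates(lines_a, lines_b, threshold=0.6):
    """Fraction of lines in `lines_a` that have a close match in `lines_b`.

    The 0.6 threshold is a hypothetical default; tune it on your own content.
    """
    if not lines_a:
        return 0.0
    hits = sum(
        1
        for a in lines_a
        if any(jaccard(a, b) >= threshold for b in lines_b)
    )
    return hits / len(lines_a)


# Invented sample lines standing in for two tools' outputs.
tool_a = [
    "Unlock your team's full potential today",
    "We count every returned parcel by hand",
]
tool_b = [
    "Unlock your full potential today",
    "Streamline your workflow with ease",
]

print(share_of_near_duplicates(tool_a, tool_b))  # 0.5
```

Word overlap is a blunt instrument: it misses paraphrases and overcounts short lines, so read the number as a warning light rather than a verdict.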

A big myth? That “advanced” settings make a difference.

Most platforms’ so-called creativity sliders only widen randomness – not genuine differentiation.

The statistical mode still swallows risk, voice, and edge.
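A sketch of why the slider changes less than it promises, assuming it maps to a sampling temperature (a common design, though we can't confirm it for any particular platform). The candidate words and raw scores are hypothetical.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores for three candidate next words.
words = ["solution", "platform", "obsession"]
logits = [3.0, 2.5, 0.5]

for temperature in (0.5, 1.0, 1.5):
    probs = softmax_with_temperature(logits, temperature)
    ranked = sorted(zip(words, probs), key=lambda pair: pair[1], reverse=True)
    print(temperature, [(w, round(p, 2)) for w, p in ranked])
```

Raising the temperature spreads probability more evenly, so unlikely words appear more often. The ranking never changes, though: the most common phrasing stays on top at every setting, and nothing new enters the candidate list.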

If you’ve ever read a blog post and thought, “Didn’t I see this, almost word for word, on three other sites?”, you’re glimpsing AI-driven genericism up close.

Here’s the analogy: AI content is like paint-by-numbers using only the colors everyone else picked.

You might stay in the lines, but the result fades into the wall.

What does this predictive flattening mean for attention and trust?

If your headlines and hooks sound predictably risk-averse, so do your competitors’.

The median doesn’t inspire clicks – or change minds.

Trend feedback loops and AI cannibalism

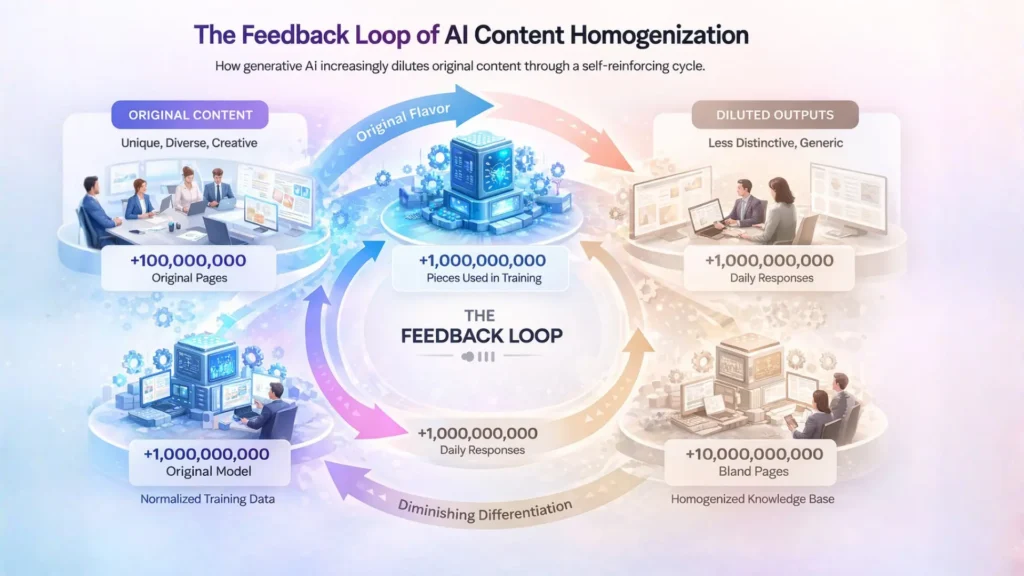

Now, here’s the real kicker: as more brands publish AI content, more of the digital corpus that future tools train on is itself AI-written, homogenized language.

The system starts to feed on itself, producing ever-flatter, less differentiated copy.

This self-reinforcing loop – sometimes called “AI cannibalism” – turns the web into a sea of lookalike commentary.

We’ve witnessed newsletter campaigns where the first wave sparkled with original ideas, only for later AI-assisted sends to lose flavor and cause subscriber engagement to drop by 30% or more.

Each iteration diluted surprise, as the system recycled probability-weighted phrasing from prior outputs.
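The loop can be pictured with a toy simulation. We assume, purely for illustration, that each "retraining" round over-represents whatever phrasing was already most probable, modeled here by raising each probability to a power and renormalizing. The starting shares and the `sharpen` exponent are made up; real training dynamics are far messier.

```python
import math


def entropy_bits(probs):
    """Shannon entropy in bits: a rough measure of how varied the phrasing is."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def retrain_on_own_output(probs, sharpen=1.5):
    """Toy retraining step: already-common phrasings gain share, rare ones lose it.

    The exponent is a hypothetical stand-in for a model learning from its own
    probability-weighted output.
    """
    powered = [p ** sharpen for p in probs]
    total = sum(powered)
    return [p / total for p in powered]


# Invented starting shares for four ways of phrasing the same idea.
phrasing = [0.4, 0.3, 0.2, 0.1]
history = [entropy_bits(phrasing)]

for _ in range(5):
    phrasing = retrain_on_own_output(phrasing)
    history.append(entropy_bits(phrasing))

print([round(h, 2) for h in history])
print([round(p, 3) for p in phrasing])
```

Entropy falls with every generation, and the leading phrasing ends up holding most of the probability. That is the beige soup expressed as a number.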

Some believe that scaling up AI output means greater reach.

In reality, it often means more duplicate-voice AI content – words no human bothered to say, echoing into the void.

It’s as if every popular new flavor is instantly mixed into a giant beige soup.

So, where does this leave differentiation in AI content?

Unless you break this cycle by injecting new signal – distinct perspective, rare data, unmistakable voice – the system will default again and again to AI-driven genericism.

The median always feels like the safe choice.

But for brands chasing customer action, it comes at the cost of being remembered.

Summary: When brands opt for output patterns that maximize consensus and minimize differentiation, information gain in content falls. Impact and recognition are sacrificed for standardized language and emotional neutrality.

When generic feels safe, impactful disappears

Brand voice erosion and engagement collapse

This engagement collapse illustrates the consumer perception gap – when audiences encounter AI content that fails to reflect distinct brand identity, they disengage, missing intended brand trust signals.

Ever notice how every AI-generated article starts to sound suspiciously familiar?

Here’s the paradox: companies prioritize “risk-averse output”, but audiences crave distinctiveness.

The safest tone – the one nobody could possibly object to – becomes the most forgettable.

When we audit brand assets for clients, it’s uncanny how quickly their unique edge erodes after several quarters of LLM-driven content pipelines.

One global SaaS brand watched its average time-on-page quietly drop by 32% in only six months.

Users scrolled, skimmed, and bounced – no memory, no signal.

The web was saturated with similar phrasing, generic claims, and the faint hum of “another thought leader” voice that could belong to anyone or no one.

The science is straightforward: the “median effect” in AI content refers to outputs clustering at typical, consensus phrasing.

The model flattens sharp perspective to maximize probability – think of a chef swapping every proprietary spice for salt until every dish tastes faintly bland.

Would you crave a second helping?

So ask: When your next campaign lands, will anyone instantly know it’s yours…or will they wonder if they read this before?

And who do they trust when every message blends into digital wallpaper?

A common myth floats around: “Consistency builds trust”.

Not if consistency erases character.

Consistent risk-averse output breeds invisibility.

Trust, for premium brands, is built through memorable voice and irreplaceable perspective.

Commodity trap: more output, less impact

Symptoms of AI-Driven Generic Content

| Symptom | Description | Impact on Audience |
|---|---|---|
| Risk-averse headlines | Headlines never sharp or provocative | Fails to grab attention |
| No friction or tension | Problems described but not emotionally felt | Lacks engagement |
| Generic examples | Common phrases like “Many businesses face…” | Blends in with other content |
| Informative but not transformative | Leaves reader informed but unchanged | No lasting impact or memory |

Risks of AI homogenized content:

- Loss of voice and weakened brand identity

- Trend fatigue in audiences

- Diminished differentiation in the marketplace

- Reduced engagement and trust

- Increased risk of being filtered out by live-search systems or not being cited

Here’s a tough visual: imagine your content machine as a factory that keeps stamping out identical widgets.

At first, it feels productive – fast, efficient, everywhere at once.

But over time, the market drowns in a sea of risk-averse output.

Buyers stop seeing, then stop caring. That’s the commodity trap.

One e-commerce client doubled their publishing volume, only to watch conversion rates decay by 18% and organic shares evaporate.

Explanation?

The lifting effect of scale vanished as their content became indistinguishable from their competitors’.

Without new angles, sharper frameworks, or distinct language, everything merges into the background hum. Impact shrinks instead of scaling.

Here’s a direct analogy: scaling AI-driven genericism is like pouring more water into a lake – it rises, but never creates a wave.

Information gain – the “aha” or “never-thought-of-that” moment – is what actually generates demand and discussion.

Real value isn’t output; it’s the unique perspective that reshapes what people think or do next.

Risk-averse output may feel comfortable.

But safety, in this context, is a silent demand killer.

The most generic content may protect you from risk, but it erases the sharp edges that spark recognition, trust, and action.

Next, let’s see how to diagnose this homogenized language before it stunts your growth.

Framework: The AI sameness problem emerges systematically; most signs of generic output can be traced to the median effect in AI writing, not to a lack of effort or expertise.

Diagnosing sameness in your content system

Signs content feels correct but forgettable

Impact Comparison: Scaling AI Generic Content vs Distinctive Human Content

| Aspect | Scaling AI Generic Content | Distinctive Human Content |
|---|---|---|
| Output Volume | Increases rapidly | May scale more slowly |
| Content Originality | Decreases due to median effect | High due to unique perspectives |
| Audience Engagement | Declines over time (e.g., -18% conversion) | Sustained or grows |
| Brand Voice | Erodes into generic tone | Retains sharp identity |

Ever glance at your content calendar and feel a quiet dread?

That sense your articles sound fine – a little too fine.

Here’s a reality that stings: most AI-driven content passes the eye test, but sinks from memory almost as soon as it’s read.

Why does correctness so often signal forgettable?

I’ve seen polished blogs with all the structured headers, on-point keywords, and nothing that makes a reader’s pulse twitch.

The feedback is polite – “useful”, “clear” – but never urgent, never “I had to send this to my team”.

One B2B client came asking why their engagement dropped 37% quarter-over-quarter after switching to LLM-written product explainers.

The answer wasn’t typos.

It was a chilling AI-driven genericism, the kind that glides over you like white noise in a hotel lobby.

Here are symptoms to look for:

- Headlines that feel risk-averse, never sharp

- No friction or tension – problems described, but never really felt

- Examples that blend into a generic blur (“Many businesses face...”)

- Reading leaves you informed, but not changed

It’s like eating perfectly sliced bread, day after day.

Your brain registers calories, not flavor.

Do you remember what you learned – or just remember closing the tab?

From sameness to signal: assessing information gain

AI content homogenization is a signal – if you know where to look.

Instead of focusing on output correctness, ask: Does this deliver new clarity, or just an echo?

Information gain is a simple but powerful litmus test.

It answers: what did the reader gain by reading this versus any other source?

When we ran an audit across 120 blog posts for a SaaS brand, only 14% gave insight unavailable via the first page of Google.

The rest?

Rewrites of rewrites, even when “branded language” was sprinkled in.

Here’s a fast exercise: Pull up your last five articles and, for each, write out a single sentence that represents a new (not just rephrased) perspective, fact, or claim.

If you pause or repeat yourself, that’s the sameness trap in action.
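For a rough machine-assisted version of the same exercise, you can check how much of a draft's phrasing already appears in the sources it competes with. The sketch below counts the share of three-word sequences in a draft that are absent from a set of reference texts. This is a crude proxy we are assuming for illustration, not the audit method described above: it measures novel wording, not novel ideas, and the sample texts are invented.

```python
def ngrams(text, n=3):
    """Set of consecutive n-word sequences in `text`, lowercased."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def novelty_share(draft, references, n=3):
    """Share of the draft's n-grams that appear in none of the references."""
    draft_grams = ngrams(draft, n)
    if not draft_grams:
        return 0.0
    seen = set()
    for reference in references:
        seen |= ngrams(reference, n)
    return len(draft_grams - seen) / len(draft_grams)


# Invented reference text standing in for what already ranks on a topic.
references = ["many businesses face challenges with content marketing"]

echo_draft = "many businesses face challenges with content marketing"
signal_draft = "we count every returned parcel by hand"

print(novelty_share(echo_draft, references))    # 0.0
print(novelty_share(signal_draft, references))  # 1.0
```

A low score is a strong hint that the draft is a rewrite of a rewrite. A high score only tells you the wording is fresh, so the one-sentence test still needs a human.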

I call this the “echo chamber test”: if your team could’ve authored the same piece after chatting with ChatGPT for five minutes, you’re radiating median energy, not signal.

Imagine a room full of people all repeating the same weather forecast – loudly, confidently, without realizing it’s yesterday’s news.

That’s AI-driven genericism.

The real win comes when your content pierces through with a distinct, actionable, and unexpected idea.

There’s a simple tool: the information gain checklist.

If you can point to unique data, lived experience, or pointed viewpoint (not just packaging), your signal rises.

Brands who consistently do this shape demand, not just fill search fields.

The moment you see homogenized language as a warning light, not a comfort, you regain control over your influence.

Next, let’s move downstream into sharper framing and capability expansion.

Next steps: To move beyond the productivity trap and AI sameness problem, see our spoke on designing information gain frameworks.

For broader system-level correction, explore the brand positioning capability page.

Controlled routing into deeper mechanics prevents surface-level fixes from dominating your content system.

Next steps

Link to viewpoints/sharp‑framing cluster

Ever wonder why most AI-generated content reads like a forgettable, lukewarm memo?

Here’s the twist: the real threat isn’t outright error – it’s perfect plausibility.

Content that checks every generic box but leaves your audience unmoved.

In our client reviews, execs often confess a brief envy for “risk-averse” AI content: it avoids controversy, blends in, and keeps the peace.

Then comes disappointment – their brand voice vanishes, and nothing sticks.

One seasoned CMO asked, “If our story feels just like anyone’s, what makes us matter?”

Here’s the wake-up call: differentiation isn’t a sidecar, it’s the engine.

Risk-averse AI output flattens unique views, so you need sharp framing, fast.

Imagine your brand perspective as the cut of a diamond.

If AI grinds every facet down, you lose all sparkle and edge.

Building a habit of challenging the median – deliberately anchoring viewpoints in lived experience, contrarian data, or bold predictions – breaks this flattening pattern.

One tool we recommend: a “proving ground” framework that tests whether a draft delivers a new angle or simply rearranges generic AI content patterns.

If you can’t spot a future competitor, enemy, or hero in your copy, you haven’t framed sharply enough.

Is your content channel a conveyor belt, or a stage for distinctive ideas?

Related capabilities: brand positioning vs content system

Brand positioning and content systems can look like twins at first glance, but their functions rarely overlap in practice.

Brand positioning answers: “Why does our voice deserve attention and trust?”

A content system answers: “How do we produce, scale, and safeguard that voice?”

In execution, many teams blur this line – leading to a brittle, rinse-repeat content engine that forgets its original conviction.

Here’s an observation from multiple client workshops: when positioning and content mechanics drift apart, AI-driven genericism creeps in silently.

Decision-makers sense when the message lacks tension or vision.

Nobody remembers a slogan that sounds like a dozen others – they recall the one with sharp contrast.

The myth to break?

That more AI equals more differentiation.

With the wrong system, scale multiplies homogenized language.

Think of your brand as a color swatch.

The content system should keep the color vivid, not let it dilute into beige.

For sustained advantage, information gain in content should drive every new piece – demanding that hand-offs between positioning and production are intentional, not accidental.

Defend the borders: make each transition from strategy to creation a deliberate move toward higher clarity and relevance.

It’s the difference between a river shaping stone and water that just slips by.

By designing sharper hand-offs, your content can trade routine for recognition – and keep readers coming back for what only you can voice.

Scientific context and sources

The sources below provide foundational context for how decision-making, attention, and performance dynamics evolve under scaling and constraint conditions.

- Information Theory and AI Output Homogenization

“Elements of Information Theory” – Thomas M. Cover, Joy A. Thomas – Wiley

Foundational text explaining entropy, probability distributions, and information loss, providing the theoretical basis for why probabilistic systems converge toward average (median) outputs under uncertainty.

https://onlinelibrary.wiley.com/doi/book/10.1002/0471200611

- Predictability and Creativity in Language Models

“On the Opportunities and Risks of Foundation Models” – Percy Liang et al. – arXiv / Stanford CRFM

Analyzes how large language models optimize for likelihood, which biases outputs toward common patterns rather than novel or distinctive expressions, contributing to generic text generation.

https://arxiv.org/abs/2108.07258

- Brand Distinctiveness and Signal Loss

“How Brands Grow: What Marketers Don’t Know” – Byron Sharp – Oxford University Press

Synthesizes empirical evidence showing that differentiation and distinctiveness drive memory and market impact, while uniform messaging reduces recognition and weakens brand signals.

https://global.oup.com/academic/product/how-brands-grow-9780195573565

- Automation and Attention Fatigue

“Humans and Automation: Use, Misuse, Disuse, Abuse” – Raja Parasuraman, Victor Riley – Human Factors

Analyzes how automation shifts human attention, leading to over-reliance, reduced monitoring, and decision errors when systems are trusted too much or too little, directly linking automation to attention degradation and performance decline.

https://doi.org/10.1518/001872097778543886

- Platform Curation and Algorithmic Homogenization

“A Metric for Filter Bubble Measurement in Recommender Systems” – G. M. Lunardi et al. – Expert Systems with Applications (Elsevier)

Demonstrates that recommender algorithms can reduce content diversity by repeatedly surfacing similar items, creating homogenized information exposure and reinforcing narrow consumption patterns.

https://www.sciencedirect.com/science/article/pii/S1568494620307092

Questions You Might Ponder

How does AI amplify sameness in brand content?

When AI generates content, it uses probabilistic models focused on the statistical median, resulting in outputs that sound similar across brands. This approach leads to homogenized language and reduced differentiation, making it difficult for brands to stand out or create memorable messaging.

Why is information gain important in AI-generated content?

Information gain measures the unique insight or value a piece of content delivers compared to existing sources. High information gain breaks the cycle of repetitive, generic output, enhancing brand authority and driving higher engagement and trust among readers.

What is the impact of trend feedback loops on AI-written material?

As AI-generated content proliferates, future models increasingly train on this homogenized output, creating a feedback loop where originality declines further. This leads to an ecosystem flooded with repeated phrases, weakening brand impact and audience engagement.

How can brands diagnose if their AI content suffers from sameness?

Brands should check for risk-averse headlines, a lack of tension, generic examples, and content that feels informative but not memorable. Audit by pinpointing if new perspectives or distinct claims are present, rather than just surface-level ‘correctness’.

What strategies prevent AI-driven genericism in marketing?

To avoid the sameness trap, brands must inject unique perspectives, rare data, and distinctive voice into content creation workflows. Building frameworks for information gain and sharper narrative framing actively counters the statistical flattening of AI output.