Key Takeaways

- AI evidence thresholds filter out any claim lacking robust, machine-verifiable proof, prioritizing documented facts over popularity or intuition.

- Claims about high-risk topics (like clinical, legal, or financial advice) face stricter thresholds, resulting in conservative and generic outputs.

- Fragmented entities, poor citation alignment, or ambiguous provenance lead to automatic claim exclusion, regardless of how credible the source appears.

- The conservative answer bias is intentional: it minimizes the risk of unsupported claims, which makes evidence sufficiency the defining factor in AI responses.

Why do powerful AI systems routinely skip over perfectly factual sources – sometimes even missing what looks “obvious” to a human researcher?

What is AI citation safety?

Definition and scope of citation safety

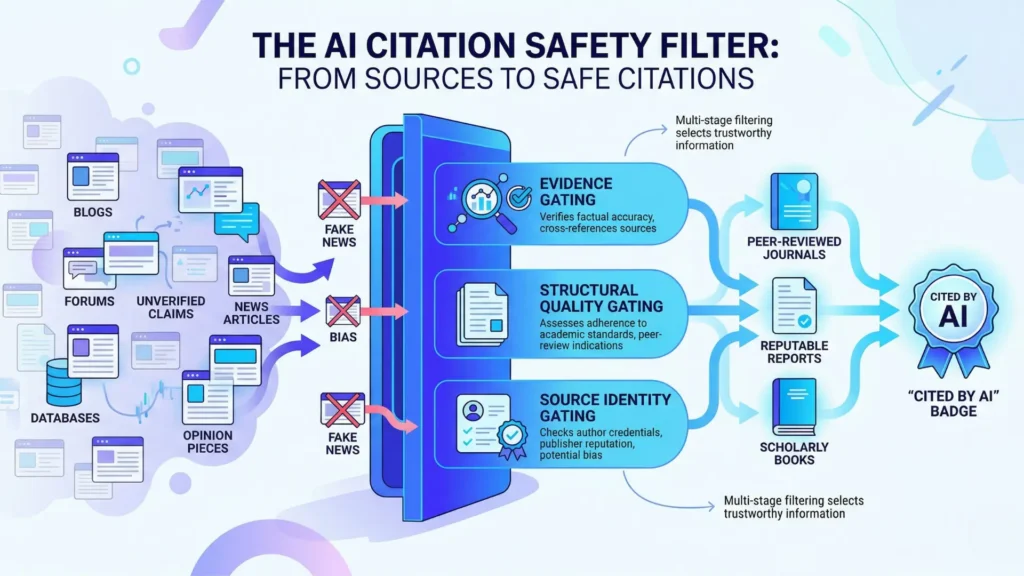

AI citation safety is the process by which an AI system chooses only sources that meet strict evidence, structure, and identity criteria – prioritizing safety over ranking, reach, or popularity.

Think of it this way: An AI model acts less like a savvy SEO specialist and more like an ultra-cautious librarian during a legal trial.

It won’t risk guessing or gambling.

This means many mainstream link-building tactics are simply invisible to it.

Distinguishing citation safety from ranking

Top search rank does not equal citation safety.

In real practice, we’ve seen high-ranking, traffic-heavy web pages get passed over by sophisticated AI systems – simply because they lacked clear evidence or traceable authority.

SEO is about optimizing for algorithms that measure popularity, structure, and relevance signals.

AI citation safety is about evidence, reliability, and minimizing attribution risk.

Like the Sorting Hat at Hogwarts, the AI’s first question is, “Is this source provably trustworthy, or just widely read?”

Many executives are surprised when their #1 article on Google isn’t cited in an AI answer, while their more technical but barely-ranked whitepaper makes the cut.

What citation safety controls vs what it doesn’t

AI Citation Safety: Controls vs What It Does Not Control

| Controls | Does Not Control |
| --- | --- |
| Filters out sources lacking concrete, verifiable evidence | Rankings or search result placement |
| Limits citations to well-structured, publicly accessible content | Content quality beyond evidentiary, structure, and identity checks |
| Prioritizes stable, clearly attributed authors and organizations | Halo effect from popularity or volume |

Controls:

- Filters out sources lacking concrete, verifiable evidence (what can be directly checked wins).

- Limits citations to sources with well-structured, publicly accessible content (messy structure = auto-skip).

- Prioritizes stable, clearly attributed authors and organizations (unclear authorship? Door closed).

Does not control:

- Rankings or search result placement (SEO juice or traffic mean nothing here).

- Content quality beyond evidentiary, structure, and identity checks (beautiful prose with weak facts? Rejected).

- Halo effect from popularity or volume (even “top” blogs can be skipped if structure/identity fail).
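To make the two lists concrete, here is a minimal sketch of the gate as a checklist. It is an illustration of the logic described above, not how any particular AI system is implemented. The `Source` fields and the `passes_citation_gate` function are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Source:
    """Hypothetical summary of a candidate page; every field is illustrative."""
    url: str
    has_verifiable_evidence: bool  # claims link to proof that can be checked
    is_structured: bool            # clear sections, publicly accessible
    has_stable_identity: bool      # consistent author and organization
    search_rank: int               # recorded, but deliberately never checked


def passes_citation_gate(source: Source) -> bool:
    """Return True only if all three controlled checks pass.

    search_rank is ignored on purpose: the gate models evidence,
    structure, and identity, not popularity.
    """
    return (
        source.has_verifiable_evidence
        and source.is_structured
        and source.has_stable_identity
    )


# Assumed example data: a top-ranked post with weak evidence,
# and a low-ranked whitepaper that satisfies every check.
top_ranked_blog = Source("https://example.com/blog", False, True, True, search_rank=1)
whitepaper = Source("https://example.com/whitepaper", True, True, True, search_rank=87)

print(passes_citation_gate(top_ranked_blog))  # False
print(passes_citation_gate(whitepaper))       # True
```

The point of the sketch is the missing line: nothing in the function reads `search_rank`, which is why a #1 article can fail while a barely-ranked whitepaper passes.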

Watching AIs “pass over” what looks like valuable material frustrated several clients this quarter. One was shocked after investing in dozens of high-authority backlinks, only to see their content consistently skipped for citation by GenAI platforms. The reason? Weak source clarity and unstructured pages. Fact: a flashy byline is worthless if evidence and structure are missing.

Every client wants to know: Can our page be cited? The answer lives in safety logic, not in traffic logs.

Imagine a security checkpoint that only lets you through if you’re carrying certified documents – not just a good story or a fancy badge. That’s AI citation conservatism in action.

The real diagnostic opportunity? When you know what AI citation safety gates out, you can fix it. But thinking “ranking” and “citation” use the same playbook means stepping on invisible rakes.

This is why understanding the controls – and the limitations – behind AI citation decisions is non-negotiable for growth teams who expect traction from machine-powered authority.

You’ll see why the answers you get depend entirely on what AI trusts. In the next section, we get precise about what AI citation safety is – and what it isn’t.

This is not / This is

This is not…

What if everything you thought about AI citation safety was upside down?

Here’s what gets left at the door:

- AI citation safety is not a shortcut for search rankings.

- It isn’t about clever tactics, link schemes, or gaming the system. Smart marketers sometimes ask, “Which trick guarantees my source appears?” The real answer: none.

- Citation safety never means “anything goes if it’s on a big site”. High traffic doesn’t guarantee trust – ask anyone who’s seen a top blog misquote the facts.

I’ve seen executives assume a reputable-sounding name is enough, only to discover their content was never cited. The deeper reason? AI requires more than just surface trust signals.

This is…

Now, step into a room with different rules – diagnostic, deliberate, protective of reputation:

- Citation safety is a trust firewall – it rejects sources that can’t be verified, even if they seem popular.

- Think of AI as a bouncer at a high-security club: only sources with clear, cross-confirmed identity and evidence get through. If you’ve ever watched a guest list brush-off, you already get the vibe.

- Diagnostic logic sits at the heart: citation safety operates like an evidence gate that favors conservative sourcing and filters out attribution risk, especially when public-facing answers might ripple into legal, medical, or financial domains.

Clients often expect quick wins from niche mentions.

But we’ve shown, repeatedly, that AI citation conservatism operates by design.

No hidden hacks.

Are you starting to see why high standards – while frustrating – also protect you from the wrong kind of visibility?

The big distinction: citation safety runs on trust, auditing, and risk-averse logic – not old SEO playbooks.

Next, we’ll dig into the telltale patterns of sources that get quietly blocked.

Failure patterns: why AI avoids risky sources

Common Failure Patterns Leading AI to Avoid Sources

| Failure Pattern | Description |
| --- | --- |
| Attribution risk | Claims without verifiable proof are excluded to minimize attribution risk. |
| Structural ambiguity | Unstructured or uncrawlable content prevents AI from extracting useful data. |
| Entity fragmentation | Inconsistent or unclear branding causes the site to be seen as sketchy or unknown. |
| Authority inconsistency | Claims that no other reputable source confirms are passed over, even when accurate. |

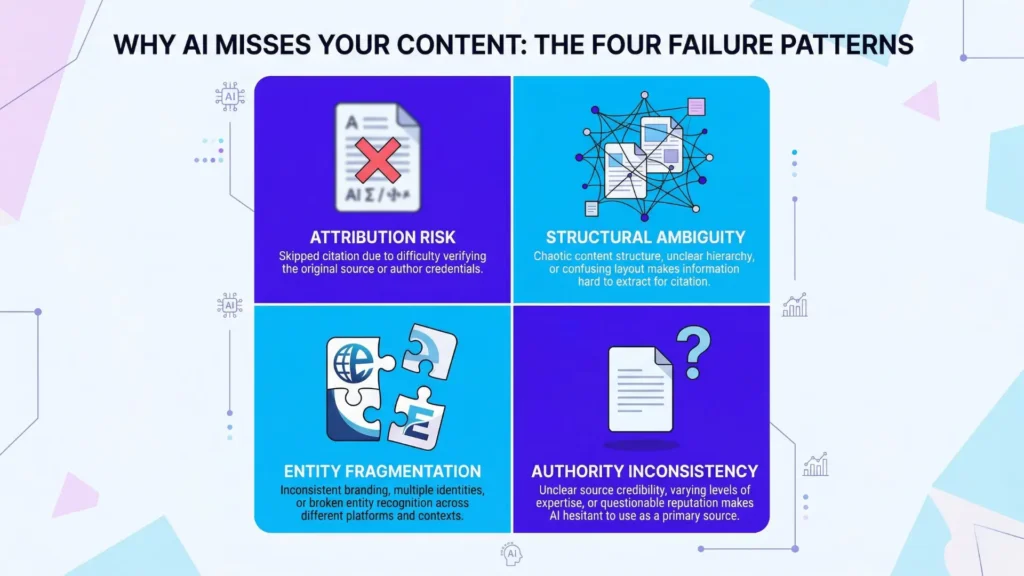

Attribution risk: claims without verifiable proof

Imagine asking an AI for a market stat – and watching it refuse to answer.

Frustrating?

Maybe.

Necessary?

Absolutely.

The number one reason: if the model cannot tie a claim to a source that’s visible, stable, and checkable, it drops the topic (or dodges the specifics).

We once audited a financial Q&A tool that ignored dozens of “insider” blogs, even when those blogs published the numbers first.

Why?

The proof was soft and the sources were unverifiable.

Clients expect numbers that hold up under inspection.

So, unsupported claims get the silent treatment.

Ever notice how AI favors the dull but official links?

That’s the evidence gate in action, a bit like a security scanner tossing out untagged luggage.

Myth: “AI invents citations to look smart”.

Reality: a citation-safe system is built to exclude unchecked claims, not to embellish them.

Structural ambiguity: unstructured or uncrawlable content

Ever felt like your best insights vanish into the void?

If your web page is one giant wall of text, AI systems struggle to extract anything useful.

Crawlers get lost when headers, context cues, and semantic structure are thin or tangled.

With one SaaS client, we saw support docs ignored simply because their PDFs jammed multiple answers into a single block.

No clear section, no discrete ideas – just an informational blur.

When content isn’t structured for clarity, readability, or crawlability, AI coughs up a blank or picks someone else.

Think of it as trying to quote a recipe written on a spilled cup of coffee – good luck getting precise steps.
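One rough way to spot a wall of text before a crawler does is to measure how many words sit under each heading. The sketch below uses Python's standard `html.parser` module. The `SectionCounter` class and `words_per_section` function are hypothetical helpers written for this example, and the idea that fewer words per heading means easier extraction is a working assumption, not a published rule.

```python
from html.parser import HTMLParser


class SectionCounter(HTMLParser):
    """Counts heading tags and words of text in an HTML snippet.

    Simplification: all text nodes are counted, including heading text.
    """

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self) -> None:
        super().__init__()
        self.heading_count = 0
        self.word_count = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self.heading_count += 1

    def handle_data(self, data):
        self.word_count += len(data.split())


def words_per_section(html: str) -> float:
    """Average words per headed section; a page with no headings counts as one section."""
    counter = SectionCounter()
    counter.feed(html)
    counter.close()
    sections = max(counter.heading_count, 1)
    return counter.word_count / sections


# Assumed example pages: the same 600 words, with and without headings.
wall_of_text = "<p>" + "word " * 600 + "</p>"
structured = "".join(
    f"<h2>Question {i}</h2><p>" + "word " * 98 + "</p>" for i in range(6)
)

print(words_per_section(wall_of_text))  # 600.0
print(words_per_section(structured))    # 100.0
```

Both pages carry the same amount of text. Only the second one gives a machine six discrete, labeled answers to pick from.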

Entity fragmentation: unclear or inconsistent branding

You wouldn’t trust financial advice from “Smith&?” or “SthFincorp Inc”.

But that’s exactly what many sites signal to AI: mixed-up names, unclear authorship, orphan pages.

One ecommerce operator changed its brand on three of five key knowledge pages; its product FAQs disappeared from AI-generated answers overnight.

If the system can’t lock onto a single, stable identity, it files the site under “sketchy or unknown”.

This is a major pitfall for brands that replatform or tweak branding without syncing metadata. In practice, AI models only cite clearly identified, consistently labeled sources.

If the digital fingerprints don’t match, the content sits in citation limbo.

Are your digital signals all pointing to you – or are you accidentally a ghost in the system?
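A quick self-audit for this failure is to list the brand name each key page declares and flag the pages that disagree with the majority. This is a minimal sketch under stated assumptions: the page paths and brand names are made up, and `find_inconsistent_pages` is a hypothetical helper, not part of any real tool.

```python
from collections import Counter


def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are not flagged."""
    return " ".join(name.lower().split())


def find_inconsistent_pages(page_brands: dict[str, str]) -> list[str]:
    """Return the pages whose declared brand differs from the most common one."""
    if not page_brands:
        return []
    normalized = {page: normalize(brand) for page, brand in page_brands.items()}
    dominant, _ = Counter(normalized.values()).most_common(1)[0]
    return sorted(page for page, brand in normalized.items() if brand != dominant)


# Assumed example: a rebrand applied to three of five knowledge pages.
page_brands = {
    "/faq": "NorthPeak Gear",
    "/returns": "NorthPeak  gear",
    "/shipping": "NorthPeak Gear",
    "/about": "Acme Supply Co.",
    "/warranty": "Acme Supply Co.",
}

print(find_inconsistent_pages(page_brands))  # ['/about', '/warranty']
```

In a real audit the brand strings would come from page metadata rather than a hand-typed dictionary, but the check stays the same: every page should resolve to one name.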

Authority inconsistency: sources lack cross‑confirmation

Here’s a non-intuitive reality: even factually accurate content gets ignored if it stands alone.

AI citation safety depends on cross-confirmation – finding the same claim echoed in more than one reputable spot.

With B2B SaaS launches, we’ve seen expert-authored guides go undetected because nobody else referenced their findings (yet).

The logic is conservative: if everyone in the crowd points the same way, trust is higher.

If you’re an outlier, even with good data, you stay invisible until others agree.

Picture AI like a financial auditor: one report isn’t enough; you need at least two matching books to pass.

Trust is enforced by the crowd, not just by credentials.
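The “two matching books” idea can be sketched as a count of independent domains that support a claim, with a higher bar for high-risk topics. Everything here is an assumption for illustration: the threshold values, the topic labels, and the `is_cross_confirmed` function are invented, and real systems do not publish such numbers.

```python
from urllib.parse import urlparse

# Hypothetical thresholds: stricter for high-risk topics. Values are illustrative only.
MIN_INDEPENDENT_DOMAINS = {"general": 2, "medical": 3, "legal": 3, "financial": 3}


def independent_domains(urls: list[str]) -> set[str]:
    """Collapse URLs to hostnames so several pages on one site count once."""
    hosts = set()
    for url in urls:
        host = urlparse(url).hostname
        if host:
            hosts.add(host.removeprefix("www."))
    return hosts


def is_cross_confirmed(supporting_urls: list[str], topic: str = "general") -> bool:
    """True when enough distinct domains support the claim for the given topic."""
    required = MIN_INDEPENDENT_DOMAINS.get(topic, MIN_INDEPENDENT_DOMAINS["general"])
    return len(independent_domains(supporting_urls)) >= required


# Assumed example: three URLs, but only two distinct domains.
urls = [
    "https://www.example.com/report",
    "https://example.com/summary",
    "https://example.org/analysis",
]

print(is_cross_confirmed(urls))             # True
print(is_cross_confirmed(urls, "medical"))  # False
```

Two pages on the same site count as one voice. That is why publishing the same finding twice on your own domain does not help, while one independent reference does.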

Spotting these pattern failures early puts you at an advantage.

Next: which contexts raise the bar even higher – and what that means for source trust.

Scientific context and sources

The sources below provide foundational context for the auditing, verification, entity resolution, and structured-content ideas discussed in this article.

- Auditing and Interpretability in AI

Interpretable Machine Learning: A Guide for Making Black Box Models Explainable – Christoph Molnar – Leanpub Academic

Provides a systematic framework for model interpretability, including feature attribution, surrogate models, and explanation methods, which form the basis for auditing, transparency, and verification in AI systems.

https://christophm.github.io/interpretable-ml-book/

- Trust and Verification in Algorithmic Systems

The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation – Miles Brundage et al. – Future of Humanity Institute, University of Oxford

Analyzes security risks from AI misuse and emphasizes the need for verification, controlled access, and monitoring to prevent manipulation and ensure trustworthiness of AI outputs.

https://arxiv.org/abs/1802.07228

- AI in Regulated Industries

Artificial Intelligence in Healthcare: Past, Present and Future – Jiang et al. – Stroke and Vascular Neurology (BMJ)

Explains how clinical AI systems require high-quality data, validation, and interpretability to meet strict evidence standards and ensure safe decision-making in healthcare environments.

https://pubmed.ncbi.nlm.nih.gov/29507784/

- Entity Resolution and Digital Identity

A Survey: Knowledge Graph Entity Alignment Research Based on Representation Learning – B. Zhu et al. – Artificial Intelligence Review (Springer)

Analyzes modern entity alignment methods across knowledge graphs, focusing on how structural and semantic signals are combined to resolve identity across heterogeneous systems, a core problem in digital identity consistency.

https://link.springer.com/article/10.1007/s10462-024-10866-4

- Content Structure, Schema, and Information Retrieval

A Survey on Knowledge Graphs: Representation, Acquisition and Applications – Shaoxiong Ji et al. – arXiv / IEEE Access (survey)

Explains how structured representations (knowledge graphs, schemas, embeddings) improve information retrieval, reasoning, and query answering, demonstrating why structured data enables more reliable access than unstructured documents.

https://arxiv.org/abs/2002.00388

Questions You Might Ponder

Why doesn’t AI cite high-ranking pages if they seem reliable?

AI citation safety is driven by evidence and authoritativeness, not popularity. Even if a page ranks well in search, AI will skip it for citation if it lacks verifiable sources, clear structure, or consistent branding – ensuring only the most trustworthy evidence is referenced.

What factors cause AI to ignore otherwise valuable content?

AI frequently omits content for citation due to weak evidence, unstructured information, unclear authorship, or lack of cross-confirmation. These failures outweigh traffic or brand recognition because citation safety prioritizes reliability and minimized attribution risk above all else.

How does citation safety impact content strategy in regulated industries?

In regulated spaces like healthcare or finance, citation safety enforces higher verification and attribution standards. This means content must provide audit-ready evidence and cross-confirmation or risk being excluded, even if it’s otherwise accurate and from a reputable publisher.

Can improving page structure increase AI citation odds?

Yes – well-organized, schema-friendly content with discrete sections, traceable authorship, and explicit sources can raise the likelihood of being cited by AI. Structure and clarity are critical citation safety signals, often outweighing SEO tactics in machine-driven selection.

Why do some expert white papers never appear in AI-generated results?

AI avoids solo-claim or “orphan” sources, requiring multiple independent confirmations to minimize attribution risk. If expert research is not cross-cited elsewhere or its publisher’s identity is unclear, it’s unlikely the source will pass AI citation safety filters – regardless of factual quality.