What You’ll Learn

technical seo fundamentals

Key Takeaways

- Technical SEO is the access layer that makes pages eligible for search – crawlable, processable, and indexable.

- Discovery is not visibility – a URL can be crawled and still never get stored in the index.

- Invisible pages often trace back to access friction – broken paths, conflicting signals, or content that cannot be processed reliably.

- If access is healthy but growth stalls, the next filter is stability and experience – including Core Web Vitals and consistency over time.

Search engines must find and understand pages first.

Most teams think publishing equals visibility. It doesn’t.

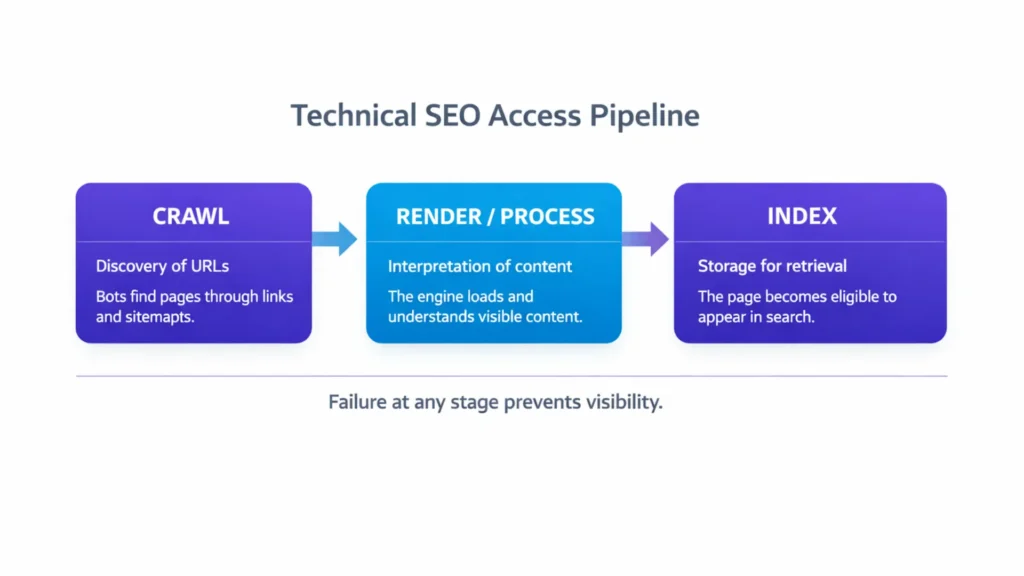

Crawling finds pages, rendering interprets content, and indexing stores it for retrieval.

Imagine writing 300 detailed pages for a new product line and seeing zero of them show up in Google.

You’d assume the content is weak. Often it isn’t. The blocker is access inside your architecture – whether systems can reach, read, and store pages in the index.

Here’s a less obvious truth: a crawler can reach a page but still not index it. Discovery alone doesn’t guarantee visibility. That’s how strong content can sit unused in search.

In practice, small access breaks matter more than thousands of words. One broken path can keep whole sections out of the index.

Hold one idea: technical SEO is about the access pipeline working reliably – crawl, render, index – before anything else can compound.

Not: technical SEO as a list of developer tasks.

Instead: technical SEO as the access layer that makes pages eligible to appear in search.

If you want the full model, start from the SEO system map.

What “technical SEO” really means

Search engines aren’t like visitors clicking your site.

They don’t see your content unless they can discover it, interpret it, and store it. If any step fails, the page stays outside the system. They follow a defined sequence – crawl, render, then index – before anything ever shows up in results.

These are distinct processes that determine whether your content exists in search at all.

Crawling – bots discover URLs through links and sitemaps.

Rendering/processing – the engine interprets what the page actually shows (including JavaScript-driven content).

Indexing – the engine stores the interpreted page so it can be retrieved for queries.

These stages matter because your content’s visibility hinges on them long before relevance or authority play a role. A page can be crawlable yet still not be indexed if the search system can’t interpret it or deems it inaccessible.

Imagine a warehouse full of high-value products that no delivery trucks can reach. No matter how great the stock is, it never leaves the building. Search engines work similarly: unless they can reach, read, and store your pages, your content simply has no presence in their results.

In client work, this shows up fast:

- A site with strong messaging and backlinks still hovers at zero organic traffic because the architecture blocks efficient crawling.

- Another client relaunched with fresh, high-quality content and watched indexing drop by nearly half overnight after structural changes broke key discovery paths.

These aren’t theory. They are access failure dynamics that stop SEO before it starts.

Technical SEO is not a menu of unrelated tactics. It is the infrastructure layer that governs whether search engines can access, interpret, and store your pages. Until that layer is working reliably, relevance and authority won’t produce measurable search visibility. Technical SEO defines whether search systems can even use your site. Next comes the pipeline that decides what gets stored and what gets skipped.

How search systems discover and process pages

By the time an executive notices a problem, the pipeline often broke earlier. The system must discover a page, interpret it, and then decide to store it.

Crawling is the discovery process. Automated bots (like Googlebot) start with known pages and follow links or sitemap entries to find new content. If your site’s structure hides pages deep or behind broken signals, “crawlable” content may never actually be found.
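To make the sitemap side of discovery concrete, here is a minimal sketch that reads the URLs a sitemap declares and lists the ones no internal link points to. The sample sitemap, the `example.com` URLs, and the function names are assumptions made up for illustration. Only the XML namespace comes from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content, for illustration only.
SAMPLE_SITEMAP = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/services/audit</loc></url>
  <url><loc>https://example.com/guides/indexing</loc></url>
</urlset>
"""


def sitemap_urls(xml_text: str) -> list[str]:
    """Return every <loc> value listed in a URL sitemap, in document order."""
    root = ET.fromstring(xml_text.strip())
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def sitemap_only_urls(xml_text: str, internally_linked: set[str]) -> list[str]:
    """URLs that appear in the sitemap but that no internal link points to."""
    return [url for url in sitemap_urls(xml_text) if url not in internally_linked]


# Assumed result of a link crawl: only two of the three URLs are linked.
linked = {"https://example.com/", "https://example.com/services/audit"}
print(sitemap_only_urls(SAMPLE_SITEMAP, linked))
```

A URL that exists only in the sitemap is still discoverable, but it has no internal path leading to it, which is worth knowing before assuming it will be crawled regularly.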

Rendering and processing is what happens next. Once a crawler fetches a page, the engine loads it and interprets the content – this can include JavaScript and dynamic elements – so the system understands what is actually on the page. Without successful rendering, some content might be accessible in theory but not interpretable in practice.
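One rough way to spot a rendering dependency is to check whether a key phrase is already present in the raw HTML, before any script runs. The sketch below does that with Python’s standard `html.parser`. It does not execute JavaScript and says nothing about how any search engine actually renders pages. The two sample documents and the helper names are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, ignoring text inside script, style, and template."""

    SKIPPED_TAGS = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def raw_html_contains(html: str, phrase: str) -> bool:
    """True if the phrase appears in the text of the unrendered HTML."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return phrase.lower() in " ".join(parser.chunks).lower()


# Hypothetical pages: one ships its content in HTML, one builds it with a script.
SERVER_RENDERED = "<html><body><h1>Pricing plans</h1><p>Compare tiers.</p></body></html>"
JS_SHELL = '<html><body><div id="app"></div><script>render("Pricing plans")</script></body></html>'

print(raw_html_contains(SERVER_RENDERED, "Pricing plans"))
print(raw_html_contains(JS_SHELL, "Pricing plans"))
```

If the main content only shows up after scripts run, visibility depends on the rendering step succeeding, which is exactly the “accessible in theory but not interpretable in practice” case.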

Only after discovery and processing does indexing occur.

Indexing makes a page eligible to be retrieved. It does not guarantee rankings.

A clean mental model: crawl finds it, processing understands it, indexing stores it. If any one of these stages fails, the content never reaches real visibility.
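That mental model can be written down as a small check that reports the first stage at which a page drops out. This is only a sketch of the concept: the `PageCheck` fields and the example URLs are assumptions for illustration, not data that any search engine exposes in this form.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageCheck:
    """Hypothetical audit record for one URL."""
    url: str
    reachable: bool              # crawl: a bot can reach the URL
    content_interpretable: bool  # render/process: main content can be read
    indexable: bool              # index: nothing stops the page being stored


def first_failed_stage(page: PageCheck) -> Optional[str]:
    """Return the earliest failing stage, or None if the page passes all three."""
    if not page.reachable:
        return "crawl"
    if not page.content_interpretable:
        return "render"
    if not page.indexable:
        return "index"
    return None


pages = [
    PageCheck("/services/audit", True, True, True),
    PageCheck("/app/dashboard", True, False, True),
    PageCheck("/archive/2019", False, True, True),
]
for page in pages:
    print(page.url, first_failed_stage(page))
```

The order of the checks matters: a page that cannot be reached is reported as a crawl failure even if its later flags look healthy, because the later stages never get a chance to run.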

In real work with clients, we often see this pipeline break at unexpected points. One ecommerce site had thousands of pages, but only a fraction were indexed for weeks because internal linking didn’t guide bots deeper into the structure. Another brand’s new content didn’t show in searches because rendering issues kept key page elements from being interpreted by bots in the first place.

Understanding these stages is not academic – it explains why visibility often stalls even when content quality is strong. Until search systems can reliably discover, process, and store pages, other SEO effort rarely compounds. Crawl, process, index is the order that decides visibility. If one step breaks, results can stall for reasons content cannot fix.

Visibility vs processability

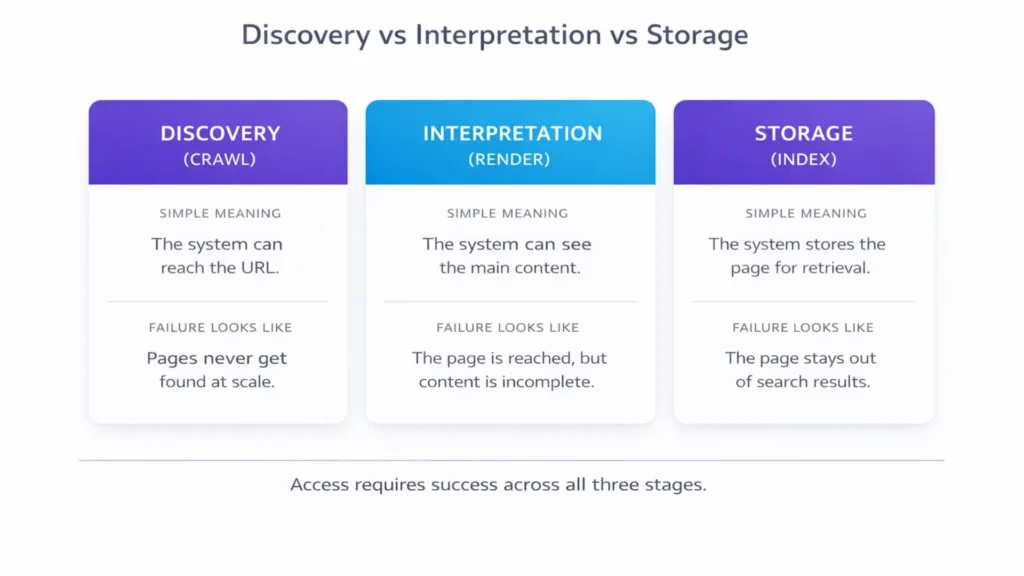

A bot reaching a URL proves access to the address. It does not prove access to the content.

This is where teams get misled: humans see the page, but the system cannot reliably interpret the main content. Crawling is only the first filter. After that comes processing and indexing – and those matter for visibility. These are separate stages that must all succeed before your content appears for users.

Crawlability means search bots can reach your pages by following internal links, sitemaps, and other discovery signals. If a page isn’t reachable, it can’t even be considered for search results.

Myth: “If Google crawled it, it’s in Google”.

Reality: crawling proves discovery, not storage.

Crawlability – the bot can reach the URL.

Processability – the system can interpret the main content.

Indexability – the system can store the page for retrieval.

| Stage | Simple meaning | What failure looks like |
| --- | --- | --- |
| Discovery (Crawl) | The system can reach the URL. | Pages never get found at scale. |
| Interpretation (Render/Process) | The system can see the main content. | The page is reached, but content is incomplete or unclear. |
| Storage (Index) | The system stores the page for retrieval. | The page stays out of results even when searched directly. |

This gap is common with JavaScript-heavy pages, blocked resources, and delayed content loading. The URL loads for a user, but the system sees a partial page, so it never treats it as stable, indexable content.
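Blocked resources are one of the easier causes to check. The sketch below uses Python’s standard `urllib.robotparser` to list which script or style URLs a robots.txt file disallows for a given user agent. The robots.txt rules and resource URLs are hypothetical. Note that Python’s parser applies rules in file order, which can differ from how a specific search engine resolves overlapping Allow and Disallow rules, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks the directory holding the site's scripts.
SAMPLE_ROBOTS_TXT = """
User-agent: *
Disallow: /assets/js/
"""


def blocked_resources(robots_txt: str, resource_urls: list[str],
                      user_agent: str = "Googlebot") -> list[str]:
    """Return the resource URLs that robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


resources = [
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/site.css",
]
print(blocked_resources(SAMPLE_ROBOTS_TXT, resources))
```

If a script that builds the main content shows up in this list, the page can load fine for users while the system only ever sees the empty shell.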

Indexing is the stage after processing where the system stores and organizes the page in its searchable database. A crawled page that never gets indexed will never show up in results, no matter how good the content is.

Here’s a question many leaders think but rarely articulate: Why do pages get discovered but never appear for relevant searches? The answer is often that the system couldn’t process and index them after discovery – not that the content was weak.

In client work, we’ve seen cases where Google crawled most pages but indexed only about 15% because key content depended on scripts that were not processed cleanly. In other cases, crawlable pages stayed invisible until internal signals stopped conflicting, with measurable improvement over 6 to 8 weeks.

So if bots can reach pages but you still see no search traffic, the missing link isn’t relevance or backlinks – it’s processability and proper indexing. A page must be discoverable, interpretable, and indexable before it can appear for real user queries.

Crawl access is necessary, but it is not the finish line. Next comes why pages get excluded even after discovery.

Why content can be ignored

Search engines sometimes ignore content even after they’ve crawled it. That might surprise you, but it happens all the time. A page can be reachable by bots, yet never show up in search results if the system never indexes it. Indexing is the step where crawled pages are stored in the search engine’s database and become eligible to appear for queries.

Crawlers might visit pages and fetch their HTML, but the system still decides whether to analyze, interpret, and keep them. If the page fails those checks or sends conflicting signals, it may stay out of the index. Often the system clusters similar URLs and selects one canonical version to keep. The others can remain crawled but excluded, even when they look “fine” in a browser.
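How a search engine picks its canonical is not something you can reproduce from the outside, but you can audit the signals your own pages declare. The sketch below groups URLs by the canonical each one declares and flags targets that do not point to themselves. The URL-to-canonical mapping is hypothetical crawl data, and the function names are illustrative.

```python
from collections import defaultdict

# Hypothetical crawl output: each URL mapped to the canonical it declares.
DECLARED_CANONICALS = {
    "/shoes": "/shoes",
    "/shoes?sort=price": "/shoes",
    "/shoes?ref=nav": "/shoes",
    "/boots": "/boots-new",
    "/boots-new": "/boots",
}


def canonical_clusters(declared: dict[str, str]) -> dict[str, list[str]]:
    """Group URLs under the canonical target they declare."""
    clusters: dict[str, list[str]] = defaultdict(list)
    for url, canonical in declared.items():
        clusters[canonical].append(url)
    return dict(clusters)


def conflicting_targets(declared: dict[str, str]) -> list[str]:
    """Canonical targets that are unknown or that declare a different canonical."""
    return sorted({target for target in declared.values()
                   if declared.get(target) != target})


print(canonical_clusters(DECLARED_CANONICALS)["/shoes"])
print(conflicting_targets(DECLARED_CANONICALS))
```

In this sample the `/shoes` variants agree on one version, while `/boots` and `/boots-new` point at each other. That second pattern is the kind of conflicting signal that leaves the choice to the system.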

You might be asking yourself: Why would a good page be ignored after discovery? The answer isn’t always about content quality. Sometimes the technical or structural setup makes the system decide the page doesn’t belong in the index. This means that even excellent content can remain hidden from searchers because of processing and signal issues – not because it lacks value.

The pattern looks simple from the outside: the URL exists, but the system chooses not to keep it.

We’ve seen this play out in real work:

- A B2B platform published hundreds of service pages, and almost all were crawled by bots, but fewer than 25% ever appeared in search because indexing was inconsistent across structural variants. Once internal signals stopped conflicting, indexed coverage rose over 10 weeks.

- Another client had content that crawlers reached easily, yet most pages were flagged as non-indexable in tools until we clarified internal link patterns and canonical signals (a technical signal telling search engines which page version to keep). After those signals stopped conflicting, index coverage improved noticeably.

Most exclusions happen because signals conflict, the system picks a different version, or the content cannot be processed consistently. The result looks the same: crawled pages that never become searchable.

So if you see pages being crawled but not listed in search results, it’s not a mystery – it’s a sign that something after discovery is blocking their path. For search engines to show content, they must crawl, process, and index it – and only then can relevance and authority begin to matter in matching queries.

A page can be crawled and still stay out of search. Next comes whether structure and consistency make it easy to keep and revisit.

The role of site structure and architectural clarity

Search engines don’t explore your site randomly. They follow paths created by your internal links and hierarchy to reach and process content. When your site’s layout lacks a clear structure, bots may crawl only parts of it while ignoring others – especially pages buried too deep or without meaningful internal connections.

Site structure is more than navigation for visitors. It’s the map crawlers use to discover and prioritize content. When you organize content into clear groups with direct links between related pieces, search engines move through your site more efficiently. Stability matters too. When the same URL patterns and internal links stay consistent over time, the system trusts the structure and revisits key areas more predictably.

Think in paths, not pages. Clear internal paths help the system discover, prioritize, and revisit important URLs.

Internal links act like signposts. They direct crawlers from one page to the next and signal which content matters most. Pages with multiple high-quality incoming internal links are more likely to be crawled frequently and indexed reliably than pages with few or no such links.
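Counting incoming internal links is straightforward once you have a link map. The sketch below takes a hypothetical map of page to outgoing internal links, counts distinct linking pages per URL, and lists pages with no incoming links at all. It measures quantity only: it makes no attempt to judge link quality, and the paths are made up.

```python
from collections import Counter

# Hypothetical internal link map: page -> pages it links to.
LINKS = {
    "/": ["/services", "/blog"],
    "/services": ["/services/audit", "/"],
    "/blog": ["/services/audit"],
    "/services/audit": [],
    "/old-landing": ["/"],
}


def inbound_link_counts(links: dict[str, list[str]]) -> Counter:
    """Count how many distinct pages link to each URL (self-links ignored)."""
    counts = Counter({page: 0 for page in links})
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts


def orphan_pages(links: dict[str, list[str]], entry: str = "/") -> list[str]:
    """Known pages that no other page links to, excluding the entry page."""
    counts = inbound_link_counts(links)
    return sorted(page for page, n in counts.items() if n == 0 and page != entry)


print(inbound_link_counts(LINKS)["/services/audit"])
print(orphan_pages(LINKS))
```

Pages in the orphan list can only be found through sitemaps or external links, so they are the first candidates to check when deep content is crawled rarely.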

In practice, we’ve seen this play out in client work:

- A global services site clarified its internal link references so that core service pages were referenced consistently from related content. Within eight weeks, deep content that was previously crawled but rarely indexed began appearing regularly in index reports.

- A SaaS platform reduced crawl depth by moving key pages closer to the homepage. Pages that were previously four or more clicks away became reachable in two clicks, improving crawl efficiency and index coverage.

When structure is weak, bots spend time on low-value paths and miss important pages. This doesn’t mean those pages are bad content – it means they are poorly integrated into the site’s architectural logic.

Clear architecture also supports crawl distribution. The closer an important page is to the “surface” of your site – fewer clicks from the main entry points – the more reliably search engines can access it during regular visits. A shorter path increases the chances your most valuable pages stay indexed and visible.
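“Clicks from the main entry points” is a shortest-path question, so a breadth-first search over the internal link map answers it. The sketch below computes click depth from the homepage for two hypothetical structures, one before and one after adding a shorter path. All paths are invented for illustration.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Breadth-first search: fewest clicks needed to reach each page from start."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical structure where a key page sits four clicks deep.
BEFORE = {
    "/": ["/products"],
    "/products": ["/products/suite"],
    "/products/suite": ["/products/suite/plans"],
    "/products/suite/plans": ["/pricing/enterprise"],
}

# Same site after adding a pricing hub linked from the homepage.
AFTER = {**BEFORE, "/": ["/products", "/pricing"], "/pricing": ["/pricing/enterprise"]}

print(click_depths(BEFORE)["/pricing/enterprise"])
print(click_depths(AFTER)["/pricing/enterprise"])
```

Pages that never appear in the result are unreachable from the start page through internal links, which is a separate problem from being deep.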

Even if content is excellent, poor structure can hide it. Strategic internal links and logical hierarchy help search systems discover and track your pages efficiently – and that’s a prerequisite for ongoing growth and visibility. Structure is the map the crawler follows and the context the system infers. Next are the failure modes that explain “we published, but nothing moved”.

Access failure modes (conceptual diagnostics only)

Imagine search visibility as a filter path rather than a single gate. A page can be seen by search systems and still never show up to your visitors. That’s because crawling and indexing are two separate steps – a crawler can discover a URL, but the system may choose not to store it for search retrieval. A simple lens: discovery failures, interpretation failures, and storage failures.

A common sign of this is the status “Crawled – currently not indexed” in tools like Google Search Console, which means the page was fetched but not added to the search index. That page will not appear in search results until it enters the index.

So what specific barriers stop pages from ever being seen even after discovery? Here are the main access failure modes that can silently block visibility:

- Index control directives – Pages might include tags or instructions that tell search systems not to store them, even if crawled. This is often intentional for back-end or private pages, but mistakes can hide content you want visible.

- Duplicate or conflicting signals – If multiple pages send inconsistent choices about which version should be indexed, or if canonical tags point elsewhere, search systems may skip indexing some of them.

- Blocked resources or rendering problems – Even when crawlers reach a page, critical resources like scripts or styles may be blocked or difficult to process. If the system can’t interpret the content cleanly, it may decide the page isn’t indexable.

- Crawl prioritization and capacity – Search systems don’t guarantee every crawled page will be indexed. They evaluate which content is worth storing based on many factors, including clarity, signals, and other prioritization rules, so some pages simply don’t get pulled into the index queue.
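The first failure mode in the list above, index control directives, is the simplest to test for. The sketch below looks for `noindex` (or `none`, which implies it) in a robots meta tag and in an `X-Robots-Tag` response header. It is a simplified check: it ignores user-agent prefixes inside the header value, and the sample HTML, headers, and function names are hypothetical.

```python
from html.parser import HTMLParser
from typing import Optional

BLOCKING_DIRECTIVES = {"noindex", "none"}  # "none" means noindex plus nofollow


class RobotsMeta(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {key: (value or "") for key, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            content = attributes.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def is_noindex(html: str, headers: Optional[dict[str, str]] = None) -> bool:
    """True if the meta tags or the X-Robots-Tag header ask not to be indexed."""
    parser = RobotsMeta()
    parser.feed(html)
    found = set(parser.directives)
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            found |= {d.strip().lower() for d in value.split(",")}
    return bool(found & BLOCKING_DIRECTIVES)


print(is_noindex('<meta name="robots" content="noindex, follow">'))
print(is_noindex("<p>Clean page</p>", {"X-Robots-Tag": "noindex"}))
```

Checking the header matters because a page can look clean in its HTML and still carry the directive at the server level, which is a common way for the mistake to go unnoticed.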

These failure modes show you that access isn’t a single event but a sequence of filters: discovery, rendering/processing, and indexing. A page might pass the first filter but stop before it ever becomes visible to users.

In client work, we’ve seen cases where 70% of pages were crawled but only 30% were indexed due to conflicting signals, and other cases where script-loaded content never moved past processing even though users could see it.
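Percentages like these are easier to compare when the denominator is explicit. The sketch below assumes both figures are shares of all known URLs and uses a made-up total of 1,000 pages. The source does not say which base was used, so that reading is an assumption.

```python
def coverage(total_urls: int, crawled: int, indexed: int) -> dict[str, float]:
    """Crawl and index rates, plus the share of crawled pages that were indexed."""
    if total_urls <= 0 or crawled <= 0:
        raise ValueError("total_urls and crawled must be positive")
    return {
        "crawl_rate": crawled / total_urls,
        "index_rate": indexed / total_urls,
        "indexed_share_of_crawled": indexed / crawled,
    }


# Hypothetical counts matching a 70% crawled, 30% indexed scenario.
report = coverage(total_urls=1000, crawled=700, indexed=300)
for name, value in report.items():
    print(f"{name}: {value:.1%}")
```

Under that assumption, well under half of the crawled pages reached the index, which points at what happens after discovery rather than at discovery itself.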

Even if search systems find pages, if subsequent processing and storage filters reject them, those pages remain missing in search results – and your investment in content never delivers the visibility you expect.

These patterns explain why effort and visibility diverge. If access is not the blocker, experience signals come next.

When access exists but results still stall

Search systems can find and even index your pages while you still see no meaningful visibility or growth. This can feel frustrating because the basic access requirements seem met – bots can crawl, content is indexed, but organic search still underperforms.

So what’s really happening? Search engines don’t stop at indexing. They also evaluate signals after indexing that influence whether a page gets shown for relevant queries – including structural clarity, internal link strength, and how confidently the system believes the page should compete for attention.

You might ask yourself: My pages are being indexed – so why don’t they show up in search results? One big reason is that after indexing, the system continues to assess how well a page fits its database and whether it deserves regular visibility for user queries. If a page is indexed but not consistently picked for queries, it’s often because downstream signals don’t give it search confidence.

Indexing puts a page in the database. Ranking decides whether it gets served for real queries. If signals stay weak or inconsistent, the page can remain eligible but rarely selected.

In practical work with clients, we’ve seen sites where index reports looked fine – but the pages never started ranking until the structural signals were clarified and strengthened. One media client’s authority pages were indexed, but they weren’t being shown for target queries because internal link structures diluted relevance signals. Once internal link hierarchy became clearer, visibility improved within a few months. Another B2B platform saw spikes in impressions after clarifying how indexed service pages related to high-priority category pages.

When access exists but results still stall, stability and experience become the next filter. That is where Core Web Vitals start acting like a second gate for visibility, and it marks the handoff from access to experience.

Scientific context and sources

The references below describe how modern search systems discover, render, evaluate, and index content. They provide foundational and first-party explanations for the mechanisms described in this article.

- Web search crawling and indexing architecture

The Anatomy of a Large-Scale Hypertextual Web Search Engine – Brin, S., Page, L. – Stanford University (1998)

Foundational paper describing how large-scale search engines crawl, index, and rank web documents at system level.

http://infolab.stanford.edu/~backrub/google.html

- Distributed crawling systems

Mercator: A Scalable, Extensible Web Crawler – Heydon, A., Najork, M. – Compaq Systems Research Center

Describes large-scale crawler architecture, URL discovery, prioritization, and processing pipelines.

http://www.cs.ucr.edu/~vagelis/classes/CS242/publications/scalable-crawler.pdf

- JavaScript rendering and search processing

Dynamic Rendering and JavaScript SEO – Google Search Central Documentation

Explains how search engines render JavaScript content before indexing and how processing stages affect visibility.

https://developers.google.com/search/docs/crawling-indexing/javascript/javascript-seo-basics

- Crawl budget and indexing prioritization

Large-scale Incremental Processing Using Distributed Transactions and Notifications – Google Research

Explains distributed processing and document updates in large-scale systems, supporting how indexing prioritization works.

https://research.google/pubs/pub36726/

Questions You Might Ponder

What is technical SEO?

Technical SEO refers to the infrastructure that allows search engines to crawl, render, and index your pages. It focuses on access and eligibility, not keywords or copy. If search systems cannot reliably discover and process your content, it cannot appear in search results regardless of authority or content quality.

Why are my pages crawled but not indexed?

Crawled but not indexed means Google discovered your page but chose not to store it. Common causes include conflicting canonicals, noindex directives, duplicate variants, weak internal linking, or JavaScript rendering issues. Discovery alone does not guarantee storage. Indexing requires clear, consistent, and processable signals.

What is the difference between crawling, indexing, and ranking in SEO?

Crawling is discovery. Indexing is storage. Ranking is retrieval and position for queries. A page can be crawlable but not indexed, or indexed but rarely shown. Each stage depends on different signals, and failure at any stage prevents consistent search visibility.

How does site structure affect SEO crawling and indexing?

Site structure determines how efficiently bots discover and revisit content. Shallow hierarchy, logical categories, and strong internal links improve crawl distribution and index consistency. Deep nesting, orphaned pages, or conflicting internal signals reduce crawl priority and can keep important pages inconsistently indexed.

Why are my pages indexed but not ranking?

Indexing makes a page eligible, not competitive. Rankings depend on relevance, internal authority, clarity of signals, and comparative strength. Indexed pages may sit beyond page one if titles mismatch queries, internal links dilute importance, or competing pages offer stronger structure and trust signals.