Key Takeaways

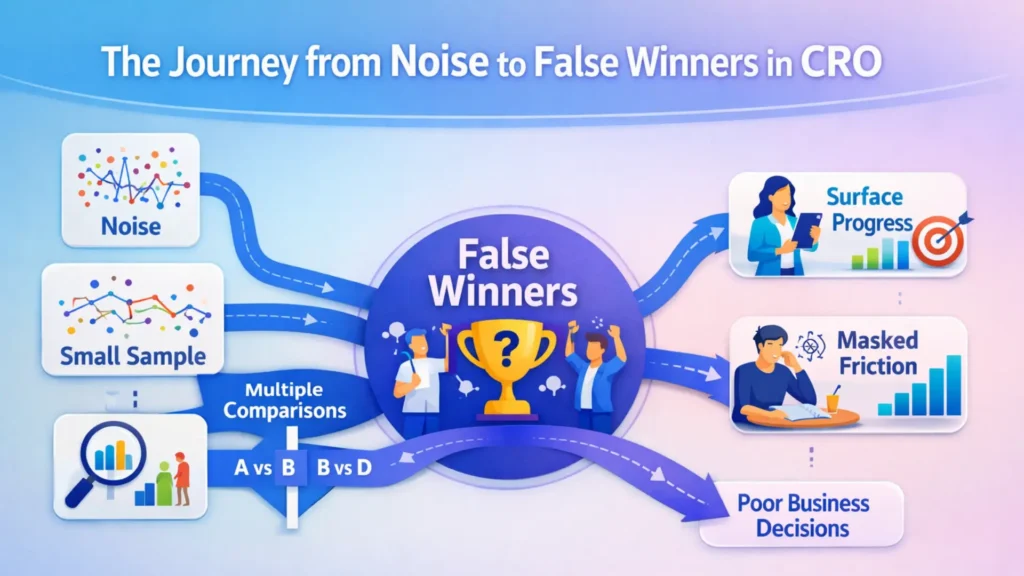

- Small sample sizes and volatile traffic drive false winners in CRO through random noise, not true behavioral change.

- Running many tests or variants sharply increases the risk of false positive wins, misleading teams into flawed decisions.

- Conversion increases that lack quality or intent alignment often harm downstream revenue, making statistical wins counterproductive.

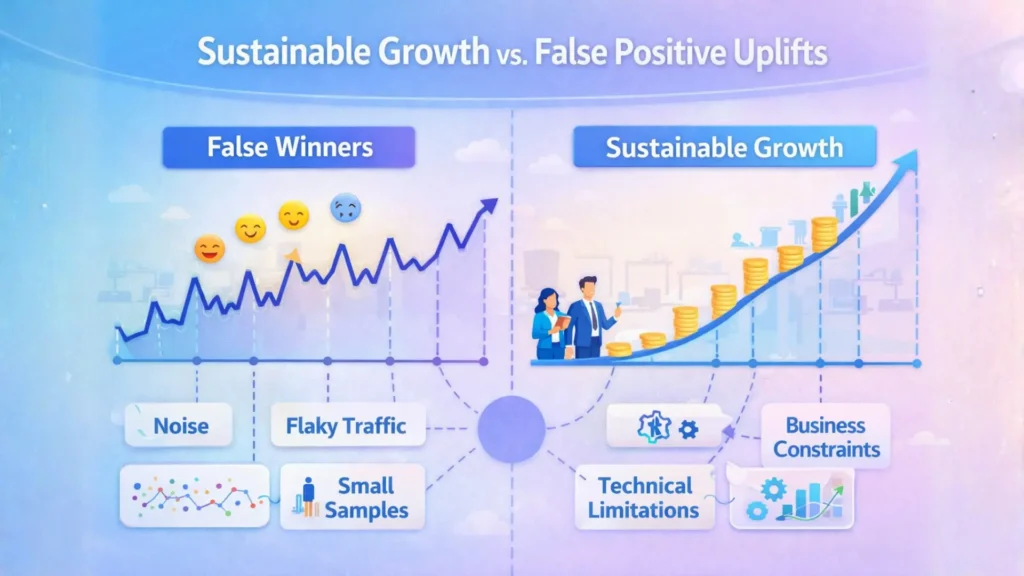

- Sustainable CRO improvement relies on diagnosing and resolving systemic constraints, not chasing dashboard uplifts from fleeting statistical spikes.

Most teams don’t realize just how easy it is to “win” a test due to nothing but statistical static.

A few random clicks on a slow Monday, a sudden spike from an email blast, or even the weather – these can all tilt a conversion rate and create the illusion of lift.

If your traffic base is small or unstable, that fleeting blip can masquerade as a breakthrough.

Why noise and limited data can create deceptive test results

A/B testing is often treated as a truth machine.

But with small samples, the reality is closer to reading tea leaves.

One client celebrated a 14% uplift after a week – until we zoomed out and saw that the extra “wins” were concentrated in a two-hour window, when a social mention triggered a burst of bot clicks.

The myth: every change in conversion rate means your hypothesis was right.

The reality: most short-run uplifts are statistical mirages, driven more by randomness than by real user behavior.

Why minor traffic shifts can look like wins

Think of it like tossing a coin just five times.

Heads might come up four times, but that doesn’t mean the coin is weighted; it’s just noise.
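To put a number on the coin example, here is a minimal sketch using the binomial distribution. The helper name `prob_at_least` is an assumption made for illustration, not part of any testing tool.

```python
from math import comb

def prob_at_least(k: int, n: int, p: float = 0.5) -> float:
    """Probability of k or more successes in n independent trials."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

# Chance that a fair coin shows 4 or 5 heads in 5 tosses
print(prob_at_least(4, 5))  # 0.1875
```

A perfectly fair coin produces this "lopsided" result almost one time in five, which is why a handful of observations says very little about the coin.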

Traffic volatility amplifies this effect: a single influencer tweet or an accidental campaign can swamp your test, and without enough data, you won’t spot the difference.

That’s why “mini wins” in low traffic are the most dangerous.

They feel actionable, but they’re really just forecasting clouds with five days of weather logs.

The key repeatable insight: small sample volatility frequently produces false positives in CRO tests, not insights worth scaling.
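The effect can be sketched with a small simulation of A/A tests, where both arms are identical and any "lift" is pure noise. Everything here is an assumption chosen for illustration: the 5% true conversion rate, the 20% lift threshold, the visitor counts, and the function name `share_of_fake_lifts`.

```python
import random

def share_of_fake_lifts(visitors_per_arm, true_rate=0.05, min_lift_pct=20,
                        n_tests=500, seed=7):
    """Simulate A/A tests and return the share in which arm B still shows
    at least `min_lift_pct` percent relative uplift over arm A."""
    rng = random.Random(seed)
    fake_wins = 0
    for _ in range(n_tests):
        conv_a = sum(rng.random() < true_rate for _ in range(visitors_per_arm))
        conv_b = sum(rng.random() < true_rate for _ in range(visitors_per_arm))
        # Integer comparison avoids float rounding at exactly the threshold.
        # Tests where arm A has zero conversions are not counted as wins.
        if conv_a > 0 and conv_b * 100 >= conv_a * (100 + min_lift_pct):
            fake_wins += 1
    return fake_wins / n_tests

small = share_of_fake_lifts(visitors_per_arm=200)
large = share_of_fake_lifts(visitors_per_arm=5000)
print(f"200 visitors per arm:  {small:.0%} of A/A tests show a 20%+ 'lift'")
print(f"5000 visitors per arm: {large:.0%} of A/A tests show a 20%+ 'lift'")
```

Under these assumptions, the small-sample runs should show an apparent double-digit lift far more often than the large-sample runs, even though nothing differs between the arms.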

How repeated testing magnifies the chance of error

Checklist to Evaluate Sustainability of CRO Test Wins

| Criteria | Purpose | Questions to Ask |
|---|---|---|
| Multi-cycle Validation | Ensure the win holds across repeated test cycles | Does the variant still perform after multiple test runs? |
| Cross-channel Stability | Check if uplift sustains across different traffic sources | Does the effect persist beyond a single acquisition channel? |
| Cohort Consistency | Verify consistent impact for various user segments | Is the uplift stable across customer groups or regions? |

You might think that running more tests means more learning.

In reality, every new test is another roll of the dice – and with each roll, the odds of a statistical “win” that’s actually a fluke keep climbing.

This is the multiple comparisons problem: run enough experiments, and one of them will eventually say you struck gold, simply by chance.

We worked with a SaaS firm that ran 40 headline tests in a quarter.

Four tests “won big” – but revenue didn’t budge.

Why?

Those apparent breakthroughs were pure accidents of random variation, not repeatable opportunities.

The analogy: testing outcomes at scale is like fishing with dozens of lines in a small pond – one line is bound to snag a fish, but it tells you nothing about the health of the ecosystem.

Ask yourself: with each additional variant, are you multiplying learning or just multiplying risk?

Most teams miss this, chasing surface-level movement that doesn’t survive contact with reality the next month.

Don’t mistake noise for progress.

If it’s easy to get a statistical win, it’s even easier to get lulled into the wrong decision.

Every time you test multiple variants or run many tests at once, you multiply your chance of a false win – a risk known as the “family-wise error rate” in statistics.

This means running more comparisons makes at least one false positive much more likely, unless you tighten your significance threshold to account for the number of comparisons.
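As a rough sketch of how fast that risk grows, the family-wise error rate for independent tests with no real effect is 1 - (1 - alpha)^m. The 5% significance threshold below is an assumption (the article does not state one), and the 40 tests mirror the headline-test example above.

```python
def family_wise_error_rate(n_tests: int, alpha: float = 0.05) -> float:
    """Chance of at least one false positive across independent null tests."""
    return 1 - (1 - alpha) ** n_tests

n_tests, alpha = 40, 0.05  # alpha is an assumed threshold, not from the article

fwer = family_wise_error_rate(n_tests, alpha)
expected_false_wins = n_tests * alpha
bonferroni_alpha = alpha / n_tests  # stricter per-test threshold

print(f"Chance of at least one false win: {fwer:.1%}")  # 87.1%
print(f"Expected false wins: {expected_false_wins:.1f}")  # 2.0
print(f"Bonferroni per-test threshold: {bonferroni_alpha:.5f}")  # 0.00125
```

The Bonferroni adjustment shown here is one simple, conservative correction; it keeps the overall risk near the original threshold at the cost of making each individual test harder to pass.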

Similarly, tracking too many metrics at once produces more results that look significant just by chance; the share of such flukes among your “significant” findings is your “false discovery rate”.

Segment-level analysis – like slicing results by region or device – can seem to offer insight, but often just increases the risk of chasing statistical ghosts.

Cherry-picking apparent wins across segments is a classic over-testing trap, inflating false discoveries instead of surfacing genuine opportunities.
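One widely used guard against this is the Benjamini-Hochberg procedure, which limits the expected share of false discoveries across a batch of comparisons. The sketch below is a plain-Python illustration; the p-values are made up for the example and the function name is an assumption.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return a list of booleans: True where the result survives
    Benjamini-Hochberg control of the false discovery rate at level q."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose p-value is at most (k / m) * q.
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            max_rank = rank
    # Every result ranked at or below max_rank counts as a discovery.
    keep = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            keep[idx] = True
    return keep

# Hypothetical p-values from ten segment-level comparisons
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]

naive_wins = sum(p < 0.05 for p in p_values)
surviving = sum(benjamini_hochberg(p_values, q=0.05))
print(naive_wins, surviving)  # 5 2
```

In this made-up batch, five segments clear the usual 0.05 bar on their own, but only two survive once the number of comparisons is taken into account.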

When a ‘statistical win’ masks a wrong business decision

The difference between CRO leaders who drive profit and those who chase vanity metrics isn’t technical skill – it’s how they define value.

Most teams celebrate a test winner when the conversion rate ticks up, but what if that win actually moves the business backward?

The result looks like progress but quietly sabotages growth.

Why higher conversions can mean lower value

A surge in conversions does not always mean more value.

We once saw a B2B client double their demo requests after tweaking a call-to-action, but sales flagged that most new leads had no budget or buying intent.

Conversion rate soared, but both close rates and deal values plunged.

More was, in reality, worse.
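A back-of-the-envelope sketch shows how that can happen. All figures below are hypothetical, chosen only to mirror the pattern (conversion doubles while close rate and deal value fall); they are not the client's data.

```python
def revenue_per_visitor(conversion_rate, close_rate, avg_deal_value):
    """Expected revenue per visitor: lead rate x close rate x deal size."""
    return conversion_rate * close_rate * avg_deal_value

# Hypothetical numbers for illustration only
control = revenue_per_visitor(conversion_rate=0.02, close_rate=0.20, avg_deal_value=10_000)
variant = revenue_per_visitor(conversion_rate=0.04, close_rate=0.08, avg_deal_value=8_000)

print(f"control: ${control:.2f} per visitor")  # control: $40.00 per visitor
print(f"variant: ${variant:.2f} per visitor")  # variant: $25.60 per visitor
```

With these assumed inputs, the variant "wins" on conversion rate yet earns less per visitor, which is the metric the business actually feels.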

The myth: all conversion lifts are wins.

In practice, chasing absolute numbers in CRO can hurt revenue per lead or pollute pipelines.

The high-score mentality – max out form fills or signups – treats leads like tokens rather than people with real intent.

Imagine swapping dollars for pennies at higher speed; your dashboard glows, but profit disappears.

What if “winning” a test just means accelerating the wrong traffic?

Direct experience makes the pain clear.

In SaaS, we’ve watched upgrades spike after optimizing plan comparison copy – then churn wipe out the net gain.

The short-term optics lured stakeholders into expanding the test, doubling down on a move that looked like momentum but created payment friction downstream.

The repeatable insight: conversion lifts that aren’t matched by downstream value are just noise in disguise.

When novelty bias fades, so does apparent progress

Novelty bias makes test wins look larger than they are.

When a new headline or unexpected CTA grabs attention, the numbers spike.

But as users adapt, results tend to fade, often returning to baseline within weeks.

Like a flash sale, the jolt doesn’t last – and rarely sustains business growth.

We’ve run tests where an unconventional offer generated a 25% signup boost – only for that lift to evaporate within a month.

Users who stuck around long enough adapted and stopped noticing.

Novelty bias is silent but powerful; it gives the illusion of change while masking fragility.

Is the win real, or just a mirage caused by the first-time effect?
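One simple way to probe that question is to track the lift week by week instead of as a single pooled number. The sketch below uses hypothetical weekly totals and an assumed rule of thumb (flag the win if the final week keeps less than half of the first week's lift); both are illustrative choices, not an established standard.

```python
# Hypothetical weekly totals:
# (control visitors, control signups, variant visitors, variant signups)
weekly_results = [
    (1000, 50, 1000, 63),
    (1000, 50, 1000, 57),
    (1000, 50, 1000, 52),
    (1000, 50, 1000, 50),
]

def weekly_lifts(rows):
    """Relative lift of variant over control for each week."""
    lifts = []
    for c_n, c_conv, v_n, v_conv in rows:
        control_rate = c_conv / c_n
        variant_rate = v_conv / v_n
        lifts.append(variant_rate / control_rate - 1)
    return lifts

def looks_like_novelty(lifts, keep_fraction=0.5):
    """Flag a win whose final-week lift is below keep_fraction of week one."""
    return lifts[0] > 0 and lifts[-1] < keep_fraction * lifts[0]

lifts = weekly_lifts(weekly_results)
for week, lift in enumerate(lifts, start=1):
    print(f"week {week}: {lift:+.1%}")
print("possible novelty effect:", looks_like_novelty(lifts))
```

A lift that shrinks steadily toward zero, as in this made-up series, is a reason to keep the test running rather than to ship the variant.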

Rely on this analogy: Temporary wins from novelty are like caffeine for your funnel – an immediate jolt, but the effect wears off and sometimes leaves you worse off than before.

The one question leaders should ask: Will this result stand up to time and repeated exposure, or is it just today’s sugar rush?

Here’s the core takeaway: A statistical win means nothing if it doesn’t compound into better revenue, higher customer value, or more reliable growth.

Progress worth betting on endures – temporary upticks and empty volume do not.

What this reveals about systemic CRO constraints

Most teams think their last “winning” test proves the system works.

Here’s the shock: those easy wins often signal deeper, hidden limits – ones that small tweaks simply can’t break.

The real obstacle isn’t your page or headline.

It’s that the machinery upstream – offer, messaging, and buyer intention – sets an invisible ceiling you rarely see in a dashboard.

Learning from wins that don’t compound

A single A/B test where Variant B nudges conversion rate upwards feels like progress, but ask yourself: when was the last time one “win” triggered a domino effect across the rest of your funnel?

Too often, businesses rack up isolated improvements – each a minor bump never quite stacking with the next.

Like adding an extra gear to a worn engine, these changes hum for a moment, then stall against friction that never moved.

We’ve seen clients rush to scale a headline tweak that boosted clicks by 8%.

Yet three months later, revenue didn’t budge – lead quality stagnated, sales pipelines slowed, and decision cycles stayed stuck.

Why?

Because that headline didn’t address the mismatch between audience needs and the value proposition.

Structural blockers – like unclear offers or off-target acquisition channels – absorb page-level lifts like a sponge.

Conversion optimization as practiced (test, tweak, repeat) is too often like patching leaks downstream while upstream pipes remain cracked.

Until the intent, offer, and incentive structure is right, small wins won’t compound; they simply dissolve under bigger misalignments.

When dashboards fool but decisions stall

Meaningful change hides behind metrics.

CRO dashboards are engineered to spotlight uplift, not friction.

But even a beautiful trend line can distract from the core business reality: what’s driving decision inertia, and why aren’t buyers moving further or faster?

We’ve watched businesses celebrate “statistical winners” while sales teams report unchanged close rates, stagnant deal velocity, or rising discount demands.

It’s like seeing ripples in a pond and calling it a current.

The visual of progress, the quick dopamine of green numbers, can lull leaders into a sense of momentum that isn’t translating downstream.

The myth that a win in your analytics tool equals a business improvement is dangerous.

Until you force the question – what is truly slowing or stopping buyer decisions? – the dashboard wins are often just noise.

Real progress requires stripping away the comfort of incremental metrics to confront the system’s big bottlenecks.

If tweaks don’t multiply impact across your funnel, it’s time to look upstream, not just at the screen.

Sustainable growth comes from dismantling root friction, not chasing dashboard highs.

Next diagnostic steps when tests mislead

Most teams double down on test iterations the moment results disappoint.

But pursuing more A/B cycles after a string of false positives in CRO tests is like switching treadmills – motion, but no real distance covered.

The root problem rarely sits in the layout; it’s almost always lurking upstream, in muddied intent or shaky trust.

If your tests keep surfacing “statistical false winners”, ask: are you treating the symptoms or addressing the real disease hiding in plain sight?

Prioritize diagnosing intent and trust blockers, not tweaks

The biggest conversion testing pitfall isn’t failed experiments – it’s ignoring the intent and trust friction that no amount of button-color optimization can resolve.

We’ve seen startups spend months tweaking pricing pages, all while qualified leads drop out mid-funnel due to vague value messaging or missing social proof.

One client burned through five “winning” test variants before confronting the uncomfortable truth: their audience never trusted their claims in the first place.

Random variation in testing makes statistical noise look actionable, pushing teams into a cycle of surface tweaks.

But lifts created on top of blocked intent rarely stick.

Test result instability is just a symptom – chronic, not acute.

Imagine trying to tune a race car while the fuel line is leaking; unless you fix the upstream leak, no amount of alignment brings a real win.

So if conversion metrics refuse to budge or move for the wrong reasons, shift focus: Are core motivations clear?

Where’s decision friction killing momentum?

Trust gaps and intent confusion drive more false positives than any call-to-action color ever has.

Evaluate sustainability before scaling winners

Statistical Error Risks in Multiple Testing

| Term | Definition | Cause |
|---|---|---|
| Family-wise Error Rate | Probability of at least one false positive among multiple tests | Running many variants/tests simultaneously |
| False Discovery Rate | Proportion of false positives among all significant results | Tracking multiple metrics or segments at once |
| Multiple Comparisons Problem | Error risk increase when testing many hypotheses | Conducting many tests without controlling error rate |

A CRO win that can’t last is a trap disguised as progress.

The urge to lock in changes after confirming statistical significance creates blind spots to the business context.

We’ve watched teams launch site-wide design shifts based on two weeks of “promising” data – only for those uplifts to collapse when novelty wears off or after a channel spike fizzles.

If you’re not evaluating sustainability – over multiple cycles, channels, and customer cohorts – you’re gambling, not scaling.

Ask yourself: does the variant still deliver after the next campaign, season, or shift in acquisition mix?

Can it weather A/B testing noise and small sample volatility, or does the win evaporate on contact with reality?
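A lightweight version of that check can be sketched as follows: list every cycle, channel, or cohort where the variant's lift fails to hold. The slice names and rates are hypothetical, and the helper names are assumptions. Because slicing multiplies comparisons, this is best read as a sanity check on an existing win, not as a way to hunt for new ones.

```python
def relative_lift(control_rate, variant_rate):
    return variant_rate / control_rate - 1

def slices_where_lift_fails(results, min_lift=0.0):
    """results maps a slice label to (control_rate, variant_rate).
    Returns the labels whose relative lift does not exceed min_lift."""
    return [
        label
        for label, (control_rate, variant_rate) in results.items()
        if relative_lift(control_rate, variant_rate) <= min_lift
    ]

# Hypothetical conversion rates per test cycle, channel, and cohort
results = {
    "cycle 1": (0.040, 0.046),
    "cycle 2": (0.041, 0.041),
    "paid search": (0.038, 0.045),
    "email": (0.052, 0.049),
    "new customers": (0.035, 0.041),
    "returning customers": (0.060, 0.058),
}

print(slices_where_lift_fails(results))
# ['cycle 2', 'email', 'returning customers']
```

If the list is not empty, the pooled "win" is leaning on a subset of traffic, which is a signal to wait before scaling.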

The repeatable insight: resist the temptation to double down on short-term statistical lifts.

Wait for signal persistence, for value to stack across the funnel, before you scale.

What matters is not the “winner” today, but what still wins three quarters from now – after the noise fades and real habits form.

Sustainable progress starts when leadership pivots from test-chasing to diagnosing systemic friction – the moves that keep outcomes stable as environments and audiences change.

Scientific context and sources

The sources below provide background on the ideas used in this article: statistical noise, multiple comparisons, expectation effects, and systemic constraints.

- Statistical noise and decision error

“Why Most Published Research Findings Are False” – Ioannidis, J.P.A. – PLOS Medicine

This article analyzes how random variation and sampling noise can mislead decision-making processes, especially within small samples or repeated experiments.

https://journals.plos.org/plosmedicine/article?id=10.1371/journal.pmed.0020124

- False discovery in multiple comparisons

“Controlling the False Discovery Rate: A Practical and Powerful Approach to Multiple Testing” – Benjamini, Y. & Hochberg, Y. – Journal of the Royal Statistical Society: Series B

Lays out the principles behind multiple comparisons and the increase in false positives as more variants or segments are tested, offering frameworks for adjustment.

https://www.jstor.org/stable/2346101

- The effects of novelty on behavior and metrics

“Too good to be bad: Favorable product expectations boost subjective usability ratings” – Raita, E. & Oulasvirta, A. – Interacting with Computers

Shows that favorable expectations can inflate subjective usability ratings, a related reason why early reactions to something new may overstate its lasting effect.

https://www.sciencedirect.com/science/article/abs/pii/S095354381100035X

- Constraints and performance plateaus in business systems

“The Machine That Changed the World” – Womack, J.P., et al. – Harper Perennial (November 1990)

Explores why incremental process improvements tend to hit systemic constraints, and why sustainable growth depends on addressing underlying bottlenecks rather than local wins.

https://en.wikipedia.org/wiki/The_Machine_That_Changed_the_World_(book)

Questions You Might Ponder

How does noise in small data sets lead to false winners in CRO?

Noise in small samples increases the chance of random conversion spikes, making it easier to misinterpret statistical fluctuations as genuine business progress. This can result in adopting test results that don’t generalize, leading to false winners and risky scaling.

What is the multiple comparisons problem in digital experimentation?

When many A/B tests or variants are run, the likelihood of at least one statistical false positive rises quickly with each added comparison. The multiple comparisons problem describes how this inflates the rate of false winners, undermining trust in test outcomes.

Why do higher conversion rates sometimes reduce business value?

Conversion rate gains can mask a decline in customer quality or buying intent. When metrics focus on absolute volume rather than customer fit, businesses often chase short-term lifts that decrease overall revenue, loyalty, or deal quality.

How does novelty bias distort CRO test results?

Novelty bias boosts initial engagement when users encounter something new, leading to temporary conversion lifts. However, as users adapt, performance typically fades, exposing the metric as a mirage rather than a durable gain.

What steps prevent scaling false positive wins in CRO?

Diagnose upstream factors like intent and trust before acting on statistical wins. Evaluate if the result persists over time and across real-world contexts. Sustainable scaling hinges on persistent value, not short-term statistical noise or surface-level tweaks.