What You’ll Learn

What is a source in AI search?

Key Takeaways

- AI systems cite “sources” defined as organizations or entities with coherent authority signals, not isolated URLs or lone pages.

- Consistent branding, authorship, and topic focus are required for AI to recognize and trust a source for citation.

- Fragmented authority or unstable attribution – like multiple brands or changing author tags – dramatically lower eligibility for AI recognition.

- Structured content and cross-entity corroboration significantly boost citation rates and authoritative status in AI-powered search.

What is a source in AI search?

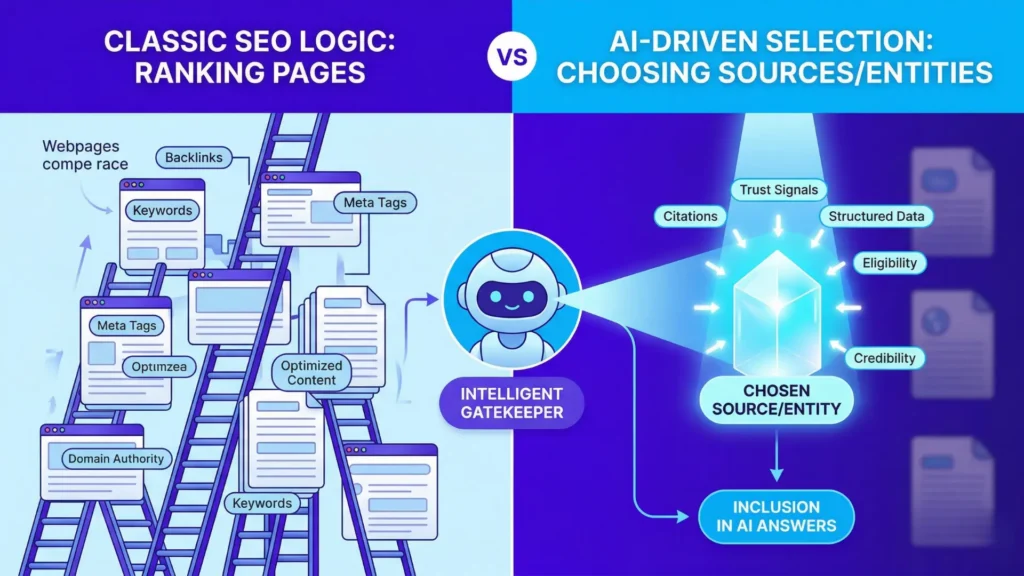

In AI search, a “source” means a coherent, authoritative entity or body of content that can be reliably attributed – distinct from a single URL or webpage.

This core concept underpins how AI systems select and cite material.

AI-level concept: a source is not a URL but a coherent authority unit

Key terms:

- AI source definition: A unit of trust, defined not by web address but by entity and authority coherence.

- AI authority unit: The entity or set of content tied by consistent branding, topic, or ownership.

- AI entity vs page: AI recognizes entities/organizations, not individual webpages, as the basis for trust and citation.

- Source abstraction: The process by which AI aggregates signals across multiple URLs and content types to establish a single, credible authority.

- Attribution: The consistency and stability of ownership/authorship signals enabling AI to safely quote or rely on a source.

Why URLs are insufficient as AI source units

Ever wonder why a page that ranks on Google sometimes fails to get cited by generative AI – even if it’s loaded with credible data? Here’s the twist: AI doesn’t treat a single URL as a true “source” unless it’s part of a broader, coherent authority. That means your best article, sitting by itself, can be invisible to AI’s citation logic.

We’ve watched well-meaning brands pour thousands into content, expecting AI to pluck punchy facts straight from those pages. Yet, time after time, isolated URLs vanish in AI-generated answers. Why? Because for AI, a URL is just a moment – a snapshot, easily lost in the noise. What AI really wants is a dependable authority unit, consistent and validated, not a one-off digital island.

The analogy is simple: would you trust legal advice from a single business card left on a doorstep, or from a firm with a visible office and clear credentials? Pages act like business cards in isolation; coherent authorities become the office.

Here’s a myth we see everywhere: “If my site ranks, AI will count it as a source”. In reality, absent stable authority signals, the URL does little for you. We’ve witnessed even high-traffic pages slip through the AI net when they’re not anchored in a clear brand or entity.

How AI interprets authority through coherence, not pages

AI defines a source as a unit of trust – built across content, brand, and signal. It’s not about which page comes first. It’s about whether the entity behind those pages shows consistency, credibility, and connection.

For example, across several client audits, we’ve seen that brands with tightly connected domain properties (think: shared branding, authorship, and topic focus) show up more often as “source” entities in AI answers – even when individual pages barely rank.

AI’s “source abstraction” works like assembling a mosaic. It looks for repeating patterns: shared authors, unified branding, backlink patterns, and repeated expert bios. If the pieces fit, AI forms a strong attribution unit; if signals scatter, the source gets fragmented.

Can you picture your content as a visible web, threads connected by keywords, author bios, schema, and repeatable entities? That visual coherence is what gives AI confidence in citing – and sometimes elevating – your site as a trusted authority unit.
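One concrete way to make those threads visible is schema.org structured data that repeats the same publisher and author identities on every page. The sketch below is a minimal illustration, not a guarantee of how any AI system reads markup. The organization name, URLs, and author are hypothetical placeholders, and `article_jsonld` is a helper invented for this example.

```python
import json

# Hypothetical publisher entity, defined once and reused on every page.
PUBLISHER = {
    "@type": "Organization",
    "@id": "https://example.com/#organization",
    "name": "Example Co",
    "url": "https://example.com/",
}


def article_jsonld(headline, url, author_name, author_url):
    """Build schema.org Article markup that always points at the same publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "publisher": PUBLISHER,
    }


# Two hypothetical pages sharing one author profile and one publisher.
pages = [
    article_jsonld(
        "What is a source in AI search?",
        "https://example.com/blog/what-is-a-source",
        "Jane Doe",
        "https://example.com/authors/jane-doe",
    ),
    article_jsonld(
        "Why fragmented sites fail",
        "https://example.com/blog/fragmented-sites",
        "Jane Doe",
        "https://example.com/authors/jane-doe",
    ),
]

# Every page should resolve to exactly one publisher identity.
publisher_ids = {page["publisher"]["@id"] for page in pages}

# The JSON printed here is what would go inside a
# <script type="application/ld+json"> tag on the first page.
print(json.dumps(pages[0], indent=2))
```

Defining the publisher once and reusing it keeps the entity identical across pages, which is the consistency this section argues for.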

So, if you’re focused only on optimizing URLs or chasing single-page rankings, you’re playing the wrong game for AI source recognition. The real win comes from building out the coherent, entity-backed mesh that lets AI see – and trust – your domain as a whole.

Bottom line: To become citation-eligible, start thinking beyond URLs. Build a source abstraction that AI can anchor to – and keep proving you’re more than just a collection of pages.

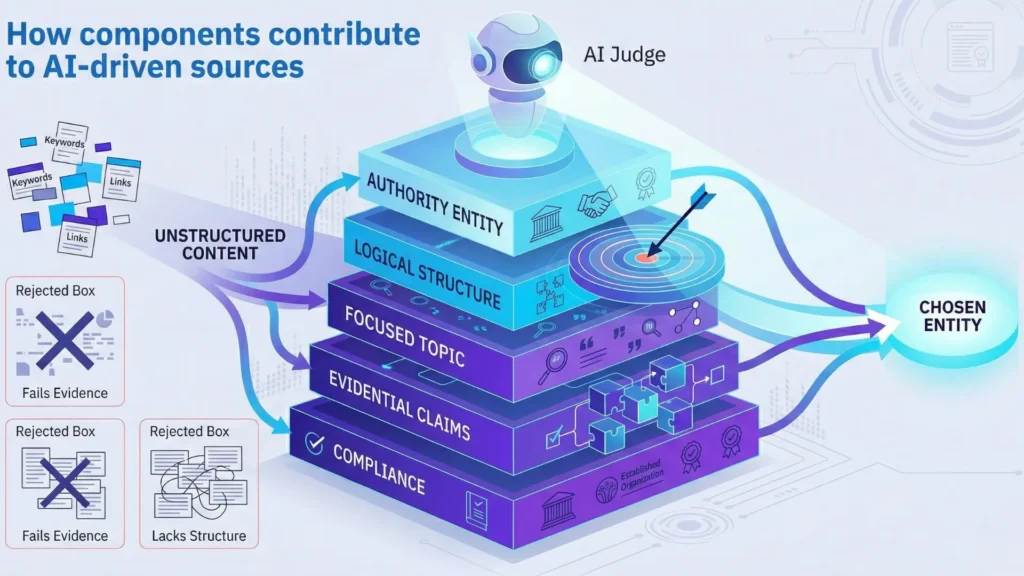

Failure modes: fragmented authority, unstable attribution, missing evidence

Common Failure Modes in AI Source Recognition

| Failure Mode | Description | Analogy |
| --- | --- | --- |
| Fragmented Authority | Content spread across multiple disconnected domains or subdomains | Orchestra with musicians playing in different rooms |
| Unstable Attribution | Frequent changes in author names, brands, or publishing patterns | Changing the lock on your front door each week |
| Missing Evidence | Lack of corroboration from other credible sources | Judge hearing a witness without corroborating testimony |

Why fragmented sites fail to qualify as a source

Have you ever wondered why an AI search engine can index a thousand of your pages – yet still not see you as an authority? Picture your expertise as a bright beam, not a handful of scattered flashlights. When content drifts across dozens of subdomains, tertiary blogs, or microsites, the system struggles to stitch your authority together. We’ve seen clients pour energy into niche microsites, only to watch AI treat each as noise – none aggregating enough signal to pass the “AI source definition” test.

AI doesn’t judge by URL alone. It searches for a coherent “authority unit”, one reinforced by consistent signals: linked branding, clear entity tags, and tightly clustered topics. Fragmentation confuses the system: content floats in isolation, and the chain of trust signals breaks. It’s like an orchestra with musicians playing in different rooms, producing chaos instead of a harmonized performance. Does your main entity always match across your content ecosystem? If not, you might be invisible to the very systems that decide which sources get attributed.

Myth: More domains equals more reach. In AI’s eyes, more domains often means diluted trust.
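A rough way to audit your own fragmentation is to measure how much of your content resolves to one entity label and how many hosts it is spread across. This is a simplified sketch under stated assumptions: the page inventory is hypothetical, and `dominant_entity_share` and `host_count` are illustrative helpers, not metrics any AI system is known to use.

```python
from collections import Counter
from urllib.parse import urlparse


def dominant_entity_share(pages):
    """Return the most common publisher label and the share of pages using it."""
    counts = Counter(page["publisher"].strip().lower() for page in pages)
    entity, count = counts.most_common(1)[0]
    return entity, count / len(pages)


def host_count(pages):
    """Count the distinct hostnames the content is spread across."""
    return len({urlparse(page["url"]).hostname for page in pages})


# Hypothetical inventory: one brand split across a main site and microsites.
pages = [
    {"url": "https://example.com/guide", "publisher": "Example Co"},
    {"url": "https://blog.example.com/post", "publisher": "Example Co"},
    {"url": "https://example-insights.net/a", "publisher": "Example Insights"},
    {"url": "https://tips.example.io/b", "publisher": "ExampleTips"},
]

entity, share = dominant_entity_share(pages)
print(f"dominant entity: {entity}, share: {share:.0%}, hosts: {host_count(pages)}")
```

A low share combined with a high host count is the pattern this section describes: many pages, no single authority unit.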

Why unstable attribution undermines trust

Do you ever swap author names, rotate brands, or adjust publishing patterns just to “freshen” your platform? Caution: to an AI, these changes signal instability. We’ve seen even well-known brands lose citation eligibility after shifting author profiles frequently or running content syndication with inconsistent entity mentions.

Stable attribution is the backbone of AI trust. When a page’s author, organization, or even logo keeps changing, the platform perceives volatility – often downgrading citation potential. One large publishing client watched their “AI content trust signal” drop within weeks after launching a multi-brand contributor program with no unified author taxonomy.
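Attribution stability can be checked on your side by comparing dated snapshots of a page’s author and organization fields. The sketch below is a minimal illustration. The snapshot records are invented, and `attribution_changes` is a hypothetical helper rather than a measure any AI platform publishes.

```python
def attribution_changes(snapshots):
    """Count changes in (author, organization) between consecutive snapshots.

    Snapshots are sorted by ISO date string, which orders them chronologically.
    """
    ordered = sorted(snapshots, key=lambda snap: snap["date"])
    changes = 0
    for previous, current in zip(ordered, ordered[1:]):
        before = (previous["author"], previous["organization"])
        after = (current["author"], current["organization"])
        if before != after:
            changes += 1
    return changes


# Hypothetical history of one page whose byline and brand keep shifting.
unstable = [
    {"date": "2024-03-01", "author": "Jane Doe", "organization": "Example Co"},
    {"date": "2024-01-01", "author": "Jane Doe", "organization": "Example Co"},
    {"date": "2024-02-01", "author": "Staff Writer", "organization": "Example Co"},
    {"date": "2024-04-01", "author": "Jane Doe", "organization": "Example Media"},
]

# Hypothetical history of a page with a fixed byline and brand.
stable = [
    {"date": "2024-01-01", "author": "Jane Doe", "organization": "Example Co"},
    {"date": "2024-02-01", "author": "Jane Doe", "organization": "Example Co"},
]

print(attribution_changes(unstable), attribution_changes(stable))
```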

Consider unstable attribution like changing the lock on your front door each week. Visitors (and AI systems) start to question whether they’re entering the same trusted place – or a risky unknown. Occasionally, minor changes help prevent staleness. But swing too far, and those “AI authority unit” signals splinter, leaving your brand stranded outside the AI’s trusted citation circle.

Losing source status isn’t about algorithms being “harsh”. It’s about broken patterns: fragmented authority and unstable attribution erode the credibility required for reliable AI source recognition. Next, see how evidence signals help repair that trust.

Evidence signals: how AI judges citation eligibility

Have you ever wondered why AI sometimes skips that brilliantly written article or insightful research summary – while quoting a dry, clinical page that most humans would ignore? Here’s the twist: citation eligibility for AI isn’t about style or even sheer depth. It’s about structure, corroboration, and formatting. Let’s pull back the curtain on how those signals work.

Structural clarity and extractable content

Core Citation Eligibility Signals for AI Source Recognition

| Signal | Description | Impact on AI Trust |
| --- | --- | --- |
| Consistent branding and ownership | Stable and unified brand identity across content | Builds trust through recognizable authority |
| Clear author/entity tags | Explicit author or entity attribution on content | Enables accurate source identification |
| Structured headings and definitions | Organized content with clear sections and definitions | Improves extractability and clarity |

Core citation eligibility signals:

- Consistent branding and ownership

- Clear author/entity tags

- Structured headings and definitions

- Tables and lists for extractability

- Evidence or corroboration from other sources

- Stable attribution (minimal changes to authorship/branding over time)

- Topic focus and topical consistency

AI systems, by design, crave order. A single, well-labeled definition can weigh more than three pages of loosely organized commentary. We’ve seen clients gain a sudden surge in cited snippets after simply breaking monolithic text into discrete headings, with explicit definitions and precise tables. Here’s something few realize: when a table is formatted for quick extraction – clear headers, consistent cells – AI recognizes the pattern as safe to quote. It’s not just scanning for facts. It is looking for boundaries it can trust.
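To see why clear headers and consistent cells matter, consider how little code it takes to turn a well-formed table into labeled records. The sketch below assumes a simple Markdown pipe table. `parse_pipe_table` is an illustrative helper, not the extraction logic of any particular AI system.

```python
def parse_pipe_table(text):
    """Parse a Markdown pipe table into a list of {header: cell} records."""
    rows = []
    for line in text.strip().splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # ignore anything that is not a table row
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        # Skip the separator row, whose cells contain only dashes and colons.
        if all(cell and set(cell) <= set("-:") for cell in cells):
            continue
        rows.append(cells)
    if not rows:
        return []
    header, *body = rows
    # Keep only rows whose cell count matches the header; ragged rows are dropped.
    return [dict(zip(header, row)) for row in body if len(row) == len(header)]


table = """
| Signal | Impact on AI Trust |
| --- | --- |
| Clear author/entity tags | Enables accurate source identification |
| Structured headings and definitions | Improves extractability and clarity |
"""

records = parse_pipe_table(table)
for record in records:
    print(record["Signal"], "->", record["Impact on AI Trust"])
```

Rows that do not match the header are discarded here, which mirrors the point above: content without clean boundaries is the first thing an extractor gives up on.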

Imagine a warehouse: if everything’s in identical unmarked boxes, you’d never find what you need quickly. But if each box is labeled and shelved in a logical order, even an automated robot can find and verify its content efficiently. AI works the same way with structured signals. We once worked with a SaaS client whose blog was packed with expertise, yet their visibility among AI-generated sources skyrocketed only after we restructured core pages for extractability. Suddenly, quotes and lists began appearing in summaries – sometimes within six weeks.

Here’s a common myth: “If my content is the most thorough, AI will always pick it”. In practice, AI skips content it cannot confidently extract, even if the depth is impressive. The practical outcome? If you want to maximize eligibility as an “AI authority unit”, clarity and formatting beat raw word count.

Consensus and corroboration across sources

How can a single source feel trustworthy to a machine? AI citation logic doesn’t reward clever outlier claims. It looks for patterns that repeat across credible sources. If two or three independent high-authority domains state the same fact, the chance of that claim becoming a “trusted” building block jumps dramatically. We’ve seen this firsthand: a financial client with excellent, unique research lost AI attribution when their key conclusions stood alone, uncorroborated across the web. But when industry peers echoed (or cited) those findings, the same statements became recurring citations within weeks.
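The idea of corroboration can be sketched as counting how many distinct hosts state the same claim. This is a deliberately crude illustration. It matches claims by normalized exact text, whereas a real system would need semantic matching, and a distinct hostname is only a loose stand-in for an independent source. All statements and URLs below are made up.

```python
from urllib.parse import urlparse


def normalize(text):
    """Lowercase and collapse whitespace so trivially different strings match."""
    return " ".join(text.lower().split())


def corroborating_hosts(claim, statements):
    """Return the set of hostnames whose statements match the claim exactly."""
    target = normalize(claim)
    return {
        urlparse(statement["url"]).hostname
        for statement in statements
        if normalize(statement["text"]) == target
    }


# Hypothetical statements collected from several pages.
statements = [
    {"url": "https://example.com/report", "text": "Widgets ship in 12 days"},
    {"url": "https://example.com/blog", "text": "Widgets ship in 12 days"},
    {"url": "https://industry-news.example.org/story", "text": "widgets ship in  12 days"},
    {"url": "https://other.example.net/post", "text": "Shipping is getting faster"},
]

hosts = corroborating_hosts("Widgets ship in 12 days", statements)
print(len(hosts), sorted(hosts))
```

Two pages on the same host count once, which reflects the point above: repeating a claim on your own site is not the same as having it echoed elsewhere.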

The analogy? Imagine AI as a judge in a dimly lit room, scanning for witnesses that agree on the same story. Novelty is less valuable than verified consensus. Doubt lingers when statements lack a second anchor. The surprising layer: the more stable and corroborated your claims, the less likely they are to be filtered out as unreliable or low-signal noise.

Here’s a question that might sting: if AI cross-checks claims for consensus, is your content getting echoed or stranded? Brands that build signal density across multiple recognized entities – by earning or referencing validation elsewhere – find themselves cited more often and with greater precision.

Structural clarity and consensus aren’t extras. For AI, they’re the line between invisible and quotable. Next, we’ll see why source identity changes the game from page ranking to selection mechanics.

Why authority abstraction leads into selection vs ranking

From source identity to selection mechanics

Have you ever wondered why a #1 ranked page on Google might be invisible to AI-driven search assistants as a “trusted” source? It’s a bit like building a billboard in the forest – sure, it’s big and impressive, but nobody’s there to see it if the right roads don’t lead to it.

Here’s the pivot most teams miss: AI systems don’t think in terms of ranking pages – they identify and select coherent sources that act as stable authority units. With several enterprise clients, we’ve seen pristine SEO pages outperformed by less-optimized sites that demonstrate clear, consistent authority signals (name, brand, and claim structure remained unbroken over time). Outranking someone means nothing if AI doesn’t recognize your source as an eligible citation or authority.

Imagine trying to tune into your favorite radio station, but it’s sending its signal from five different frequencies every day. That’s what fragmented authority looks like to an AI – it can’t reliably lock on, so its selection system ignores you even if your signal is loud in one spot.

This selection process isn’t just about “who ranks highest”. AI evaluates which authority abstraction matches its confidence requirements for citation or answer synthesis. For example, we’ve seen custom knowledge retrieval systems map “source units” before parsing individual pages, separating the identity layer (who/what) from the page layer (where).
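The split between an identity layer and a page layer can be sketched as a two-step filter: decide which sources are eligible first, then rank pages only within those sources. The classes, scores, and URLs below are hypothetical, and the `eligible` flag stands in for whatever confidence checks a given system applies.

```python
from dataclasses import dataclass


@dataclass
class Source:
    source_id: str  # identity layer: who or what is behind the content
    eligible: bool  # stand-in for the system's confidence checks


@dataclass
class Page:
    url: str           # page layer: where the content lives
    source_id: str
    rank_score: float  # a conventional ranking score, higher is better


def select_pages(pages, sources):
    """Keep pages from eligible sources only, then order them by rank score."""
    eligible_ids = {source.source_id for source in sources if source.eligible}
    candidates = [page for page in pages if page.source_id in eligible_ids]
    return sorted(candidates, key=lambda page: page.rank_score, reverse=True)


sources = [Source("acme", True), Source("scattered", False)]
pages = [
    Page("https://scattered.example/top", "scattered", 0.98),
    Page("https://acme.example/guide", "acme", 0.71),
    Page("https://acme.example/faq", "acme", 0.64),
]

selected = select_pages(pages, sources)
print([page.url for page in selected])
```

In this toy setup the highest-scoring page never reaches the ranking step, because its source failed the identity check first.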

Do you catch yourself still thinking: ‘I need my page to rank higher’? Ask instead, ‘Is my authority unit stable, coherent, and extractable by machines?’

Here’s a myth worth busting: “If I win on ranking, I’ll lead in AI citations”. Not true. In fact, we’ve seen high-ranking pages lose out on citations when their brand, author, or evidence signals don’t create a unified source identity for the AI. The action shifts from keyword and link competition, toward consolidating evidence and presentation across every digital footprint you control.

Think of the authority abstraction as the passport, and ranking signals as travel guides. Without a passport, you don’t get selected – no matter how many glowing reviews your destinations have.

For AI, eligibility depends on source abstraction and authority clarity, not on page ranking, which makes traditional SEO position less relevant to answer selection.

This shift forces decision-makers to reframe: selection is about source eligibility, built on clarity and unity, not about sheer visibility. The next layer is why ranking signals don’t transfer to AI source selection, and what you can do about it.

Scientific context and sources

The sources below provide foundational context for entity-based search, evidence selection in retrieval, stable attribution, and how trust in digital information is formed.

- Web-scale information aggregation and entity coherence

From Strings to Things: Web Search for Entities – Amit Singhal – Google Official Blog

Modern search systems shifted from documents to entities as primary units of knowledge. It introduces the Knowledge Graph model where entities replace pages as the core indexing unit.

https://blog.google/products/search/introducing-knowledge-graph-things-not/

- Citation and evidence mechanisms in large-scale retrieval

Neural Approaches to Conversational Information Retrieval – Jianfeng Gao et al. – Foundations and Trends in Information Retrieval

Evidence selection in AI systems relies on ranking, redundancy, and corroboration signals, not traditional citation norms.

https://www.researchgate.net/publication/357875438_Neural_Approaches_to_Conversational_Information_Retrieval

- Attribution stability and signal integrity

The Semantic Web – Tim Berners-Lee et al. – Scientific American

Stable attribution depends on structured data and shared schemas across the web. It introduces the concept of machine-interpretable metadata for reliable information linking.

https://www.scientificamerican.com/article/the-semantic-web/

- Decision science and information trust

Digital Media, Youth, and Credibility – Andrew J. Flanagin and Miriam J. Metzger – MIT Press

Trust in digital environments is shaped by cognitive heuristics, platform design, and information structure. It explains how credibility is constructed through context, repetition, and platform signals.

https://mitpress.mit.edu/9780262562324/

Questions You Might Ponder

What is a source in AI-driven search systems?

A source in AI-driven search systems refers to a coherent, authoritative entity – such as an organization or brand – recognized by signal consistency across content, not just a single webpage. This entity-based approach enables AI to attribute information reliably for citation and trust-building purposes.

Why don’t high-ranking URLs always qualify as AI citation sources?

High-ranking URLs often lack the stable, repeated authority signals needed by AI systems. Without entity coherence – like unified branding, author bios, or structured data – a single web page remains an isolated signal and is ignored as an authoritative “source” by AI.

How does AI determine whether a website is a credible source?

AI systems aggregate signals such as consistent branding, author information, topical focus, structured content, and corroboration from other credible entities. When these elements persist and align across a domain, the website is seen as a credible source, increasing its citation eligibility.

What causes fragmented authority and lost citation eligibility in AI search?

Fragmented authority occurs when content is spread across multiple domains or lacks unified branding and author signals. This fragmentation confuses AI, making it difficult to recognize a single authority unit, which lowers or eliminates the site’s chance of becoming an AI-citable source.

What evidence signals increase AI citation and entity recognition?

Evidence signals include structured content (headings, tables), consistent authorship, stable branding, and corroboration from multiple trusted sources. These signals make information easy for AI to extract and verify, increasing the likelihood of being cited as an authoritative source.