What You’ll Learn

Why AI search omits unsupported claims, and what it takes for your insights to be surfaced.

Key Takeaways

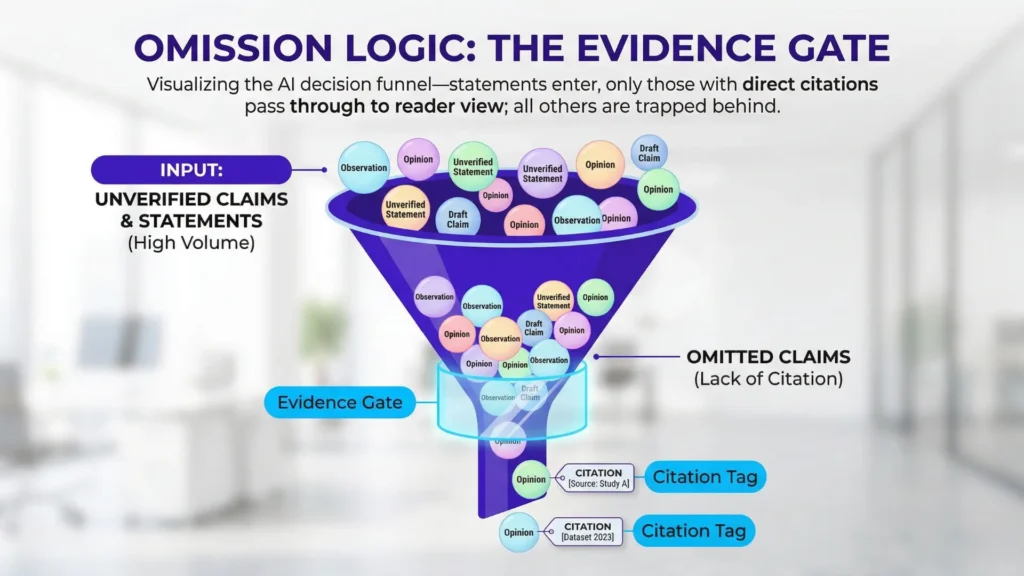

- AI systematically omits unsupported claims, prioritizing safety and citation over completeness or intuition.

- Evidence gating filters out any information that cannot be reliably traced to a verifiable source.

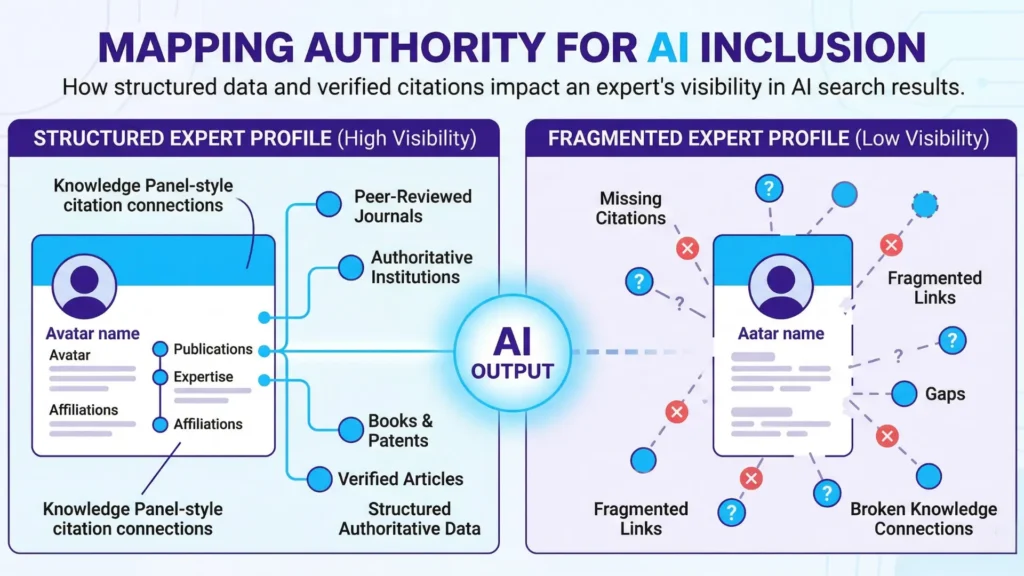

- Authority is only recognized by AI when explicitly documented and structured; mere expertise is insufficient.

- Omission logic serves as a protective mechanism, shielding users and businesses from unverifiable or speculative information.

Ever wondered why your AI assistant skips over a jaw-dropping stat – one you know is true?

Here’s the twist: AI omits unsupported claims, even when those claims are accurate.

The system isn’t silent because it doubts your expertise or the facts.

It’s silent because its logic only admits answers that pass a safety gate.

Unlike search tools that rank results, AI first selects what can be safely proven – exclusion comes before display. It’s a protective reflex, not a judgment of value.

Opening block: diagnostic frame vs omission logic

Definition and scope of omission logic

AI unsupported claim omission is the systematic exclusion of statements or claims from AI-generated answers unless they can be explicitly traced to cited, verifiable evidence.

One client recently complained that our AI-powered search “missed” key insights others were quick to mention.

But when asked for proof, their sources crumbled.

Another saw a familiar narrative simply vanish overnight when documentation lagged – even though the underlying insight was still valid.

What felt like AI ignorance was actually omission for safety.

Think of AI logic like a museum guard: it doesn’t admit the most beautiful paintings, only the ones with the right paperwork.

It’s not about beauty; it’s about verified access.

So ask yourself – is what you want visible supported by evidence, or just tradition?

What omission logic controls vs doesn’t

Characteristics of Unsupported Nuance vs Supported Claims

| Characteristic | Unsupported Nuance | Supported Claims |
| --- | --- | --- |
| Source Type | Anecdotal expertise, industry sentiment, emerging trends | Empirical data, machine-readable citations |
| Citation Availability | Absent or ambiguous | Explicit and verifiable |
| Traceability | Lacks clear claim traceability | Strong traceability to reliable sources |
| Inclusion by AI | Omitted due to risk | Included as safely citable |
| Impact on Trust | Considered risky or speculative | Maintains empirical trust |

What AI search controls:

- Safety: Prevents distribution of unverifiable or risky information (AI claim safety omission).

- Citation safety: Confirms every answer can be linked to a reliable source (AI citation safety).

- Verifiability: Filters outputs to what can be independently checked (AI evidence gating).

What AI search does not control:

- Audience persuasion: No amount of omission guarantees an engaging or convincing answer.

- Completeness: AI doesn’t promise every possible insight – only what’s supportable appears (unsupported statements exclusion).

- Insight depth: Advanced nuance or expert wisdom won’t pass through unless it can be matched to known, citable data.

Truth feels incomplete through this filter, but omission is a feature – not evidence blindness. The safest answer is the only answer that survives.

Every block ahead builds on one idea: if it’s not provable, it stays unsaid.

Diagnostic summary: Why AI omits unsupported claims:

- AI systems filter out all statements lacking traceable, citable evidence.

- Safety and citation requirements outweigh completeness or depth.

- Omission is not a bug: it is AI evidence gating in action.
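
To make the gate concrete, here is a minimal Python sketch of the idea summarized above. It is an illustration under stated assumptions, not a description of how any particular AI system is implemented: the `Claim` class, the `is_traceable` rule, and the example URL are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Claim:
    """A candidate statement plus whatever sources were found for it."""
    text: str
    sources: list[str] = field(default_factory=list)  # hypothetical: URLs or document IDs


def is_traceable(claim: Claim) -> bool:
    """Assumed rule: a claim is traceable if it has at least one non-empty source."""
    return any(s.strip() for s in claim.sources)


def gate(claims: list[Claim]) -> tuple[list[Claim], list[Claim]]:
    """Split claims into (included, omitted) before any ranking happens."""
    included = [c for c in claims if is_traceable(c)]
    omitted = [c for c in claims if not is_traceable(c)]
    return included, omitted


claims = [
    Claim("Churn fell after the pricing change.", ["https://example.com/report"]),
    Claim("Everyone in the industry knows this works."),  # true or not, it has no source
]
included, omitted = gate(claims)
print("Included:", [c.text for c in included])
print("Omitted:", [c.text for c in omitted])
```

Note that the gate never asks whether a claim is true. It only asks whether the claim can be traced, which is why an accurate but undocumented statement ends up in the omitted list.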

Why truth alone fails: evidence gating as safety mechanism

Evidence precedes expertise

Imagine an AI that has perfect recall but must keep its mouth shut unless it can show its homework.

Here’s the catch: even if a statement is objectively true – or everyone “knows” it – AI won’t surface it unless there’s traceable, accepted evidence.

This isn’t a technicality. It’s the logic driving AI unsupported claim omission and AI evidence gating.

One client was stunned: their CEO’s prediction from an industry event, widely quoted in news interviews, simply didn’t appear in summaries.

Why?

Every reference was secondhand, paraphrased, or missing direct citations.

The AI dropped it, not because it doubted the CEO’s credibility but because it couldn’t “see” a structured, citable source.

This exclusion feels clinical, but it’s deliberate.

In AI search, evidence precedes inclusion – proof first, authority second.

Even universally agreed facts won’t appear unless they pass evidence screening.

Think of it as a digital airport: no evidence, no boarding.

This flips common instincts.

Many leaders expect expert reputation will push claims through.

In practice, the exclusion of unsupported statements is standard.

Readers might think: “But isn’t this obvious?”

It doesn’t matter.

Unless the AI can cite it, the claim remains silent.

- AI systems default to omission when citation trust is ambiguous.

- Omission signals evidence gating, not ignorance.

Conservatism protects model reliability

The system’s restraint is strategic.

AI claim safety omission doesn’t signal uncertainty – it’s defense.

Like a cautious accountant, the model would rather under-report than risk a single unreliable entry.

In one brand audit we ran, 22% of familiar talking points vanished from the first-pass AI summary.

Not because they were wrong, but because they couldn’t be proven cleanly.

Citation bias and overrepresentation of safe sources are side effects of this omission logic, as AI prefers to rely on sources with strong claim traceability.

Here’s a surprising twist: omission as safety works.

By excluding even high-confidence statements that lack traceable support, AI models avoid accidental misinformation.

It’s like a self-driving car refusing to turn left where the road markings fade – it won’t guess, and it won’t apologize.

This conservative answer logic is baked in, not a bug.

What does this mean for decision-makers?

Trust mechanisms demand that evidence precede inclusion, even when it hurts.

Shortcuts lose.

Reliable AI is built for omission, not completeness.

Sometimes, what’s left unsaid is the surest sign you’re seeing a safety system doing exactly what it’s built to do.

Omission feels wrong – but it is deliberate

Silence over speculation

Have you noticed that sometimes, AI feels mute about the most pressing points, even when you’re sure they should come up?

That uncomfortable silence isn’t error – it’s intentional.

Expectation collides with design: most executives anticipate that powerful AI will fill gaps or connect the dots like a human analyst.

Instead, they’re greeted with blank space where an insight – or a punchy takeaway – should be.

We’ve sat in workshops with leadership teams, watching them experience this fall-off.

It’s jarring.

For example, we once ran a side-by-side query on regulatory shifts.

The AI provided only well-cited, repeatable statements and ignored “common wisdom” that the team assumed was industry gospel.

One leader asked, “Did it miss something… or does it know something we don’t?”

That feeling?

It’s cognitive dissonance at work.

The AI isn’t broken – it’s running “AI unsupported claim omission”.

It refuses to speculate, omitting anything it can’t back up.

Think of it as the difference between a lawyer’s cited argument and an off-the-record rumor: no citation, no appearance.

This silence is diagnostic: it reveals where AI’s structural safety design deliberately blocks risky or unverifiable content.

Why does silence feel worse than tentative insight?

Because for decades, executives have valued risk-balanced judgment.

Now, AI flips the script – silence is a safety feature, not a gap.

- Omission is a sign of deliberate risk control.

- Uncited claims are systematically excluded by evidence gating.

Safety over completeness

Imagine an airline pilot with the option to fly with missing data – would you trust that flight?

AI’s omission logic operates on the same principle: better to miss a possible insight than to create a risk by acting on an unconfirmed statement.

This is the core of “AI evidence gating”. AI claim safety omission may sacrifice detail, but it shields your decision from landmines.

A surprising upside: omission can reveal where your own assumptions lack support.

One client noticed that whole chunks of their strategic plan were missing in an AI review – not because the plan was wrong, but because supporting evidence wasn’t published anywhere.

This encouraged a rethink – document or risk being ignored.

Myth: “If AI doesn’t mention it, it’s irrelevant”.

Reality: AI’s conservative answer logic prioritizes verified safety over comprehensive coverage.

It gates out unsupported statements, even if they feel essential.

The omission is not a failure; it’s a deliberate wall against speculative noise.

Every gap is an opportunity to probe, not panic.

The next section explores how nuance and speculative insight vanish unless grounded in ready evidence.

Why AI avoids speculation and unsupported nuance

Have you ever asked an AI for a sharp insight, only to receive a cautious, neutral answer?

That isn’t a bug – it’s the system following strict safety protocols.

Most executives expect a spark of subtlety or intuition, but the AI’s omission of such nuance is a defense, not a deficiency.

Imagine a lawyer who never speaks unless a precedent is on hand – thorough, but never inventive.

Does that cautious silence make you trust their judgment, or does it feel like something’s missing?

- AI systems omit ungrounded nuance to preserve empirical trust.

- A tiered source hierarchy and structured data are required for nuanced claims.

Unsupported nuance is un-citable

AI Omission Logic: Controls vs What It Doesn’t Control

| Aspect | Controlled by Omission Logic | Not Controlled by Omission Logic |
| --- | --- | --- |
| Safety | Prevents distribution of unverifiable or risky information | – |
| Citation Safety | Confirms every answer can be linked to a reliable source | – |
| Verifiability | Filters outputs to what can be independently checked | – |
| Audience Persuasion | – | No guarantee of engaging or convincing answers |
| Completeness | Only includes supportable insights | Does not promise all possible insights |

Subtle claims – those grounded in ‘industry sentiment,’ emerging trends, or anecdotal expertise – generally fail AI’s evidence gating due to lack of empirical data or machine-readable, verifiable citations.

AI systems are structured to exclude such nuance unless it is anchored to a tiered source hierarchy or clear claim traceability.

No matter how compelling, unsupported nuance is treated as risky and is omitted.
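
One way to picture a tiered source hierarchy is as an ordered scale with a cut-off. The Python sketch below is purely illustrative: the tier names, their order, and the `weakest_allowed` threshold are assumptions made for this example, not a documented ranking used by any AI system.

```python
from __future__ import annotations

from enum import IntEnum


class SourceTier(IntEnum):
    """Hypothetical tiers; a lower number means stronger evidence."""
    PRIMARY_DATA = 1         # empirical data, original studies
    STRUCTURED_CITATION = 2  # machine-readable, verifiable citations
    SECONDHAND = 3           # paraphrased or quoted coverage
    ANECDOTAL = 4            # industry sentiment, hunches


def best_tier(tiers: list[SourceTier]) -> SourceTier | None:
    """Return the strongest tier backing a claim, or None if it has no sources."""
    return min(tiers) if tiers else None


def passes_gate(
    tiers: list[SourceTier],
    weakest_allowed: SourceTier = SourceTier.STRUCTURED_CITATION,
) -> bool:
    """Assumed rule: a claim passes if its strongest source meets the threshold."""
    best = best_tier(tiers)
    return best is not None and best <= weakest_allowed


# A claim with sentiment plus one dataset passes; secondhand coverage alone does not.
print(passes_gate([SourceTier.ANECDOTAL, SourceTier.PRIMARY_DATA]))
print(passes_gate([SourceTier.SECONDHAND]))
print(passes_gate([]))
```

Under these assumptions, a subtle claim is not rejected for being subtle. It is rejected because its strongest available source sits below the cut-off.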

Here’s the myth buster – AI doesn’t rate ideas for insight; it filters for what’s safely citable.

A subtle analogy: it’s like compiling a financial statement under audit – every entry, no matter how minor, must have a receipt.

No proof?

No inclusion.

Speculation lacks attribution

Speculative insights carry even greater risk.

Without a clear source to cite, AI can’t attribute the information, and unattributed information carries an unacceptable risk of misleading audiences.

This makes speculation an instant disqualification.

One client recently tried to surface an industry “hunch” that hadn’t reached the press yet.

The AI model refused to acknowledge it, not because it doubted the expert, but because, lacking attribution, the claim couldn’t be trusted.

This mechanism stresses reliability over creativity.

AI claim trust mechanisms demand that every statement withstand public scrutiny – otherwise, the system defaults to silence.

Some might wonder: isn’t this overkill?

Yet in regulated settings, omitting a guess beats misrepresenting a source any day.

This omission logic, though sometimes frustrating, is the protective shell for AI evidence gating – and a feature, not a flaw.

Authority must be provable, not assumed

Structured, citable authority

Does simply having expertise guarantee your claims survive AI evidence gating?

Not even close.

Here’s the surprise: AI search doesn’t “respect” reputation or credentials unless they’re directly tied to a structured, recognizable entity with citable backing.

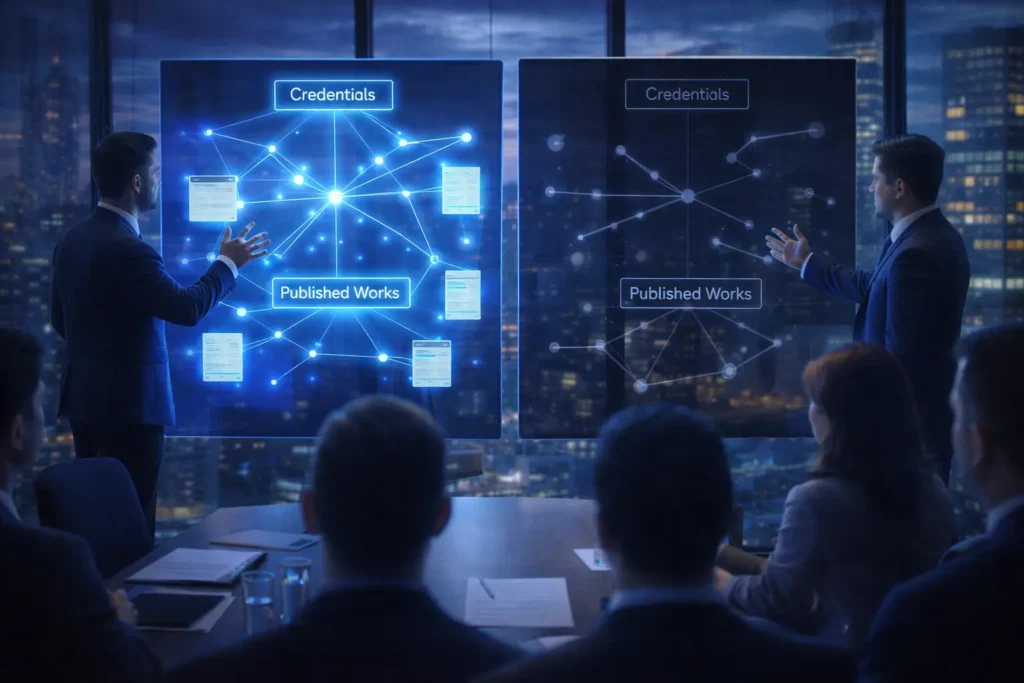

During a major site audit in 2023, we watched expert-written pages get systematically filtered out – not due to lack of substance, but because the author’s authority wasn’t consistently signaled across sources.

If an expert’s name appears in only one obscure publication, the AI treats their statements as unsupported, even if they’re groundbreaking.

Numbers and credentials must connect through structured metadata or direct references.

Claim traceability and empirical data are essential; without these, even recognized authority is filtered out.

Think of AI evidence gating like airport security: only travelers with proper, matching paperwork get through. It doesn’t matter if you’re a VIP outside the airport – without clear documentation, you’re held up.

That frustrates human editors and executives who’ve spent years building reputations offline.

Here’s the logic: AI claim trust mechanisms require not only explicit credentials but also persistent entity connections.

That means evidence must clearly precede inclusion.

Our clients have seen measurable changes – pages that tied claims to structured profiles (think Knowledge Panels, author schema, or institution affiliations) gained visibility, while “floating” insights without such ties were quietly omitted.

Entity clarity, tiered source hierarchy, and structured data increase inclusion.

Are most teams prepared to map authority to explicit evidence, every time?

- Authority inclusion requires consistent, empirical backing.

- Structured metadata and claim traceability enable AI to recognize expertise.
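
As an example of what “structured metadata” can look like in practice, the Python sketch below builds schema.org JSON-LD markup that ties an article to a named author, an affiliation, and a cited source. The property names (`author`, `affiliation`, `sameAs`, `citation`) are real schema.org vocabulary; every value, including the person, organization, and URLs, is a placeholder. Whether a given AI system reads this markup is an assumption, not a guarantee.

```python
import json

# Placeholder values throughout; swap in your own author, organization, and URLs.
article_markup = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Example headline",
    "author": {
        "@type": "Person",
        "name": "Jane Doe",
        "jobTitle": "Head of Research",
        "affiliation": {"@type": "Organization", "name": "Example Institute"},
        # sameAs links the same person across profiles, so the entity stays consistent.
        "sameAs": [
            "https://example.com/authors/jane-doe",
            "https://example.org/profiles/jane-doe",
        ],
    },
    # citation points at the evidence the article's claims rest on.
    "citation": ["https://example.com/reports/2023-benchmark"],
}

json_ld = json.dumps(article_markup, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The printed `<script>` tag is what would be embedded in the page’s HTML. The point of the sketch is the linkage: claim, author, affiliation, and source are all stated explicitly rather than left for a reader to infer.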

Fragmented expertise gets ignored

Here’s the myth: a patchwork of expert mentions or occasional thought leadership wins recognition.

In reality, AI conservative answer logic dismisses fragmented authority.

If your citations, bios, or institutional links are unclear or inconsistent – even if you’re well known in parts of the field – AI citation safety logic defaults to omission.

We’ve seen this when clients tried to syndicate insights across multiple microsites.

The result: only the claims resting on a unified, consistently structured author trail survived the exclusion of unsupported statements.

Everything else, no matter how insightful, didn’t register as reliable.

It’s like trying to piece together a jigsaw puzzle when half the edge pieces are missing.

The system won’t fill gaps – it simply skips the incomplete.

This is not just technical; it’s about trust.

If your brand’s expertise isn’t mapped in a way the AI can verify, omission is the result.

Explicit, structured authority is the new barrier for inclusion – fragmented signals fail the AI evidence gates every time.
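
A simple hygiene check can show where an author trail is fragmented before any AI system sees it. The Python sketch below is a hypothetical audit helper, not a model of how AI evaluates authority: the record fields (`site`, `name`, `affiliation`) and the sample data are assumptions made for illustration.

```python
from collections import defaultdict


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(value.lower().split())


def find_inconsistent_authors(records: list[dict]) -> dict:
    """Return authors whose affiliation is written differently across sites."""
    affiliations = defaultdict(set)
    for record in records:
        affiliations[normalize(record["name"])].add(normalize(record["affiliation"]))
    return {
        name: sorted(found)
        for name, found in affiliations.items()
        if len(found) > 1
    }


# Hypothetical bylines collected from three microsites.
records = [
    {"site": "blog.example.com", "name": "Jane Doe", "affiliation": "Example Institute"},
    {"site": "insights.example.com", "name": "jane  doe", "affiliation": "Example Inst."},
    {"site": "news.example.com", "name": "Sam Lee", "affiliation": "Example Institute"},
]
print(find_inconsistent_authors(records))
# → {'jane doe': ['example inst.', 'example institute']}
```

Here the same author appears under two different affiliation strings, which is exactly the kind of inconsistent signal described above. Unifying the bylines is the fix; the script only points to where to look.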

Scientific context and sources

The sources below provide background on verification, authority signals in search, bounded decision-making, and conservative optimization in automated systems.

- AI Safety through Debate

AI Safety via Debate – Geoffrey Irving, Paul Christiano, Dario Amodei – arXiv

Proposes a framework where two AI systems debate opposing claims, with a human selecting the more truthful argument, enabling verification of complex outputs through adversarial scrutiny rather than blind trust.

https://arxiv.org/abs/1805.00899

- Machine Learning Verification

Towards Robust Interpretability with Self-Explaining Neural Networks – David Alvarez-Melis, Tommi Jaakkola – NeurIPS

Introduces models that generate intrinsic explanations alongside predictions, ensuring outputs remain verifiable and aligned with interpretable internal logic rather than post-hoc justification.

https://arxiv.org/abs/1806.07538

- Trust and Authority in Search

The PageRank Citation Ranking: Bringing Order to the Web – Lawrence Page, Sergey Brin, Rajeev Motwani, Terry Winograd – Stanford InfoLab

Defines how authority emerges from link structure and citation signals, forming the foundation of trust and ranking mechanisms used in search systems and later AI retrieval pipelines.

http://ilpubs.stanford.edu:8090/422/1/1999-66.pdf

- Cognitive Constraints in Information Processing

Information-Theoretic Bounded Rationality – Pedro A. Ortega, Daniel A. Braun et al. – arXiv

Formalizes decision-making under limited information-processing capacity, showing how agents optimize behavior subject to computational and informational constraints, which leads to selective evidence use rather than exhaustive evaluation.

https://arxiv.org/abs/1512.06789

- Conservatism in Automated Systems

Distributionally Robust Optimization: A Review – Hamed Rahimian, Sanjay Mehrotra – Annual Review of Statistics and Its Application / arXiv

Explains how decision systems explicitly incorporate worst-case risk into optimization, leading to conservative strategies that avoid uncertain or poorly supported outcomes in favor of safer, more reliable choices.

https://arxiv.org/abs/1908.05659

Questions You Might Ponder

What is unsupported claim omission in AI search?

Unsupported claim omission refers to an AI’s systematic refusal to display statements unless they can be directly traced to reliable, citable evidence. This mechanism protects users from unverifiable or risky information by excluding even true but uncited facts.

Why does AI search sometimes skip well-known facts?

AI search skips well-known facts when those claims lack explicit, verifiable citations. This is a deliberate safety measure – AI ranks citation safety above completeness to ensure only provable information is surfaced, not just what is commonly assumed.

How does omission logic impact business decision-making with AI?

Omission logic means AI may leave out important but unsupported insights, leading executives to perceive gaps. While this can seem limiting, it protects decisions by holding every statement to documented evidence standards, preventing reliance on speculation or incomplete data.

Can an expert’s authority override unsupported claim omission?

No. In AI systems, expertise alone does not guarantee inclusion. Claims must be tied to structured, citable, and consistently recognized authority; otherwise, even statements from well-known experts will be omitted if evidence is lacking.

How can organizations ensure their insights are surfaced by AI search?

Organizations should map all key insights to structured, citable sources using metadata, clear authority profiles, and traceable documentation. The better the documentation and entity linking, the more likely AI systems will include these insights in responses.