What You’ll Learn

trust propagation

Key Takeaways

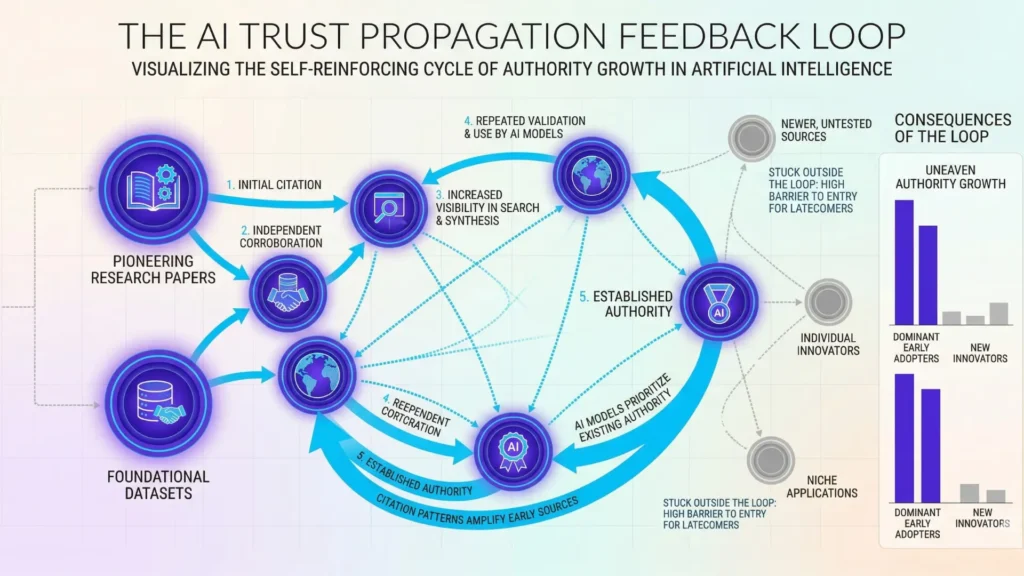

- Trust propagation in AI systems is shaped primarily by early exposure and feedback loops, locking in initial authority advantages.

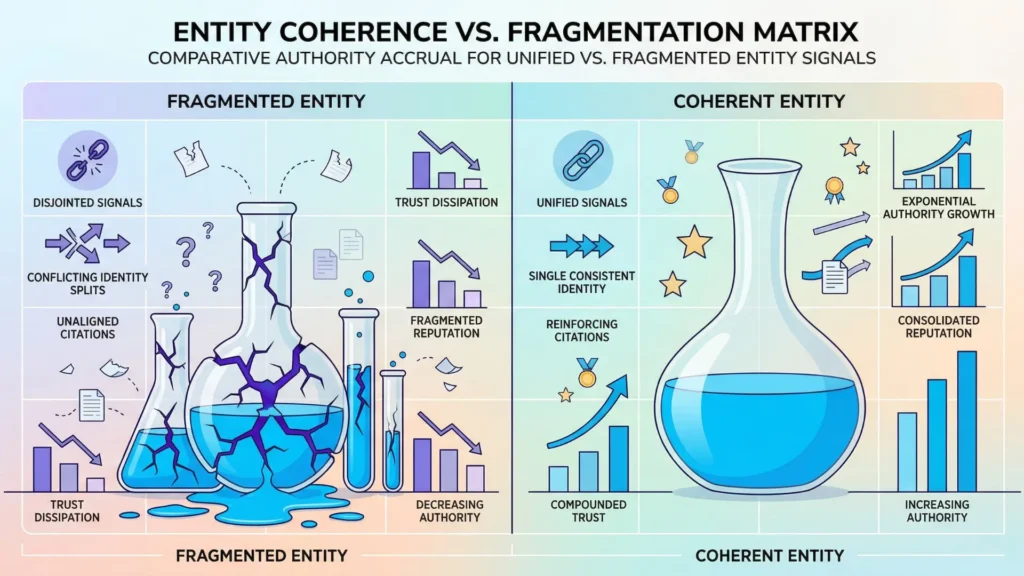

- Entity fragmentation – through inconsistent names and contexts – greatly hinders authority growth, slowing effective trust propagation.

- Authority growth in AI is a slow, recursive process driven by consistent, cross-source citation and network reinforcement, not quality alone.

- Regulated industries face even slower authority propagation due to heightened requirements for evidentiary rigor and safe citation.

Imagine if only five people were allowed to nominate the “most trustworthy” expert in every field.

What happens when every AI system starts with the same small handful of citations?

This isn’t just a thought experiment – AI search and knowledge systems frequently give outsized weight to early, narrow retrieval windows, in which only a limited set of sources is cited or included.

Why authority grows unevenly in AI systems

Narrow retrieval windows and seed exposure

Here’s what most executives don’t realize: no matter how authoritative your content is, if it doesn’t appear in those very first rounds of AI selection, your chance of being recognized as a trusted source can remain stubbornly low for months, sometimes years.

We’ve seen client sites with 10x the depth and credibility of their competitors remain invisible in entity-based AI search, purely because the original exposure window passed them by.

The “seed exposure” problem creates a feedback loop.

Early citations teach the system which sources to trust, and that trust quickly multiplies for those initial names.

Everyone else is left outside the glass, even if they’re shouting louder or more accurately.

One surprising analogy: think of it like airport security lines – once the fast lane fills up, no amount of good behavior gets you through unless someone opens a new checkpoint. In most cases, they don’t.
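To make the seed-exposure loop concrete, here is a toy simulation. It assumes a hypothetical retriever that, in each round, cites the top k sources by a score equal to accumulated citations plus a weighted quality term, and that every cited source gains one citation. Sources A, B and C, their quality values, and all parameters are made up for illustration. This is a sketch of a generic rich-get-richer loop, not a model of how any real AI search system selects sources.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str
    quality: float      # hypothetical "true" merit on a 0..1 scale
    citations: int = 0  # accumulated trust signal from past rounds


def run_rounds(sources: list[Source], k: int, rounds: int, quality_weight: float) -> list[str]:
    """Cite the top-k sources each round; each cited source gains one citation.

    Score = citations + quality_weight * quality, so past selection feeds
    future selection. Returns the names cited in the last round.
    """
    cited: list[Source] = []
    for _ in range(rounds):
        ranked = sorted(
            sources,
            key=lambda s: s.citations + quality_weight * s.quality,
            reverse=True,
        )
        cited = ranked[:k]
        for s in cited:
            s.citations += 1
    return [s.name for s in cited]


def simulate(quality_weight: float, k: int = 2, seed_rounds: int = 3, later_rounds: int = 12):
    """A and B exist during the seed window; C (higher quality) arrives afterwards."""
    early = [Source("A", 0.5), Source("B", 0.6)]
    late = Source("C", 0.95)
    run_rounds(early, k, seed_rounds, quality_weight)  # the seed exposure window
    everyone = early + [late]
    final = run_rounds(everyone, k, later_rounds, quality_weight)
    return final, {s.name: s.citations for s in everyone}


final, counts = simulate(quality_weight=1.0)
print(final, counts)  # ['B', 'A'] {'A': 15, 'B': 15, 'C': 0}
```

With a low quality weight, C never gets cited, because its quality edge cannot offset the head start A and B built during the seed window. Raising `quality_weight` to 10.0 lets C break in, but A is then frozen at its seed-window count of 3. Whatever the score favors early still gets locked in.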

Definition: AI trust propagation is the mechanism by which a source’s trustworthiness increases – or stagnates – over time as its entity is repeatedly cited, connected, and corroborated in the entity graph.

Ever wonder why a proven report or brand seems to get ignored by AI-driven rankings while a less credible competitor dominates?

This isn’t randomness – it’s a function of how trust propagates through the entity graph and how limited retrieval cycles keep amplifying early favorites.

Corroboration-driven selection entrenchment

Signals That Reinforce Corroboration and Safe Citation

| Signal | Description | Impact on Authority Growth |
|---|---|---|
| Corroborative Mentions | References to the same entity across multiple trusted sources | Reinforces reliability and trustworthiness |
| Consistency of Name, Context, and Relationship | Uniform use of entity names and roles over time | Prevents fragmentation of authority |
| Repeated Citation Patterns | Frequent, rhythmic mentions of the entity | Enables cumulative trust building |

There’s a persistent belief that quality eventually rises.

What we see in practice says otherwise.

Once AI systems register a set of “corroborated” sources, the selection process doubles down.

We’ve observed even large, reputable brands get locked out of AI source selection simply because the first few rounds of link or mention corroboration didn’t include them – often by pure chance.

This isn’t about content merit alone.

It’s about which names the system can triangulate across multiple early sources.

If initial AI signals repeatedly reinforce the same set of entities, those entities’ authority growth accelerates while new entrants are largely ignored.

In practical terms, think of it as planting trees in cement – the system keeps watering what’s already sprouted, not what’s struggling to break through.

One client’s measurable authority spike came only after a surge in cross-source mentions triggered fresh corroboration in entity-based AI search.

The effect was dramatic but far from instant: in cases like this, the lag between initial mentions and actual authority growth often stretches to five to seven months, far longer than most expect.

The myth: “Publish great work and AI will eventually find you”.

Reality: AI trusts what it learned first, not what’s best.

Can you afford to wait for a second chance at the trust window?

Authority isn’t a fair or automatic process in AI systems. It’s a recursive pattern – early recognition locks in advantages, while everyone else faces a slow climb. The unevenness isn’t a glitch; it’s the outcome of structural feedback loops, waiting for someone bold enough to strategize around them.

Why coherence in entity graphs matters

Fragmented identity vs unified entity presence

Ever wondered why a business with groundbreaking insights gets bypassed while a lesser-known competitor dominates AI discovery? Here’s a seldom-discussed reason: splintered entity identity. It’s not about content quality alone. If your brand name, website, or spokesperson appears differently across channels – even with slight inconsistencies – AI’s entity graph splits your authority into fragments.

We’ve seen teams pour investment into premium articles, yet their mention frequency fails to snowball. Why? Five name formats, two parent company spellings, three spokesperson versions – each one signals “maybe the same, maybe not” to entity-based AI search. The result? Authority never pools; it dissipates. Imagine pouring water into cracked glassware. That’s how trust leaks out, citation by citation.
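A small sketch shows how variant spellings split a mention count and how normalizing them pools the count again. The brand "Acme Analytics", the mention list, the suffix list and the alias map are all hypothetical. Real entity-linking systems resolve variants using far richer context than string rules, so treat this only as an illustration of why the split matters.

```python
import re
from collections import Counter

LEGAL_SUFFIXES = {"inc", "llc", "ltd", "corp"}

# Hypothetical alias map: variants that string rules alone can't join.
ALIASES = {"acmeanalytics": "acme analytics"}


def normalize(name: str) -> str:
    """Collapse case, punctuation, and legal suffixes into one canonical key."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    tokens = [t for t in tokens if t not in LEGAL_SUFFIXES]
    key = " ".join(tokens)
    return ALIASES.get(key, key)


mentions = ["Acme Analytics", "ACME Analytics Inc.", "Acme-Analytics", "AcmeAnalytics", "Acme Analytics"]

raw = Counter(mentions)                           # each spelling counted as its own entity
pooled = Counter(normalize(m) for m in mentions)  # variants pooled under one key

print(raw.most_common(1))  # [('Acme Analytics', 2)]: the largest fragment holds 2 of 5 mentions
print(pooled)              # Counter({'acme analytics': 5})
```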

Here’s a practical case: after one client’s acquisition, the old brand name and the new merged name appeared online interchangeably for months and were never tied together. During that window, their AI visibility flatlined – even as they landed bylines in top publications. Their entity coherence effectively reset to zero.

A common myth? That search AI effortlessly ‘knows’ all variants refer to the same source. In real systems, entity coherence is earned, not granted automatically.

Cross-source name and context linking

Myths and Realities of Authority Growth in AI

| Myth | Reality | Implication for Strategy |
|---|---|---|
| Quality content eventually surfaces automatically | Early corroboration and entity triangulation dominate authority gain | Focus on early, cross-source mentions to seed trust |
| Authority growth is fast once published | A lag of 5–7 months or more is common | Plan for long-term, consistent effort |
| AI trusts the best sources inherently | AI trusts what was learned first, not necessarily the best | Don’t rely on chance; actively manage early exposure |

Diagnostic: Entity Recognition and Repetition

AI systems repeatedly scan for recurring, consistent references to entities across sources and time.

If recognition and repetition aren’t established, authority propagation fails before it starts.

How does trust flow in an AI system?

Imagine an orchestra: each section must play in time, on cue.

If your citations from industry news, LinkedIn, and websites use different names, titles, or job tags, AI hesitates to unite them.

This hesitation creates a trust gap between your content and authority growth; the signals appear scattered, so propagation slows.

We see this when internal teams control some sites while agencies or PR handle the rest.

Each group might tweak language, unaware that these micro-edits fracture the broader entity signal.

One client discovered that simply normalizing job titles across social profiles, press materials, and bios – a change completed in just 30 days – was enough to earn new AI source selection; feedback showed an uptick in their authority in entity-based AI search.

No tool ‘automatically’ fixes these splits, but entity management platforms – think schema-based monitors – can spot gaps quickly.

Imagine your authority as a mosaic: every missing piece is visible to the algorithm, even if readers miss it.
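At its simplest, the kind of gap a schema-based monitor looks for can be sketched as a field-by-field comparison across channels. The channel names, the spokesperson "Dana Reyes", the organization name and the fields below are hypothetical. This is not the API of any particular entity management platform.

```python
def find_inconsistencies(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """For each field, group channels by the value they publish.

    Only fields with more than one distinct value are reported; each one
    marks a point where the entity signal may fracture.
    """
    fields = sorted({field for profile in profiles.values() for field in profile})
    report: dict[str, dict[str, list[str]]] = {}
    for field in fields:
        by_value: dict[str, list[str]] = {}
        for channel, profile in profiles.items():
            if field in profile:
                by_value.setdefault(profile[field].strip(), []).append(channel)
        if len(by_value) > 1:
            report[field] = by_value
    return report


# Hypothetical profiles as published on three channels.
profiles = {
    "website": {"name": "Dana Reyes", "title": "Chief Data Officer", "org": "Acme Analytics"},
    "linkedin": {"name": "Dana Reyes", "title": "CDO", "org": "Acme Analytics"},
    "press": {"name": "Dana M. Reyes", "title": "Chief Data Officer", "org": "Acme Analytics"},
}

for field, variants in find_inconsistencies(profiles).items():
    print(field, variants)
# name {'Dana Reyes': ['website', 'linkedin'], 'Dana M. Reyes': ['press']}
# title {'Chief Data Officer': ['website', 'press'], 'CDO': ['linkedin']}
```

The consistent `org` field is not reported. Only the name and title splits surface, which are the micro-edits described above.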

Is it any wonder that slow authority recognition in AI so often follows fragmented names and broken linkage?

Here’s the upshot: trust propagation through the entity graph depends on a unified presence and coherent signals.

If the foundation is cracked, the skyscraper never rises.

Contrast: Entity Authority vs Keyword Optimization, Authority vs Ranking

| Legacy SEO (Keyword/Ranking) | AI Authority Propagation (Entity-Based) |
|---|---|
| Keywords optimize page rank | Entity links propagate trust |
| Volume boosts traffic | Coherence compounds authority |
| Backlinks drive credibility | Citations reinforce safe recognition |

How trust flows through entity relationships

Neighboring topic reinforcement

Ever noticed how one strong page can pull its weaker siblings up the ranks?

In AI trust propagation, authority doesn’t grow in a vacuum. Instead, it moves across connections – often in surprising directions.

Picture a cluster of related topics: when one node earns recognition for “AI trust propagation authority growth”, its links to fields like “entity graph trust propagation” and “AI source selection authority” become quietly valuable.

Their proximity means that trust, once seeded, nudges sideways as well as upward.

We’ve seen clients score a high-authority feature on a niche subtopic, then watch their broader search presence rise over the following quarter – even where direct mentions were sparse.

It’s not magic.

When adjacent topics reinforce one another, their total footprint expands.

This mirrors the neighbor effect in urban renewal, where one upgraded building boosts local property values simply by adjacency.

But have you ever wondered why one seemingly trivial mention can tip the balance, while years of effort on isolated topics barely register?

The answer lies in how entity-based AI search weighs connections.

Linked signals act as multipliers.

A single, well-connected piece sometimes does more than ten isolated assets scattered across unrelated topics.

Yet the multiplier works only if AI systems can see those links and interpret them correctly.
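The multiplier effect can be illustrated with a personalized-PageRank-style propagation. This is a standard graph technique used here purely as an analogy; the article does not claim that AI search systems use it. Trust is seeded on one topic node and spread along its links. The topic names echo the cluster described above. The graph, the damping factor and the iteration count are assumptions.

```python
def propagate_trust(graph: dict[str, list[str]], seeds: dict[str, float],
                    damping: float = 0.85, iterations: int = 50) -> dict[str, float]:
    """Personalized-PageRank-style power iteration (toy).

    graph: adjacency lists; list each undirected link in both directions.
    seeds: nodes where trust enters (e.g. a high-authority feature) and their weights.
    Each step, a node keeps a (1 - damping) share of its seed weight and receives
    `damping` times each neighbor's score, split evenly across that neighbor's links.
    """
    nodes = list(graph)
    total = sum(seeds.values())
    restart = {n: seeds.get(n, 0.0) / total for n in nodes}
    score = dict(restart)
    for _ in range(iterations):
        nxt = {n: (1 - damping) * restart[n] for n in nodes}
        for n in nodes:
            links = graph[n]
            if links:
                share = damping * score[n] / len(links)
                for m in links:
                    nxt[m] += share
            else:
                # Node with no links: return its mass to the seed distribution.
                for m in nodes:
                    nxt[m] += damping * score[n] * restart[m]
        score = nxt
    return score


graph = {
    "ai trust propagation": ["entity graph trust propagation", "ai source selection authority"],
    "entity graph trust propagation": ["ai trust propagation", "ai source selection authority"],
    "ai source selection authority": ["ai trust propagation", "entity graph trust propagation"],
    "unrelated topic": [],
}
scores = propagate_trust(graph, seeds={"ai trust propagation": 1.0})
for topic, s in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{topic:32s} {s:.3f}")
```

In this toy graph, the seeded topic settles near 0.40 and each linked neighbor near 0.30. The unlinked topic stays at exactly 0: trust moves sideways only where links exist.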

AI systems look for:

- Corroborative mentions across trusted sources

- Consistency of name, context, and relationship

- Demonstrated, repeated citation patterns

- Absence of conflicting or fragmented references
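The four signals in this list can be turned into a rough diagnostic over a set of mentions. The `Mention` fields, the sample data and the metric names are hypothetical, and this is not how any specific AI system scores sources. Read the output as a checklist, not a ranking score.

```python
from collections import Counter
from typing import NamedTuple


class Mention(NamedTuple):
    source: str  # where the mention appeared (publication, platform, ...)
    name: str    # the entity name exactly as written there
    month: int   # month index of publication


def signal_report(mentions: list[Mention]) -> dict[str, float]:
    """Rough proxies for the listed signals (illustrative only)."""
    if not mentions:
        return {"corroborating_sources": 0, "name_consistency": 0.0,
                "active_months": 0, "name_variants": 0}
    names = Counter(m.name for m in mentions)
    return {
        # corroboration: how many distinct sources mention the entity
        "corroborating_sources": len({m.source for m in mentions}),
        # consistency: share of mentions that use the most common name
        "name_consistency": names.most_common(1)[0][1] / len(mentions),
        # repetition: how many distinct months carry a mention
        "active_months": len({m.month for m in mentions}),
        # fragmentation: every extra spelling is a potential split
        "name_variants": len(names),
    }


mentions = [
    Mention("trade-journal", "Acme Analytics", 1),
    Mention("linkedin", "Acme Analytics", 2),
    Mention("podcast-notes", "Acme Analytics", 3),
    Mention("press-release", "ACME Analytics Inc.", 3),
]
print(signal_report(mentions))
# {'corroborating_sources': 4, 'name_consistency': 0.75, 'active_months': 3, 'name_variants': 2}
```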

Slow accumulation through repeated patterns

Authority growth in AI visibility rarely arrives in a dramatic spike.

It’s more like watching moss take over a stone – steady, persistent, almost invisible until suddenly everywhere.

Repeated, consistent coverage of aligned topics acts as a rehearsal: each piece making the next more credible in the entity graph.

This is a pattern recognition game, and AI systems reward rhythmic signals over random noise.
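A toy accumulation model shows why rhythm can beat volume. It assumes that trust decays slightly each month (retention 0.9), grows with new mentions, and is subject to a hypothetical per-month cap that discounts bursts. All of these parameters are made up for illustration. Both schedules below deliver the same 12 mentions over a year.

```python
from typing import Optional


def trust_series(monthly_mentions: list[int], retention: float = 0.9,
                 monthly_cap: int = 2) -> list[float]:
    """Toy model: each month, trust = retention * previous trust + new signal.

    monthly_cap is an assumed burst discount: mentions beyond the cap in one
    month add nothing extra.
    """
    trust, series = 0.0, []
    for mentions in monthly_mentions:
        trust = retention * trust + min(mentions, monthly_cap)
        series.append(trust)
    return series


def first_month_above(series: list[float], threshold: float) -> Optional[int]:
    """Return the 1-indexed month in which trust first exceeds the threshold, or None."""
    for month, value in enumerate(series, start=1):
        if value > threshold:
            return month
    return None


steady = [1] * 12        # one aligned piece every month
burst = [12] + [0] * 11  # the same 12 pieces in a single launch month

print(first_month_above(trust_series(steady), threshold=5.0))  # 7
print(first_month_above(trust_series(burst), threshold=5.0))   # None
```

The result depends heavily on the cap assumption. Without it (`monthly_cap=12`), the burst clears the threshold in month 1 and then decays back below it within the year.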

For a B2B client, we mapped their entity signals and pushed topical clusters every month – twelve months in a row.

By month seven, their “authority growth AI visibility” score began to climb, and citations by AI systems grew threefold by year’s end, with zero viral moments.

The result?

Steady, if slow, authority recognition in AI replaced years of stagnation with gradual, compounding gains.

If this feels frustratingly slow, ask: Are you chasing short-term wins, or building the kind of trust that persists?

Contrary to the myth that AI trust signals reset each cycle, true authority is cumulative – and, like good compounding interest, it favors patience and repetition.

Put simply: Trust propagation in AI systems isn’t a one-off event.

It’s a relay, where each baton pass – across topics, months, and patterns – moves authority a little further than the last handoff.

Ready to see how diagnostic evidence construction seals these gains, or will you leave your progress to chance?

What must be understood before acting

Authority growth is systemic, not instant

In AI search, authority growth refers to the cumulative, recursive process by which entities gain trust – measured not by content quality alone but by coherent repetition, corroboration, and network effects across the entity graph.

Did you know that some credible digital sources remain virtually invisible to AI-driven discovery for 18–24 months?

It’s like planting seeds in winter and watching for green shoots long before they appear – logical, but deeply unsatisfying when quarterly goals demand faster outcomes.

Authority growth through AI trust propagation doesn’t depend on surface-level signals alone.

It follows slow, compounding loops built into how trust propagates through the entity graph.

The misconception?

Many teams believe that one-time bursts – like a round of earned media or a quick fix to brand mentions – will bend the authority curve in weeks.

Here’s what happens in practice: we watched a data infrastructure company spend six figures on thought leadership.

Their breakthrough didn’t come until a year passed and a dozen corroborations appeared on neighboring topics.

AI source selection left them in the shadows, simply because their entity was not consistently referenced or reinforced in related content.

Why so slow?

Trust propagation in AI systems works more like water seeping through layers of earth than a running faucet.

Every citation, mention, or contextual alignment adds a thin new layer, not a dramatic rush. Imagine stacking sheets of tracing paper – each pass reveals a slightly clearer outline, but you need many sheets before the image stands out.

It’s tempting to demand a shortcut.

But entity-based AI search rewards patience and persistent coherence more than aggressive bursts.

The real signal?

Consistency across months, not moments.

Does your brand show up, say the same thing, and link to the same concepts, every time?

So: if your internal plan expects instant gains in AI visibility, hit pause.

The only thing that grows fast is frustration.

Every executive wants a lever to pull now.

But entity coherence in AI citation and the slow pace of authority recognition are diagnostics – not quick switches.

Recognizing the pace and interconnectedness is the first move.

Effective interventions rely on accepting this tempo – and reading the real signals beneath the surface.

Scientific context and sources

The sources below provide foundational context on how trust is defined and propagates, how AI systems link and disambiguate entities, and how feedback loops and limited exposure bias algorithmic selection.

- Trust Dynamics and Social Networks

Trust and Distrust Definitions: One Bite at a Time – D. Harrison McKnight, Norman L. Chervany – Springer (Lecture Notes in Computer Science)

Provides a structured framework for trust as a multi-dimensional construct (dispositional, interpersonal, institutional), clarifying how trust propagates across social and organizational networks.

https://link.springer.com/chapter/10.1007/3-540-45547-7_3

- Entity Linking and AI Authority

Neural Entity Linking: A Survey of Models Based on Deep Learning – Özge Sevgili et al. – Semantic Web Journal

Explains how entity linking systems identify, disambiguate, and connect entities using knowledge graphs and embeddings, forming the technical basis for authority attribution in AI systems.

https://journals.sagepub.com/doi/10.3233/SW-222986

- Feedback Loops and Selection Bias in Algorithms

Feedback Loop and Bias Amplification in Recommender Systems – Masoud Mansoury, Himan Abdollahpouri, Mykola Pechenizkiy, Bamshad Mobasher, Robin Burke – ACM International Conference on Information and Knowledge Management (CIKM)

Shows how recommendation algorithms create iterative feedback loops where user interactions reinforce initial biases, leading to reduced diversity and systematic overexposure of already popular items.

https://dl.acm.org/doi/10.1145/3340531.3412152

- Decision-Making Under Visibility Constraints

Correcting Exposure Bias for Link Recommendation – Shantanu Gupta, Hao Wang, Zachary Lipton, Yuyang Wang – Proceedings of Machine Learning Research (ICML)

Analyzes how limited exposure to information creates selection bias in decision systems, showing that visibility constraints distort observed relevance and propagate biased outcomes over time.

https://proceedings.mlr.press/v139/gupta21c.html

- Knowledge Graph Authority and Propagation

Knowledge Graphs: Methodology, Tools and Selected Use Cases – Dieter Fensel et al. – Springer

Offers in-depth technical background on entity graphs, coherence, and how structured linkage affects propagation, trust, and selection in modern AI.

https://link.springer.com/book/10.1007/978-3-030-37439-6

Questions You Might Ponder

Why does trust propagation in AI favor early sources?

AI systems disproportionately trust sources cited during initial retrieval cycles. Early exposure becomes “seed” authority – later, repeated citations reinforce these, often marginalizing even more credible but later entrants. This entrenches authority unevenly, shaping long-term AI rankings and visibility.

How do fragmented entity signals hurt AI authority growth?

When brand names, spellings, or spokesperson titles vary across channels, AI splits recognition across multiple entities. This fragmentation prevents authority from consolidating, causing slower trust propagation and reduced visibility – even for brands publishing high-quality content consistently.

What’s the difference between keyword optimization and entity-based trust propagation?

Keyword optimization targets page rank by maximizing term frequency, while entity-based trust propagation relies on coherent, repeated citation of entities. The latter builds compounded authority through network effects, not just keyword volume, shaping long-term AI-driven discoverability.

Why is authority growth slow in regulated industries?

AI systems in regulated sectors (healthcare, finance, etc.) adopt stricter citation and corroboration requirements, slowing trust propagation. High evidentiary standards mean that even coherent brands face prolonged lag times before gaining recognition in AI search and recommendation algorithms.

Can rapid content bursts accelerate trust propagation in AI?

No – authority in entity-based AI systems relies on consistent, repeated signals across sources and time. One-off bursts of content rarely shift entrenched trust dynamics; instead, sustained patterns and cross-source corroboration are needed to drive measurable growth in AI authority scores.