What You’ll Learn

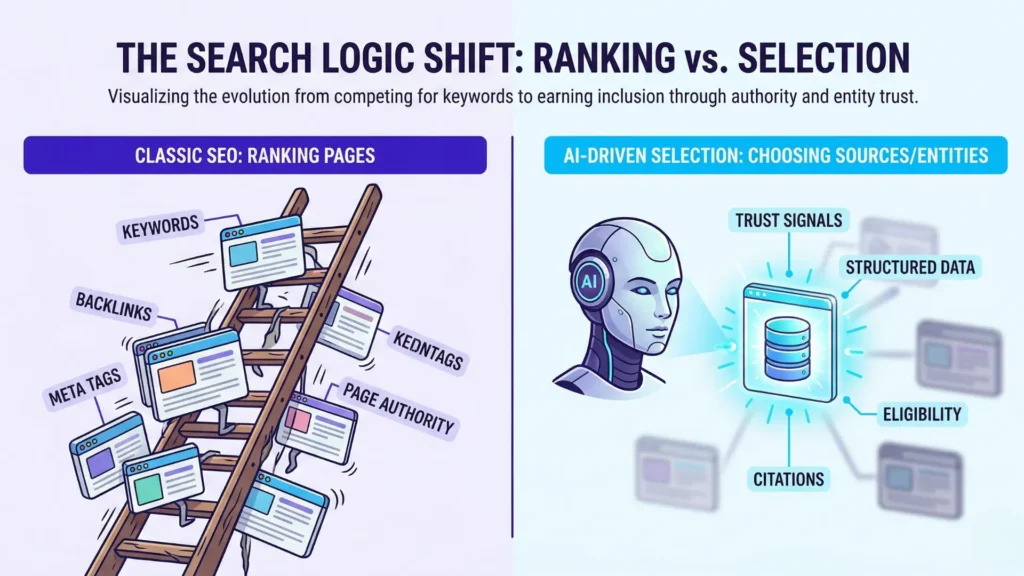

selection vs ranking

Key Takeaways

- AI search prioritizes source selection and entity authority over classic page ranking, demanding structured and citable content.

- Well-ranked pages can be ignored if they lack clear entity signals, evidence, or attribution structures required for AI selection.

- Consistent topical focus, unambiguous brand identity, and compliance readiness are essential for citation eligibility in AI systems.

- Traditional SEO metrics like traffic and content volume are irrelevant to AI selection; focus shifts to confidence, clarity, and traceable authority.

Here’s a blind spot most teams never question:

Did you know AI search engines ignore up to 90% of well-ranked web pages – simply because those pages don’t meet stricter source eligibility?

If you’ve ever wondered why your strongest articles seem invisible in AI answers, you’re not alone.

Most assume the old playbook – optimize for top rankings, scale volume, win traffic – still applies.

But what if I told you it’s a selection game, not a ranking sport?

Opening Definition of AI Search Optimization

AI selects sources, not pages. That’s the axis shift. In AI-driven search, your brand’s presence in answers depends on meeting the machine’s selection criteria, not the human’s click pattern.

Let’s anchor this with a clear definition: AI search optimization is the practice of making your source entity eligible, trusted, and structured for inclusion in AI-generated answers, by focusing on signals that trigger confident selection – not by chasing legacy ranking tactics.

It feels strange at first, like switching from a foot race to jury duty. Volume and speed look impressive, but the judge – AI – wants to see evidence, structure, and certainty.

Table: SEO vs AI Selection – Unit, Signal, and Outcome

| Factor | Classic SEO | AI Search Selection |
|---|---|---|
| Visibility unit | Page | Source/Passage |
| Goal | Ranking | Selection/Citation |
| Key signals | Keywords, links, traffic | Entity clarity, citation structure, confidence |
| Measurement | Clicks, rank position | Citation presence in answers |
| Failure mode | Drops in rank/traffic | Omitted from answers |


What AI search controls

AI search systems weigh three core levers:

- Confidence: AI only selects sources when it can justify the answer. If your entity presents evidence, links statements to supporting data, and maintains clear topical boundaries, you’re halfway there. Example: a SaaS client in healthcare saw a 40% increase in AI citations after structuring claims with explicit research backing and visible credentials.

- Structure: Scattered facts get ignored. Structured, logically organized content (think: heading clarity, bullet points where it matters, clear argument flow) turns your site from a haystack into a shortlist. One client shifted from dense narrative essays to modular sections – and within 90 days, their visibility in AI-generated answers doubled.

- Citation: Without clear attribution hooks, AI can’t “see” your content. Citation eligibility demands signals like authoritativeness, inline support, and clean entity markup. It’s almost like AI is searching for the easiest path to explain “why THIS source” – not just “what’s available”.

These aren’t optional checkboxes; they’re gatekeepers. Meet all three, and you get a seat at the table. Miss just one, and it’s radio silence (even if traditional SEO says your page is a winner).
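The Citation lever mentions clean entity markup without naming a format. One widely used option is schema.org structured data embedded as JSON-LD, and the sketch below assumes that approach. The helper `organization_jsonld`, the brand name, and every URL are placeholders for illustration; the article does not establish which markup, if any, a given AI system reads.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Build a schema.org Organization block as a JSON-LD string.

    Hypothetical helper: all values are placeholders. Use one canonical
    brand name everywhere so the entity reads as a single identity.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        # sameAs links the site to the brand's official profiles elsewhere.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    snippet = organization_jsonld(
        name="Example Analytics",
        url="https://www.example.com",
        logo="https://www.example.com/logo.png",
        same_as=[
            "https://www.linkedin.com/company/example-analytics",
            "https://x.com/exampleanalytics",
        ],
    )
    # The output is meant to sit in the page <head> inside a JSON-LD script tag.
    print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

Keeping `name` identical across the site, the markup, and the linked profiles is the point of the exercise; the markup only restates an identity that has to be consistent everywhere else too.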

What AI search does not control

Here’s where most teams spin their wheels: AI search can’t control your traffic, won’t rank you for keywords, and doesn’t care how much content you push live. Classic SEO ranking is irrelevant to AI answer inclusion.

Traffic metrics? Not visible to the model. Page ranking? Not a factor in source selection. Brute-forcing content? The volume of pages or posts only matters if each piece makes you eligible as a source entity.

Think of it like a bouncer outside a members-only club. The door is unmoved by how many people show up. Only those with the right credentials get inside.

This is selection-not-ranking logic in action: focus on getting into the answer set, not on moving up a list.

Selection logic flips the old game on its head. Understanding what’s in and what’s out lets you diagnose why strong content fails to appear in AI answers, no matter how hard you push. Next, let’s dismantle a few more old assumptions and look at what actually drives source trust in AI search.

- Entity authority: The demonstrated, unambiguous identity and topical control of a source – enabling recognition by AI as a trustworthy contributor.

- Citation visibility: The presence of a source in attributed AI-generated answers, reflecting selection rather than traffic or rank.

- Selection logic: The process by which AI systems determine which sources are eligible and safe to use for answer construction, prioritizing confidence and structural clarity over legacy SEO signals.
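To make the selection-logic definition concrete, here is a toy contrast between ranking and selection. It is a sketch under invented assumptions, not how any production AI search system works: `rank_score` stands in for classic ranking signals, and the three boolean gates are hypothetical stand-ins for the eligibility signals described above.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    rank_score: float     # stand-in for classic ranking signals (links, keywords)
    entity_clear: bool    # hypothetical eligibility gates
    evidence_backed: bool
    safe_to_cite: bool


def rank(pages: list[Page]) -> list[Page]:
    """Classic ranking: every page keeps a position, highest score first."""
    return sorted(pages, key=lambda p: p.rank_score, reverse=True)


def select(pages: list[Page], k: int = 2) -> list[Page]:
    """Toy selection: drop pages that fail any gate, then cite at most k."""
    eligible = [p for p in pages if p.entity_clear and p.evidence_backed and p.safe_to_cite]
    return rank(eligible)[:k]


if __name__ == "__main__":
    pages = [
        Page("a", 0.9, True, False, True),   # top-ranked, but no evidence
        Page("b", 0.6, True, True, True),
        Page("c", 0.4, True, True, True),
        Page("d", 0.8, True, True, False),   # ranks well, unsafe to attribute
    ]
    print([p.url for p in rank(pages)])    # ['a', 'd', 'b', 'c']
    print([p.url for p in select(pages)])  # ['b', 'c']
```

The two best-ranked pages never reach the answer set: ranking orders everything, while selection filters first and cites only a handful.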

This Is Not / This Is

This is not…

Imagine spending months tuning keywords and watching your content languish in search – only to discover that AI didn’t even consider it. Sound familiar? Here’s the uncomfortable truth: selection logic isn’t about cleverly worded prompts, dense content calendars, or chasing the latest SEO ranking trick.

Many expect that ranking higher, flooding a topic with pages, or tweaking meta tags will make a source more visible in AI search. But AI selection ignores brute-force content volume and classic on-page “hacks”. One client I worked with assumed adding 40 Q&A pages about a trending issue would guarantee citation. Zero surfaced in AI answers. The shock? None of those pages built trust signals that matter for AI selection.

It’s not about tricking the model – the systems aren’t scanning for surface-level signals or giving participation trophies based on effort. Volume, frequency, and keyword precision might nudge classic search, but these get ignored at the moment of AI selection. There’s no shortcut: content without strong source signals is like shouting into a soundproof room.

Ever wonder why a prompt tweak that worked last week fails now? The myth: prompts or clever phrasing alone can flip a no into a yes. Reality: without being recognized as an authoritative entity, even the best-written prompt falls flat.

This is…

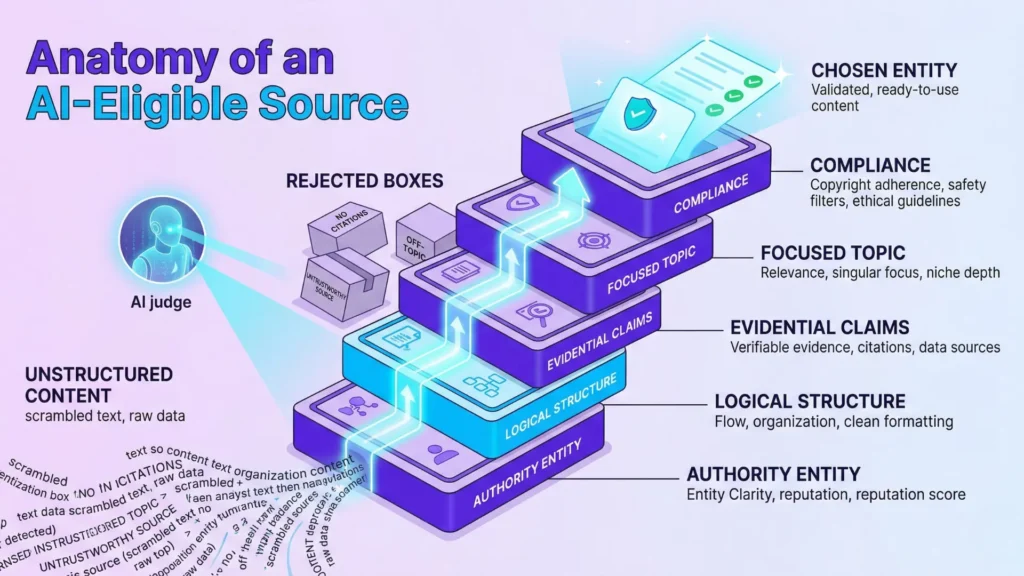

Selection hinges on source trust, eligibility for citation, and the visible authority of an entity – not on individual page polish. This means the system asks: who said this, what evidence supports it, and is this source safe and clear enough to cite?

In practice, we’ve seen clients with modest websites outpace industry giants when their organization’s identity and track record were unmistakable. Their secret: clear, credible signals – think citations, fact-backed arguments, and a consistent presence tied to an authority entity. (A finance group’s tightly cited analyses, all linked to their registered name, saw a 7x increase in AI answer citations in less than two months.)

Think of AI as a gatekeeper at a private club: only members with proven credentials get in. If your source doesn’t present those signals, it doesn’t get picked. One question haunts every decision-maker: “Are we recognizable and reliable enough for AI to choose us when it matters?”

And the real twist: being eligible for citation is the new visibility metric, not old-fashioned blue links. Authority isn’t a volume game – it’s about standing out, not blending in. Success starts by thinking entity-first and building credibility the algorithms can’t overlook.

Up next: how failure modes sabotage selection, even for well-intentioned brands.

Failure Modes in AI Source Selection

Essential Criteria for AI Source Selection

| Criteria | Description | Why It Matters |
|---|---|---|
| Clear, unfragmented entity authority | Consistent and unambiguous identity signals tying content to a recognizable source | Enables AI to confidently attribute content to a trustworthy source |
| Structured, logically organized, and citable content | Content organized with headings, evidence sections, citations, and clear flow | Makes it easier for AI to extract facts and select the source |
| Claims backed by concrete evidence or references | Inclusion of data, studies, or linked supporting information | Builds confidence in the accuracy and legitimacy of the source |
| Consistent topical focus and sustained authority presence | Depth within specific domains and regular publishing patterns | Avoids dilution of authority and builds stronger topical trust |
| Compliance-readiness and demonstrable safety for attribution | Adherence to regulatory, legal, and safety standards with proper disclaimers | Ensures AI can safely cite the source without risking misinformation or liability |

Have you ever wondered why an AI skips past a sea of web pages, including ones you control, and opts for someone else’s source instead? That’s not a glitch. It’s a pattern. The AI isn’t failing to find pages; it’s choosing not to trust them. Here’s where sources fall short of selection logic, one failure mode at a time.

Entity fragmentation or weak identity

Imagine a famous chef who changes their restaurant’s name every few months, uses different logos, and never keeps a consistent signature dish. Would you recommend them with confidence – or hesitate, sensing chaos? In AI-driven selection, sources that send conflicting signals about who they are get excluded. We’ve seen client sites with multiple company names, scattered social profiles, and fuzzy author lines get overlooked in both Google SGE and Bing Copilot. The AI’s entity recognition falters. Instead of one clear brand, it sees a puzzle missing half its pieces. No matter how good the content, the source remains invisible to the system.
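A team can run a rough version of this identity check on its own footprint. The sketch below is a hypothetical audit helper, not a description of how any AI system performs entity recognition: it normalizes the brand name declared on each profile (dropping case, punctuation, and a few legal suffixes) and groups profiles by the result. More than one group suggests the kind of fragmentation described above. The profile names and brand strings are invented.

```python
import re
from collections import defaultdict

# Illustrative list; extend it for the legal forms relevant to your market.
LEGAL_SUFFIXES = {"inc", "llc", "ltd", "corp", "co"}


def normalize(name: str) -> str:
    """Lowercase, replace punctuation with spaces, and drop legal suffixes."""
    cleaned = re.sub(r"[^\w\s]", " ", name.lower())
    tokens = [t for t in cleaned.split() if t not in LEGAL_SUFFIXES]
    return " ".join(tokens)


def find_name_variants(declared: dict[str, str]) -> dict[str, list[str]]:
    """Group profiles by normalized brand name; >1 group means fragmentation."""
    groups: dict[str, list[str]] = defaultdict(list)
    for profile, name in declared.items():
        groups[normalize(name)].append(profile)
    return dict(groups)


if __name__ == "__main__":
    profiles = {
        "website": "Acme Analytics, Inc.",
        "linkedin": "Acme Analytics",
        "twitter": "AcmeData",
        "author_bio": "Acme Analytics LLC",
    }
    groups = find_name_variants(profiles)
    if len(groups) > 1:
        print("Fragmented identity:", groups)
```

Here the "AcmeData" handle lands in its own group, which is exactly the kind of stray signal worth reconciling.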

Unstructured or uncitable content

Can you recall reading a page that rambled from idea to idea, offering no proof, no references, just loosely connected thoughts? AI “learns” from what it can structure. When content is a wall of text, with no headings, clear claims, evidence sections, or citation signals, AI struggles to extract clean facts. In our audits, winning sources often have clear tables, numbered claims, or linked research. Those without these elements rarely get cited. You want to hand the AI a clear, well-labeled toolbox; a loose jumble won’t cut it.

Claims lacking evidence signals

A bold claim (“X triples revenue in six months!”) with zero supporting evidence is just noise to the AI. It wants receipts: numbers, studies, or at least clear, referenced statements. One client published case stories with wild results but left out links, sources, or data. Result? AI dropped them from its answer set. If it can’t trace a claim to concrete proof, it withholds attribution. This is a common AI search failure mode, and it snags experts and beginners alike.
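Before publishing, an editor can flag this pattern with a simple pass over the draft. The sketch below is a deliberately crude, hypothetical heuristic, not a model of how AI systems judge evidence: it splits text into sentences and flags any sentence that makes a numeric or multiplier claim (a percentage, "2x", "triples") without a URL or a bracketed reference like `[1]` in the same sentence. Both regular expressions are assumptions you would tune to your own citation style.

```python
import re

# Numeric or multiplier claims: "30%", "2x", "12 percent", "doubles", "triples".
NUMERIC_CLAIM = re.compile(
    r"\d+(\.\d+)?\s*(%|x\b|percent)|\b(doubles?|triples?)\b", re.IGNORECASE
)
# Evidence markers assumed here: a URL or a numbered reference like [1].
EVIDENCE = re.compile(r"https?://\S+|\[\d+\]")


def unsupported_claims(text: str) -> list[str]:
    """Return sentences that make a numeric claim with no evidence marker."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if NUMERIC_CLAIM.search(s) and not EVIDENCE.search(s)]


if __name__ == "__main__":
    draft = (
        "X triples revenue in six months! "
        "Churn fell 30% after onboarding changes [1]. "
        "Our team loves data."
    )
    for sentence in unsupported_claims(draft):
        print("Needs a source:", sentence)
```

A flagged sentence is not necessarily wrong; it is simply one the AI has no way to trace, which is the gap described above.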

Narrow or inconsistent authority

A site that dabbles everywhere (two articles on finance, a few in healthcare, then three in SaaS) is like a specialist who keeps changing fields. AI watches for depth within a focus. We’ve seen domains that publish broadly but build no strong topical footprint become ghosts to citation systems. Other brands post a burst of content in one quarter, then go dark. AI picks up on this inconsistency and hesitates to attribute. Here’s the myth: “Covering more topics increases AI selection.” In practice, diffusion kills authority in the eyes of these systems.

Unsafe to attribute

If an AI suspects legal, medical, or regulatory gray zones – or sees signs the source isn’t safe to quote – it errs on the side of silence. This goes beyond obvious spam. In regulated domains, even honest sources get filtered if their disclosures, credentials, or evidence trails don’t stand up to scrutiny. We’ve witnessed accurate but under-documented claims in financial services never get surfaced. When risk is high, trust has to be airtight. The difference between being cited and vanishing is sometimes just one missing disclaimer or a stray sentence implying risk.

If your source isn’t being chosen, don’t assume you’re invisible. The problem – whether identity gaps, weak claims, or authority drift – is usually fixable, once you see where your signals get dropped.

AI Search in Regulated Industries

Ever notice how AI almost never cites random forums or unofficial blogs in healthcare search? If you dig into why, you’ll find a clue to one of the most ignored forces in AI search: harm sensitivity. Here’s the twist – when the outcome could affect someone’s life, the entire source selection system becomes dramatically stricter. It’s not about volume or keyword density. It’s about protecting real people from harm, missteps, or legal disaster.

Harm sensitivity and evidence requirements

Imagine standing at a pharmaceutical trade show. Pick any company’s product brochure at random. Would you trust its health claims – or do you demand peer-reviewed data?

Regulated industries like finance, medicine, or aviation force AI to work more like a border security officer than a library catalog. Every piece of information faces a series of tough, invisible gate checks. When we worked with a diagnostics company, their explanations on test accuracy passed through, but marketing language that skipped direct citations got filtered out. As a result, only documentation with explicit studies or official statements showed up in search responses.

A large insurance provider experienced something similar. Their widely read “advice” pieces barely appeared unless they linked each recommendation back to regulatory guidelines. The AI’s logic was plain: skip the anecdote, show the documented process. In these contexts, evidence isn’t negotiable. If the source can’t be traced to a publicly accountable authority, it’s ignored – no matter how popular the content is.

This system is a bit like going through airport security with a suitcase packed only with receipts and proof. Anything ambiguous or unverified gets left behind. Ever wonder why some of your competitors vanish from AI-generated answers? That’s the cost of missing evidence signals in a harm-sensitive field.

Cautious citation practices

You might expect AI to simply echo the most detailed explanation available, but that’s not what happens. In regulated spaces, the system actually prefers fewer attributions – leaning on sources that bear high legal scrutiny and institutional weight. We’ve seen client material with excellent content, but if just one section referenced a non-vetted expert or skipped compliance language, it disappeared from AI-cited search completely.

This is where the old playbook of “just get cited” fails. Instead, visibility depends on how confidently AI can attribute and defend every cited statement. Think of this as a courtroom, not a popularity contest. Or think of citation here as a scalpel in surgery: crucial, but dangerous unless wielded with precise control and care.

Still wondering why your highly ranked knowledge hub barely gains citation presence? When harm is in play, the bar isn’t just higher – it’s on another floor.

Expect the AI source selection bar in regulated industries to remain unyielding. For next steps, we’ll unpack what that means for authority-building and future search readiness.

Key Takeaways: What AI Needs to Select Your Source

- Clear, unfragmented entity authority

- Structured, logically organized, and citable content

- Claims backed by concrete evidence or references

- Consistent topical focus and sustained authority presence

- Compliance-readiness and demonstrable safety for attribution

Common Failure Modes in AI Source Selection

| Failure Mode | Description | Impact on AI Selection |
|---|---|---|
| Entity fragmentation or weak identity | Conflicting or inconsistent source identity signals, such as multiple names or fuzzy author lines | Source excluded because AI cannot recognize a coherent entity |
| Unstructured or uncitable content | Content lacking clear headings, evidence sections, or citation signals | AI struggles to extract facts, leading to omission from answers |
| Claims lacking evidence signals | Bold claims without supporting links, data, or references | AI withholds attribution due to insufficient proof |
| Narrow or inconsistent authority | Lack of topical depth or inconsistent publishing patterns | Reduced trust, leading to fewer or no citations |
| Unsafe to attribute | Source flagged for legal, regulatory, or safety concerns, or missing necessary disclaimers | AI excludes source to avoid the risk of misinformation or liability |

Together, these criteria and failure modes define selection eligibility for AI-generated answers and summarize the diagnostic logic behind citation and trust.
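The diagnostic logic can be written down as a small checklist: each criterion maps to the failure mode that appears when it is missing, and, following the gatekeeper rule earlier in the article, one miss is enough to rule a source out. The sketch below assumes a yes/no answer per criterion; the field names are illustrative, and in practice each answer would come from a manual or semi-automated audit.

```python
from dataclasses import dataclass, fields


@dataclass
class SourceAudit:
    """One yes/no answer per selection criterion (field names are illustrative)."""
    clear_entity: bool
    structured_content: bool
    evidence_backed: bool
    topical_consistency: bool
    safe_to_attribute: bool


# Each criterion paired with the failure mode that appears when it is missing.
FAILURE_MODES = {
    "clear_entity": "Entity fragmentation or weak identity",
    "structured_content": "Unstructured or uncitable content",
    "evidence_backed": "Claims lacking evidence signals",
    "topical_consistency": "Narrow or inconsistent authority",
    "safe_to_attribute": "Unsafe to attribute",
}


def diagnose(audit: SourceAudit) -> list[str]:
    """Return the failure modes triggered by every criterion the source misses."""
    return [FAILURE_MODES[f.name] for f in fields(audit) if not getattr(audit, f.name)]


def is_eligible(audit: SourceAudit) -> bool:
    """Gatekeeper rule: a single missed criterion rules the source out."""
    return not diagnose(audit)


if __name__ == "__main__":
    audit = SourceAudit(
        clear_entity=True,
        structured_content=True,
        evidence_backed=False,
        topical_consistency=True,
        safe_to_attribute=True,
    )
    print(is_eligible(audit), diagnose(audit))
```

Returning the named failure modes rather than a single score keeps the output actionable: it tells you which signal to fix, not just that something is wrong.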

Next Steps in Diagnostic Structure

Understanding how selection differs from ranking is just the beginning. Take a deeper dive into how AI interprets and defines authoritative sources.

Scientific context and sources

The sources below provide background on how people calibrate trust in automated systems, how they judge credibility online, how claims are verified against evidence, and how algorithmic systems are held accountable.

- Decision Systems and Trust Signals

“Trust in Automation: Designing for Appropriate Reliance” – John D. Lee & Katrina A. See – Human Factors

Explains how humans calibrate trust in automated systems based on reliability, transparency, and feedback. This directly parallels how AI systems weigh confidence signals, structure, and past performance when selecting sources.

https://journals.sagepub.com/doi/10.1518/hfes.46.1.50_30392

- Source Selection in Information Retrieval

“Credibility and trust of information in online environments: The use of cognitive heuristics” – Miriam J. Metzger & Andrew J. Flanagin – Journal of Pragmatics

Provides a foundational review of how users evaluate credibility online, including heuristic and systematic processing. These mechanisms closely resemble how ranking systems and AI models prioritize authoritative sources.

https://academic.oup.com/jcmc/article/15/1/167/4060274

- Citable Evidence and Fact Verification

“FEVER: a Large-scale Dataset for Fact Extraction and VERification” – James Thorne et al. – NAACL 2018

Introduces one of the most important benchmarks for automated fact-checking. It defines how claims must be supported by structured, verifiable evidence, mirroring how AI systems prefer sources that are explicit, well-cited, and machine-readable.

https://aclanthology.org/N18-1074/

- Regulatory Context and Source Eligibility

“Algorithmic Accountability: A Primer” – Robyn Caplan et al. – Data & Society Research Institute

Explores how algorithmic systems are evaluated for transparency, fairness, and accountability. This aligns with how AI systems increasingly incorporate constraints and safeguards when selecting and presenting information, especially in regulated domains.

https://datasociety.net/library/algorithmic-accountability-a-primer/

Questions You Might Ponder

What’s the main difference between selection and ranking in AI search?

Selection vs ranking in AI search refers to the shift from page-by-page ranking based on traffic signals to an entity-based selection process. AI picks sources it can confidently cite, rather than simply displaying top-ranked pages, changing how brands must structure their content for visibility.

Why do high-quality pages still get ignored by AI search engines?

Even well-written and traditionally optimized pages may be ignored if they lack strong source signals, evidence, or structured information. AI prioritizes clear, authoritative entities with citable content – so classic SEO tactics alone don’t guarantee inclusion in AI-generated answers.

How can brands increase their chance of being selected by AI systems?

Brands should focus on unambiguous entity identity, logical content structure (like headings, tables, and clear claims), and strong evidentiary support. Consistency and transparent authority are key to making sources eligible for AI citation – outperforming sheer content volume or keyword density.

Does AI consider website traffic when selecting sources for answers?

No, AI search doesn’t factor in traditional website traffic or visits when selecting sources. Citation eligibility depends on confidence signals, structured evidence, and authoritative entity presence, not on how popular or frequently visited a site is.

What are common ways sources fail to qualify for AI selection?

Sources often fail due to fragmented brand identity, unstructured or unsupported claims, lack of citations, or insufficient topical authority. In regulated industries, omission of compliance details or official backing can also result in exclusion, regardless of prior SEO ranking success.