What You’ll Learn


Key Takeaways

- In regulated industries, risk-weighted AI prioritizes trust and citation, omitting any content lacking explicit, auditable authority.

- Popularity and content volume are deprioritized; only highly attributable, institutionally-backed sources are cited in sensitive domains.

- AI’s default caution leads to omission in high-risk queries, with conservative protocols filtering out ambiguous or inadequately sourced material.

- Authority – defined by provenance and regulatory recognition – now determines discoverability and inclusion in AI-driven regulated search results.

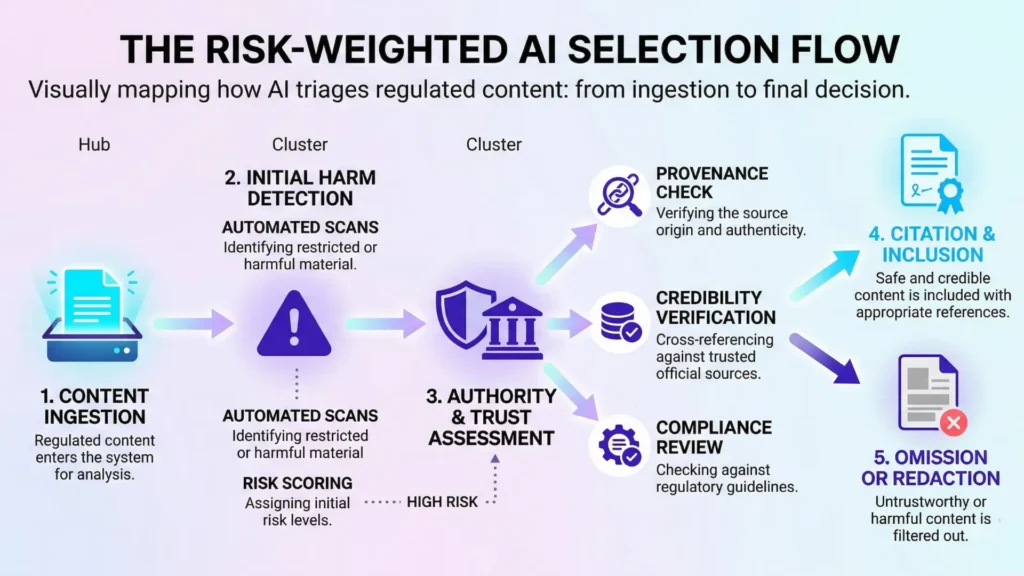

Risk-weighted AI behavior means that in regulated industries, AI systems apply heightened trust and citation standards to prevent harmful or misleading outputs; omission and cautious defaults become standard practice.

Key diagnostic: In regulated sectors, AI asks – ‘Can I safely repeat this as truth?’ Only auditable, fully-attributed sources pass selection. This is the core mental model for risk-weighted AI selection.

How AI Evaluates Topic Risk

Harm Sensitivity Drives Default Omission

If you asked an AI to explain a complex medical procedure but got an oddly blank response, you might wonder: is the system broken, or hyper-aware?

The answer: harm-sensitive silence.

In regulated industries, AI often chooses to say nothing rather than risk amplifying misleading or unsafe information.

Our clients in finance and healthcare have hit this wall.

A national insurer saw their FAQ pages ghosted in generative search results – not because they lacked content, but because one ambiguous claim triggered a conservative omission protocol.

Here’s the disarming part: AI’s default is caution, not risk-taking.

Risk-weighted AI behavior is wired to avoid even the hint of downstream harm, so omission becomes a feature rather than a failure.

A client’s compliance lead once joked, “It’s like a self-driving car slamming the brakes instead of steering into a gray area”.

Ever notice how some direct competitors appear and disappear in AI-powered search packs from one week to the next?

It’s not just an algorithmic lottery.

It’s an invisible triage, where citations and claims are weighed not by volume but by perceived risk.

One ambiguous phrase can make a whole page vanish.

If that feels arbitrary, it’s by design – erring on the side of harm-avoidance.

This myth persists: more content yields more AI surface area.

In high-risk domains, more content often means more ways to disqualify yourself.

Raised Authority Thresholds in Regulated Domains

Risk-Weighted AI Authority Criteria and Their Impact

| Criteria | Description | AI Impact if Not Met |
| --- | --- | --- |
| Explicit, Auditable Attribution | Source has clear, verifiable author and institutional ties. | Content likely omitted or ignored. |
| Topic Regulated or Sensitive | Content falls within finance, healthcare, legal, etc. | Triggers higher AI caution and omission risk. |
| Likelihood of Conservative Omission | AI defaults to silence rather than risk incorrect info. | Non-compliance reduces AI visibility. |
| Structured, Evidence-Based Signals | Content includes formal docs, data, or verified frameworks. | Lack reduces source trustworthiness. |
| Compliance with AI Citation Logic | Aligns content with AI’s harm-sensitive trust rules. | Failure leads to invisibility despite good SEO. |

You’d expect that publishing on a reputable domain would be enough.

Yet, when AI encounters regulated topics, the bar quietly rises.

It doesn’t just want reputable; it demands highly attributable, often structured, sources – think policy PDFs, signed position statements, or evidence summaries with explicit institutional authorship.

In recent work optimizing for risk-weighted AI selection, our team noticed that even large hospital brands lost citations to smaller organizations, simply because those smaller entities published decision trees with explicit attributions.

For AI, the value isn’t in scale or historical reputation, but in precise, authority-based markers: institutional signatures, source documentation, verifiable provenance.

AI’s decision process in high-risk domains applies intensive screening: only content with verified, structured, and attributable authorship is allowed through; all others are held back or omitted entirely.
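The screening described above can be sketched as a simple gate. This is an illustrative model only, not how any particular AI system is implemented; the `Source` fields, the example entries, and the rule that all three properties must hold are assumptions introduced here to make the logic concrete.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    # Hypothetical fields, named after the three properties in the text.
    name: str
    verified: bool      # authorship has been verified
    structured: bool    # published in a structured form (policy PDF, decision tree)
    attributable: bool  # carries explicit institutional authorship


def passes_screen(source: Source) -> bool:
    """Return True only when all three properties hold (assumed rule)."""
    return source.verified and source.structured and source.attributable


def screen(sources: list[Source]) -> list[Source]:
    """Keep sources that pass the gate; everything else is omitted."""
    return [s for s in sources if passes_screen(s)]


# Hypothetical candidates, loosely modeled on the hospital example above.
candidates = [
    Source("Large hospital brand blog", verified=True, structured=False, attributable=False),
    Source("Small clinic decision tree", verified=True, structured=True, attributable=True),
]
selected = [s.name for s in screen(candidates)]
print(selected)
```

Note that the gate is binary in this sketch: a source that misses one property is held back entirely rather than ranked lower.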

How does this reshape your content roadmap?

Ask: “Would a regulator recognize my author and citation style if they landed on it cold?”

If not, visibility in conservative AI results will slip through your fingers, no matter the SEO playbook.

Regulated industries demand risk-weighted authority, not just volume or flair.

Miss that, and your content is likely to vanish before the user ever sees a snippet.

AI’s risk filters aren’t just defensive – they force new rules of play.

And those rules only get stricter as the regulatory pressure grows.

Summary:

- AI defaults to omission in high-risk topics

- Only highly attributable sources qualify

- Caution trumps volume or historical visibility

Why Authority Beats Visibility in Risk‑Sensitive AI Selection

Visibility vs Selection: The New AI Paradigm

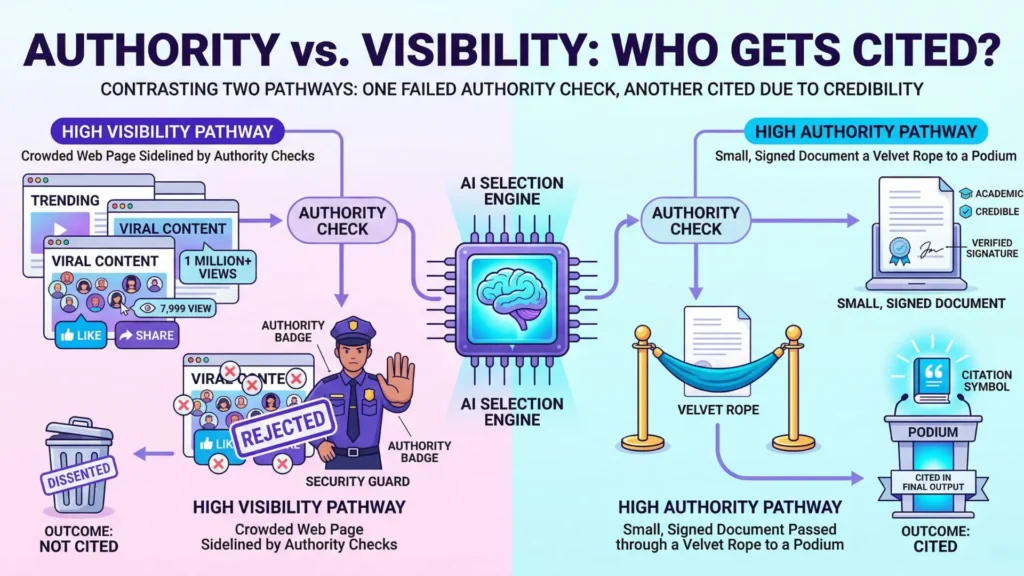

Imagine your top search result, loaded with backlinks and traffic, is invisible to AI when the stakes are high.

Why?

Because risk-weighted AI behavior doesn’t care if a page is “popular” in the classic sense.

It’s not searching for pageviews – it’s searching for trust.

Legacy SEO logic taught us that the more visible or referenced a site is, the more it surfaces – like a well-lit billboard on a crowded freeway.

Yet for AI selection in regulated industries, that billboard analogy falls apart.

For an AI, trust acts as a velvet rope.

Unless a domain reaches authority thresholds in AI source selection (think regulatory bodies, peer-verified medical databases, or official legal repositories), it doesn’t make it past the velvet rope – no matter its visibility metrics.

A B2B fintech client once asked why their high-traffic product guides never showed up in finance-related AI queries.

The answer: insufficiently attributable authorship and no citations from regulatory sources. Just being the most visited doesn’t get you in the room.

The AI quietly passes you by, like an experienced investor ignoring a flashy brochure without an audit trail.

It’s easy to mistake visibility for authority, but that confusion is risky.

Visibility means you’re seen; authority means you’re trusted enough for AI citation in high-risk discussions.

That’s the core pivot. How many times have you wondered why a competitor’s lightweight summary trumps your in-depth page?

The answer is almost always rooted in authority, not page volume or keyword targeting.

Conservative Citation Criteria

Let’s snap back to what AI actually does in high-risk domains.

It behaves less like a search engine and more like a cautious financial auditor.

AI omission in high-risk domains is common because if the system can’t point to a source that satisfies its harm-sensitive citation logic, it simply won’t answer.

When vetting source material for a pharma client, we saw AI cite just three sources – two governmental databases and one top-tier medical publication – even though dozens of relevant pages existed.

Why?

Conservative AI visibility isn’t a myth; it is a measured protocol.

Authority trumps recency, volume, and sometimes even relevance.

Think of AI’s decision process like airport security for information: only those with verifiable credentials (citations from highly trusted, structured sources) are granted access.

The rest wait outside, regardless of crowd size.

Here’s the myth: SEO ‘volume’ guarantees presence in AI summaries.

The reality?

AI’s safe-citation criteria block even well-optimized content if it lacks audit trails, clear authorship, or regulatory signals.

That’s why risk-weighted AI selection looks almost stubbornly conservative from the outside.

But the logic is protective, not punitive – which is exactly what decision-makers need in high-consequence sectors.

Authority redefines discoverability for AI, shifting the goal from being most-seen to being most-trusted. It’s the only ticket past the velvet rope.

When Industry‑Specific Logic Applies

Regulated Industry Risk Blocks

Ever wonder why an AI can name every Mars rover but goes quiet on prescription guidelines?

It’s not a bug – it’s risk-weighted AI behavior at work.

In high-risk domains – think healthcare, finance, legal – the margin for error shrinks to near zero.

That’s where AI selection in regulated industries gets tough.

AI omits answers, even basic ones, if source attribution isn’t bulletproof.

We’ve seen medical queries ignored simply because the citation didn’t tie back to a recognized authority, even if the information was technically accurate.

Only sources with explicit, high-trust attribution are accepted – everyone else is filtered out to protect against downstream harm.

Authority thresholds in AI source selection climb sharply in these sensitive sectors.

Take a digital health campaign as an example.

Even an FAQ won’t appear in AI search unless it’s signed and timestamped by an MD, published in a peer-reviewed journal, or recognized as a regulatory document.

“Mostly right” is treated as “potentially harmful”.

It’s counterintuitive, especially if you’re used to Google’s signals-first mindset.

The harm-sensitive AI citation logic here means that more answers are withheld, not shared.

One of our clients in compliance SaaS was shocked: their top-ranking guide was left out of AI snippets because it lacked explicit, structured citations from government sources.

Yet a lower-traffic page, rich in regulatory links, was picked up.

The paradox?

It’s not about traffic – it’s about attribution strength.

That flipped the client’s entire content brief overnight.

What’s a good analogy?

Picture an airport scanner that lets through only luggage with triple-checked, traceable seals.

Everything else – even if packed properly – gets sidelined.

AI’s safe-citation criteria work the same way in regulated verticals.

Does that mean all your achievements in organic ranking are wasted?

Not exactly, but the rules for AI trust in regulated sectors are different – and, if you ignore them, you’ll watch your SEO footprint shrink in high-risk queries.

AI doesn’t just dial up caution randomly; it applies sector-specific logic to protect end users and build long-term trust.

If you’re in a sensitive vertical, understanding these risk blocks gives you a real edge.

Risk-Weighted AI Selection Checklist

| Factor | Traditional SEO Importance | Risk-Weighted AI Authority Importance |
| --- | --- | --- |
| Domain Reputation | High | Moderate |
| Explicit Authorship | Low | High |
| Structured Sources | Low | High |
| Citation Traceability | Moderate | High |
| Content Volume | High | Low |
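One way to read the table is as two different weightings of the same five factors. The sketch below assumes a purely illustrative numeric mapping (High = 3, Moderate = 2, Low = 1) and two hypothetical page profiles scored from 0 to 1; none of these numbers come from a real ranking system.

```python
# Illustrative only: the numeric mapping and the page profiles are assumptions.
LEVEL = {"High": 3, "Moderate": 2, "Low": 1}

# Importance levels copied from the table above:
# (traditional SEO, risk-weighted AI authority).
FACTORS = {
    "domain_reputation": ("High", "Moderate"),
    "explicit_authorship": ("Low", "High"),
    "structured_sources": ("Low", "High"),
    "citation_traceability": ("Moderate", "High"),
    "content_volume": ("High", "Low"),
}


def score(profile: dict[str, float], column: int) -> float:
    """Weighted sum of a page's 0..1 factor strengths.

    column 0 uses the traditional SEO weights,
    column 1 uses the risk-weighted AI weights.
    """
    return sum(LEVEL[FACTORS[f][column]] * profile[f] for f in FACTORS)


# Hypothetical pages: one strong on reach, one strong on attribution.
high_traffic_guide = {
    "domain_reputation": 1.0, "explicit_authorship": 0.0, "structured_sources": 0.0,
    "citation_traceability": 0.5, "content_volume": 1.0,
}
attributed_summary = {
    "domain_reputation": 0.5, "explicit_authorship": 1.0, "structured_sources": 1.0,
    "citation_traceability": 1.0, "content_volume": 0.0,
}

for name, page in [("high_traffic_guide", high_traffic_guide),
                   ("attributed_summary", attributed_summary)]:
    print(name, "SEO:", score(page, 0), "risk-weighted:", score(page, 1))
```

Under these assumed numbers the high-traffic guide leads on the SEO weighting (7.0 vs 5.5), while the attributed summary leads on the risk-weighted one (10.0 vs 4.5), which mirrors the pattern described throughout this article.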

- Does the source have explicit, auditable attribution?

- Is the topic regulated or sensitive?

- Is conservative omission more likely than citation?

- Does the content provide structured, evidence-based signals?

- If attribution or structured evidence is missing on a regulated topic, AI omission is the default.
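The checklist can be expressed as a small decision function for auditing your own pages. This is a minimal sketch under stated assumptions: the `ContentCheck` fields, the outcome labels, and the decision rule are hypothetical, and the third checklist question (whether omission is more likely than citation) is treated as the result rather than an input.

```python
from dataclasses import dataclass


@dataclass
class ContentCheck:
    # Hypothetical answers to the checklist questions above.
    auditable_attribution: bool
    regulated_topic: bool
    structured_evidence: bool


def likely_outcome(check: ContentCheck) -> str:
    """Map checklist answers to a coarse outcome (assumed decision rule)."""
    if not check.regulated_topic:
        # Outside regulated topics, the stricter gate is assumed not to apply.
        return "standard selection"
    if check.auditable_attribution and check.structured_evidence:
        return "eligible for citation"
    return "omitted by default"


# Example: a regulated-topic FAQ with evidence but no auditable author.
faq = ContentCheck(auditable_attribution=False, regulated_topic=True, structured_evidence=True)
print(likely_outcome(faq))
```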

Scientific context and sources

The sources below provide foundational context for how risk regulation, harm avoidance, trust, and attribution shape AI behavior in high-stakes domains.

- Risk, Regulation, and Artificial Intelligence

General-Purpose AI Regulation and the EU AI Act – Oskar J. Gstrein et al. – Internet Policy Review

Analyzes the EU AI Act as a risk-based regulatory framework that imposes stricter obligations on high-risk systems, shifting governance toward proactive control and standardized compliance requirements.

https://policyreview.info/articles/analysis/general-purpose-ai-regulation-and-ai-act

- Harm Avoidance and Decision-Making in Machine Reasoning

The Limits of Human Predictions of Recidivism – Zhiyuan Lin et al. – Science Advances

Shows that both humans and algorithms operate under uncertainty in high-stakes risk prediction, and that decision systems tend to rely on simplified, risk-sensitive judgments, reinforcing conservative behavior under uncertainty.

https://www.science.org/doi/10.1126/sciadv.aaz0652

- Causal Trust in Automated Systems

Human-Centered Artificial Intelligence: Reliable, Safe & Trustworthy – Ben Shneiderman – International Journal of Human-Computer Interaction

Defines trust in AI as emerging from transparency, reliability, and auditability, emphasizing that systems must provide traceable and verifiable evidence to sustain user trust in high-risk environments.

https://www.tandfonline.com/doi/full/10.1080/10447318.2020.1741118

- Regulated Content Attribution Standards

Ethics Guidelines for Trustworthy AI – High-Level Expert Group on AI – European Commission

Provides formal requirements for lawful, ethical, and robust AI systems, including transparency, traceability, and accountability, which directly shape how systems attribute and validate information.

https://digital-strategy.ec.europa.eu/en/library/ethics-guidelines-trustworthy-ai

- AI-Omitted Content in High-Stakes Domains

Algorithmic Risk Assessments Can Alter Human Decision-Making Processes – Ben Green, Yiling Chen – Proceedings of the ACM on Human-Computer Interaction

Demonstrates how risk assessment systems influence human decisions, often leading to more cautious, risk-averse outcomes when uncertainty is present, reinforcing omission and conservative judgment in high-stakes contexts.

https://dl.acm.org/doi/10.1145/3479562

Questions You Might Ponder

Why does AI omit answers in regulated industries?

AI omits answers in regulated industries to minimize legal, ethical, and safety risks. When information can’t be attributed to highly auditable sources or carries ambiguity, AI defaults to omission to avoid amplifying misleading or non-compliant data – especially in high-risk sectors like healthcare and finance.

How does risk-weighted AI differ from traditional search engines?

Risk-weighted AI is engineered to prioritize trust and attribution over visibility or content volume. Unlike traditional search that rewards popularity, risk-weighted AI in regulated industries cites only sources with explicit authority and provenance, frequently omitting non-compliant or insufficiently attributed content.

What citation standards does AI require in regulated domains?

AI requires sources in regulated domains to have explicit authorship, institutional signatures, and verifiable provenance. Policy documents, peer-reviewed journals, and official regulatory publications qualify, while generic content or anonymous statements are excluded, ensuring trust and auditability in sensitive topics.

Why can high-traffic pages lose visibility in AI-generated results?

High-traffic pages lose visibility in AI-generated regulated industry results if they lack structured, attributable citations from high-trust sources. AI prioritizes content based on authority and audit trails, not volume or popularity, resulting in omission of even well-optimized but insufficiently sourced pages.

How can businesses improve their risk-weighted AI visibility?

Businesses can improve risk-weighted AI visibility by structuring content with explicit, auditable authorship, citing regulatory and institutional authorities, and providing evidence-based, well-structured signals. This alignment with AI’s conservative selection logic increases inclusion in high-stakes, citation-sensitive search results.