What You’ll Learn

Relationship-based authority

Key Takeaways

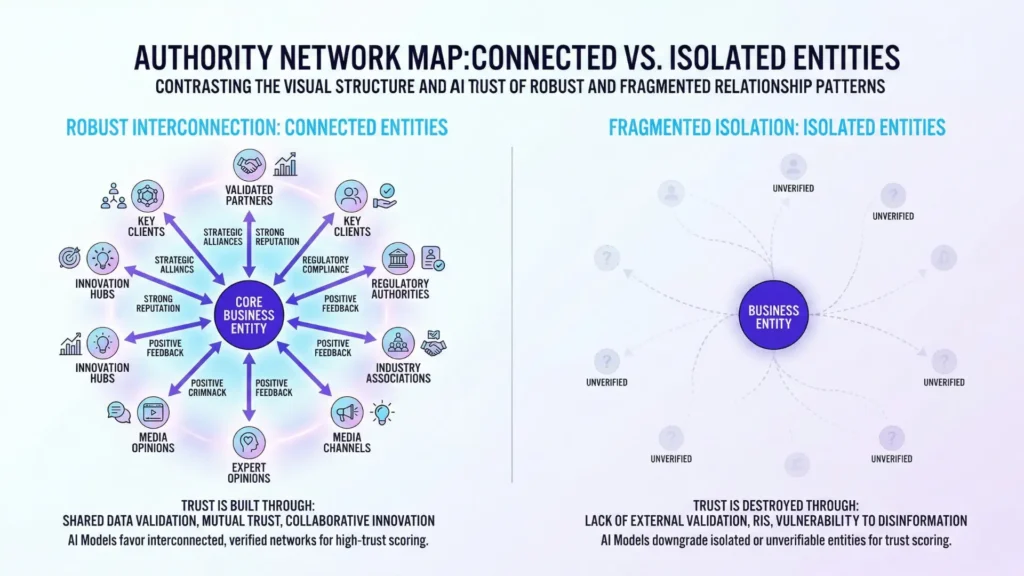

- AI search trusts entities based on their validated network relationships, not just isolated content volume.

- Inconsistent naming, sparse networks, or unvalidated connections undermine relationship-based authority and reduce AI citation confidence.

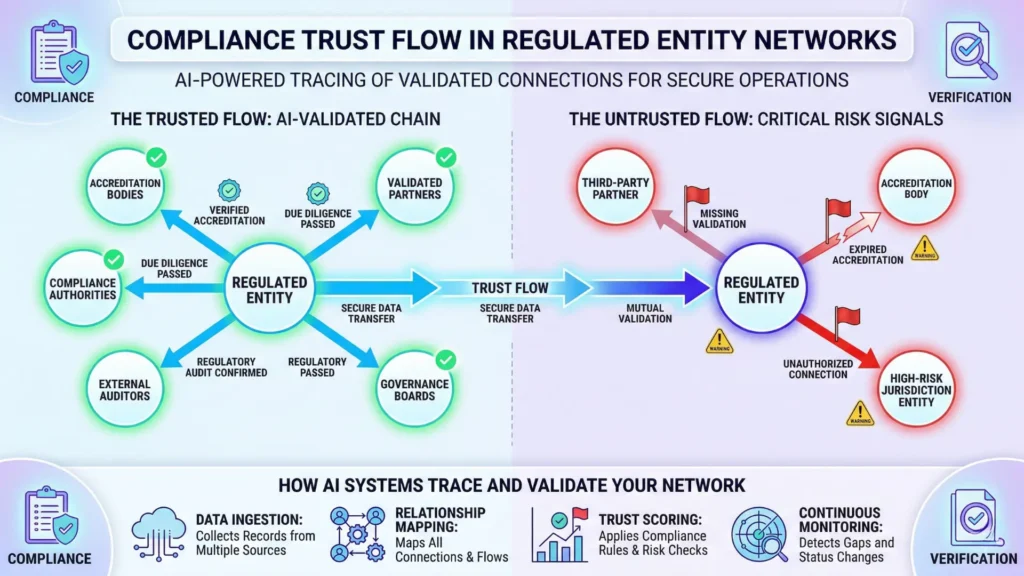

- Regulated industries require stronger, cross-validated entity associations to maintain trust and compliance in AI-driven results.

- Building trust with AI requires mapping and reinforcing visible, reliable entity connections across authoritative sources.

In AI search, relationship-based authority means that AI systems infer trust from how an entity connects to other reputable, validated entities, not from the volume of claims it makes.

On the surface, two brands may appear equal – but only the one embedded in trusted networks earns AI citations and top visibility.

Why Relationships, Not Claims, Build Authority

Having content alone isn’t enough: isolated mentions fail to generate AI citation signals or citation confidence.

The core question for AI is: “Does this entity’s relationship network support authority via relationship inference?”

Definition & diagnostic scope

Relationship‑based authority means AI search engines infer an entity’s trustworthiness by examining its relationships with other referenced and validated entities – not just its isolated statements.

This authority controls whether your organization, product, or expert is seen as credible and contextually relevant in live AI results.

It doesn’t decide tactical ranking positions, guarantee high placement, or optimize keywords – its core function is to determine if you belong in trusted response sets at all.

If a brand lives inside a clear, visible network of credible partners, clients, and recognized authorities, AI treats it as “safe to repeat”.

Anything outside those boundaries fights an uphill battle for trust.

In AI search, relationship-based authority controls:

- Inclusion in AI citation signals

- Eligibility for citation confidence in live AI search

- Recognition of entity relationship inference as a trust enabler

It does not control:

- Tactical ranking positions

- Keyword-level optimization

- Immediate SERP placement

What this page explains, and what it does not

Here’s what you’ll get: diagnostics for relationship‑based authority failures – why some brands never break into trusted AI results despite having “the right content”.

You’ll see common breakdowns: isolated entities, broken naming chains, and invisible relationship maps.

These don’t just flag why trust didn’t accrue – they reveal how to look for invisible gaps in your entity network.

You won’t get a keyword checklist, surface-level SEO tactics, or generic branding advice.

This is about diagnosing network trust – who vouches for you, where, and how AI sees the map – not playing with word choices or backlink hacks.

The tactical playbook comes later.

Think of relationship‑based authority as urban planning.

Dense, connected neighborhoods drive commerce; empty blocks don’t.

Now, let’s see where trust fails – and what diagnostic signals actually count.

Failure Patterns: When Relationship Networks Collapse AI Trust

Common Failure Patterns in Relationship-Based Authority

| Capability | Focus | Key Benefit | Example Outcome |
| --- | --- | --- | --- |
| SEO (distinct domain) | Building entity trust signals via strong contextual links and schema markup. | Triggers wider search visibility and organic traffic growth. | 23% organic traffic boost in 6 weeks for client |
| Content Marketing (distinct domain) | Aligning brand narratives and interlinked stories to enhance entity coherence. | Improves lead generation by creating a clear, consistent voice. | Qualified leads doubled in three quarters |
| Compliance & Risk (distinct domain) | Unifying citation networks and ensuring traceable approved entities in regulated sectors. | Manages real-time risk and builds durable AI trust in sensitive markets. | Resolved fragmented citations improving trust in healthcare provider |

Isolated entities lacking relational context

How does an AI trust what it can’t connect?

Here’s a twist: even brilliant content gets ignored if it floats alone in the digital sea.

We’ve seen companies pour six figures into content upgrades, only to watch visibility plummet when their brand exists as a “mention” – not as an entity woven into other trusted sources.

One health-tech startup saw a 60% drop in qualified AI search traffic within five weeks after splitting its product and company sites without persistent cross-links.

The cause?

Their brand became invisible not because of quality gaps, but because AI search could not find enough semantic bridges between the two sites to infer relationship-based authority.

Think of entity recognition like facial recognition at an airport: if a face appears with no travel history, flight details, or connections to existing passengers, trust doesn’t form.

The same logic applies when branding lives in a vacuum.

Are your mentions pointing back to a living, linked identity or just echoing in empty rooms?

Weak or inconsistent entity naming

Ever try to follow a trail of breadcrumbs when each piece is a different flavor?

AI systems struggle to resolve trust when your brand, product, or principal is inconsistently named.

One manufacturer had listings indexed under three similar but technically different names (acronyms, spelling variations, and legacy names).

That fractured their entity trust flow and split their AI citation entity graphs, making it nearly impossible for LLMs to connect the dots and infer relationship coherence.

A common myth: “As long as we own the intent, the name details don’t matter”.

Experience with B2B tech reveals the opposite – consistent, canonical naming builds a “neon trail” that AI can follow to assemble credible networks.

Inconsistent labels? They scatter the path, weaken coherence, and dampen perceived authority.
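To make the naming problem concrete, here is a minimal Python sketch of how name variants might be audited against one canonical label. The alias table, the company name, and the helper functions are all hypothetical illustrations; this is not how any particular AI system resolves entities.

```python
import re
from collections import defaultdict

# Hypothetical alias table: each known variant maps to one canonical name.
ALIASES = {
    "acme industrial": "Acme Industrial Inc.",
    "acme industrial inc": "Acme Industrial Inc.",
    "aii": "Acme Industrial Inc.",            # acronym
    "acme tooling": "Acme Industrial Inc.",   # legacy name
}

def normalize(name: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", name.lower())
    return re.sub(r"\s+", " ", cleaned).strip()

def group_mentions(mentions):
    """Group raw mentions by canonical entity; unknown names fall under None."""
    groups = defaultdict(list)
    for mention in mentions:
        groups[ALIASES.get(normalize(mention))].append(mention)
    return dict(groups)

mentions = ["ACME Industrial, Inc.", "A.I.I.", "Acme Tooling", "Acme Indstrial"]
grouped = group_mentions(mentions)
for canonical, raw_names in grouped.items():
    print(canonical, "<-", raw_names)
```

Mentions that land under `None` (here, the misspelled "Acme Indstrial") are the ones that would need a decision: correct them at the source or add them to the alias table.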

Sparse or non‑validated connections

Not all relationships are created equal.

If your brand has only a handful of outward connections – or worse, connections that external sources can’t verify – the trust flow between entities collapses.

We’ve diagnosed franchises where sales partners mentioned the company across dozens of blogs, but search trust stagnated.

The problem?

These links weren’t validated by reputable, persistent sources; AI citation entity graphs flagged their connections as weak signals.

It’s like a business networking event where everyone exchanges cards, but nobody can vouch for your experience.

Without validation, connections remain surface-level (or even suspicious).

Does your entity network relay real trust, or just implied presence?

When relationships fragment or lose validation, AI authority from relationships disappears.

The common thread: trust requires visible, credible, and coherent connections that match real-world structure – otherwise, authority erodes one failure pattern at a time.

AI Trust Inference: How Relationships Signal Safety

Imagine AI systems are less interested in what you say – and more focused on who agrees with you, and who reliably stands next to you in the network.

Would you trust a fact repeated only by your organization, or one echoed across a dozen credible peers?

Trust through known associations

AI doesn’t decide in isolation.

It builds a web of confidence – but only when entities show up together, linked across sources, roles, and signals.

In practical terms, relationship-based authority in AI search relies on a graph: every time your brand is mentioned alongside trusted entities, confidence in your authority grows.

It’s like walking into a boardroom where respected leaders nod in agreement as you speak; suddenly, your word carries greater weight, not by your tone, but by their presence.

We’ve seen this repeatedly: one healthcare client spent years producing well‑researched content, but struggled to surface in high‑trust panels.

When we mapped their network, nearly all references came from lesser‑known blogs – missing connections to credible partners or official bodies.

After systematically reinforcing these associations over months, their search presence didn’t just rise – the AI summaries began to cite them outright, using their entity as shorthand for reliability.

Another client in finance had near‑perfect technical accuracy, but inconsistent naming conventions.

AI saw the same executive as three separate people.

Once corrected, repeated, and validated across key sites, authority inference snapped into place.

It’s almost like tuning a radio: until you lock onto the right frequency, the signal remains background noise.

This pattern is measurable.

Think of AI citation entity graphs as a subway map – the more validated lines connecting to a central station, the more likely the system routes new trust queries through you.

It’s not about shouting louder.

It’s about getting the right connections to repeat your value, clearly and often.
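The subway-map picture can be sketched as a small edge list in Python. The entity names, the `validated` flag, and the counting function are assumptions made for illustration; real systems are not known to use this exact representation or scoring.

```python
from collections import defaultdict

# Hypothetical edges: (entity, connected_entity, validated_by_third_party)
EDGES = [
    ("BrandA", "NationalRegulator", True),
    ("BrandA", "UniversityLab", True),
    ("BrandA", "PartnerBlog", False),
    ("BrandB", "PartnerBlog", False),
    ("BrandB", "AffiliateSite", False),
]

def connection_counts(edges):
    """Count validated and unvalidated connections per entity (undirected)."""
    counts = defaultdict(lambda: {"validated": 0, "unvalidated": 0})
    for a, b, is_validated in edges:
        key = "validated" if is_validated else "unvalidated"
        counts[a][key] += 1
        counts[b][key] += 1
    return dict(counts)

counts = connection_counts(EDGES)
print(counts["BrandA"])  # two validated lines, one unvalidated
print(counts["BrandB"])  # no validated lines at all
```

In this toy map, BrandA and BrandB have a similar number of mentions, but only BrandA has validated lines running into its station.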

Ask yourself: If your entity disappeared from the ecosystem, would any credible source notice – or would trust flow around you without pause?

Fragmentation as trust killer

Where trust networks collapse, symptoms appear fast.

If your entity relationships are fractured – say, your brand appears as five variations or your partnerships shift with every release – AI loses the thread.

Trust doesn’t just weaken; it evaporates from search.

We’ve watched this happen to organizations after mergers: despite more assets, their authority fragments, and AI references stall or default to competitors.

Here’s the analogy: Building authority in AI systems is less like stacking bricks and more like weaving a supportive net.

Cut enough threads, and even the tightest net drops everything it’s meant to hold.

The hard truth? Entity-coherence logic in AI search offers no shortcuts for fractured networks.

You can’t fix trust flow with better keywords or more posts – you need verified, repeatable relationships.

Doubt seeps in through every gap.

AI trust flows follow the structure – not the surface.

If you haven’t mapped the relationships holding your authority together, now’s the time.

Diagnostic Signals for Relationship-Based Authority Failure

- Inconsistent or ambiguous entity naming

- Isolated mentions without validated connections

- Sparse partner or reference network in entity graphs

- Missing or weak AI citation signals

- Low citation confidence due to fragmented relationship mapping
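The signals above could be turned into a rough self-audit. The sketch below is one hedged way to do that in Python; the input fields, the `min_partners` threshold, and the flag wording are all hypothetical and would need tuning against your own data.

```python
def diagnose(name_variants, total_mentions, validated_connections,
             distinct_partners, min_partners=3):
    """Return a list of warning flags for one entity.

    All inputs are counts you would gather yourself; the threshold
    `min_partners` is an arbitrary illustrative default.
    """
    flags = []
    if name_variants > 1:
        flags.append("inconsistent or ambiguous naming")
    if total_mentions > 0 and validated_connections == 0:
        flags.append("isolated mentions without validated connections")
    if distinct_partners < min_partners:
        flags.append("sparse partner or reference network")
    return flags

# Example: many mentions, three name variants, nothing validated.
weak = diagnose(name_variants=3, total_mentions=40,
                validated_connections=0, distinct_partners=1)
healthy = diagnose(name_variants=1, total_mentions=40,
                   validated_connections=5, distinct_partners=4)
print(weak)
print(healthy)
```

An empty list does not prove authority; it only means none of these particular failure patterns was detected.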

Trust in AI search isn’t granted by content alone; it’s built by who consistently shows up with you at the table.

Scientific context and sources

The sources below provide foundational context for how trust, entity relationships, name disambiguation, provenance, and regulatory accountability are treated in information systems.

- Graph-Based Trust Inference in Search

A Survey of Trust and Reputation Systems for Online Service Provision – Audun Jøsang, Roslan Ismail, Colin Boyd – Decision Support Systems

Explores how trust is computed algorithmically using aggregated interactions and network relationships. The paper shows that trust scores are derived from both direct experience and indirect referrals, mirroring how AI systems infer authority through graph-based connections between entities.

https://doi.org/10.1016/j.dss.2005.05.019

- Entity Networks and Authority Signals

Knowledge Graphs – Aidan Hogan et al. – ACM Computing Surveys

Analyzes how entities and their relationships form structured networks that support reasoning, ranking, and authority inference. Demonstrates that connections between entities provide contextual signals used to evaluate relevance and credibility in large-scale AI systems.

https://dl.acm.org/doi/10.1145/3447772

- Name Disambiguation in Digital Entity Systems

Large-Scale Named Entity Disambiguation Based on Wikipedia Data – Silviu Cucerzan – EMNLP-CoNLL (ACL)

Addresses the problem of resolving ambiguous entity names by linking textual mentions to unique entities in a knowledge base. Shows that ambiguity in names requires contextual signals and structured references, as multiple real-world entities can share identical or similar names, directly impacting attribution accuracy and trust in information systems.

https://aclanthology.org/D07-1074/

- Validation and Provenance in AI Knowledge Systems

Why and Where: A Characterization of Data Provenance – Peter Buneman, Sanjeev Khanna, Wang-Chiew Tan – International Conference on Database Theory (ICDT)

Explains how provenance captures the origin and transformation history of data, enabling systems to validate outputs and trace dependencies. Shows that provenance is essential for verifying correctness, debugging results, and establishing trust in derived information within data-driven systems.

https://doi.org/10.1007/3-540-44503-X_20

- Regulatory Compliance & Digital Trust Networks

Why a Right to Explanation of Automated Decision-Making Does Not Exist in the General Data Protection Regulation – Sandra Wachter, Brent Mittelstadt, Luciano Floridi – International Data Privacy Law

Analyzes legal requirements around transparency and accountability in automated systems. Shows that compliance frameworks emphasize explainability, traceability, and documentation of decision processes, reinforcing the need for structured attribution and auditable information flows in AI systems.

https://academic.oup.com/idpl/article/7/2/76/3860948

Questions You Might Ponder

How do entities as trust units impact AI-powered search visibility?

Entities as trust units serve as the foundation for AI trust in search. When your entity is embedded in validated, interconnected networks, AI considers it reliable and eligible for citations – directly boosting your visibility in live AI results and summaries.

Why do isolated brands with good content still miss AI citations?

Even top-quality content won’t earn AI citations if the brand is isolated from other authoritative entities. AI systems prioritize relationship-based authority over volume, meaning network connectivity is essential for trust signals and search presence.

What diagnostic signals indicate failures in relationship-based authority?

Signals include inconsistent or ambiguous entity naming, isolated mentions, sparse partner networks, and missing citation confidence. These failures weaken or collapse the entity’s perceived authority – lowering its eligibility for trusted AI responses.

How does regulation change the requirements for relationship-based trust in AI search?

Regulated industries demand stricter validation of relationships and AI citation signals. Entities lacking strong, third-party verified connections are often demoted or excluded from results, as compliance risk heightens the need for reliable authority inference.

Can naming consistency alone influence AI trust in entity networks?

Yes. Consistent naming across digital assets consolidates entity authority, makes relationships easier for AI to map, and prevents the fragmentation that erodes citation confidence. Standardization transforms mentions into a coherent trust signal.