What You’ll Learn

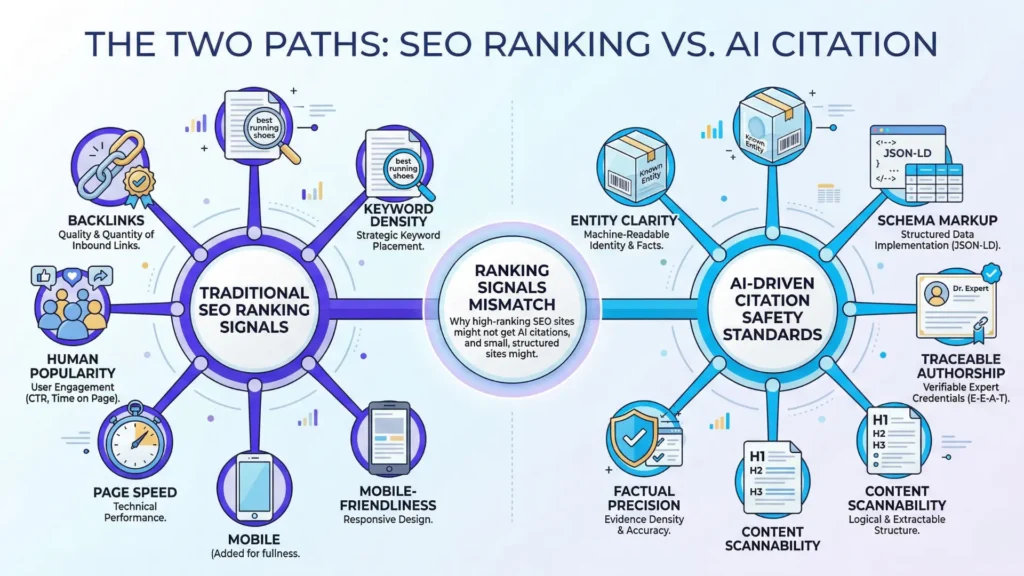

ranking signals mismatch

Key Takeaways

- Traditional ranking signals like backlinks and keyword relevance help pages rank in search but do not guarantee that AI systems will cite them or select them as answer sources.

- AI systems prioritize citation safety, structured data, and clear entities instead of popularity or traffic.

- Entity ambiguity, unstructured content, and over-reliance on backlinks decrease a page’s chances of being cited by AI.

- The true measure of visibility in AI search is frequent, safe citation, which requires precise organization and trust signals, not just a high search rank.

Why does the #1 result in Google sometimes get ignored by AI assistants?

Here’s a twist: traditional SEO doesn’t actually “prove” a source is safe enough to cite, only that it’s probably useful for human readers.

Search engines rank pages by measuring proxies for usefulness, like how many sites link back (backlinks) or how often specific keywords show up.

This is classic signal-based SEO – but these signals mostly measure popularity and surface-level relevance.
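To see why link-based signals measure popularity rather than accuracy, here is a minimal sketch of a normalized textbook PageRank (the link-analysis method introduced by Brin and Page), computed by power iteration. It is a simplification, not how Google ranks pages today, and the four-page graph and page names are hypothetical. Page content never enters the calculation: scores depend only on who links to whom.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Simplified PageRank by power iteration.

    `links` maps each page to the pages it links to. Assumes every
    linked page also appears as a key in `links`.
    """
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page gets a small baseline share (the "random jump").
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page, outlinks in links.items():
            if outlinks:
                # A page passes its rank equally to the pages it links to.
                share = damping * rank[page] / len(outlinks)
                for target in outlinks:
                    new_rank[target] += share
            else:
                # Dangling page (no outlinks): spread its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical four-page web: three pages link to "popular_guide".
web = {
    "popular_guide": ["niche_spec"],
    "blog_a": ["popular_guide"],
    "blog_b": ["popular_guide"],
    "niche_spec": ["popular_guide"],
}
scores = pagerank(web)
for page, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{page:15s} {score:.3f}")
```

In this toy graph, `popular_guide` ends up with the highest score simply because three pages link to it; nothing in the computation checks whether its claims are accurate or safe to cite.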

What Ranking Signals Are Optimized For

Ranking as popularity and relevance proxy

When we worked with a B2B client targeting “best project management tools”, we saw large ranking jumps after a flurry of legitimate backlinks drove traffic.

But AI systems didn’t select their pages as sources.

The culprit?

SEO logic. Traffic and links showed that human readers trusted the page; they said nothing about whether an AI system seeking safe, structured facts could rely on it.

You might ask: if everyone else seems to trust a page, why won’t AI?

Because AI is wired for safe citation, not crowd-validated popularity.

Popular, not citation‑safe

Here’s the myth: pages that rank highest also make the best candidates for AI answers. In practice, ranking signals (like keyword density or piles of backlinks) can lift a page in search yet leave it invisible to AI selection tools.

For example, we’ve seen deep-dive guides dominate organic search: hundreds of referring domains, top-three positions, bona fide authority in the eyes of Google. But when we tested AI summary outputs, those same URLs rarely came up. It’s like acing the popularity contest but getting left out of the science fair.

AI source selection looks for clear entities, evidence, and verifiability – not just raw web clout. The analogy? Imagine hiring someone based on the number of people waving at them, instead of their credentialed expertise or clarity of presentation. Popularity helps with SEO ranking, but it’s not a guaranteed ticket to AI trust or visibility.

Sound familiar? If your analytics show big organic wins but zero mentions in AI answers, you’re seeing the mismatch between ranking signals and AI search firsthand.

Traditional SEO ranking signals aim for the crowd; AI citation logic demands higher precision and safety. That tension drives everything that follows.

What AI Systems Optimize For Instead

Citation safety over traffic

AI Citation Failure Modes Checklist

| Failure mode | Description |
|---|---|
| Weak entity signals | Ambiguity in brand, author, or organization information |
| Unstructured or loosely organized content | No clear structure or modular content blocks |
| Fragmented references | Inconsistent naming and scattered mentions |
| Over-reliance on backlinks/keywords | Many backlinks but no clear entity identity |
| Lack of explicit evidence | No verifiable facts or direct citations |
| Narrative or qualitative prose | Storytelling without extractable answers |

Why do some high-traffic pages vanish from AI-generated answers, while a little-known source becomes the citation? AI search systems prize citation safety, not popularity. Their core mandate: avoid hallucinations, minimize liability, and protect users with traceable, unambiguous evidence.

Think of it this way – a viral blog post might rake in clicks, but unless it backs claims with clear facts, it’s as useful to an AI as an unsigned memo. In our work with finance and health brands, we’ve seen AI ignore pages that outperformed competitors in Google but lacked clear data provenance or transparent sourcing. Even sites with authoritative backlink profiles often get skipped if their message can’t be easily quoted or verified by machine logic.

AI citation safety signals include:

- Clear, traceable authorship

- Structured data or schema markup

- Consistent entity naming

- Neutral, fact-based language

- Direct attribution and references

- Contextual stability (no ambiguous sources)
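A few of these signals can be spot-checked in a page's JSON-LD markup. The sketch below is a hypothetical audit helper (`audit_citation_signals` is not a standard tool) that assumes an Article-style schema.org object whose `author` and `publisher` are objects rather than plain strings. It checks only what markup can reveal; neutral language and contextual stability still need human review.

```python
import json

SCHEMA_CONTEXTS = {"https://schema.org", "http://schema.org"}


def audit_citation_signals(jsonld_text, brand_name):
    """Hypothetical helper: map markup-checkable signals to True/False."""
    data = json.loads(jsonld_text)
    author = data.get("author") or {}
    if isinstance(author, list):
        # schema.org allows several authors; this sketch checks only the first.
        author = author[0] if author else {}
    publisher = data.get("publisher") or {}
    context = str(data.get("@context", "")).rstrip("/")
    return {
        # Structured data: a schema.org object with an explicit type.
        "structured_data": context in SCHEMA_CONTEXTS and "@type" in data,
        # Traceable authorship: a named author with a profile URL or sameAs link.
        "traceable_author": bool(author.get("name")) and bool(
            author.get("url") or author.get("sameAs")
        ),
        # Consistent entity naming: publisher name matches the canonical brand exactly.
        "consistent_entity_name": publisher.get("name") == brand_name,
        # Direct attribution: the page declares at least one cited source.
        "direct_attribution": bool(data.get("citation")),
    }


# Hypothetical page markup.
page = """{
  "@context": "https://schema.org",
  "@type": "Article",
  "headline": "Example headline",
  "author": {"@type": "Person", "name": "Jane Doe",
             "url": "https://example.com/authors/jane-doe"},
  "publisher": {"@type": "Organization", "name": "Example Co"},
  "citation": ["https://example.org/source-study"]
}"""
report = audit_citation_signals(page, "Example Co")
print(report)
```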

Are your pages built to be cited against these standards, or only to be clicked?

Structured, clear, semantically aligned content

Now, try picturing a giant, automated librarian sifting millions of documents. What does it pick? Consistency, extractable statements, and meaning-rich structure. AI doesn’t just read for gist – it parses for attributes, patterns and clarity. If content looks like a jumbled junk drawer, it’s skipped; organized, schema-marked, and contextually precise pages stand out.

In B2B software, for example, we found feature tables and bullet lists cited far more often than free-form, story-driven pages, even when those pages ranked higher on Google. The machine values what humans overlook: signal density, logical segmentation, and alignment with known schemas. One client saw their AI citation rate double in three months, not because of a bigger budget, but because they moved key facts from body copy into structured, labeled boxes.
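Moving facts out of body copy can be as simple as rendering them as a labeled table. This minimal sketch assumes the facts are already collected as label/value pairs; the helper name `facts_to_table` and the product facts are hypothetical, and `html.escape` keeps stray characters in the data from breaking the markup.

```python
from html import escape


def facts_to_table(caption, facts):
    """Render key facts as a labeled HTML table, one fact per row."""
    rows = "\n".join(
        f'  <tr><th scope="row">{escape(label)}</th><td>{escape(str(value))}</td></tr>'
        for label, value in facts.items()
    )
    return f"<table>\n  <caption>{escape(caption)}</caption>\n{rows}\n</table>"


# Hypothetical product facts pulled out of narrative body copy.
html_table = facts_to_table(
    "Example Tool at a glance",
    {
        "Starting price": "$12 per user/month",
        "Free tier": "Yes, up to 5 users",
        "SSO": "SAML 2.0",
    },
)
print(html_table)
```

Each row pairs an explicit label (`<th scope="row">`) with a single value, so a parser does not have to infer which number belongs to which claim.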

Here’s an analogy: imagine AI as a search-and-rescue drone – not interested in crowded beaches, but in landing pads marked with bright, geometric shapes it can recognize instantly. When a page spells out entities, relationships, and evidence in consistent patterns, it gives AI the “permission slip” to cite safely.

Does your content invite AI to cite, or does it force AI to guess?

Ultimately, AI systems reward entity trust, explicit structure, and citation clarity. Ranking signals and AI search play by different rules; those who adapt get found and trusted. Next: let’s look at the fallout when popularity collides with AI’s hunger for certainty.

Why Popularity and SEO Visibility Can Be Risky

Imagine spending six figures to top the search results – only to find your content nearly invisible to AI citation engines. Does popularity even matter when an AI doesn’t recognize your brand, or can’t disambiguate your content from ten similar sources?

Ambiguous entities and fragmented references

Here’s a scene we see often: two client teams both “own” a product, but the brand name is generic (think “Evergreen Solutions”). Their homepage ranks, but AI systems either split references to the brand across several entities, lump unrelated mentions together, or simply fail to identify the true source. Why? AI models care less about traffic and more about entity clarity. If your brand’s author panel, organizational schema, or About page is vague, or if citations use inconsistent naming, AI can’t trust the thread.

One financial advisor client pushed hard on topical blogs. These ranked well, yet when we checked Bing’s AI citations, the assistant mixed up their firm with four unrelated agencies sharing a founder’s first name. It’s a bit like showing up to a convention wearing nothing but a sticker that says “Hi, I’m Alex” – how can anyone find you in a crowd?

This isn’t hypothetical discomfort. It’s a tangible barrier for AI source selection: ambiguous entities and fragmented references block reliable extraction, a mistake even experienced SEO teams can make. Does boosting brand mentions matter if AI can’t assign them correctly?
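A first step toward fixing fragmented references is finding them. This sketch groups brand mentions whose names differ only in case, punctuation, or a legal suffix. The suffix list, the helper names, and the sample mentions (built on the hypothetical “Evergreen Solutions” above) are assumptions for illustration.

```python
import re
from collections import defaultdict

# Hypothetical legal suffixes to ignore when comparing names.
LEGAL_SUFFIXES = {"inc", "llc", "ltd", "co", "corp"}


def normalize(name):
    """Lowercase, drop punctuation, and strip legal suffixes."""
    tokens = re.findall(r"[a-z0-9]+", name.casefold())
    return " ".join(t for t in tokens if t not in LEGAL_SUFFIXES)


def naming_variants(mentions):
    """Group surface forms by normalized name; keep groups with >1 form."""
    groups = defaultdict(set)
    for _page, name in mentions:
        groups[normalize(name)].add(name)
    return {key: sorted(forms) for key, forms in groups.items() if len(forms) > 1}


# Hypothetical (page, brand mention) pairs gathered from a site crawl.
mentions = [
    ("/about", "Evergreen Solutions, Inc."),
    ("/blog/post-1", "Evergreen Solutions"),
    ("/blog/post-2", "EVERGREEN SOLUTIONS LLC"),
    ("/pricing", "Evergreen"),
]
variants = naming_variants(mentions)
print(variants)
```

The bare “Evergreen” mention is not grouped: shortened forms like that need a human-maintained alias list, which is exactly where generic brand names become risky.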

Over-reliance on backlinks without entity clarity

Here’s a myth: more backlinks means more AI trust. In fact, classic SEO logic (count links, claim authority) doesn’t hold for AI visibility. We’ve seen SaaS clients with monstrous backlink portfolios whose sites failed to distinguish the company as a unique entity. The AI assistant defaulted to Wikipedia and industry news instead.

In one retail case, a client’s FAQ content received thousands of links from “best of” roundups. Yet, the links referenced product names, not the corporate entity, and lacked a clear author. The AI dismissed the page as undifferentiated marketing, even as it topped organic search. Backlinks can be bread crumbs – helpful only if they lead to an identifiable home.

Backlinks are a popularity contest, not proof of identity. AI isn’t persuaded by mass endorsement; it wants structured signals, like organization markup, clean authorship, and unique identifiers it can map. Without these, even thousands of links add noise, not confidence.
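As a sketch of the structured identity signals described above, the helper below builds a schema.org `Organization` object with a stable `@id` and `sameAs` links to external profiles, ready to embed as JSON-LD. The `#organization` fragment for `@id` is a common convention rather than a requirement, and the helper name, URLs, and Wikidata ID are placeholders.

```python
import json


def organization_jsonld(name, url, logo, same_as, legal_name=None):
    """Build a schema.org Organization object with a stable @id (hypothetical helper)."""
    org = {
        "@context": "https://schema.org",
        "@type": "Organization",
        # One stable identifier that other markup on the site can reference.
        "@id": url.rstrip("/") + "/#organization",
        "name": name,
        "url": url,
        "logo": logo,
        # External profiles that pin down which real-world entity this is.
        "sameAs": list(same_as),
    }
    if legal_name:
        org["legalName"] = legal_name
    return org


org = organization_jsonld(
    name="Evergreen Solutions",
    url="https://www.example.com/",
    logo="https://www.example.com/logo.png",
    same_as=[
        "https://www.linkedin.com/company/example",
        "https://www.wikidata.org/wiki/Q000000",  # placeholder ID
    ],
    legal_name="Evergreen Solutions, Inc.",
)
print('<script type="application/ld+json">')
print(json.dumps(org, indent=2))
print("</script>")
```

Using one `name` everywhere and pointing `sameAs` at profiles that describe the same company gives a machine a concrete way to separate this Evergreen Solutions from every other one.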

In the world of AI search, popularity is like shouting in a crowd. Clarity, meaning entity precision rather than volume, wins attention. Your prospects aren’t looking for the loudest source; they want the right one, instantly.

Diagnostic Failure Modes: Ranking Signals Mismatch AI Search

AI Citation Safety Signals Checklist

| Signal | Description |
|---|---|
| Clear, traceable authorship | Authorship is explicit and verifiable |
| Structured data or schema markup | Use of formal schema to represent content |
| Consistent entity naming | Uniform names for brands, products, and authors |
| Neutral, fact-based language | Language free from bias or marketing spin |
| Direct attribution and references | Citing credible sources clearly |
| Contextual stability | Content is stable and unambiguous over time |

- Weak entity signals (brand, author, or organization ambiguity)

- Unstructured or loosely-organized content

- Fragmented references (inconsistent naming, scattered mentions)

- Over-reliance on backlinks/keywords with no clarity for selection

- Lack of explicit evidence or verifiable facts

- Narrative or qualitative prose with no extractable answers

- Absence of clean authorship/ownership markers

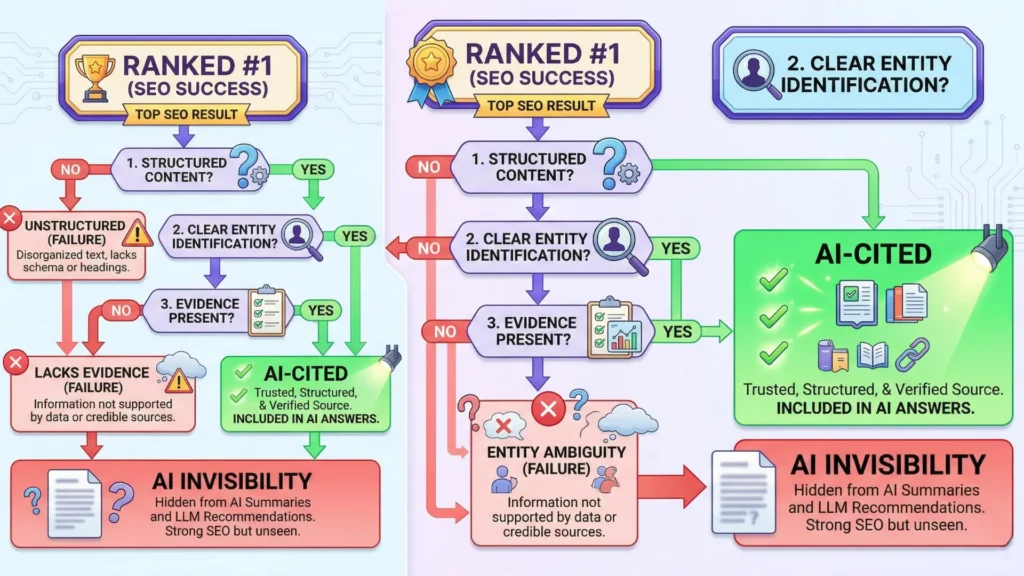

Why SEO Success Coexists with AI Invisibility

Unstructured or low-density content

Did you know that a page can rank #1 on Google for months without a single AI tool ever considering it for citation? Let that sink in. It isn’t an accident; it’s the result of a hidden mismatch. Almost every client we’ve worked with on “AI-ready” visibility has shown this pattern: beautifully optimized SEO pages crammed with keyword clusters and long-form sections, but so loosely organized that AI can barely extract a direct answer. The information floats, like a corkboard of faded notes that tells a story to a human but leaves a search model guessing.

One executive asked, “If we’re winning organic search, why do we never appear as a cited source in AI answers?” The quick answer – structure trumps rank for AI. When your content lacks crisp headings, consistent structure, or answer density, it’s like serving soup with a fork. The AI struggles to scoop out reliable pieces, so it moves on.

For example, one client’s popular blog post dominated a keyword (“enterprise CRM integration examples”) and pulled in steady search traffic for 18 months, yet major AI engines never once cited it. Why? The case studies were detailed but scattered, each buried under unrelated sections, and the only answer block was tucked into a sidebar. For AI-driven visibility, this ambiguity is death by a thousand paper cuts.

Misaligned answer format vs AI needs

What looks like rich, compelling narrative to a human reader may be invisible to modern AI selectors. AI models crave clear, extractable claims: think bullet points, crisp tables, and direct definitions. Yet most top-ranking SEO content still leans into “thought leadership” prose, with elegant transitions and meandering stories that impress human reviewers, including Google’s Search Quality Raters.

We’ve seen whole sets of tech-sector landing pages rank on transactional keywords, but when the AI tries to pull a citation about “AI source selection vs SEO ranking”, it hits a wall. The core answer is woven into a paragraph halfway down and never directly stated, or it’s diluted by unrelated bullets (“our latest features”, “team expertise”, etc.). Imagine a librarian searching a library where titles are written as poetry instead of filed by category: frustrated, they’ll pick the simplest, clearly labeled resource every time.

Here’s the myth: “If Google ranks it, AI will cite it”. In reality, AI doesn’t care about search position – it cares about citation safety, extractability, and pattern-recognition triggers. We’ve learned to ask: does this section answer the core query, or does it just sound nice to a human reader?

The gap is structural, not qualitative. SEO success can camouflage citation risk.

Both the skeleton (structure) and the muscle (content substance) need to fit AI’s grasp. Otherwise, even the strongest SEO site can become invisible when it matters most.

Citation as the New Unit of Visibility

Visibility through selection mechanisms

Imagine two pages: one sits on top of Google with 100,000 monthly clicks, while the other is rarely visited. Yet, AI systems may pick the underdog, not the “winner”. Here’s the counter-intuitive twist – AI doesn’t reward traffic spikes; it rewards citation safety.

Traditional SEO teams obsess over ranking signals: keywords, backlinks, historical clicks. But in client reviews, we’ve seen high-traffic pages consistently skipped by AI when the structure is ambiguous or sources lack clear trust markers. One firm we advised had an authoritative, backlink-rich blog, yet none of its material surfaced in AI-generated answers – their format buried the specifics AI systems need to cite.

Visibility for AI is not eyeball mass or trending status. It’s not measured by visits or shares. The real metric now is frequency of selection as a source – citation. A page that gets cited once an hour by generative AI is more “visible” in these systems than a first-place page that rarely feeds an answer. Ask yourself: Would you rather be seen by millions or named as the trusted reference powering tomorrow’s answers?
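If citation frequency is the metric, it can be tracked much like rank. This sketch assumes you have already sampled a fixed set of queries in an AI assistant and recorded the URLs each answer cited (how you collect them depends on the tool and is not shown). It reports the share of sampled answers that cite at least one URL on your domain; the function name and sample data are hypothetical.

```python
from urllib.parse import urlparse


def citation_rate(answers, domain):
    """Share of sampled AI answers citing at least one URL on `domain`."""
    if not answers:
        return 0.0

    def on_domain(url):
        # Count the domain itself and any of its subdomains.
        host = urlparse(url).hostname or ""
        return host == domain or host.endswith("." + domain)

    hits = sum(1 for cited in answers.values() if any(on_domain(u) for u in cited))
    return hits / len(answers)


# Hypothetical sample: query -> URLs cited in the AI-generated answer.
sampled = {
    "best project management tools": [
        "https://www.example.com/compare",
        "https://en.wikipedia.org/wiki/Project_management_software",
    ],
    "project management pricing": ["https://news.example.org/pricing-roundup"],
    "gantt chart software": ["https://docs.example.com/gantt"],
    "kanban vs scrum": [],
}
print(f"{citation_rate(sampled, 'example.com'):.0%}")
```

Here two of the four sampled answers cite a URL on `example.com` (including the `docs.` subdomain), so the rate is 50%. Re-running the same query set over time turns citation into a trend line rather than an anecdote.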

Picture a library where the loudest books aren’t the ones quoted – the ones cited are those with crystal-clear facts, clean references, and zero ambiguity. This is the algorithmic shift away from popularity toward utility.

Implications for trust‑first content strategy

Here’s a myth to leave behind: “Ranking first guarantees AI citation”. In practice, we’ve watched entire client portfolios slip into AI obscurity – despite organic dominance – simply because they lacked direct, structured claims or didn’t signal ownership of an idea or entity. Visibility is no longer something you win and keep; it’s a status you must re-earn, citation by citation.

Shifting to a trust-first mindset means executive teams should start asking new questions. How do we become the definitive input, not just another blue link? What signals make us the safest, clearest choice for machines weighing citation risk? One analogy: Being “ranked” is dating, being “cited” is getting the marriage proposal. The commitment level is categorically different.

Clients who adopted entity-level clarity (think: consistent naming, transparent authorship, reference-ready structure) started seeing an uptick in generative AI citations within weeks – far faster than the glacial pace of movement in legacy search. The upshot? AI-ready trust levers – like Schema.org markup or explicit fact labeling – aren’t nice-to-haves. They’re the entry ticket.

Summary: Why SEO Ranking Signals Mismatch AI Search

- Popularity and relevance (ranking signals) drive organic search results, but AI source selection prioritizes citation safety, clarity, and structured information.

- To be visible in AI-driven answers, content must present unambiguous entities, citeable evidence, and formalized structure.

- The frequency and quality of being cited (not just ranked) is the new measure of digital visibility.

The new goal isn’t just to appear; it’s to be selected. This reframes visibility as a contest won not by shouting loudest, but by being the most quotable, safest source in the room.

Scientific context and sources

The sources below provide background on link-based ranking signals, retrieval-grounded generation, entity disambiguation, structured data, and algorithmic content selection.

- The reliability and utility of ranking signals

The Anatomy of a Large-Scale Hypertextual Web Search Engine – Sergey Brin and Larry Page – Computer Networks

Introduces PageRank, a foundation of modern search ranking, which uses link structure as a proxy for a page’s importance. Because PageRank measures network centrality and popularity rather than factual accuracy, ranking signals built on it are insufficient on their own as indicators of citation safety for AI outputs.

https://research.google/pubs/pub334/

- Citation trust and information safety in AI

Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks – Patrick Lewis et al. – NeurIPS

Combines retrieval with generation to improve factual grounding in language models. Introduces explicit evidence conditioning, where generated outputs depend on retrieved documents, improving traceability and reducing unsupported claims compared to purely generative systems.

https://arxiv.org/abs/2005.11401

- Knowledge graph-based entity disambiguation

Knowledge Graphs: Introduction and Applications – Aidan Hogan et al. – ACM Computing Surveys

Describes how entities are represented as structured nodes with defined relationships and unique identifiers. Enables precise disambiguation and consistent attribution across sources, reducing ambiguity inherent in keyword-based retrieval systems.

https://dl.acm.org/doi/10.1145/3447772

- Structured data and schema utility for machine agents

The Semantic Web – Tim Berners-Lee et al. – Scientific American

Introduces machine-readable metadata frameworks that allow systems to interpret relationships between entities. Establishes the foundation for structured data, enabling automated reasoning, improved interoperability, and more reliable attribution in machine-driven environments.

https://www.scientificamerican.com/article/the-semantic-web/

- Human attention versus algorithmic content selection

Exposure to Ideologically Diverse News and Opinion on Facebook – Eytan Bakshy et al. – Science

Demonstrates how algorithmic curation shapes user exposure to information. Shows that engagement-driven ranking can limit diversity and skew visibility, highlighting the gap between human attention signals and systems designed for balanced or reliable information selection.

https://www.science.org/doi/10.1126/science.aaa1160

Questions You Might Ponder

Why don’t high-ranking SEO pages always appear in AI-generated answers?

SEO ranking signals prioritize page popularity and organic relevance for humans, but AI search tools require clear, structured, and safe information that can be reliably cited. As a result, content optimized for clicks may be bypassed if it lacks citation clarity or structured data.

What makes content ‘citation-safe’ for AI systems?

Citation-safe content demonstrates clear authorship, structured data, fact-based statements, and transparent sources. AI systems prefer pages formatted for easy extraction and attribution, because such pages reduce the risk of hallucination and liability and support trustworthy answer generation.

How does entity clarity affect AI visibility versus SEO ranking?

Entity clarity – consistent naming, schema markup, and unambiguous references – helps AI systems reliably identify and cite sources. While SEO may reward frequent mentions or traffic, AI prioritizes content that explicitly establishes its authority and identity with clear markers.

Why can excessive backlinks fail to boost AI source selection?

While backlinks enhance SEO performance by signaling popularity and authority to search engines, they do little for AI source selection when pages lack structured facts or distinct entity markers. For AI, verifiable, structured signals matter more than sheer link volume.

What structural changes can make content more AI-visible?

Improving AI visibility requires organizing content with crisp headings, answer blocks, structured tables, and schema markup. This format helps AI models quickly extract, verify, and attribute information, increasing the likelihood that the page is cited in AI responses.
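For a Q&A section like this one, schema.org's `FAQPage` type is one way to make the question-and-answer structure explicit. A minimal sketch, assuming answers are plain text; the helper name is hypothetical, and there is no guarantee that a given AI system or search engine uses this markup (search engines may not show FAQ rich results for every site).

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "Why don't high-ranking SEO pages always appear in AI-generated answers?",
        "SEO ranking signals prioritize popularity and relevance; AI tools favor "
        "clear, structured information that can be reliably cited.",
    ),
    (
        "What makes content citation-safe for AI systems?",
        "Clear authorship, structured data, fact-based statements, and transparent sources.",
    ),
])
print(json.dumps(faq, indent=2))
```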