What You’ll Learn

Why AI systems choose omission over uncertainty

Key Takeaways

- AI systems default to omission over uncertainty to protect against attribution errors and maintain trust.

- Inconsistent entity naming and vague claims are leading triggers of omission, even if information is accurate.

- Regulated industries apply stricter evidence requirements and higher harm sensitivity, causing higher omission rates by design.

- Clear brand references and explicit sourcing are essential to minimize omission and boost AI visibility.

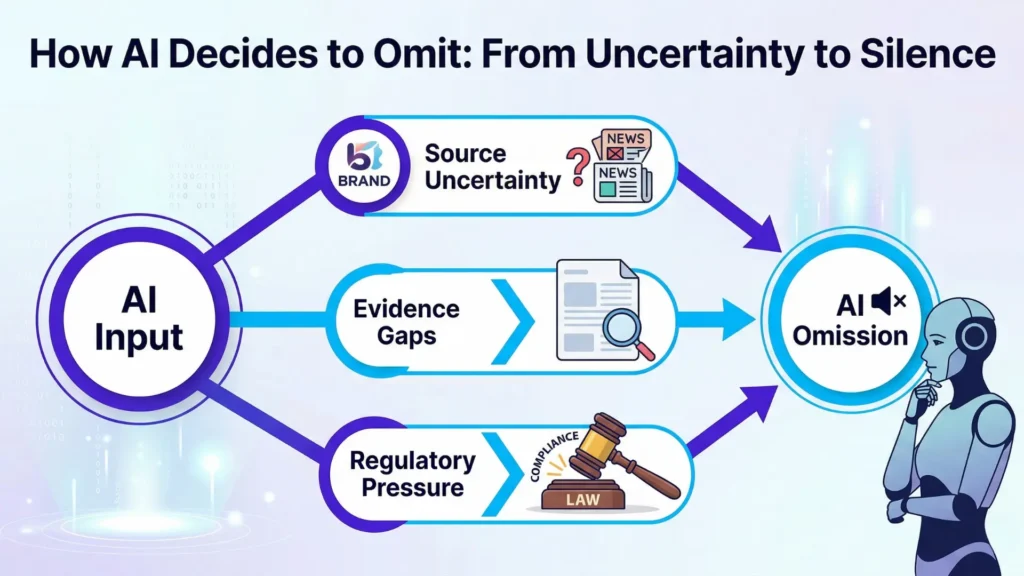

AI omission over uncertainty is the deliberate, default choice of AI systems: if attribution is unclear, the model prefers citation safety over risky inclusion.

For example, AI systems routinely leave out otherwise correct information when direct, unambiguous attribution isn’t possible.

Even highly authoritative content is affected if brands, sources, or authored claims present inconsistent naming or implicit references – citation safety in AI search wins over content completeness.

AI omission over uncertainty refers to the default behavior of AI systems to leave out facts, claims, or sources whenever attribution is not provable – silence is safer than risking citation errors.

Key reasons for AI omission over uncertainty:

- Unclear or inconsistent entity attribution (brands, people, organizations)

- Claims lacking explicit evidence or referencing

- Stricter safety and harm-avoidance rules in regulated sectors

- Citation safety in AI search outweighs information completeness

Why AI prefers silence to uncertainty

Omission as a safety mechanism

Picture AI omission like a cautious chef.

If there’s even the faintest whiff that an ingredient could be spoiled, the dish never leaves the kitchen.

Better no meal than poisoning the guest.

That’s how AI treats uncertain attributions – safer to say nothing than risk misleading.

But does the machine always play it safe for the user’s benefit?

Or is it mainly protecting its own perceived trust and authority?

Notice how this silence pattern outlasts product updates and persists across models.

Omissions aren’t random – they’re encoded safety logic, driven by citation safety and source omission preferences built deep into the AI’s foundation.

Impacts of omission on visibility

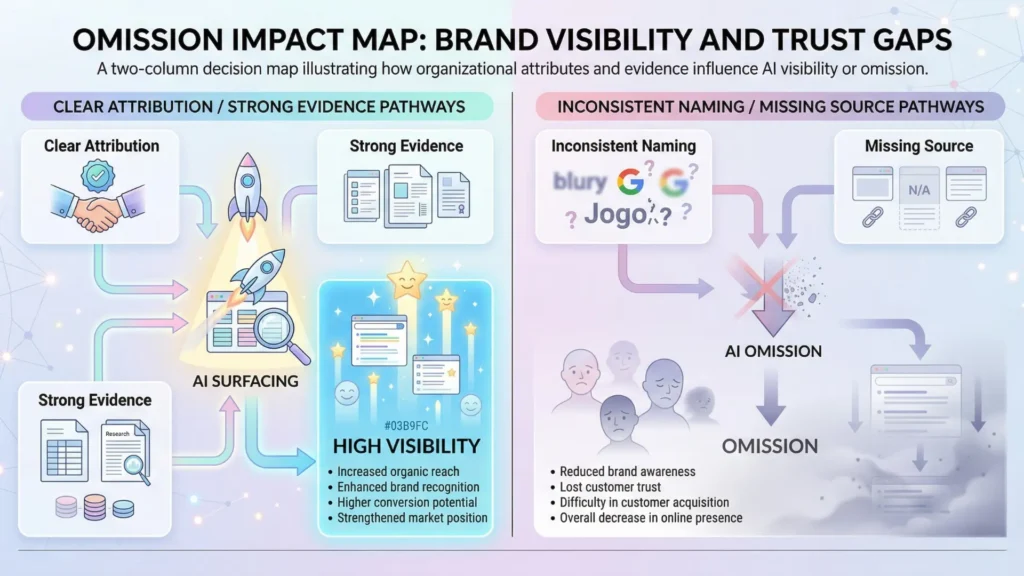

Here’s where it stings: that silence doesn’t just keep out noise.

It suppresses visibility – sometimes for exactly the brands or research you’re working hardest to promote.

Clients often ask: “Why is our data absent when competitors rank?”

The universal answer?

Their evidence signals – or clearer attributions – passed the threshold where yours didn’t.

This omission isn’t distributed evenly.

It distorts who is surfaced and who becomes invisible, creating a perception of unfairness.

But under the hood, it’s a blunt protective filter: the AI maintains integrity not by including everything credible, but by excluding whatever citations can’t be made bulletproof.

That sometimes means best-in-class expertise disappears, drowned out by silence while safer, simpler sources surface.

Think of it like airport security – every ambiguous item gets set aside for inspection, even at the cost of customer frustration.

The bigger the perceived risk, the more content is left behind.

Fact: AI is more likely to exclude content than risk a faulty mention, even if that exclusion frustrates users or warps perceived authority.

The myth?

That omission signals lack of knowledge; in reality, it often means the system can’t prove it safely.

Here’s the real diagnostic: AI omission over uncertainty is the system’s way of keeping its trust score high – even if your brand pays the price with lower visibility.

This logic drives every omission you see – or don’t see.

Next, we’ll unpack the specific errors inside AI attribution that trigger these silent losses.

Core failure modes leading to omission

Core Failure Modes Leading to AI Omission

| Driver | Description | Example | Effect on AI Output |
| --- | --- | --- | --- |
| Increased harm sensitivity | Higher safety thresholds to prevent harmful or incorrect info in sensitive fields. | Medical advice tool omitting ambiguous studies. | Significant reduction or silence in answers. |
| Stricter evidence requirements | Demand for explicit, cross-referenced sources to avoid liability. | Pharma content skipped due to missing direct source. | Many otherwise credible claims omitted. |
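
To make the two drivers in the table concrete, here is a minimal Python sketch of a sector-sensitive citation gate. It is a toy model of the behavior described in this article, not how any particular AI system is implemented. The `Claim` class, the `should_cite` function, the sector names and every threshold value are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical thresholds: sensitive sectors demand more confidence
# before a claim is allowed into the answer.
SECTOR_THRESHOLDS = {
    "general": 0.70,
    "finance": 0.85,
    "health": 0.90,
}


@dataclass
class Claim:
    text: str
    attribution_confidence: float  # 0.0 to 1.0, assumed to come from an upstream scorer
    sector: str = "general"


def should_cite(claim: Claim) -> bool:
    """Hard gate: cite only if confidence clears the sector's threshold."""
    threshold = SECTOR_THRESHOLDS.get(claim.sector, SECTOR_THRESHOLDS["general"])
    return claim.attribution_confidence >= threshold


claims = [
    Claim("Vendor X reduced onboarding time.", 0.80, "general"),
    Claim("Drug Y lowers blood pressure.", 0.80, "health"),
]

# Same confidence, different outcome: the health claim is omitted.
cited = [c.text for c in claims if should_cite(c)]
print(cited)
```

Both claims carry the same confidence score, yet only the general-sector claim survives. That is the "omission by design" effect the table describes for regulated fields.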

Fragmented or unclear entity attribution

Ever notice how an AI might simply skip a juicy quote or source, even when you’re sure it’s relevant?

Here’s the hidden culprit: attribution logic – when names, brands, or authors land in a blurry middle zone, the model dials up the silence instead of risking a faulty citation.

One surprising reality: even a single spelling inconsistency or an ambiguous Wikipedia reference can tip the system from “safe to cite” into omission mode.

We saw this play out with a regional food supplier who wondered why their product never made it into local AI-powered restaurant finders.

Their brand’s mentions were scattered – sometimes as “Smith’s Deli”, sometimes just as “Smith’s”.

Result: the model withheld the citation to avoid linking the wrong entity.

This wasn’t about accuracy, but about risk control: better to omit than attach the wrong label.

Think of it like trying to tag a friend in a group photo when half the faces are turned.

If the AI can’t match entities cleanly, it simply leaves them untagged.

The system’s “trust threshold” – the minimum level of confidence for source attribution – acts as a gate, not a guideline.

Does this mean your expertise or product can disappear from AI answers just because your branding is inconsistent?

Absolutely. Source omission is triggered whenever inconsistent entity attribution keeps the system from citing safely.

Fragmented identity results in omission as the safety default, regardless of authority.
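
One practical way to spot the "Smith's Deli" versus "Smith's" problem in your own content is a quick naming audit. The Python sketch below counts the surface forms of a brand name in a block of text. The `name_variants` helper and the sample copy are hypothetical, and the pattern is a rough heuristic (the base name plus an optional capitalized word), not a model of how AI systems resolve entities.

```python
import re
from collections import Counter


def name_variants(text: str, base: str) -> Counter:
    """Count surface forms of `base`, optionally followed by one capitalized word."""
    pattern = re.compile(rf"{re.escape(base)}(?:\s+[A-Z][\w']*)?")
    return Counter(match.group(0) for match in pattern.finditer(text))


# Hypothetical sample copy for illustration.
copy = (
    "Smith's Deli supplies local cafes. "
    "Order from Smith's today. "
    "Smith's Deli also caters."
)

variants = name_variants(copy, "Smith's")
consistent = len(variants) == 1  # more than one form means fragmented naming

print(variants)
print("consistent naming:", consistent)
```

If the audit returns more than one form, pick a canonical name and use it everywhere the brand is mentioned.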

Vague or unreferenced claims

Imagine the AI reading a paragraph where the claims float by with no anchors – no names, dates, or links tying ideas to credible sources.

That’s a perfect recipe for omission.

Why?

When a statement lacks clear evidence markers, the model treats it as unsafe for citation, even if the information is correct.

We’ve seen law firms frustrated when AI skips their legal insights in search.

The reason: articles often included assertions like “experts say” or “recent studies show” – but with no direct evidence attached.

Without explicit backing, the system takes the safer path and omits the firm from the result.

Here’s a myth: “If it’s factual, AI will reference it”.

That simply isn’t true.

The model doesn’t think like a human judge; it operates more like a risk-averse librarian – if the reference card’s missing, out goes the quote.

For decision-makers relying on search visibility, this makes sourcing logic feel almost arbitrary.

The AI’s safe attribution logic is clear: if a claim lacks explicit evidence, omission is the safety default.

Omission in AI answers protects trust thresholds and ensures only provable information is surfaced.
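
A simple pre-publication check can flag the kind of unanchored phrasing described above. The Python sketch below marks sentences that use a vague attribution phrase without a link or a year nearby. The phrase list, the evidence pattern and the `unanchored_claims` helper are assumptions made for illustration. This is an editorial heuristic and does not reflect how any AI system actually evaluates claims.

```python
import re

# Hypothetical list of vague attribution phrases; extend it for your own content.
VAGUE_PHRASES = re.compile(
    r"\b(experts say|recent studies show|research shows)\b", re.IGNORECASE
)
# Rough evidence markers: a URL or a four-digit year.
EVIDENCE_MARKERS = re.compile(r"https?://\S+|\b(?:19|20)\d{2}\b")


def unanchored_claims(text: str) -> list[str]:
    """Return sentences with a vague phrase and no evidence marker."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [
        s for s in sentences
        if VAGUE_PHRASES.search(s) and not EVIDENCE_MARKERS.search(s)
    ]


# Hypothetical draft copy for illustration.
draft = (
    "Experts say non-compete clauses are rarely enforced. "
    "Recent studies show enforcement varies by state (see https://example.com/report). "
    "Our firm handles employment disputes."
)

flagged = unanchored_claims(draft)
print(flagged)
```

Only the first sentence is flagged: the second one also uses a vague phrase, but it carries a link that anchors the claim.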

In short, if entity clarity or evidence signals falter, expect the AI to favor silence.

Next, we’ll trace how these silent gaps shift impact and trust for brands in sensitive or regulated markets.

Scientific context and sources

The sources below provide foundational context for trust calibration, cautious system behavior, source attribution, and omission in automated systems.

- Trust Calibration in Human-AI Interaction

Trust in Automation: Designing for Appropriate Reliance – John D. Lee, Katrina A. See – Human Factors

Trust in automated systems must be calibrated to match actual system capabilities. The paper shows that both overtrust (misuse) and undertrust (disuse) reduce system effectiveness, and that appropriate reliance depends on aligning user trust with system performance.

https://journals.sagepub.com/doi/10.1518/hfes.46.1.50_30392

- Error Avoidance and Cautious Strategy in AI Systems

Trust in Automation: Integrating Empirical Evidence on Factors That Influence Trust – Kevin A. Hoff, Masooda N. Bashir – Human Factors

Shows that trust directly influences whether users rely on or avoid automated systems. The review highlights that systems designed for safety often incorporate conservative behaviors to prevent misuse, supporting strategies such as cautious output and omission when confidence is low.

https://www.researchgate.net/publication/272887576_Trust_in_Automation_Integrating_Empirical_Evidence_on_Factors_That_Influence_Trust

- Explainability and Source Attribution in AI

Interpretable Machine Learning: A Guide for Making Black Box Models Explainable – Christoph Molnar

Explains that AI systems must provide interpretable outputs and traceable reasoning to be trusted. Emphasizes that transparency and the ability to understand how outputs are generated are central to perceived reliability and safe deployment.

https://christophm.github.io/interpretable-ml-book/

- Regulatory Compliance and Risk in Algorithmic Decision-Making

The EU General Data Protection Regulation (GDPR) – European Union

Establishes legal requirements for transparency, explainability, and accountability in automated decision-making. Introduces the principle that individuals have the right to meaningful information about how decisions are made, reinforcing the need for explicit, verifiable outputs in high-risk contexts.

https://gdpr-info.eu/

- Cognitive Biases and Omission in Automated Decision Systems

Fairness and Abstraction in Sociotechnical Systems – Selbst et al. – Proceedings of the Conference on Fairness, Accountability, and Transparency (FAT* '19)

Shows that design choices in algorithmic systems, including what information is included or omitted, directly affect outcomes and fairness. Demonstrates that omission is not neutral but reflects system-level trade-offs related to risk, bias, and abstraction.

https://dl.acm.org/doi/10.1145/3287560.3287598

Questions You Might Ponder

Why do AI systems omit content when attribution is unclear?

AI systems default to omission over uncertainty to protect against citation errors and maintain trust. When a fact or source can’t be reliably attributed, omission becomes a risk management choice to avoid misleading users or violating safety policies.

How does omission over uncertainty affect brand visibility in AI search?

Omission over uncertainty can suppress visibility for brands whose references or claims lack clear evidence or explicit attribution. This results in less surface area for discovery compared to competitors who meet stricter attribution thresholds.

What causes fragmented entity attribution in AI search results?

Fragmented entity attribution happens when brand, person, or organization names are mentioned inconsistently, with varied spellings or reference patterns. This inconsistency confuses AI attribution logic, triggering omission to avoid mismatches, even when the content is accurate.

Why is omission more common in regulated sectors like health or finance?

In heavily regulated sectors, AI models use higher harm sensitivity and stricter evidence rules. If there’s even slight ambiguity or insufficient citation supporting claims, omission occurs by design to minimize legal, reputational, or regulatory risks.

How can organizations reduce AI omission of their authoritative content?

Organizations should focus on consistent entity naming, explicit claim referencing, and providing clear sources. Stronger evidence signals and clearer attribution make it easier for content to clear the AI’s attribution threshold, increasing the chances it is cited rather than omitted.