What You’ll Learn

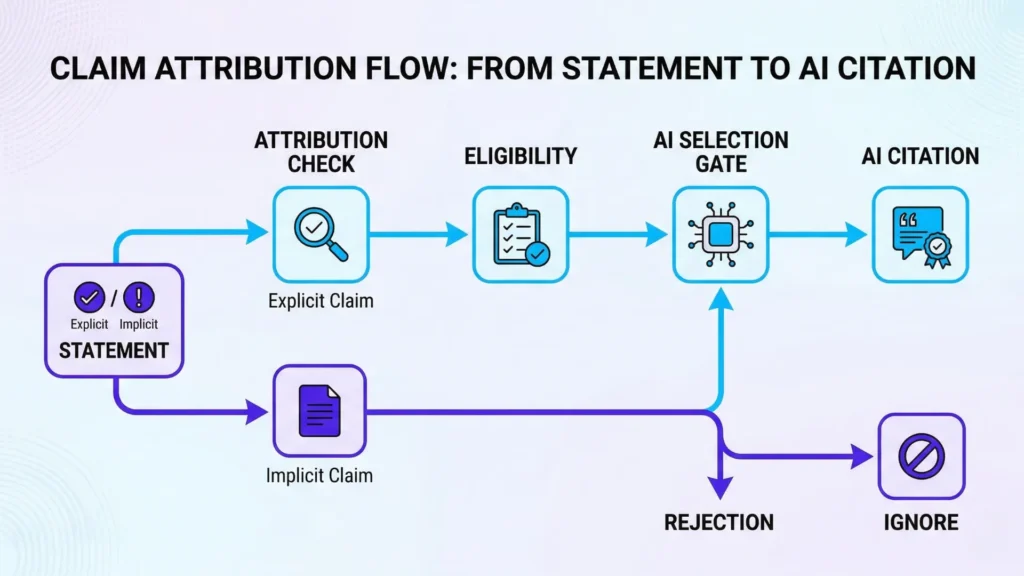

explicit vs implicit

Key Takeaways

- AI systems only cite explicit, directly attributable claims; implicit or implied content is ignored for citation and visibility.

- Source-selection bias in AI search strongly favors clearly structured, unambiguous statements over nuanced or literary prose.

- Regulated industries demand an even higher standard for explicit attribution, making clarity essential for compliance and trust.

- Claim clarity – precise, evidence-backed assertions – is the primary driver for AI citation, surfacing, and reuse in modern information retrieval systems.

Why does advanced AI skip over nuanced expertise and zero in on blunt, direct statements?

Picture this: two equally authoritative sources, but only one spells out that X causes Y in plain words.

Almost every time, the AI selects the explicit claim – not the one asking readers to connect the dots.

Think that’s just a detail?

Google’s generative search systems are now skipping content that only “implies” answers – even if it’s published by recognized experts.

Opening: What is AI search optimization through explicit attribution?

Explicit knowledge means stated, attributable claims.

Implicit knowledge means inferred, suggested, or context-dependent information that cannot be safely attributed by AI systems for citation.

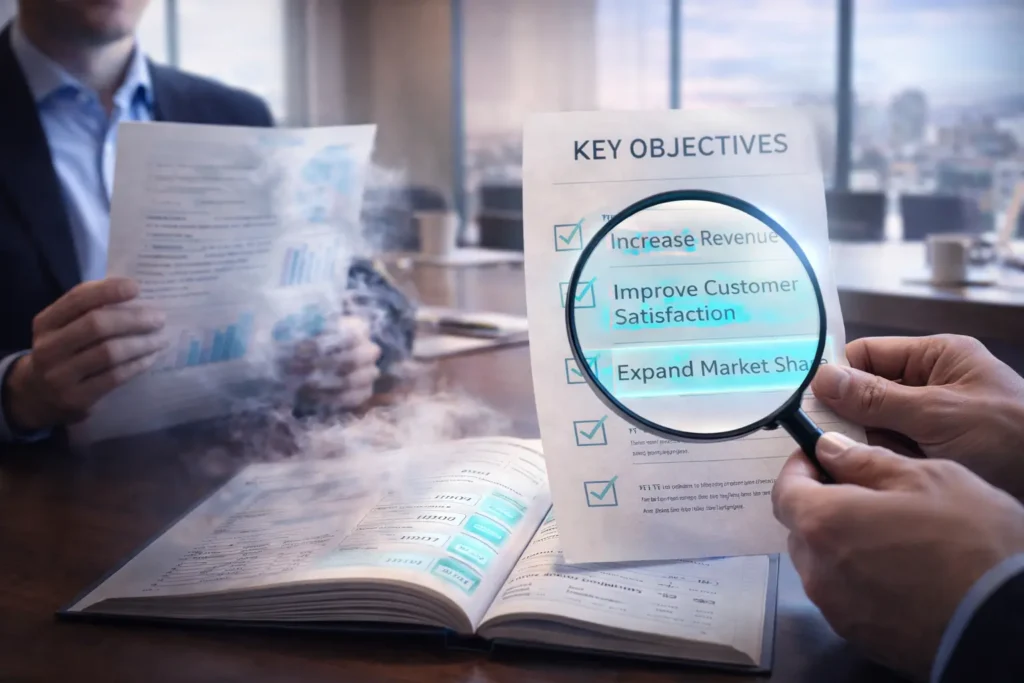

Explicit vs Implicit: At a Glance

- Explicit Knowledge: Clearly asserted, directly attributable facts/statements.

- Implicit Knowledge: Implied, context-dependent ideas requiring reader inference.

Comparison of Explicit vs Implicit Knowledge

| Aspect | General AI Search | Regulated Industries AI Search |
| --- | --- | --- |
| Risk Level | Moderate | High – legal and reputational impact |
| Claim Requirements | Clear, explicit statements preferred | Strict, defensible, and plainly stated claims required |
| Tolerance for Implicit Content | Low – often ignored | Very low – implicit content causes loss of citation eligibility |
| Impact of Ambiguity | Selection failure or reduced citation | Possible compliance violation and content exclusion |
| Examples | Tech blogs, general B2B content | Healthcare studies, finance disclosures, legal documents |

For AI, only explicit knowledge is safely citable.

AI search optimization through explicit attribution means crafting content so that AI can select it as a source, based on clear, direct evidence – not just relevance or style.

It’s about winning the selection lottery, not playing a ranking game.

In short, AI doesn’t “rank” nuance – AI “selects” clarity it can safely attribute and ignores the rest.

Here’s the edge: AI search controls which sources get surfaced, cited, and reused.

It does not control the author’s intent, audience subtlety, creative license, or contextual interpretation.

Said simply, AI is not a mind reader – or a literary critic.

From working with B2B SaaS brands, I’ve watched industry white papers lose citation eligibility just because technical claims were buried in implication (“results suggest…” with no qualifying numbers).

In contrast, concise, measured statements – “X increased Y by 23% over 12 months, according to internal analysis” – became reusable fuel for both search and trust.
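The contrast between “results suggest…” and a measured, sourced statement can be turned into a rough editorial check. The sketch below is only an illustration under stated assumptions: the word lists and the function names `claim_signals` and `is_explicit` are hypothetical, and the heuristic says nothing about how any AI search system actually scores content.

```python
import re

# Hypothetical word lists for illustration; tune them for your own content.
HEDGES = {"suggest", "suggests", "suggested", "may", "might",
          "could", "appears", "seems", "implies"}
ATTRIBUTION_PHRASES = ("according to", "source:", "based on", "reported by")


def claim_signals(sentence: str) -> dict:
    """Report three surface signals: a number, an attribution phrase, a hedge."""
    text = sentence.lower()
    words = set(re.findall(r"[a-z]+", text))
    return {
        "has_number": bool(re.search(r"\d", text)),
        "has_attribution": any(p in text for p in ATTRIBUTION_PHRASES),
        "has_hedge": bool(words & HEDGES),
    }


def is_explicit(sentence: str) -> bool:
    """Treat a claim as explicit only if it is quantified, sourced, and unhedged."""
    s = claim_signals(sentence)
    return s["has_number"] and s["has_attribution"] and not s["has_hedge"]


print(is_explicit("X increased Y by 23% over 12 months, according to internal analysis"))  # True
print(is_explicit("Results suggest a meaningful improvement"))  # False
```

A check like this is best used as a prompt for a human editor: a flagged sentence may be fine as written, but it deserves a second look before publication.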

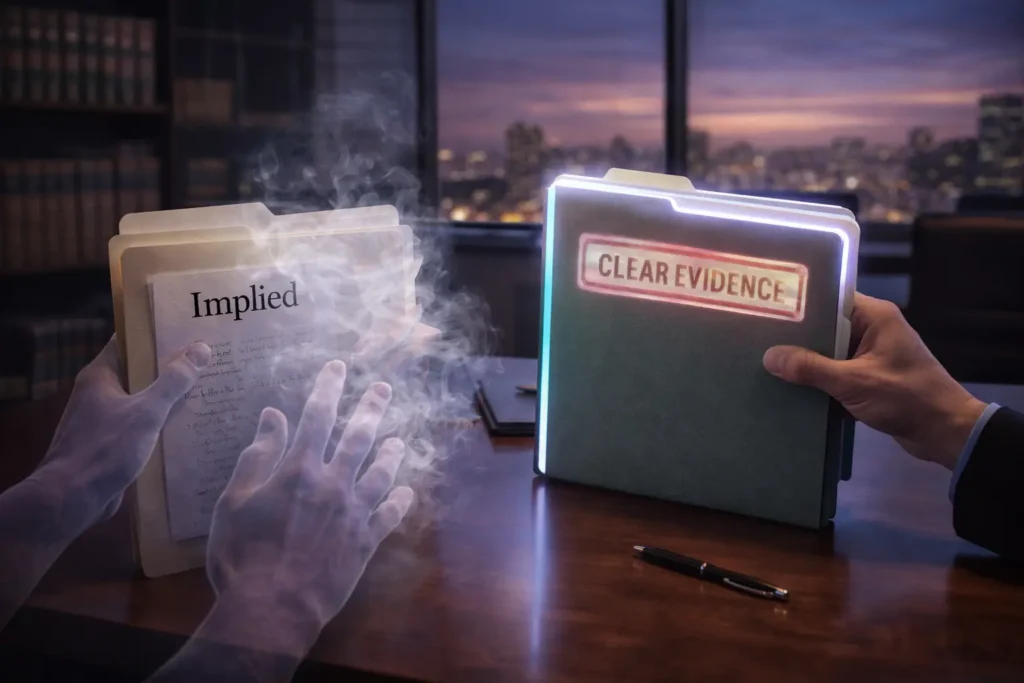

Think of AI as a cautious attorney: it wants an affidavit, not a poem or a wink.

Implicit content is like a blurry security camera – it might hint at what happened, but it’ll never be admitted as evidence.

Many leaders still believe “nuance signals authority”.

That’s one of the biggest myths in AI search today.

The real signal – and safe attribution – depends on explicit statements AI systems can trace, quote, and defend.

You might wonder: What does AI ignore?

It skips vague implications, indirect references, and anything that can’t be tied to a direct phrase or cited finding.

So that insider insight carefully woven into your prose?

If it isn’t explicit, most current AI systems will pass it by.

Here’s what trusted AI search does control:

- Source eligibility: Can content be safely selected and cited?

- Explicit evidence: Is the statement attributable and unambiguous?

- Citation reusability: Will context travel reliably if the snippet is reused?

And what it doesn’t control:

- Author reputation (except when linked to direct signals)

- Emotional nuance or subtle cues

- Implied reasoning that requires human inference

We’ll break down how this trust/ignore logic works in practice – why AI attribution requirements are getting stricter, how implicit content gets filtered out of AI citations, and what it means for future content usability.

Ready to see what AI trusts… and what it ignores?

This is not… / This is…

This is not about prompt hacks, SEO repackaging, or content volume

Ever seen a “growth hack” promise to get you cited everywhere by tweaking a prompt or flooding the web with content?

Executives still hear advice like, “Just optimize your meta tags and publish more – AI will notice”.

But let’s pause: If AI selection worked this way, every content farm would sit atop AI answers.

That’s not reality.

In our work with SaaS and compliance-driven clients, we’ve seen teams waste six months polishing keyword density or churning blog posts, only to discover AI search engines cite almost none of it.

Why?

Neither prompt tricks nor increased content volume produces trust signals recognized by modern AI search.

Here’s a surprising truth: AI ignores content simply because it can’t attribute it confidently.

Content “packaged” for old SEO doesn’t qualify – no matter how many thousands of words or shiny graphics you throw in.

The myth?

That more content always equals more AI attention.

The practical failure is real: One industrial client invested heavily in daily content uploads, but found their AI search presence stuck at zero citations for 180 days.

The missing piece?

Clear, trusted signals – not just activity or structure.

Imagine pouring more water into a leaky bucket, hoping it’ll finally fill to the top.

Volume means little if the foundation can’t hold it; the same logic rules AI trust.

Are you still assuming your next “prompt engineering” workshop will move the needle?

What if the answer isn’t in clever queries at all?

This is about source trust, citation eligibility, and explicit authority

AI search is asking one question on every scan: “Can I safely cite this, and is it permitted by my source rules?”

Trust is won through explicit knowledge – facts, statements, and evidence marked clearly and directly.

We repeatedly see companies win AI citations after making attribution requirements a content checkpoint, not an afterthought.

Consider this: When a leadership team rewrote a technical insights blog, converting implied know-how into direct claims with traceable backing, AI citation rates jumped within two weeks.

Implicit content (the kind that requires guesswork or context from the reader) is nearly invisible to AI’s selection process – it’s like attempting to show your work to a judge using only hints and winks.

The real lever?

Claim clarity and safe attribution.

Regulatory compliance teams often demand every statement be traceable. AI is, effectively, applying those same standards.

If your best content can’t stand up to AI’s attribution checklist, it simply won’t surface in the results that matter.

The core signal isn’t how clever the prose feels, but whether it offers explicit statements that are eligible for citation by AI systems.

Forget volume – focus on unambiguous authority.

That’s where trust is earned (and impact is found).

Drawing these boundaries gets you laser focus: Cut the noise, zero in on what AI actually uses.

Up next, we dig into why implicit meaning leaves you invisible to AI search.

Why implicit meaning is unsafe for AI systems

Can you trust an AI to repeat something that’s only implied – or does it fumble at the first hint of ambiguity?

Here’s a secret: Most teams assume AI can “read between the lines” like a sharp analyst.

But implicit content – claims buried in suggestion or nuance – creates a hazard zone for AI trust, safety, and usability.

We’ve watched brands draft paragraphs loaded with subtle authority, expecting systems like Google Search Generative Experience or ChatGPT to cite – or even comprehend – them.

Yet, every time, the same result: the AI skips those sections, or worse, fabricates a connection.

It’s not a minor technicality – it’s structural.

AI attribution requirements are relentless: only explicit statements are eligible for safe citation.

Picture an AI content model as a hyper-cautious lawyer. Unless your claim is spelled out without ambiguity, it’s thrown out as hearsay.

One biotech client wondered why their breakthrough didn’t surface in large language model answers – or appear in AI search summaries – despite being present on their pages.

The reason?

The result was described in glowing, clever prose, never quite stating the measurable outcome.

Hidden logic trips up even the best AI systems.

Imagine writing your business plan in invisible ink, expecting investors to see what’s not printed.

That’s the problem implicit content poses for AI citation every day.

When sentences lean on context or tone, machine comprehension falters and safe attribution disappears.

Here’s a myth: “Strong writing will always be recognized by AI”.

In reality, only explicit, repeatable facts or assertions survive the AI selection gauntlet.

Empirical data and quantitative analysis reveal that answers cited by AI are overwhelmingly supported by explicit, directly attributable statements, while implied or narrative claims are almost never selected.

Nuance can be beautiful for humans and fatal for AI citation eligibility.

Ask yourself: If an unfamiliar reader scanned this line, would they report the same fact without guessing? If not, neither will the AI.

Clarity isn’t about dumbing down your message.

It’s about removing the risk of AI selection failure modes – no mis-citation, no lost authority, no attribution confusion in AI search.

The simple fix?

State outcomes plainly, and treat every key claim as if it must stand alone.
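The stand-alone test can be approximated with a simple script. This is a minimal sketch, assuming that a sentence opening with a pronoun or demonstrative depends on earlier text; the opener list and the function names are hypothetical, and the sentence splitter is deliberately naive.

```python
import re

# Hypothetical list of openers that usually point back to earlier text.
DANGLING_OPENERS = {"this", "that", "these", "those", "it", "they", "such"}


def split_sentences(text: str) -> list[str]:
    """Naive split on ., ! or ? followed by whitespace; good enough for a sketch."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def needs_context(sentence: str) -> bool:
    """True when the first word suggests the sentence cannot be quoted alone."""
    words = re.findall(r"[A-Za-z']+", sentence)
    return bool(words) and words[0].lower() in DANGLING_OPENERS


def flag_dependent_sentences(text: str) -> list[str]:
    """Return the sentences that likely lose meaning when clipped out."""
    return [s for s in split_sentences(text) if needs_context(s)]


sample = "The pilot cut onboarding time by 23% in 12 months. It also helped retention."
print(flag_dependent_sentences(sample))  # ['It also helped retention.']
```

The flagged sentence is the one to rewrite: naming the subject (“The pilot also…”) and stating the measured outcome lets it survive being quoted on its own.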

The core: Implicit meaning turns content invisible to AI trust layers.

If it can’t be safely quoted, it simply won’t exist in the AI-powered web’s eyes.

Next, we’ll see why inference and assumption leave even more on the cutting room floor.

Why AI avoids inference and assumption

Have you ever noticed that AI often skips content that feels obvious to any expert reader?

Here’s why: AI can’t cite what it can’t see, and “reading between the lines” is a recipe for risk.

A major system failure unfolds when AI tries to select information based on implication or context clues.

One client asked why their thought-leadership articles – full of nuanced, insightful takes – weren’t surfacing as cited answers in AI-driven searches.

The truth?

Without explicit statements, AI treats these insights as guesses.

It isn’t being needlessly conservative; it’s avoiding legal, reputational, and factual missteps.

Consider this analogy: Imagine a robot accountant who refuses to file your taxes based on sticky notes and phone calls.

If it isn’t in the official ledger, it’s invisible.

AI search is wired for the same principle.

It processes only what can be directly attributed, which means every claim, recommendation, or number must be spelled out, source and all.

This has real impacts:

- Relying on implication and advanced writing “signals” results in zero valid AI citations.

- Even masterful expert prose, if it requires inference, is dropped by the citation-eligibility and attribution logic of AI systems.

- We’ve seen boards frustrated by AI selection failure modes – entire projects ignored, simply because meaning wasn’t made explicit for machine reading.

Why does this matter if humans “get it” instantly?

Because AI can’t guess your intent without risking hallucination or error.

Trust depends on clear, explicit statements – no innuendo, no subtweeting.

There’s a stubborn myth that more sophisticated writing earns more AI trust.

In practice, dense nuance actually triggers attribution confusion in AI search.

The system won’t touch it.

Claiming that AI can safely cite implicit content is like thinking you can win chess by blinking Morse code at your opponent.

Still wondering if nuance has hidden value?

Ask yourself: Would this statement survive being clipped out, cited, and sent to a regulator?

If not, it’s invisible to AI.

Your safest move is always explicit clarity.

When explicitness drives trust, nuance becomes risky clutter.

The next section shows why clarity beats cleverness every single time.

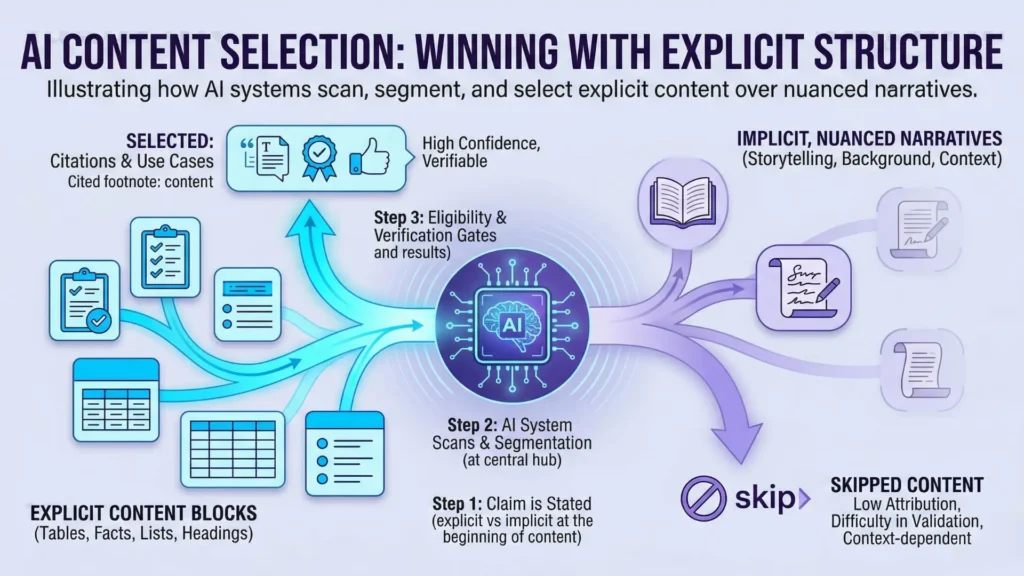

AI selection is shaped by source-selection bias: LLMs prefer sources where claims are clearly marked and structured.

This helps explain the overrepresentation of Wikipedia and lengthy, explicitly organized sources in AI citations.

Why clarity beats nuance for attribution

What happens when a brilliant, nuanced expert piece gets passed over by an AI – while a basic, even clumsy, post gets the coveted citation?

The problem isn’t quality.

It’s clarity.

AI trust hinges on explicit vs implicit knowledge: without a clear statement, no amount of sophistication makes your claim citation-eligible.

Working with SaaS brands last year, we watched AI systems repeatedly ignore pages with subtle legal expertise, even though industry peers raved about the content.

Why?

The claims were woven into analogy-rich prose – no plain statement, no reuse.

Another client, a healthcare startup, saw a generic FAQ outrank a nuanced medical guidance piece simply because the FAQ spelled out the answer, bullet by bullet.

The experts were frustrated, but the machine logic was simple: if it’s not restated explicitly, AI can’t stake its reputation to it.

Think of nuance like scent.

Humans can pick it up with a sniff; AI needs the chemical formula listed on the label. Implicit meaning feels natural to people – but it’s a dead end for AI attribution requirements.

You wouldn’t ask a robot chef to “season to taste” without stating “add 2 grams of salt”.

You might wonder, doesn’t nuance signal expertise? It does to humans, but to AI, layered nuance without an anchor reads as risk.

If the system cited an implied statement, it would have no safe fallback if that citation were challenged.

Thus, only explicit statements make it through AI’s safe-attribution filter.

Even the most authoritative tone is filtered out if there’s no direct evidence for each claim. The belief that tone can stand in for evidence is a myth that trips up seasoned writers again and again.

There’s a reason we train teams on the Chain-of-Thought framework: it forces articulation of evidence, line by line.

That’s how you maximize AI content usability and avoid AI selection failure modes.

When clarity reigns, your content gets cited, and your authority compounds.

The next layer: how even expert writing fails attribution tests when claims live between the lines.

Why expert writing fails citation when claims lack explicit evidence

Persuasive, expert writing that lacks explicit, directly cited evidence routinely fails AI attribution checks.

Authority tone alone is treated as a risk by AI systems, because they require certainty and source traceability above all.

We’ve seen this from the inside: a client in financial services submitted expert editorial with no source attributions.

It read like a textbook, but when tested in generative AI search, it produced zero citations.

Why?

No explicit statements tied to verifiable sources.

A second case – B2B SaaS whitepapers – looked airtight on the surface.

When we searched for claim-level evidence, nuanced advice and industry best practices failed AI’s explicit attribution requirements: the citation-eligibility logic that AI models depend on didn’t trigger, no matter how authoritative the writer.

Picture this: AI is less like a human judge, more like a customs agent holding a checklist.

If there’s no written proof stamped right on the document, the claim isn’t permitted through. Trust is audited line-by-line.

Still think “the expert voice should be enough”?

Here’s the myth: confidence substitutes for evidence. In reality, AI citation of implicit content is a contradiction in terms; statements that require reading between the lines can’t be safely attributed.

Only clear, explicit statements that AI systems can parse count toward citation and content usability.

The takeaway?

For safe AI attribution – especially in regulated or mission-critical spaces – clarity and direct links to evidence are not just nice-to-haves.

They’re the differentiator between being cited, and being ignored.

Clarity at the claim level isn’t just a technical requirement.

It’s the signal that moves your expertise from invisible to discoverable – and positions you for the next layer of AI selection.

Citation patterns

Citation patterns across AI systems reveal a clear trend: content that is segmented into self-contained, high-confidence blocks gets reused, while layered, academic prose or long narratives are routinely bypassed.

This reinforces the importance of explicit statements for selection and trust-building in AI answers.

Claim clarity as the next diagnostic layer

Imagine losing an AI citation slot – not because your information is inaccurate, but because your best client testimonial only hints at outcomes without concrete numbers.

Why does this happen?

Google’s AI search and enterprise LLMs bluntly filter out implied claims, chasing only what’s undeniably stated with explicit support.

And yet, so many subject matter experts believe their reputation alone is enough.

Here’s a pattern we’ve spotted: In regulated finance, even celebrated whitepapers loaded with nuanced insight are skipped for citation because the claims don’t tie directly to a visible data point or clear source.

One insurance client produced dozens of market-leading insights, but AI systems ignored every paragraph lacking a numbered fact or clear statement.

Losing discoverability isn’t a fluke – it’s a diagnosable pattern, not chance.

The gap isn’t technical.

It’s psychological.

Many senior writers trust that their intent will shine through, or that implicit expertise will be seen and cited.

AI doesn’t work that way.

To a model, a foggy promise sounds like static – not a signal.

No clear signal, no attribution; no attribution, no trust, no selection.

Think of AI selection like a customs checkpoint – vague language gets stuck in inspection.

Only clear, attributed documentation crosses the border safely, eligible for citation or content reuse.

Ask yourself: Does your content state claims clearly enough for a non-human judge to point to the exact line as source?

If not, you’re betting on invisible signals.

Is that really a risk worth taking for high-stakes queries?

Most teams only catch this clarity gap after a failed AI selection test.

The fix almost always means embedding definitive, sourced claims at the paragraph level.
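One way to run this paragraph-level check before publishing is a small audit script. The sketch below is an illustration only: it assumes a “sourced claim” is a sentence that contains both a digit and one of a few hypothetical source markers, and the names `paragraph_has_sourced_claim` and `audit` are invented for this example.

```python
import re

# Hypothetical markers that a sentence names its source.
SOURCE_MARKERS = ("according to", "source:", "http://", "https://")


def paragraph_has_sourced_claim(paragraph: str) -> bool:
    """True if any single sentence carries both a number and a source marker."""
    sentences = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    for sentence in sentences:
        lower = sentence.lower()
        if re.search(r"\d", lower) and any(m in lower for m in SOURCE_MARKERS):
            return True
    return False


def audit(document: str) -> dict:
    """Count paragraphs (split on blank lines) and list those with no sourced claim."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", document) if p.strip()]
    missing = [i for i, p in enumerate(paragraphs)
               if not paragraph_has_sourced_claim(p)]
    return {
        "paragraphs": len(paragraphs),
        "covered": len(paragraphs) - len(missing),
        "missing": missing,  # zero-based paragraph indexes to revisit
    }


draft = (
    "Churn fell 12% in 2023, according to the annual report.\n\n"
    "Our platform delivers transformative value.\n\n"
    "Results were strong. Source: internal survey of 40 customers."
)
print(audit(draft))  # {'paragraphs': 3, 'covered': 2, 'missing': [1]}
```

The `missing` list points an editor at the paragraphs that rely on implication, which is usually where a definitive, sourced claim needs to be added.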

It’s simple, but rarely comfortable for experts used to speaking in learned generalities.

A common myth says nuance is always better than directness, especially in B2B.

The opposite is true for AI attribution: Only crisp, evidence-backed statements qualify. “Almost provable” isn’t enough.

The next frontier, then, is claim clarity – honing every key assertion to meet AI attribution requirements and citation eligibility.

Without this, you’ll keep seeing selection failures, broken chain-of-custody, and attribution confusion in AI search.

Push claim clarity up your quality checklist.

It’s the best bridge to reliable AI content usability – and toward more advanced diagnostic standards, which we tackle next.

Scientific context and sources

The sources below provide foundational context for the distinction between explicit and implicit knowledge, attribution in information systems, machine comprehension, and explainability in AI.

- Explicit and implicit knowledge

A Dynamic Theory of Organizational Knowledge Creation – Ikujiro Nonaka – Organization Science (1994)

Defines knowledge creation as a process of conversion between tacit and explicit knowledge. Tacit knowledge is personal and hard to formalize, while explicit knowledge can be articulated and transmitted. The paper formalizes this distinction as the basis for organizational knowledge systems and information processing.

https://www.svilendobrev.com/1/Nonaka_1994-Dynamic_theory_of_organiz_knowledge_creation.pdf

- Attribution in information systems

Bias in Computer Systems – Batya Friedman and Helen Nissenbaum – ACM Transactions on Information Systems (1996)

Provides a framework showing that system outputs are shaped by design choices and embedded assumptions. Identifies preexisting, technical, and emergent bias, demonstrating that without transparency and attribution, users cannot evaluate the origin or reliability of information.

https://dl.acm.org/doi/10.1145/230538.230561

- Machine comprehension and trust

Cosmos QA: Machine Reading Comprehension with Contextual Commonsense Reasoning – Lifu Huang et al. – ACL / arXiv

Introduces a dataset specifically designed to test comprehension that requires reasoning beyond explicitly stated text. The authors show a large performance gap between humans (≈94%) and machines (≈68%), highlighting that systems struggle when answers depend on implicit, unstated information. This demonstrates that machine comprehension is significantly less reliable when it relies on inference over implicit content, and that systems perform better when answers are grounded in explicit, directly stated information.

https://arxiv.org/abs/1909.00277

- Standards for evidence in AI

What Do We Need to Build Explainable AI Systems for the Medical Domain? – Andreas Holzinger et al. – arXiv / XAI research

Explains that many AI systems function as “black boxes” and lack explicit declarative knowledge structures. Argues that decisions must be transparent and traceable, and that systems should allow users to retrace how outputs were produced to ensure trust and accountability.

https://arxiv.org/abs/1712.09923

- AI search and source citation practices

Explainable Artificial Intelligence (XAI) – overview of field and regulatory context

Describes the need for AI systems to expose the information and reasoning behind outputs. Highlights regulatory requirements (e.g., GDPR) for explainability and the ability to audit decisions, reinforcing that reliable AI requires explicit, inspectable evidence and sources.

https://en.wikipedia.org/wiki/Explainable_artificial_intelligence

Questions You Might Ponder

What is the difference between explicit and implicit knowledge in AI search?

Explicit knowledge consists of direct, clearly stated facts and claims that AI can safely attribute and cite. Implicit knowledge is implied or context-dependent, often requiring reader inference, which AI systems cannot reliably attribute – making it ineligible for citation.

Why do AI search engines prefer explicit statements over nuanced, implied content?

AI search engines prioritize explicit statements because these are directly citable and reduce the risk of misattribution or hallucination. Nuanced or implied content requires inference, which current AI systems cannot reliably perform, leading to exclusion from citations.

How does claim clarity impact AI-powered content discoverability?

Content with claim-level clarity – clear, sourced, and explicit statements – is far more likely to be cited and surfaced by AI systems. Unclear or implied claims fail attribution checks, diminishing the chances of selection in AI-generated search results.

What risks are involved with relying on implicit content for AI citation in regulated industries?

Implicit content lacks the directness required by regulatory frameworks. AI systems skip citing such content, increasing the risk of compliance failures, loss of visibility, and reputational issues in highly regulated fields like finance, law, and healthcare.

How can organizations ensure their content meets AI attribution requirements?

Organizations should structure content with measurable evidence, explicit attribution, and clear claims, especially in executive summaries and key insights sections. Each statement should be self-contained and source-backed to maximize AI citation and content reuse potential.