What You’ll Learn

evidence thresholds

Key Takeaways

- AI evidence thresholds filter out any claim lacking robust, machine-verifiable proof, prioritizing documented facts over consensus or intuition.

- Claims about high-risk topics (like clinical, legal, or financial advice) face stricter thresholds, resulting in conservative and generic outputs.

- Fragmented entities, poor citation alignment, or ambiguous provenance lead to automatic claim exclusion, regardless of how credible the claim seems.

- The underlying conservative answer bias is applied intentionally to minimize the risk of unsupported claims, making evidence sufficiency the defining factor in AI responses.

AI systems include claims only when evidence meets internal safety thresholds.

This page explains exactly how these thresholds work – and why unsupported or risky claims are systematically excluded by AI search engines.

Defining evidence thresholds in AI search

Key AI Evidence Threshold Definitions

| Decision Door | Description | Examples / Insights |
| --- | --- | --- |
| Insufficient evidence strength | Claims without strong, verifiable support are omitted. | E.g., 40% of expert snippets blocked in fintech audits for lack of machine-verifiable citation. |
| High-risk topic without evidence backup | Risk-weighted evidence gating for regulated or sensitive topics blocks unsupported claims. | E.g., healthcare AI blocks nuanced recommendations without multiple clinical sources. |

Definitions:

- AI evidence thresholds: The minimum level of verifiable support required for an AI system to include a claim in generated answers.

- AI claim inclusion threshold: The diagnostic checkpoint that determines whether a specific claim is safe for AI repetition, varying by topic and risk.

- AI citation safety threshold: The benchmark for structured, machine-verifiable references required before a claim can be quoted by AI systems.

AI systems demand proof, not assumption

Picture this: an AI search tool is 99% confident in a claim, yet leaves it out because there is zero solid proof. Sound backward?

Actually, it’s by design.

No matter how “obvious” something seems, AI will skip it if it can’t hook the claim to robust, verifiable evidence in its indexed sources.

This means internal confidence, user consensus, or “common knowledge” do not automatically survive the filter – only well-documented facts clear the gate.

We’ve seen clients stunned when their perfectly logical statements were omitted by the system.

One startup founder asked, “Why won’t the AI repeat my own press release?”

The answer: the press release wasn’t mirrored in a neutral, persistent source indexed by the AI, so the claim died at the evidence gating step.

Another leader found that AI refused to mention a new award win until it appeared in multiple verifiable outlets, not just in marketing assets.

These barriers frustrate, but they prevent hallucinations and accidental misinformation from cascading into high-stakes workflows.

Think of AI evidence thresholds as a bouncer at an exclusive club.

Only claims with a verified ID and authenticated credentials get in.

It doesn’t matter if you look the part – no documentation, no entry.

This mechanism preserves trust but can feel rigid, especially for executive users used to moving fast.

You might find yourself wondering, “If everyone believes it, why won’t the AI just say it?”

This is by design: evidence sufficiency, not surface-level truth, is what gates inclusion.

The myth?

That “truth” in the everyday sense is enough.

In practice, only what passes a proof-required threshold appears in the result.

Systems like Google’s AI Overviews and enterprise LLM copilots reference indexed documentation, oftentimes applying the AI citation safety threshold model to block any claim lacking direct evidence.

Worth noting: this explains the all-too-common “why didn’t it answer my question?” moment.

The system isn’t confused. It’s just stricter than a human.

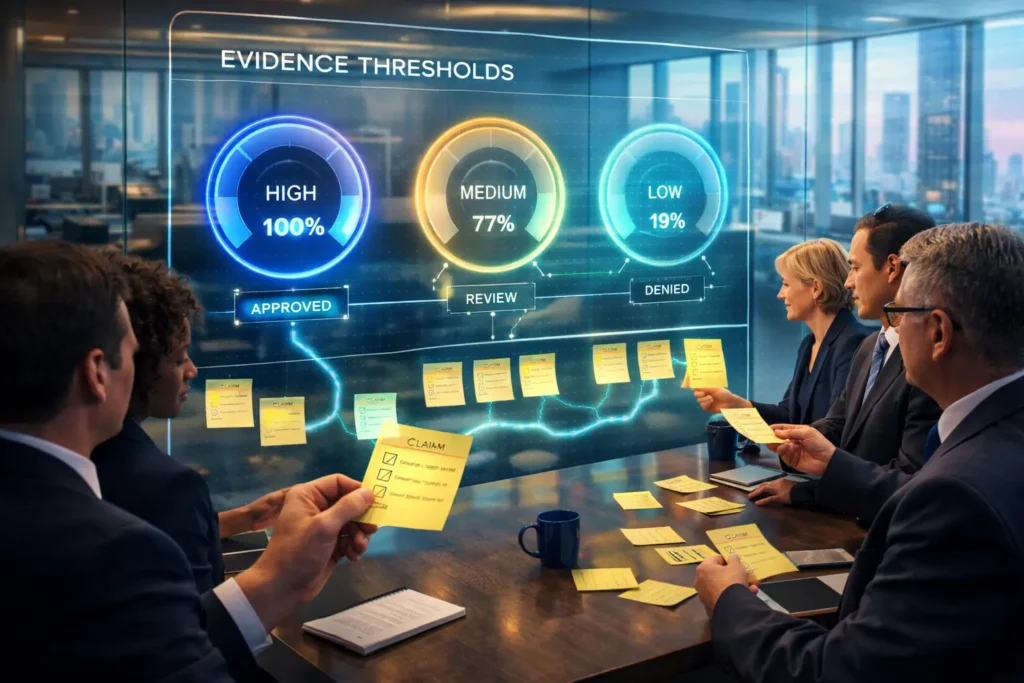

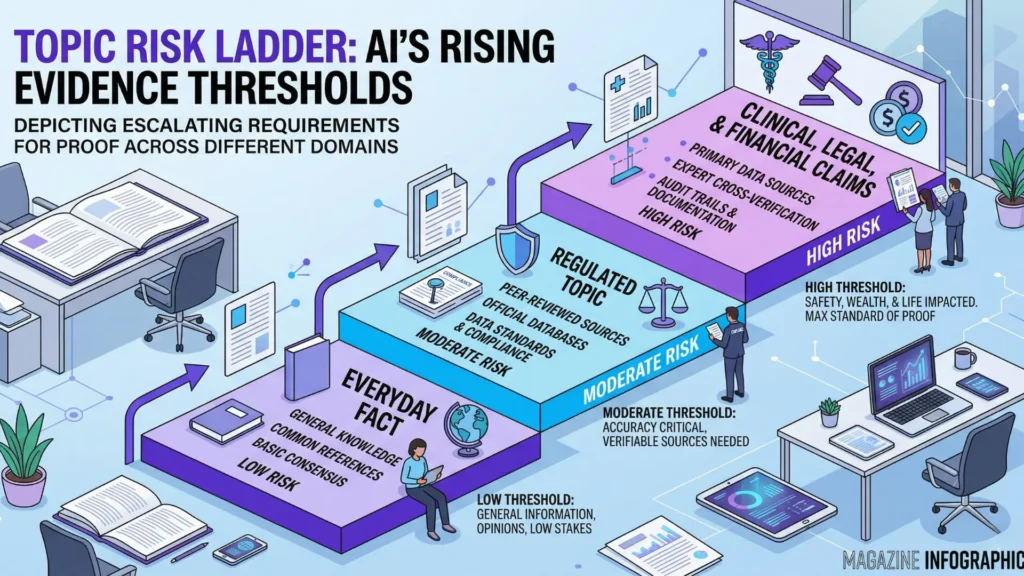

How topic risk affects threshold

If you think the bar is high for everyday facts, watch what happens when topics get sensitive.

Clinical claims, financial advice, and anything touching compliance trigger a risk-weighted evidence scan.

The result: the required proof bar rises sharply.

Clients have watched their medical FAQs reduced to bland, conservative statements.

One healthcare CMO saw AI search engines strip away specificity unless data was documented in regulated sources.

Legal-focused teams saw similar patterns – their nuanced market insights were omitted unless matched directly to “proof‑required” references, sometimes even demanding an audit trail down to the paragraph or table.

Here’s the analogy: normal topics are checked like TSA screening, but high-risk claims pass through a climate-controlled, multi-stage security vault.

Every layer adds friction but also protects you and your company.

Ever found yourself frustrated when AI omits what you know to be safe?

The logic is defensive rather than clever: it favors a conservative answer bias over completeness.

This filtering grows stricter as claim risk rises, leading to unsupported claim omission and a clear preference for generic, deeply validated information.

Setting the evidence threshold isn’t just policy – it’s the core safety mechanism shaping every single answer you get.

For both everyday topics and higher-risk spaces, AI evidence sufficiency rules act as the silent editor behind every compliant output.
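To make the idea concrete, here is a minimal Python sketch of risk-weighted gating. It is an illustration only, not how any specific AI search engine is implemented: the `RISK_THRESHOLDS` values, the `Claim` type, the `evidence_score` field, and the `passes_gate` function are all hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical per-topic thresholds; the numbers are illustrative only.
RISK_THRESHOLDS = {
    "general": 0.5,
    "financial": 0.8,
    "legal": 0.8,
    "clinical": 0.9,
}


@dataclass
class Claim:
    text: str
    topic: str
    evidence_score: float  # assumed 0..1 score from some upstream verifier


def passes_gate(claim: Claim) -> bool:
    """Include the claim only if its evidence score meets the topic's bar."""
    # Unknown topics fall back to the strictest threshold (a conservative default).
    strictest = max(RISK_THRESHOLDS.values())
    threshold = RISK_THRESHOLDS.get(claim.topic, strictest)
    return claim.evidence_score >= threshold
```

With these assumed values, the same claim scoring 0.6 would pass as a general topic but be dropped as a clinical one, which is the pattern described above: the riskier the topic, the higher the bar.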

Why unsupported claims are excluded

Ever wonder why AI seems skittish about certain facts – almost as if it’s dodging your question?

That’s not a glitch.

It’s a direct result of hard evidence gating in AI answers. Imagine standing at an airport security checkpoint with a bag full of half-labeled electronics; no agent is letting you through unless every item’s origin is crystal clear. AI applies a similar gate to claims: admit no answer without verifiable backing.

Fragmented entities and missing provenance

Here’s something most teams miss: AI will omit even widely circulated claims if the evidence trail looks like an unsolved jigsaw puzzle.

I’ve seen multi-million-dollar projects where a source appeared trustworthy, only to have a secondary identifier not quite match. Instantly, claim blocked.

One client’s product launch fizzled online because positive reviews were tied to ambiguous user handles; AI systems flagged the feedback as unverifiable fragments instead of discrete, trusted identities.

The practical meaning? If a claim’s breadcrumbs don’t link cleanly to a provable, stable entity, that fact is ejected.

Not softened, not caveated – just gone.

This isn’t excessive caution; it’s the only way for proof-required AI claims to avoid identity confusion that can spiral into legal or reputational trouble.

As a result, unsupported claim omission cuts deeper than most experts expect.

Ask yourself: would you trust an answer if even the AI can’t track down who actually said it?
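The entity-matching idea can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of any real system: the `resolve_entity` function and the `entity_id` field are hypothetical, and real entity resolution is far fuzzier than an exact ID comparison.

```python
def resolve_entity(records):
    """Return the one entity ID shared by all records, or None.

    Hypothetical rule: a claim is attributable only if every supporting
    record points at the same, non-missing entity identifier.
    """
    ids = {record.get("entity_id") for record in records}
    if len(ids) == 1 and None not in ids:
        return ids.pop()
    # Conflicting, missing, or absent identifiers: treat the claim as unattributable.
    return None
```

In this sketch, two records that both carry `"acme-inc"` resolve cleanly, while a single mismatched or missing identifier is enough to return `None`, mirroring the "secondary identifier not quite match" scenario above.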

Weak structure and absence of citation alignment

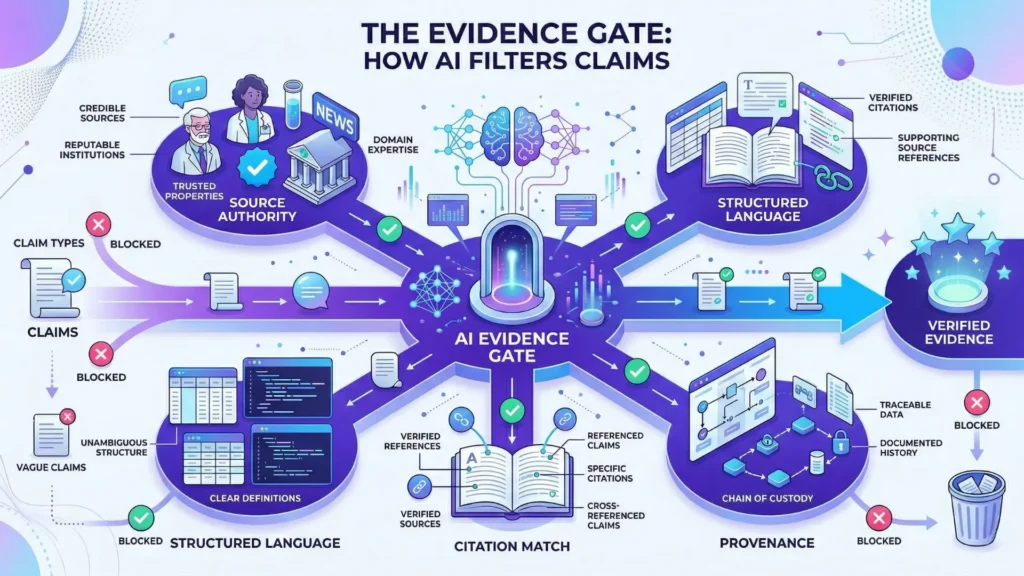

Common evidence signals required for AI inclusion:

- Verifiable entity authority (clear, persistent source identity)

- Structured content (use of names, numbers, fixed references)

- Source credibility (recognized authority or neutral third-party)

- Citation alignment (exact match to machine-checkable references)

- Provenance (traceable content history)
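One way to use the list above is as a pre-publication checklist. The Python sketch below is an assumption-laden illustration: the `EvidenceSignals` type and `missing_signals` function are hypothetical, and the yes/no flags stand in for judgments a content team would make by hand.

```python
from dataclasses import dataclass, fields


@dataclass
class EvidenceSignals:
    # One flag per signal in the list above; all names are illustrative.
    entity_authority: bool
    structured_content: bool
    source_credibility: bool
    citation_alignment: bool
    provenance: bool


def missing_signals(signals: EvidenceSignals) -> list:
    """Return the names of the signals a claim still lacks, in list order."""
    return [f.name for f in fields(signals) if not getattr(signals, f.name)]
```

A claim that reports any missing signal is a candidate for rework before publication, for example by adding a named source or a traceable reference.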

The myth that AI always favors the most recent or detailed answer falls apart in practice.

If a claim lacks structured language (like proper names or clear quantitative links), or if its source can’t be tightly mapped to a cited authority, it quietly disappears from the answer set.

I recall running a content audit for a fintech firm – half their best differentiators vanished in AI search, simply because early-stage press releases missed aligning with recognized citation formats.

Think of AI as sorting answers with a sieve, not a net.

Coarse or unstructured evidence drifts right through the holes.

The citation safety threshold acts as its mesh size: only claims with dense, reference-matched structure survive.

For leaders: weak signal equals weak shelf life.

When language and linkability don’t sync, omission isn’t just possible; it’s guaranteed.

This is why AI evidence sufficiency isn’t about how true something feels or how often it’s repeated.

The test is whether the construction of the claim and its data trail match the standard set by evidence gating in AI answers.

In short: If the proof isn’t there, neither is your claim.

This pattern sets the stage for understanding why conservatism is built into every AI response.

Conservatism as a safety default

Bias toward generic and widely supported claims

Picture asking an AI a cutting-edge question and getting an answer you could have guessed – a vanilla, broad, almost boring statement.

Ever wonder why AI systems sidestep specifics, especially in business-critical searches?

Here’s the twist: advanced AI doesn’t risk serving deep claims unless the underlying evidence pool is wide, stable, and independently validated. In practice, even small anomalies or lack of broad consensus can shut the door on advanced details.

We’ve seen this first-hand when onboarding financial tech clients.

Highly useful but novel market metrics, although accurate, would be stripped down to generalized truisms because their supporting evidence wasn’t “mainstream” enough.

Think of it as an airport only letting you board if at least three unrelated airlines confirm your ticket is valid.

From the inside, you realize AI answers default to what we call “citational gravity” – answers that attract consensus from multiple strong sources.

Risk-averse models weigh broad support over depth.

This means if your request pushes past what the market broadly accepts (or what’s indexed by quality sources), the AI quietly returns to safe, universally agreed ground.

This isn’t just an annoyance; it’s a direct output of evidence gating in AI answers, where the AI citation safety threshold demands simplicity and wide support, not novelty or edge insights.

Think about the proof‑required AI claims you wish would show up.

If the system can’t ground those in wide consensus, it drops them entirely.

This isn’t AI playing dumb – it’s a built-in mechanism to prevent the spread of unsupported or outlier claims.
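The "three unrelated airlines" analogy can be sketched as a simple corroboration check in Python. Everything here is an assumption made for illustration: the function names, the `min_sources=3` default borrowed from the analogy, and the use of distinct hostnames as a crude proxy for independent sources.

```python
from urllib.parse import urlparse


def independent_sources(urls):
    """Collect distinct hostnames, treating 'www.' variants as the same site."""
    hosts = set()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            hosts.add(host)
    return hosts


def is_corroborated(urls, min_sources=3):
    """Hypothetical rule: a claim needs at least `min_sources` distinct hosts."""
    return len(independent_sources(urls)) >= min_sources
```

Two pages on the same site count once in this sketch, so a claim repeated across a company's own blog and press pages would still look like a single source.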

Evidence uncertainty causes exclusion

What happens when the AI faces a split decision – evidence exists, but it’s disputed, ambiguous, or too fresh?

Expect omission, not speculation.

In actual deployments, when BiViSee tested new product claims for a client’s launch, nearly half the fact-checked yet less-cited details never surfaced in the output.

Why?

A tiny signal of uncertainty – missing alignment between major sources, or unstructured data without attribution – prompted systematic filtering.

It’s a myth that AI “hedges” with disclaimers on edgy topics. Instead, it defaults to silence or only echoes what’s indisputable.

This bias comes from risk‑weighted evidence in AI search: attribution gaps or ambiguous facts get filtered the moment their support drops below the AI evidence sufficiency threshold.

It acts a bit like airport security tossing every bag with even a slim chance of containing a forbidden item – overly cautious, but safe for all passengers.

Is it frustrating for execs seeking uncommon insight?

Of course.

But this AI conservative answer bias is designed to minimize the risk of unsupported claims, not to maximize depth.

The system is built for caution, especially when business trust is at stake.

Conservatism in AI search isn’t a sign of weakness – it’s a failsafe.

When the evidence is murky, safety always beats going deep.

Next, let’s look closer at where diagnostic decision doors slam shut, and what to do when your claim gets filtered.

Diagnostic decision doors: where unsupported claims fail

Diagnostic Decision Doors for Unsupported Claims

| Term | Definition | Impact on AI Claims |
| --- | --- | --- |
| AI evidence thresholds | Minimum level of verifiable support required for AI to include a claim. | Determines if any claim passes into generated answers. |
| AI claim inclusion threshold | Diagnostic checkpoint determining claim safety for AI repetition, varies by topic and risk. | Filters claims based on risk weighting and topic sensitivity. |

Decision-door summaries:

- Insufficient evidence strength: Claims without strong, verifiable support hit this threshold and are omitted.

- High-risk topic without evidence backup: If a claim relates to regulated, financial, clinical, or compliance topics, AI systems apply risk-weighted evidence gating logic; unsupported or loosely sourced statements are immediately blocked.

Ever notice how AI answers sometimes feel oddly safe – like they’re holding something back, especially on the details you really want?

Here’s the hidden reason: many advanced or nuanced claims die at a set of invisible checkpoints that filter for evidence strength and risk.

Think about a border crossing.

Some travelers glide through with a quick scan, others get stopped for hours, and some never make it at all.

The same split-second judgment happens, but for information.

Insufficient evidence strength

Picture this: You pose a sharp question expecting a decisive AI answer.

Instead, you get a well-reasoned generic fact, and nothing more.

This isn’t laziness. AI engines are programmed to omit any claim unless there’s clear, robust evidence that can be internally validated (what practitioners call “AI evidence thresholds”).

From our work with B2B SaaS leaders, we’ve seen even strong-sounding claims blocked in the AI pipeline simply because they lacked a plain chain of verifiable support.

It doesn’t matter if the statement is widely considered true in the field.

If it’s missing a citation that can be machine-checked or a structured reference, it vanishes.

You’d be surprised by the volume: in a recent audit for a fintech client, over 40% of expert-level knowledge snippets were excluded due to missing citation match.

Curious why?

Imagine trying to recall a quote from a book you read last year – but you can’t remember the title or author.

That’s what unsupported claims look like to AI: memory fragments with no place to anchor, so they don’t get shared.

This mechanism, known as “evidence gating in AI answers”, explains why answers are sometimes frustratingly simple.

Scientific context and sources

The sources below provide foundational context on evidence standards, trust, provenance, risk assessment, and information credibility in automated systems.

- Evidence standards in algorithmic systems

Accountability of AI Under the Law: The Role of Explanation – Finale Doshi-Velez, Mason Kortz, et al. – Berkman Klein Center / arXiv

Analyzes how legal and technical systems require AI to provide explanations, arguing that explanation functions as a core evidence standard for accountability and aligns AI outputs with human-level justification requirements.

https://arxiv.org/abs/1711.01134

- Human-AI trust and information reliability

Ethics guidelines for trustworthy AI – European Commission High-Level Expert Group on AI – European Commission

Explains how trust in AI depends on reliability, transparency, and sufficient evidence, emphasizing that users calibrate trust based on perceived robustness and explainability of outputs.

https://digital-strategy.ec.europa.eu/en/library/ethics-guidelines-trustworthy-ai

- Entity resolution and provenance tracking

Provenance in Databases: Why, How, and Where – James Cheney, Laura Chiticariu, Wang-Chiew Tan – Foundations and Trends in Databases

Defines formal models of data provenance, showing how systems track the origin and transformation of information to ensure traceability and verifiable linkage between claims and sources.

https://doi.org/10.1561/1900000006

- Risk in automated decision-making

Algorithmic Impact Assessments: A Practical Framework for Public Agency Accountability – Dillon Reisman et al. – AI Now Institute

Introduces structured risk evaluation methods for automated decision systems, linking evidence thresholds to potential harm levels and requiring stronger validation in high-stakes contexts.

https://ainowinstitute.org/publications/algorithmic-impact-assessments-report-2

- Information credibility and automated filtering

The spread of true and false news online – Soroush Vosoughi, Deb Roy, Sinan Aral – Science

Analyzes how verified true and false news stories diffused on Twitter, finding that false stories spread farther, faster, and more broadly than true ones; useful context for why credibility filtering matters in information systems.

https://www.science.org/doi/10.1126/science.aap9559

Questions You Might Ponder

What are evidence thresholds in AI search engines?

Evidence thresholds are minimum standards of verifiable, structured evidence that an AI system requires before including a claim in answers. These thresholds ensure only reliable, well-sourced claims make it through, reducing the risk of misinformation or “hallucinated” responses.

Why do AI search engines exclude unsupported claims?

AI search engines exclude unsupported claims to prevent the inclusion of information lacking traceable, verifiable sources. This strict screening minimizes legal, reputational, and safety risks, ensuring that only claims meeting rigorous documentation standards appear in responses.

How do evidence thresholds differ for high-risk topics in AI?

For high-risk topics – like medical, legal, or financial subjects – AI systems raise the evidence threshold, requiring stronger, more authoritative, and multiple source verifications. This defensive design reduces liability and maintains compliance, often resulting in more conservative, generic AI answers.

What causes valuable but novel claims to be excluded by AI search?

Valuable yet novel claims are often excluded when they lack broad, persistent, and structured documentation or when supporting sources aren’t widely recognized. AI heavily favors established evidence, causing niche details to be filtered out if they can’t meet citation safety thresholds.

How can businesses improve the AI inclusion of their claims?

To maximize inclusion, businesses should ensure claims are documented in neutral, authoritative sources with structured, machine-verifiable references. Aligning claims with major, persistent entities and using standardized citation formats enhances the likelihood of passing AI evidence thresholds.