Key Takeaways

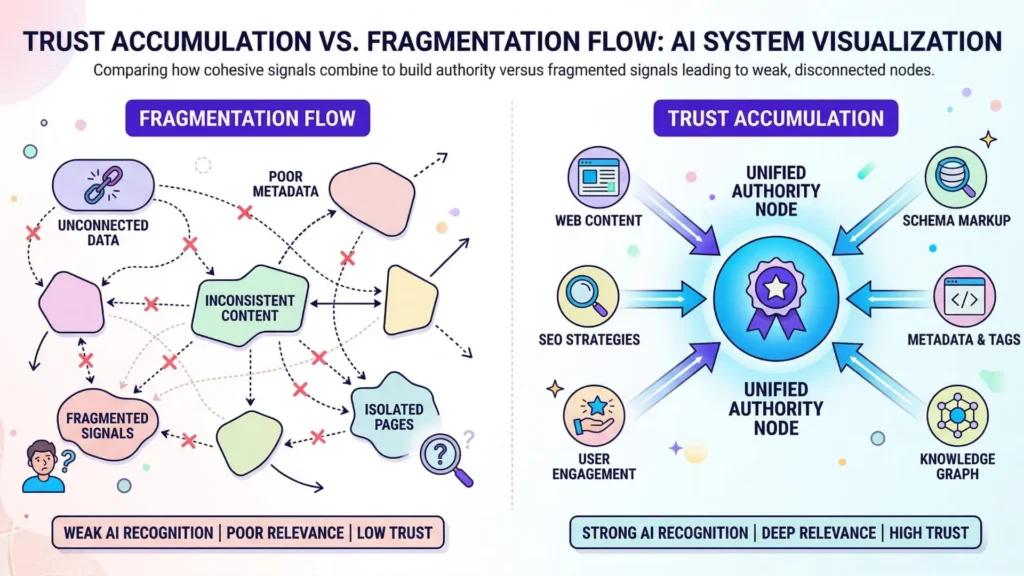

- Entity fragmentation occurs when a brand’s digital signals are inconsistent across platforms, preventing trust and authority from building in AI systems.

- Frequent mentions or high content volume do not improve AI trust if entity signals are fragmented; without cohesion, authority is diluted rather than amplified.

- AI search systems resolve ambiguous or fragmented entities by defaulting to more stable competitors, reducing citation and retrieval confidence.

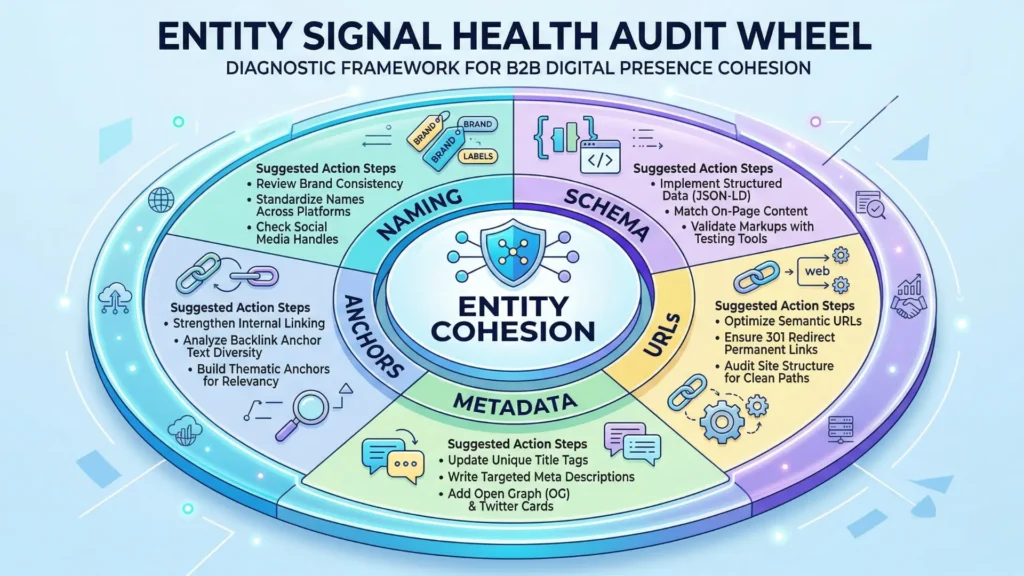

- The only path to compounding AI authority is through rigorous entity cohesion – clear, uniform signals across schema, URLs, metadata, and references.

What if your company could appear 1,000 times across the web – yet AI never truly “sees” you?

Executives expect their entity to be easily recognized by AI systems, but here’s the reality: AI trust fragmentation can quietly break authority before you spot a single traffic loss.

What entity fragmentation means

Inconsistent identity signals fragment trust

Impact of Content Volume vs Entity Cohesion on AI Trust

| Scenario | Content Volume | Entity Cohesion | AI Trust Outcome |
| --- | --- | --- | --- |
| High Volume, Low Cohesion | 20 posts/month | Multiple brand handles, mixed schema | Authority dilution, weak trust signals |
| High Volume, High Cohesion | 20 posts/month | Consistent brand naming and schema | Strong trust build-up, authority compounding |
| Low Volume, High Cohesion | 5 posts/month | Stable identity signals | Moderate trust with coherent growth |
| Low Volume, Low Cohesion | 5 posts/month | Fragmented signals | Minimal trust, fragmented authority |

Entity fragmentation in AI search trust happens when naming, metadata, or reference anchors appear in slightly different forms across platforms.

Countless times, we’ve seen brands called “BiViSee”, “Bivisee Agency”, or just “BVS” – each subtle shift creates a new weak node in the AI’s mapping.

It’s like shouting your name in a crowded lobby but each time with a different accent; the system never forms a stable picture of who you are.

I’ve watched a public SaaS client use more than five taglines, two schemas, and three main URLs over 18 months.

Despite soaring mention counts, their entity signal never accumulated authority.

More exposure did not add up to trust, since every system – from Google to GPT – construed these as separate, weak entities instead of one strong signal.
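To make the splitting effect concrete, here is a minimal sketch of a toy model, not a description of how any search or AI system actually clusters entities. It counts mentions once by raw surface form and once after resolving aliases to a single canonical name. The `ALIASES` table, the function names, and the sample mentions are all hypothetical, chosen only to mirror the name variants above.

```python
from collections import Counter

# Hypothetical alias table: each known surface form maps to one canonical name.
ALIASES = {
    "bivisee": "BiViSee",
    "bivisee agency": "BiViSee",
    "bvs": "BiViSee",
}

def canonical_name(mention: str) -> str:
    """Resolve a mention to its canonical name, or return it unchanged if unknown."""
    key = " ".join(mention.lower().split())  # lowercase and collapse whitespace
    return ALIASES.get(key, mention.strip())

def count_nodes(mentions, resolve=False):
    """Count mentions per entity node, optionally resolving aliases first."""
    names = (canonical_name(m) if resolve else m.strip() for m in mentions)
    return Counter(names)

# Illustrative sample: six mentions spread over three spellings.
mentions = ["BiViSee", "Bivisee Agency", "BVS", "BiViSee", "BVS", "Bivisee Agency"]

fragmented = count_nodes(mentions)             # three nodes, two mentions each
unified = count_nodes(mentions, resolve=True)  # one node holding all six mentions
```

The same six mentions either reinforce one node or split evenly across three, which is the difference between compounding and dilution described above.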

Here’s the analogy: imagine wiring a house, but every outlet runs back to a different circuit.

Plug in a device and you’ll get flickers, never consistent power.

Without uniformity, the “current” of trust can’t build up.

Feel that itch – how many places might your brand’s core signals contradict each other right now? Is your schema telling one entity story while your LinkedIn name or authorship seems off-key? Even cross-linking strategies backfire when they propagate these inconsistent signals.

A common myth: more mentions mean more authority.

In fact, with fragmented signals, every new mention might dilute AI trust further rather than compounding it (the opposite of what most content teams assume).

Fragmentation prevents trust compounding

Most executives expect authority to accumulate, like stacking bricks.

But in fragmented entity environments, stacking doesn’t work – each “brick” is made from a different material, so the wall collapses before it rises.

Across dozens of authority audits, I’ve seen teams chase domain rating or content volume, believing these will convert into higher AI citability.

Yet without cohesive entity signals – consistent use of schemas, canonical URLs, and unbroken anchor logic – AI systems classify the mentions under different, lower-impact clusters.

The result?

Authority dilution for AI entities, with little chance for trust propagation.
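As a rough illustration of the canonical-URL part of that checklist, the sketch below normalizes URL variants so that purely cosmetic differences (scheme, `www.` prefix, trailing slash, letter case in the host) collapse to one form. Treating `http`/`https` and `www`/non-`www` as equivalent is an assumption made for this example; a real audit would also need to follow redirects and check `rel="canonical"` tags. The function name and the sample URLs are hypothetical.

```python
from urllib.parse import urlsplit

def normalize_url(url: str) -> str:
    """Collapse cosmetic URL differences into one comparable form."""
    if "://" not in url:
        url = "https://" + url           # assume https when no scheme is given
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]                  # assumption: www and bare host are the same site
    path = parts.path.rstrip("/")        # ignore trailing slashes
    return f"https://{host}{path}"

# Cosmetic variants of one address collapse to a single value...
cosmetic = {normalize_url(u) for u in [
    "http://www.Example.com/",
    "https://example.com",
    "www.example.com/",
]}

# ...but genuinely different domains stay distinct and still need consolidating.
distinct = {normalize_url(u) for u in [
    "www.example.com",
    "exampleagency.com",
    "example.co",
]}
```

Normalization only removes formatting noise. When `distinct` still holds more than one entry, the fragmentation is structural, and the fix is redirects and a single declared canonical domain rather than string cleanup.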

Entity cohesion, not mention count, is the foundation for lasting trust.

If your signals are fragmented, even your strongest proof points function like whispers, not shouts.

Think for a moment – are your “best” digital assets really compounding, or quietly canceling each other out?

Clear, unified identity is the prerequisite for trust to form and amplify.

Fragmented entity signals mean you’re building on sand, not stone.

In the next section, we’ll break down how this fragmentation leads AI systems to second-guess, hesitate, or bypass your sources entirely.

How fragmentation erodes AI citation confidence

Retrieval clustering sees multiple weak entity nodes

Picture this: a search AI is scanning thousands of documents, looking for credible signals about a single brand. Instead, it finds seven variations – slight differences in naming, inconsistent schema, scattered author trails.

Which entity is real?

Which one holds authority?

The result feels like trying to listen to a symphony where every instrument is out of tune.

Our team has watched clients lose ranking visibility, not because of poor content, but because fragmented entity identity sent diluted signals.

One firm had award wins and CEO quotes split across three spellings, two address formats, and five metadata structures.

Their trust got split up too – each fragment too weak to anchor retrieval strength.

Sound familiar?

AI search engines often respond by creating multiple low-confidence nodes in their internal clustering.

The system sees fragments as separate entities – or, worse, as weakly related.

One distributed B2B client with 40+ local listings saw their signals scatter so widely that not one city location achieved top-3 map pack visibility, even though they dominated reviews and volume.

It’s like pouring water into five leaky buckets: none ever fills up.

Entity fragmentation drains the cumulative potential of AI search trust.

The classic myth says frequent mentions are enough – yet we see again and again that unless those signals tie back to a single, coherent entity graph, authority pixels never assemble into the full picture.

Cautious citation: AI opts for safe, coherent alternatives

Here’s what surprises most: when AI search faces ambiguity, it doesn’t gamble.

If there’s uncertainty about identity, it defaults to citing external sources with clearer entity cohesion – even if the information is more generic or less current.

We worked with a SaaS vendor whose blog had hundreds of high-authority mentions, but inconsistent company spelling and product taxonomy.

Search AI routinely cited a competitor’s less-trafficked knowledge base simply because that entity presented unbroken identity signals.

The implications are vast for teams chasing citability in AI-powered search.

You might feel your name is everywhere, but AI models dismiss the scattered signals as high-risk.

It’s a silent vote for safety.

Picture AI as an editor scanning articles; it will quote the writer with a tidy, verified bio over the one who switches names or credentials mid-story.

So if your team ever asks, “Why don’t we get cited as an industry source, even when we own the conversation?” – entity signal inconsistency is probably the real answer, not content weakness.

Singular, stable identity is the simplest shortcut to AI trust.

AI citation confidence isn’t about sheer volume – it’s about the strength and clarity of entity presence.

Next, we’ll dig into why superficial mentions and content volume can trick teams into missing the root cause.

Teams misinterpret visibility gaps as content failure

Mentions don’t equal entity reinforcement

Why do some brands with hundreds of mentions still see flat search visibility?

Here’s a blunt truth: AI can’t stitch together scattered identity fragments – no matter how many times you get named.

Think of it like seeing your company’s name spray-painted on a dozen abandoned walls.

Loud?

Yes.

But none of those marks link back to a real headquarters, a consistent logo, or even an address.

For AI systems, these scattered signals mean nothing sticks.

In client audits, we’ve seen global brands pump out mention-heavy content.

But each mention uses a different spelling, schema, or format. Instead of amplifying authority, the result is noise.

We once tracked a fintech client who appeared as “Acme Solutions”, “Acme Inc.”, and “AcmeS”. Guess what?

AI search interpreted those as separate entities – so the trust signal fractured.

Their domain authority flatlined despite near-daily coverage.

What’s stopping AI from connecting the dots? Entity signal inconsistency.

If your identity reads like a shadow puppet show – familiar yet never identical – AI can’t reconcile the parts.

And if you wonder why that single press release didn’t move the needle, it’s this: citability requires deep, cohesive identity, not just broadcast volume.

Assuming volume over cohesion masks fragmentation

Examples of Entity Signal Variations Causing Fragmentation

| Signal Type | Variant 1 | Variant 2 | Variant 3 |
| --- | --- | --- | --- |
| Brand Name | BiViSee | Bivisee Agency | BVS |
| URLs | www.example.com | exampleagency.com | example.co |
| Taglines | Your Growth Partner | Growth Experts | Partner for Growth |
| Schema | Schema A | Schema B | Schema C |
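One hypothetical way to put a number on this kind of variation is a simple cohesion score: for each signal type, the share of observations that match the most common variant. This is an illustrative metric of our own, not a measure any AI system is known to use, and the observation counts in `observed` are invented for the example.

```python
from collections import Counter

def cohesion_score(values):
    """Share of observations matching the most common variant (1.0 = fully consistent)."""
    if not values:
        return 0.0
    counts = Counter(v.strip().lower() for v in values)
    top_count = counts.most_common(1)[0][1]
    return top_count / len(values)

# Invented sample: four observations per signal type, collected across platforms.
observed = {
    "brand_name": ["BiViSee", "Bivisee Agency", "BVS", "BiViSee"],
    "url": ["www.example.com", "exampleagency.com", "example.co", "www.example.com"],
    "tagline": ["Your Growth Partner"] * 4,
}

scores = {signal: cohesion_score(vals) for signal, vals in observed.items()}
# brand_name and url score 0.5 (only half the observations agree); tagline scores 1.0
```

Tracking a score like this per signal type over time shows whether new content is reinforcing one identity or adding more variants.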

Flooding the web with surface-level content is like shouting in a crowded stadium – the effect is diffusion, not amplification.

Many teams believe more is always better.

But volume without cohesion hides a silent killer: entity fragmentation in AI search trust.

We’ve seen SaaS clients publish twenty blog posts a month, hoping brute force will drive up authority.

Yet each author uses slightly different brand handles, incomplete schema, or mixed-up anchor texts.

The result? Instead of one strong, unified signal, AI finds a jumble of fragmented entity signals.

Authority dilution for AI entities isn’t subtle; it’s measurable – think sub-1% increases in retrieval confidence, even with aggressive output.

A simple analogy: imagine a river broken into dozens of trickling streams.

Add more water if you like, but if the banks aren’t merged, the flow never gathers power.

Are your content volumes masking a lack of entity cohesion?

Or worse – have your reporting dashboards convinced you the gap is a “content quality” issue, not an identity one?

Teams who chase content thresholds without diagnostic logic fall into this trap.

AI systems don’t reward volume – they reward entity cohesion.

The myth?

More mentions mean more authority. Reality? Only a stable, consistent identity lets authority compound in AI systems.

Pressure can mount to produce, but speed only spreads the fragments wider if the core isn’t solid.

Visibility gaps often point to hidden identity issues – not campaign failure.

The next diagnostic is entity coherence: what’s holding all your signals together?

Trust propagation: bridging to entity coherence

Stable identity as foundation for authority

Imagine asking five different AI systems to cite your organization’s expertise – and getting five subtly different answers, each undermining the next.

Why?

Because even tiny identity gaps can fracture trust propagation before it ever starts.

Here’s a shocker: we’ve seen clients with hundreds of press mentions receive zero AI authority lift, simply because their brand signals shifted slightly in schema, syntax, or naming anchors over time.

In our work, clients often believe their reputation compounds naturally, but fragmentation leaves their authority diluted and scattered.

It’s like pushing water across a marble counter – unless there’s a channel, the droplets never combine.

Authority, in AI’s eyes, is cumulative only when all signals converge on a single, stable entity.

Any disconnect – say, inconsistent URLs, confusing metadata, or schema drift – creates fissures where trust leaks away.

One client had three domain variations, multiple spelling conventions, and even inconsistent colors and logos.

The result?

AI systems treated them as unrelated sources, which meant there was no single pulse of credible signal.

Their name was everywhere, but nowhere the same.

Authority dilution doesn’t show up as a penalty – the fragmented brand simply fails to surface at all.

Ask yourself: Are you scattering recognition or stacking it?

Because a stable, unified identity becomes the bedrock for trust amplification.

Authority signals need a hub – without it, propagation fizzles.

Routing to trust and propagation mechanisms

The next move isn’t more mentions or louder content.

It’s architecting your digital identity so every mention, every schema field, every reference speaks with one clear voice.

In technical terms, this means deploying entity cohesion – the consistent, cross-platform definition of signals, from your Knowledge Graph to third-party citations to how you’re described in open data sources (think Wikidata, schema.org).
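As one possible shape for such a definition, the sketch below builds a schema.org `Organization` block in JSON-LD with a stable `@id`, a single `name`, known variants listed under `alternateName`, and external profiles linked through `sameAs`. The properties are standard schema.org vocabulary; the function name, the `#organization` fragment convention, and every URL (including the Wikidata ID) are placeholders assumed for illustration.

```python
import json

def organization_jsonld(name, url, same_as, alternate_names=()):
    """Build a JSON-LD Organization block that all pages can reuse verbatim."""
    doc = {
        "@context": "https://schema.org",
        "@type": "Organization",
        # Assumed convention: one stable identifier derived from the canonical URL.
        "@id": url.rstrip("/") + "/#organization",
        "name": name,
        "url": url,
        # Profiles on other platforms that describe the same entity.
        "sameAs": sorted(same_as),
    }
    if alternate_names:
        # Declare known variants explicitly instead of letting them float free.
        doc["alternateName"] = list(alternate_names)
    return json.dumps(doc, indent=2)

markup = organization_jsonld(
    name="BiViSee",
    url="https://www.example.com/",
    same_as=[
        "https://www.linkedin.com/company/example",  # placeholder profile URL
        "https://www.wikidata.org/wiki/Q0000000",    # placeholder Wikidata ID
    ],
    alternate_names=["Bivisee Agency", "BVS"],
)
```

Generating the block from one function, rather than hand-editing it per page, is a design choice that keeps the `@id`, `name`, and `sameAs` values identical wherever the markup is embedded.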

We’ve advised brands who thought SEO was their only gap, only to find their entity signals routed trust down dozens of dead ends.

One analogy stands out: networks amplify only when all wires run to the same node.

Otherwise, energy dissipates.

For AI search trust, the Single Source of Truth isn’t just marketing talk; it’s the strongest amplifier of digital authority.

A practical tool here: Knowledge Graph management platforms can help orchestrate schema, validate consistency, and map all references back to a coherent entity.

But don’t confuse this with a quick fix.

Cohesion is earned by relentless precision – unifying every entity mention and identity signal over time.

So the next step is obvious: take inventory, correct the splits, and route every signal to a singular entity node.

Because only this enables trust to propagate, accumulate, and be cited as a reliable authority by AI.
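The inventory step can be sketched as a comparison of each platform listing against one declared canonical profile. This is a minimal example under stated assumptions: the `Profile` fields, the platform names, and the sample listings are hypothetical, and a real audit would pull these values from live pages and directories rather than a hard-coded dictionary.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Profile:
    name: str
    url: str
    schema_type: str

# Hypothetical single source of truth for the entity.
CANONICAL = Profile(name="BiViSee", url="https://www.example.com", schema_type="Organization")

def audit(listings: dict[str, Profile], canonical: Profile = CANONICAL) -> dict[str, list[str]]:
    """Return, per platform, the fields that deviate from the canonical profile."""
    issues = {}
    for platform, profile in listings.items():
        mismatched = [
            field_name
            for field_name in ("name", "url", "schema_type")
            if getattr(profile, field_name) != getattr(canonical, field_name)
        ]
        if mismatched:
            issues[platform] = mismatched
    return issues

# Invented sample inventory.
listings = {
    "website": Profile("BiViSee", "https://www.example.com", "Organization"),
    "linkedin": Profile("Bivisee Agency", "https://www.example.com", "Organization"),
    "directory": Profile("BVS", "https://example.co", "LocalBusiness"),
}

report = audit(listings)
# Platforms that match the canonical profile are omitted from the report.
```

The report doubles as a fix list: each entry names the platform and the exact fields to bring back in line with the canonical profile.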

Identity clarity isn’t optional – it’s the precursor for trust propagation.

Without it, authority never compounds, no matter how loud or frequent your signals become.

Scientific context and sources

The sources below provide foundational context for how entity linking, identity management, data consistency, and authority signals work in knowledge graphs and search systems.

- Entity Linking and Authority Accumulation in Knowledge Graphs

Entity Linking with a Knowledge Base: Issues, Techniques, and Solutions – Wei Shen, Jianyong Wang, Jiawei Han – IEEE Transactions on Knowledge and Data Engineering

Explains how entity linking maps ambiguous mentions in text to unique entities in a knowledge base. Shows that variation in names and ambiguity prevent consistent accumulation of signals, requiring disambiguation mechanisms to unify references and enable reliable knowledge integration.

https://www.computer.org/csdl/journal/tk/2015/02/06823700/13rRUwbs2bs

- Effects of Data Consistency on Automated Trust Assessments

Data Quality and Trust: Review of Challenges and Opportunities – J. Byabazaire et al. – Electronics (MDPI)

Explores how data quality dimensions such as consistency, accuracy, and completeness directly influence trust in data-driven systems. Shows that inconsistent or fragmented data reduces reliability and undermines automated trust assessments, especially in distributed environments.

https://www.mdpi.com/2079-9292/9/12/2083

- Knowledge Graph Evolution and Identity Management

The sameAs Problem: A Survey on Identity Management in the Web of Data – Joe Raad et al. – Semantic Web / arXiv

Examines how multiple identifiers for the same entity across datasets create fragmentation in knowledge graphs. Shows that incorrect or inconsistent identity links (e.g., owl:sameAs) can propagate errors and reduce reliability, making identity management a core challenge for maintaining trust and coherence in evolving knowledge systems.

https://arxiv.org/abs/1907.10528

- Authority Signal Diffusion in Search Engines

The Anatomy of a Large-Scale Hypertextual Web Search Engine – Sergey Brin, Larry Page – Computer Networks

Introduces PageRank and explains how authority signals propagate through link structures. Shows that pages gain authority through incoming links, and that weak or fragmented connections reduce ranking strength, demonstrating how authority diffuses through network topology in search systems.

https://research.google/pubs/pub334/

- Schema and Metadata Best Practices for AI Trust

Linked Data – Design Issues – Tim Berners-Lee – W3C

Defines principles for using URIs and structured relationships to represent entities consistently across the web. Shows how standardized, machine-readable metadata enables reliable data integration, reduces ambiguity, and improves extractability and trust in automated systems.

https://www.w3.org/DesignIssues/LinkedData.html

Questions You Might Ponder

What is entity fragmentation in the context of AI trust?

Entity fragmentation occurs when a brand or entity’s identity is inconsistently presented online, causing AI systems to recognize multiple, weaker nodes instead of one strong profile. This fragmentation prevents trust, authority, and citability from accumulating across platforms.

How does inconsistent schema impact search engine authority?

Inconsistent schema makes it difficult for AI and search engines to link all references to a single authoritative entity. This results in diluted authority, weaker rankings, and reduced chances of being cited as a credible source, even with large content output.

Why does frequent mention not always increase AI trust?

Frequent mention without cohesive identity signals causes AI to treat each appearance as a separate, weak entity. Instead of amplifying trust, fragmented mentions dilute authority, making it hard for search engines to reliably cite or promote the entity.

How can brands ensure entity coherence for AI systems?

Brands should standardize naming, schema, URLs, and metadata across all platforms. Using a central “source of truth” like a knowledge graph and regularly auditing for alignment ensures all signals reinforce one identity, maximizing AI trust and citation potential.

What are signs that entity fragmentation is hurting your AI visibility?

Common signs include flat or declining search rankings despite higher mention volume, split or inconsistent knowledge panels, and seeing generic competitors cited in AI results instead of your brand – even when your content is more comprehensive or updated.