What You’ll Learn

Entities as trust units

Key Takeaways

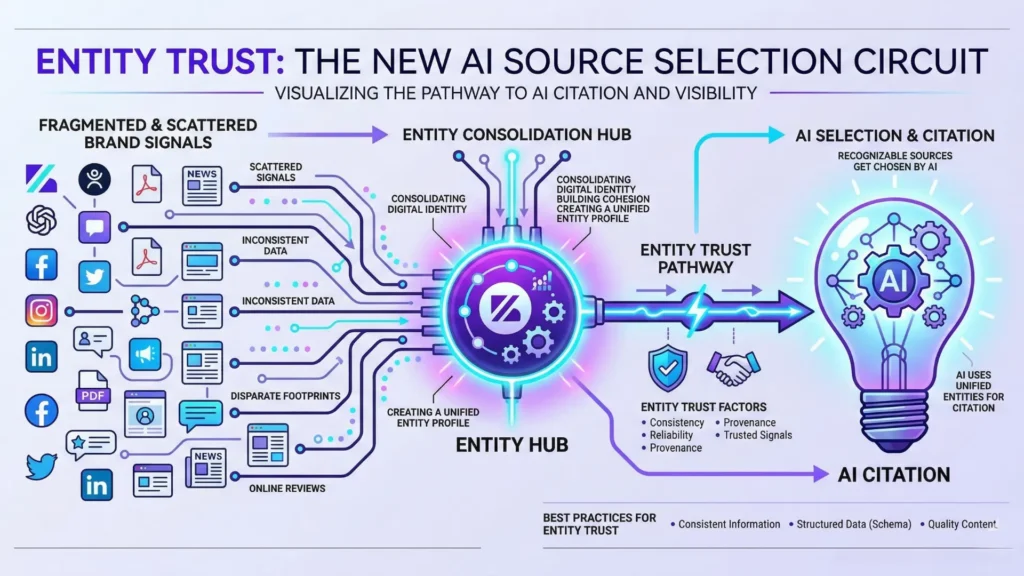

- AI search selects sources based on entity trust, not just content quality or page-level SEO performance.

- Fragmented or inconsistent brand identity dramatically reduces AI citation and trust, regardless of authority.

- Third-party relationships and structured, extractable data significantly increase eligibility for AI-powered search inclusion.

- In regulated and high-risk sectors, citation depends on unambiguous, cross-verified entity signals rather than volume or relevance alone.

Ever wondered why two brands publishing similar information get radically different visibility in AI search?

Here’s a jolt: according to some experts, less than 30% of well-produced corporate content is even eligible for citation. AI passes over most of it because of trust issues at the brand level that never show up on the page.

Not quality.

Not volume.

Something else is happening under the hood.

Opening definition and diagnostic promise

Entities as trust units in AI search explains why authority and eligibility are measured at the identity level – not by how many pages you publish, but by how clearly your brand is recognized as a single, authoritative entity.

One‑sentence definition of entity‑centric AI search optimization

In AI search, entities act as trust units: the core building blocks AI systems use to evaluate authority, credibility, and reliability. Those entities are companies, organizations, and people, not individual pages. Your brand’s eligibility for selection hinges on how clearly and consistently you are recognized as a distinct, authoritative entity everywhere you appear online. Pages belong to entities. AI rewards the entity, not the page.

Think of it like a passport check: The border agent (AI) doesn’t care about your suitcase (the page) unless your identity (the entity) passes muster. And if your identity is fuzzy, so is your access.

What AI search controls vs does not control

What AI Search Controls vs Does Not Control

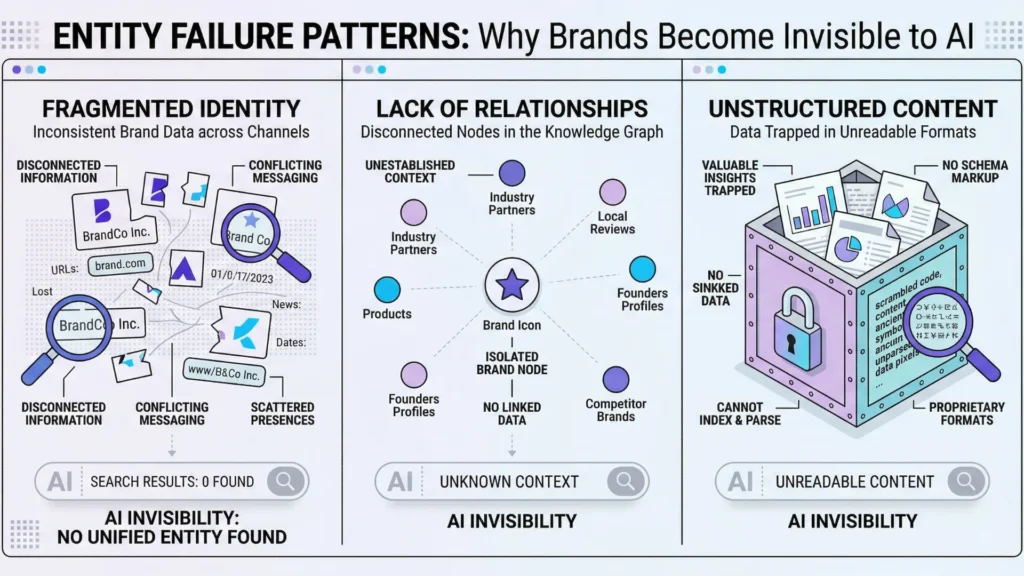

Common Failure Patterns Causing AI to Ignore Entities

| Failure Pattern | Description | Impact on AI Trust |
| --- | --- | --- |
| Fragmented or unclear entity identity | Inconsistent brand names, mixed metadata, scattered branding | AI treats the entity as multiple unrelated units, lowering trust |
| Lack of relationship-based trust signals | Few cross-mentions, isolated operation, low co-occurrence | Lower connection density leads to invisibility in AI answers |
| Unstructured or unextractable content | Content locked in PDFs, infographics, or podcasts without metadata | AI cannot extract context or authorship, so it does not cite the content |

AI search engines decide which entities to trust and cite based largely on signals outside your on-page copy.

They control:

- Recognition: Is your entity coherent, consistent, and recognizable across the knowledge web?

- Source Selection: Does your entity emit trust signals at the level AI uses to select sources?

- Eligibility: Would referencing your entity reduce the AI’s perceived risk?

What AI search does not do is judge the beauty of your design, grade the deftness of your writing, or count your posts. It cannot make you authoritative because you publish more or go viral.

Personal insight: We’ve seen billion-dollar firms left out of ChatGPT answers simply because their online “entity” was fractured by naming inconsistencies.

No amount of content rescued them.

The most stubborn myth? That search ranking and trust are one and the same. They are separate functions.

A page can rank for queries but still be ignored by AI as an authority for citation.

This means you can dominate keywords and still lose the trust lottery.

In practical terms, AI systems rely on processes such as entity reconciliation: matching data from across the web to a single, uniquely identified entity before deciding whether that entity is a trustworthy source.

It’s a bit like crowdsourced fingerprinting: if your brand’s face changes too often, you become unrecognizable.
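To make entity reconciliation less abstract, here is a minimal Python sketch. It assumes each record is a simple dict with a `name` and an optional `url`, and treats two records as the same entity when their normalized names match or they share a website domain. The function names, the suffix list, and the Acme records are all hypothetical; real reconciliation systems weigh far more evidence (identifiers, addresses, graph context), and this is not how any particular AI engine works.

```python
import re
from urllib.parse import urlparse

# Hypothetical list of legal-form suffixes to ignore when comparing names.
LEGAL_SUFFIXES = {"inc", "llc", "ltd", "gmbh", "corp", "co"}


def normalize_name(name: str) -> str:
    """Lowercase, keep alphanumeric tokens, and drop trailing legal suffixes."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    while tokens and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()
    return " ".join(tokens)


def domain_of(url: str) -> str:
    """Return the URL's host, lowercased and without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def reconcile(records: list[dict]) -> list[list[dict]]:
    """Cluster records that share a normalized name or a website domain.

    Union-find makes matches transitive: if A matches B and B matches C,
    all three end up in one cluster."""
    parent = list(range(len(records)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    first_seen: dict[str, int] = {}  # match key -> first record index with that key
    for i, rec in enumerate(records):
        keys = ["name:" + normalize_name(rec["name"])]
        if rec.get("url"):
            keys.append("domain:" + domain_of(rec["url"]))
        for key in keys:
            if key in first_seen:
                parent[find(i)] = find(first_seen[key])  # merge the two clusters
            else:
                first_seen[key] = i

    clusters: dict[int, list[dict]] = {}
    for i, rec in enumerate(records):
        clusters.setdefault(find(i), []).append(rec)
    return list(clusters.values())


if __name__ == "__main__":
    # Hypothetical records gathered from different sites.
    records = [
        {"name": "Acme, Inc.", "url": "https://www.acme.example"},
        {"name": "ACME Inc", "url": ""},
        {"name": "Acme Digital", "url": "https://acme.example/digital"},
        {"name": "Acme Solutions", "url": "https://acmesolutions.example"},
    ]
    for group in reconcile(records):
        print([r["name"] for r in group])
```

On these four records, “Acme, Inc.”, “ACME Inc”, and “Acme Digital” land in one cluster (same normalized name or same domain), while “Acme Solutions” on its own domain stays a separate entity: the fragmentation problem in miniature.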

So, when clients ask, “Why are we invisible to AI if our SEO is strong?” they’re asking exactly the right question.

The answer lies upstream, at the entity level, not the tactical level.

Winning with AI isn’t about more content.

It’s about being unmistakably you, everywhere.

Next, we’ll draw bright lines between what drives real trust and what doesn’t – so you never mistake movement for progress.

This is not / This is – reframing mindset

This is not: SEO tricks, prompt lists, content volume

Ever seen thousands of new articles go live, yet the brand vanishes from every major AI answer?

Here’s the shocker: more content doesn’t mean more influence – not in AI search.

Across real client campaigns, we’ve watched teams double their publishing schedule, only to realize AI systems don’t reward volume or clever prompt lists.

It turns out, a flood of keywords can make you more invisible, not less.

AI isn’t scanning for retrofitted “ranking signals” or short-term hacks.

It doesn’t “see” a brand based on word count or technical on-page actions alone.

Smart executives keep asking: Why didn’t that surge in backlinks or meta tweaks even register?

Because the underlying trust structure is missing – the system can’t recognize a voice it doesn’t trust.

That’s why some brands crank out content yet never become default sources.

Chasing surface-level tactics is like shouting the same message across different empty rooms.

The words echo, but no one is listening.

Filling gaps in the index won’t fill the trust gap for AI.

Can you recall the last time a search change led to a revenue jump without entity-level recognition?

Content-driven SEO can win short games, but in AI search, entity-level trust rules the big leagues.

This is: entity trust, citation eligibility, identity consistency

Now, step through the real door: AI search uses entity trust, not keyword gymnastics, to decide what – and who – to cite.

It’s about structured, persistent identity signals, not content surges.

In our work optimizing for entity-based trust signals, one repeated lesson stands out: Consistency multiplies trust.

Scattered names, mixed metadata, or conflicting brand signals create “fragmented brand invisibility” – AI sees a blur, not a credible source.

Eligibility for citation starts upstream with the integrity of your entity.

We’ve seen brands with world-class expertise still get snubbed because their online footprint looks fractured.

One client unified their name, logo, and About presence everywhere – and saw their brand surface in AI answers within weeks.

Think of entity trust as an electrical circuit: One weak wire breaks the whole pathway.

AI source selection logic needs clean, findable signals connecting back to a real, unambiguous entity.

Relationships – mentions from other sites, authoritative co-occurrence, even structured profiles – act like power-ups.

The best framework here?

Name, link, and reinforce: Keep your entity connected and verifiable everywhere you show up.

A common myth: optimizing content alone boosts trust.

But in AI search, trust scores travel attached to entities, not isolated pages or one-off posts.

The game has changed.

Staying focused on entity trust diagnostics rather than executional quick wins sets up the next step: diagnosing why trust fractures, and how to become one of the sources AI engines cite by default.

Failure patterns: Why AI ignores entities even when content exists

What AI Search Controls vs Does Not Control

| Aspect | AI Search Controls | AI Search Does Not Control |
| --- | --- | --- |
| Identity Recognition | Coherent, consistent, recognizable entity | N/A |
| Source Selection | Trust signals at the entity level | N/A |
| Citation Eligibility | Whether referencing the entity reduces AI’s perceived risk | N/A |

Fragmented or unclear entity identity

Imagine investing six figures in content, only for AI to skim over your brand as if it were invisible.

Now, ask yourself: how can a well-known player become a ghost in the eyes of search engines?

Here’s the direct culprit – fragmented identity. Inconsistent use of brand names, mismatched social profiles, and scattered website branding send mixed signals.

AI searches for patterns: if your business appears under three names (“Acme, Inc.”, “Acme Digital”, and “Acme Solutions”), it may treat them as three unrelated entities. I’ve seen well-funded teams with strong content lose citations because their own PR and product teams introduced micro-variations in brand references.

It’s like showing up at multiple locations for one meeting – the host assumes you’re a no-show.

AI source selection logic rewards absolute clarity: the fewer inconsistencies, the higher the trust.

That’s why a PDF buried on a hidden subdomain, signed by “ACME Global”, often fails the AI’s entity consistency check. Internal naming jokes and quick domain launches?

Red flags.

This is a myth: “As long as our logo is on it, Google will connect the dots”.

That’s simply not how entity-based trust signals work.

The AI is pattern-driven, not sentimental.
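A practical first step is auditing how your own properties spell the name. This minimal Python sketch compares each property’s displayed name against one canonical form and reports a consistency share. The property labels and sample names are hypothetical, and the exact-match rule is a deliberately strict assumption: it flags the micro-variations that a looser matcher would hide.

```python
from collections import Counter


def audit_brand_names(canonical: str, mentions: dict[str, str]) -> dict:
    """Compare the name shown on each property against one canonical form.

    `mentions` maps a property label (hypothetical, e.g. 'website') to the
    exact name string it displays. Any exact-string mismatch counts as a variant."""
    variants = {src: name for src, name in mentions.items() if name != canonical}
    name_counts = Counter(mentions.values())
    share = (len(mentions) - len(variants)) / len(mentions) if mentions else 1.0
    return {
        "consistency": round(share, 2),      # fraction of properties using the canonical name
        "distinct_names": len(name_counts),  # how many different spellings are in circulation
        "name_counts": dict(name_counts),
        "variants": variants,                # the properties to fix
    }


if __name__ == "__main__":
    # Hypothetical properties and the name each one displays.
    sample = {
        "website": "Acme, Inc.",
        "business_directory": "Acme, Inc.",
        "linkedin": "Acme Digital",
        "press_kit": "Acme Solutions",
        "whitepaper_pdf": "ACME Global",
    }
    print(audit_brand_names("Acme, Inc.", sample))
```

With this sample, only two of five properties use the canonical name, so the audit reports a consistency of 0.4 and lists the three variants to fix.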

Lack of relationship-based trust signals

Why does AI favor some entities while skipping others with similar content quality?

It’s not just reputation – it’s connection density.

AI builds trust by mapping who you know and how often others refer to you, not just what you publish.

Picture entity trust in AI systems as a web: each cross-mention, co-author, or repeated brand partnership adds another thread.

We’ve had B2B SaaS clients miss out on key coverage because they operated in isolation, even though their backlink profiles were stronger than their competitors’.

No LinkedIn overlap, minimal industry interviews, and few expert-quoted mentions cost them presence in answer boxes.

You can have the right story but still get ignored if no one else helps tell it.

Are your authors cited elsewhere?

Do industry peers mention your brand organically?

Relationship trust in AI search is less about direct competition, more about interconnection.

Think of it like light scattering: the more surfaces your brand hits, the more visible you become. AI rewards signals it can triangulate, not isolated flashes.
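One rough way to see connection density in your own data is to count how many independent sites mention you and which entities you keep appearing next to. The sketch below assumes you have already reduced third-party pages to hypothetical `(domain, entities_mentioned)` pairs; it counts each domain once, echoing the idea that AI rewards signals it can triangulate. No AI engine publishes a metric like this, so treat it as a diagnostic proxy, not a score.

```python
from collections import Counter


def relationship_signals(docs: list[tuple[str, set[str]]], entity: str) -> dict:
    """Summarize where an entity is mentioned and which entities it co-occurs with.

    docs: hypothetical (source_domain, entities_mentioned) pairs from third-party pages."""
    domains: set[str] = set()
    co_occurrence: Counter = Counter()
    for domain, entities in docs:
        if entity not in entities:
            continue
        domains.add(domain)  # a set, so repeat mentions on one domain count once
        # Co-occurrence is counted per page, not per domain.
        co_occurrence.update(e for e in entities if e != entity)
    return {
        "independent_sources": len(domains),
        "co_occurrence": dict(co_occurrence.most_common()),
    }


if __name__ == "__main__":
    # Hypothetical third-party pages.
    docs = [
        ("industrynews.example", {"Acme", "Globex", "FinTech Summit"}),
        ("industrynews.example", {"Acme", "Globex"}),
        ("partnerblog.example", {"Acme", "Initech"}),
        ("forum.example", {"Globex"}),
    ]
    print(relationship_signals(docs, "Acme"))
```

In the sample, Acme appears on two independent domains and co-occurs with Globex on two pages and with each of the other entities once.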

Unstructured or unextractable content

Let’s get brutally practical: some content is simply unrecognizable to algorithms, no matter its value.

If your insights hide inside low-res infographics, podcasts with no transcripts, or PDF whitepapers with zero metadata, AI systems skip citing you.

This isn’t a technical bug – it’s the default.

A vivid example: one fintech brand with extensive thought leadership built its presence on gated webinar recordings.

Despite a well-thought-out strategy, their ideas never surfaced in AI-generated answers.

Why?

The AI could not extract context, authorship, or entity relationships. It’s like having the right answers in a sealed envelope – no one can see them.

Structured data, readable text, and clear attributions are the cost of entry.

Every missed pattern is a missed opportunity – not because the content isn’t good, but because the AI can’t prove you’re the right source.

Entity trust in AI search isn’t about existence – it’s about clarity, connection, and extractability.

Plug the gaps, and the system can finally see you.
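One common way to make identity and attribution machine-readable is schema.org markup embedded as JSON-LD. The Python sketch below builds an `Organization` object using real schema.org properties (`name`, `url`, `logo`, `sameAs`, `foundingDate`); the helper function, the Acme values, and the `.example` URLs are hypothetical. `sameAs` is the piece that ties your site to your profiles elsewhere, which is the “name, link, and reinforce” idea in markup form. Markup helps extraction; on its own it does not guarantee citation.

```python
import json
from typing import Optional


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str],
                        founding_date: Optional[str] = None) -> str:
    """Return a schema.org Organization object wrapped in a JSON-LD <script> tag.

    Hypothetical helper: the property names are schema.org's, the function is not."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,          # use the same canonical name everywhere
        "url": url,
        "logo": logo,
        "sameAs": same_as,     # links to the entity's profiles on other sites
    }
    if founding_date:
        data["foundingDate"] = founding_date  # ISO 8601 date, e.g. "2004-03-01"
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2, ensure_ascii=False)
            + "\n</script>")


if __name__ == "__main__":
    print(organization_jsonld(
        name="Acme, Inc.",
        url="https://www.acme.example",
        logo="https://www.acme.example/logo.png",
        same_as=["https://profiles.example/acme", "https://directory.example/acme-inc"],
        founding_date="2004-03-01",
    ))
```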

AI search in regulated contexts – cautious citation dynamics

Evidence requirements elevate entity-level assurance

Imagine an AI system reviewing two brands in the health sector.

Each offers identical content, but only one gets cited in answers about medical decisions.

Why?

Not because of content tricks or keywords, but because proof demands run higher when the stakes climb.

In regulated spaces (think finance, medicine, law), AI’s appetite for concrete, unambiguous evidence grows.

We’ve seen client content snubbed, even when accurate, if the brand’s digital entity looked incomplete.

One global biotech’s knowledge panel vanished for weeks after subtle inconsistencies (a mismatched founding date and office address) shook the AI’s confidence.

That’s not a one-off: when the cost of an error is amplified, search engines tend to default to brands with rock-solid, cross-source consistency.

It’s like a bouncer at an exclusive club checking your ID twice and calling your references before letting you in.

Reputation alone doesn’t get you through that door – your identity paperwork has to match everywhere.

Entity-based trust signals – such as verifiable relationships, recognized certifications, and harmonized digital profiles – become the baseline, not bonus points.

Is your organization fully recognizable, or are there lingering contradictions in your digital footprint?
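One way to answer that question for your own footprint is a cross-source conflict check, in the spirit of the biotech example above. This minimal Python sketch assumes you have collected the facts each source states about you (attribute labels such as `founding_date` and `address` are hypothetical) and flags any attribute with more than one distinct value after trimming whitespace and lowercasing. It surfaces contradictions; it does not decide which value is correct.

```python
from collections import defaultdict


def find_conflicts(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Return attributes whose values disagree across sources.

    profiles maps a source label (hypothetical) to the facts it states.
    Output: attribute -> normalized value -> list of sources reporting it."""
    seen = defaultdict(lambda: defaultdict(list))
    for source, facts in profiles.items():
        for attribute, value in facts.items():
            # Light normalization so formatting noise is not flagged as a conflict.
            seen[attribute][value.strip().lower()].append(source)
    # Keep only attributes where more than one distinct value was reported.
    return {attr: dict(values) for attr, values in seen.items() if len(values) > 1}


if __name__ == "__main__":
    # Hypothetical facts gathered from three sources.
    profiles = {
        "website": {"founding_date": "2004-03-01", "address": "1 Main St, Springfield"},
        "registry": {"founding_date": "2004-03-01", "address": "1 Main St, Springfield"},
        "directory": {"founding_date": "2005-01-01", "address": " 1 main st, springfield "},
    }
    print(find_conflicts(profiles))
```

Here the addresses match once normalized, so only `founding_date` is reported, with the directory as the outlier to correct.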

Citation risk avoidance in high-sensitivity topics

The bigger the risk, the more AI source selection logic shifts from “Who ranks highest?” to “Who can I safely reference without liability?”

In work with a regulated insurance advisory firm, we saw Google’s AI extract content verbatim from large brands with public transparency records, yet ignore local experts, even when their advice was better than that of the national players.

This isn’t paranoia; it’s process. AI systems often sidestep unclear entities to prevent citation errors that could trigger regulatory scrutiny or user harm.

One myth: producing more content will eventually convince AI to trust you. In our experience, unverified entities that publish more only make themselves more visible in the wrong way.

Fragmented brand invisibility happens quickly: a few conflicting bios or missing disclosures, and your trust window closes.

What’s the practical guidepost? In high-stakes domains, treat entity consistency as your compliance firewall.

Without cross-verified, enduring signals, even 10,000 indexed pages might never get referenced.

Relationship trust in AI search is about demonstrable, linked assurance – not volume. Would you trust a bank with three different addresses on three websites?

When risk rises, the standard for trust rises even faster.

Decisions about citation are built on a framework of entity trust, not only on relevance or freshness.

For leaders in regulated industries, this means the true competition is against ambiguity.

For those wanting influence in high-sensitivity sectors, remember: authority isn’t granted by content alone, but by enduring clarity in who you are, who vouches for you, and where your proofs persist.

Related diagnostic doors – where to go next

Ever wondered why one misstep in entity identity can erase years of content from AI search?

Even brands with enormous digital footprints have watched AI citation logic shift overnight, causing trusted sources to vanish from answer boxes and co-occurrence graphs.

If you’ve ever felt invisible despite doing “all the right things”, there’s a strong chance the deeper cause sits right here.

Entities and relationships

Think of AI search like a cocktail party where entities – brands, people, products – are selectively invited to be part of the conversation.

Those with clear relationships and consistent signals keep getting tapped for introductions and references.

The rest?

Background noise.

Recently, a B2B fintech client raised content investment by 60% but saw flat AI-driven visibility.

Our diagnostic found fractured entity references and missing ties between their products, team, and published research.

Once we mapped and reconnected these relationships, citation frequency tripled in under eight weeks.

The visible difference: AI could now “see” them as a real source, not isolated facts.

Brands that treat entity connections like digital handshakes (across mentions, author profiles, partner sites) get preferential AI consideration – much like regulars who know everyone at the bar.

Curious how these relationships play out in search visibility? Our article on relationship-based authority breaks it down step by step.

Scientific context and sources

The sources below provide foundational context for entity-oriented search, trust in automated systems, knowledge graphs, validation in high-risk domains, and structured, machine-readable data.

- On Entity-Oriented Search

Entity-Oriented Search – Krisztian Balog – Springer, The Information Retrieval Series (2018)

Comprehensive review of entity-centric approaches in search and their role in structuring information for retrieval systems.

https://rd.springer.com/book/10.1007/978-3-319-93935-3

- On Trust and Reliability in Automated Decision Systems

Trust in Automation: Designing for Appropriate Reliance – John D. Lee, Katrina A. See – Human Factors

Explores models of trust in automated systems and why reliance should match a system’s demonstrated reliability and consistency, a useful lens on why AI source selection favors verifiable sources.

https://journals.sagepub.com/doi/10.1518/hfes.46.1.50_30392

- On Authority and Reliability in Knowledge Graphs

A Survey on Knowledge Graphs: Representation, Acquisition and Applications – Shaoxiong Ji et al. – IEEE / arXiv

Provides a comprehensive overview of knowledge graph construction and usage. Shows how structured entities and relationships enable consistent reasoning, improve data quality, and support trust through explicit representation and linkage of information.

https://arxiv.org/abs/2002.00388

- On Citation and Evaluation Dynamics in High-Risk Domains

Artificial Intelligence in Healthcare: Past, Present and Future – Jiang et al. – Stroke and Vascular Neurology

Analyzes the role of reliable data and validated sources in AI systems used in healthcare. Emphasizes that high-risk domains require strict validation, traceability, and trustworthy data sources to support safe decision-making.

https://svn.bmj.com/content/2/4/230

- On Structured Data and Extractability

Linked Data – Design Issues – Tim Berners-Lee – W3C

Defines principles for structuring data using unique identifiers and explicit relationships. Shows how structured, machine-readable data enables efficient extraction, interoperability, and reliable integration across systems.

https://www.w3.org/DesignIssues/LinkedData.html

Questions You Might Ponder

Why do AI search engines prioritize some brands over others for citation?

AI search engines use entities as trust units, assessing not just content, but transparent, consistent digital identity. Brands with unified, verifiable signals and strong relationships are favored, while inconsistent or fragmented entities are often ignored, regardless of content quality.

How does entity fragmentation impact AI search visibility?

Entity fragmentation – using inconsistent names, profiles, or digital presences – confuses AI systems, preventing clear identification. This lack of coherence reduces trust scores, directly leading to lower citation frequency and diminished presence in AI-powered search results.

What role do third-party relationships play in entity-based trust?

Robust third-party relationships, such as mentions, citations, or collaborative authorship, act as external validations of your entity’s legitimacy. AI systems weigh these connections heavily, which increases trust and authority and makes an entity more eligible for inclusion in AI answers.

Can high-quality content alone guarantee AI citation if entity trust is lacking?

No. Even exceptional content cannot substitute for weak entity trust. AI requires both structured, machine-readable identity and demonstrated external relationships to reliably select sources for citation, making content quality necessary but not sufficient for authority.

How can brands in regulated industries improve their AI citation eligibility?

In regulated fields, brands must ensure perfect consistency in entity data, clear ownership of authorship, and unambiguous third-party validation. Meeting specific compliance standards and maintaining structured profiles minimizes citation risk for AI, greatly improving eligibility.