
Key Takeaways

- Conservative sourcing in AI search engines favors established sources, reinforcing uniformity and risk aversion over information diversity.

- AI evidence gating intensifies bias by filtering out newer or less corroborated expertise, regardless of underlying accuracy or quality.

- Structural mechanics like eligibility and preference filters lock out unconventional or uniquely formatted content, shaping persistent answer bubbles.

- Users experience predictable but limited perspectives, with nuanced or innovative expert insights routinely omitted from AI summaries.

Ever notice how AI search engines seem to cite the same sources repeatedly, even when more current or nuanced options exist?

Conservative sourcing drives this pattern: AI systems systematically select content deemed safest to cite based on corroboration and structural consistency.

A source is included only after that safety check passes, which keeps answers predictable and low-risk.

Why AI systems prefer conservative, repeatable sources

For illustration, imagine always choosing the same color tie before a big board meeting – predictable, safe, but potentially uninteresting.

Definition: Conservative sourcing in AI search is the systematic preference for sources with established credibility, corroboration, and consistent structure, designed to reduce risk and minimize citation errors in AI-generated answers.

Error minimization through safe-source repetition

AI systems rely on an accumulation effect: each time a reputable source is cited without generating errors, its authority is reinforced in subsequent answers.

This compounding trust model reduces error risk but leads to stable – and repetitive – citation hierarchies.

For example, a rigorous but new expert report may be ignored not because it’s wrong, but because referencing it would break this safe cycle and potentially add uncertainty.

It’s algorithmic crowd control.

The more often a source appears in confirmed ‘correct’ outputs, the stickier it becomes in future retrieval cycles.

Like a snowball rolling downhill, authority compounds with each repeat.
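The compounding-trust loop described above can be illustrated with a toy simulation. This is a minimal sketch under loud assumptions: trust is a single number per source, the engine always cites the highest-trust source, and every error-free citation adds a fixed `reward`. The function name `simulate_citations` and the source names are hypothetical; no real search engine is claimed to work this way.

```python
from collections import Counter


def simulate_citations(initial_trust: dict[str, float], rounds: int,
                       reward: float = 1.0) -> Counter:
    """Toy model of compounding trust (hypothetical, not any real engine).

    Each round the source with the highest accumulated trust is cited,
    and an error-free citation adds `reward` to that source's trust.
    """
    trust = dict(initial_trust)  # copy so the caller's dict is not mutated
    cited: Counter = Counter()
    for _ in range(rounds):
        # Safest pick: highest trust; sorting first breaks ties alphabetically.
        choice = max(sorted(trust), key=lambda name: trust[name])
        cited[choice] += 1
        # Reinforcement: the chosen source becomes even safer next round.
        trust[choice] += reward
    return cited


# A new source that starts just below the incumbent is never cited.
counts = simulate_citations({"legacy_portal": 5.0, "new_meta_analysis": 4.9}, rounds=10)
print(counts["legacy_portal"], counts["new_meta_analysis"])  # prints 10 0
```

Because every citation widens the gap, a tiny initial difference becomes permanent. That is the snowball in miniature: the model never evaluates whether the newer source is more accurate.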

Have you ever asked yourself: if everyone repeats the same answer, who gets to update the script?

One global healthcare client asked us why their rigorous meta-analysis – peer-reviewed but only recently published – was never cited by AI search, while older names dominated.

In effect, the system treated the unfamiliar name as a liability: citing it risked a cascade of revisions or, worse, a loss of trust.

This is risk aversion at scale.

Imagine AI picking citations like a chef assembling a predictable buffet rather than a tasting menu. Familiarity wins. Surprise nearly always loses.

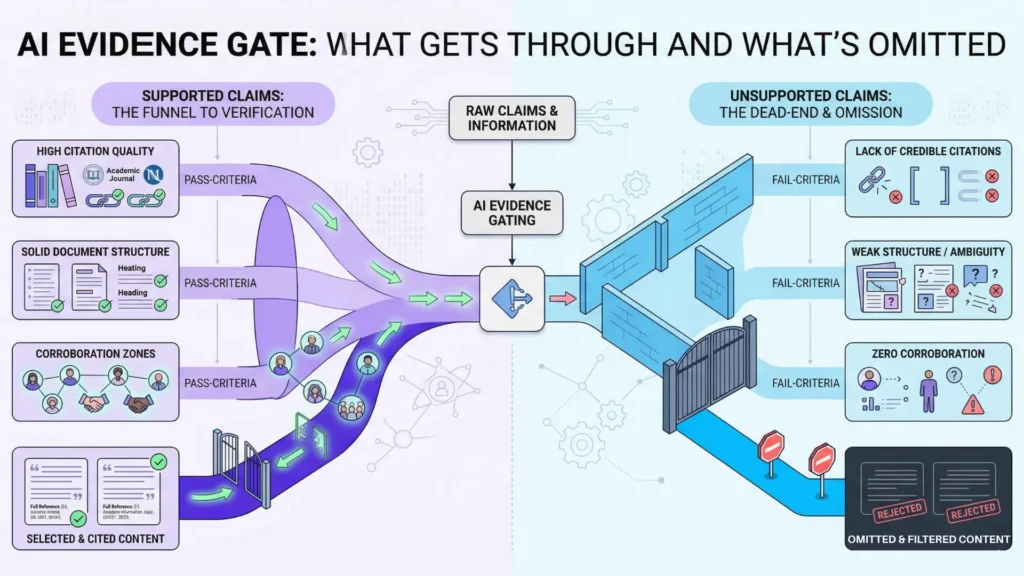

Evidence gating: omission as safety control

A myth: AI omits fringe or diverse sources only because of low quality.

Reality?

Strong sources, even expertly written ones, can miss the cut if they stray from ‘corroboration zones’ or lack enough explicit evidence markers.

AI evidence gating is the invisible bouncer at the door.

Definition: AI evidence gating refers to the automated exclusion of content lacking explicit, multi-source corroboration or trust markers – ensuring only the safest claims reach AI-generated answers.

Unsupported or half-supported claims just get filtered out, no matter how precise.
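The gating rule in the definition above can be sketched as a plain filter. This is a minimal illustration under stated assumptions: a claim's corroboration is simply the number of independent sources repeating it, trust markers are a single flag, and the default threshold of three loosely mirrors the ‘three more safe places’ heuristic discussed in this section. `Claim` and `evidence_gate` are hypothetical names, not part of any published system.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    # Independent outlets repeating the claim (hypothetical field).
    corroborating_sources: set[str] = field(default_factory=set)
    # Explicit citations, data tables, etc., reduced to one flag (assumption).
    has_trust_markers: bool = False
    # Ground truth; deliberately never read by the gate.
    accurate: bool = True


def evidence_gate(claims: list[Claim], min_corroboration: int = 3,
                  require_markers: bool = True) -> list[Claim]:
    """Keep only claims with enough corroboration and trust markers (hypothetical rule)."""
    passed = []
    for claim in claims:
        if len(claim.corroborating_sources) < min_corroboration:
            continue  # silently omitted, however precise or accurate
        if require_markers and not claim.has_trust_markers:
            continue
        passed.append(claim)
    return passed


claims = [
    Claim("Widely repeated summary", {"site_a", "site_b", "site_c"}, True),
    Claim("Precise specialist finding", {"specialist_journal"}, True),
]
print([c.text for c in evidence_gate(claims)])  # prints ['Widely repeated summary']
```

Note that `accurate` is never consulted: the correct specialist claim is dropped only because it is echoed in one place, which is the omission-as-safety behavior described here.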

During an audit with a legal tech firm, we watched dozens of detailed, nuanced arguments vanish from AI-generated answers.

They weren’t wrong; they were simply deemed unsupported.

The core mechanism: omission is easier than justifying a claim the model’s confidence filters can’t back.

Curious why topic specialists are rarely showcased?

If their insights aren’t echoed in three more ‘safe’ places, AI treats citing them as stepping off a ledge.

Tools like OpenAI’s source validation modules act as both a safety net and a straitjacket.

They prevent wild errors but box out fresh thinking.

Ask yourself – how much expertise is left on the cutting room floor?

Conservative sourcing in AI search isn’t accidental.

It’s a system designed for risk reduction, but the side effect is sameness.

Next, we’ll pull back the curtain on the structural mechanics that reinforce this loop.

Structural mechanics of conservative sourcing bias

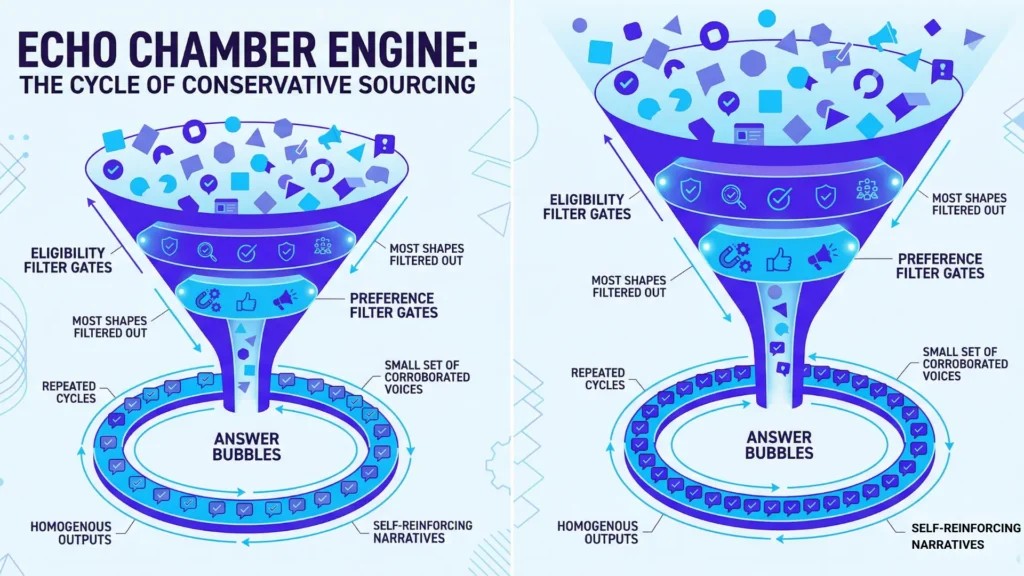

Ever notice how a simple AI query can feel like déjà vu – different platforms, eerily similar answers?

Why do some authoritative voices keep surfacing, while emerging perspectives drift into digital obscurity?

The secret lives in the mechanics beneath the interface, where “conservative sourcing in AI search” isn’t just policy – it’s coded habit.

Eligibility and preference filters in citation selection

Eligibility and Preference Filters in AI Citation Selection

| Filter Stage | Criteria | Description |
|---|---|---|
| Eligibility | Known publication, consistent structure, prior citations | Initial gate that checks whether a source meets basic standards of credibility and format |
| Preference | Recency, corroboration, linguistic cleanness | Scores sources to prioritize safer, more easily extracted, and corroborated content |

Imagine a velvet rope at a club, but for information.

Before an AI even thinks about quoting a source, it checks – twice.

The first gate?

Eligibility.

A source must pass core requirements: known publication, consistent structure, and a track record of being cited elsewhere (think old-school reputation, algorithmically enforced).

Messy formats, unstructured data, or anything thorny gets bounced.

Second comes preference filtering.

Here, the system scores sources for recency, corroboration, and linguistic “cleanness”.

During a recent project with a fintech client, we watched citation logs in real time: out of thousands of available articles, fewer than 5% were ever surfaced, and nearly all of those already appeared on the first page of conventional search results.

This reveals not only citation bias but also a comparative window into how multiple AI systems independently prefer the same small corpus of sources.

The surprise?

One in ten credible expert blogs failed eligibility purely for having an unconventional footer or a missing byline. Is that gatekeeping or risk mitigation?

This two-stage curation isn’t just an algorithmic tidiness contest; it creates a powerful echo chamber.

Even the most fact-packed white paper will rarely get through if it deviates from the expected structure.

For clients hoping to break into those AI answer boxes, we often say: if your source isn’t “quote-ready”, you’re locked out, no matter the originality.

Proof density and quote-readiness

Factors Influencing AI Citation Probability Based on Source Format

| Factor | Description | Effect on Citation Likelihood |
|---|---|---|
| High Proof Density | Sources with bullet-pointed stats, embedded citations, clear inline references | Citation rates tripled in our B2B SaaS audit; favored by AI extraction engines |
| Quote-Readiness | Concise claims, block quotes, headline stats | Highly quotable; prioritized for extraction |

Not all sources are created equal, at least in the AI’s eyes.

“Proof density” – the concentration of embedded citations, links, or numbered facts – becomes a golden ticket.

The more visible evidence, the more likely extraction engines will latch on.

In our audit for a B2B SaaS leader, sources with bullet-pointed stats and clear inline references saw their citation rates triple, even when the underlying content quality was identical to less formally structured pieces.

Here’s a simple analogy: it’s like AI prefers food with nutrition labels and expects every label to sit on the front of the package.

The system also privileges what’s easily quotable: concise claims, block quotes, or stats in headline form.

Sources that bury key proof in the middle or rely on subtle argumentation risk total invisibility.

This has a ripple effect – AI citation bias leads to an endless cycle, rewarding “safe source preference” and entrenching only those who adapt their format.
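Proof density and quote-readiness can be made concrete with a crude scoring function. This is a minimal sketch under stated assumptions: “evidence markers” are reduced to percentages, bracketed citation numbers, links, and bullet lines, and quote-readiness is the share of sentences of 20 words or fewer. The patterns, the 20-word threshold, and the function names are hypothetical; extraction engines do not publish their criteria.

```python
import re

# Hypothetical evidence markers; real extraction engines do not publish theirs.
MARKER_PATTERNS = [
    r"\b\d+(?:\.\d+)?%",        # percentages such as 5% or 12.5%
    r"\[\d+\]",                 # numbered citations such as [3]
    r"https?://\S+",            # inline links
    r"(?m)^[ \t]*[-*•][ \t]+",  # bullet-point lines
]


def proof_density(text: str) -> float:
    """Evidence markers per 100 words."""
    words = len(text.split())
    if words == 0:
        return 0.0
    markers = sum(len(re.findall(pattern, text)) for pattern in MARKER_PATTERNS)
    return 100.0 * markers / words


def quote_readiness(text: str, max_words: int = 20) -> float:
    """Share of sentences short enough to lift verbatim."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    short = sum(1 for s in sentences if len(s.split()) <= max_words)
    return short / len(sentences)


structured = "- Citation rates rose 12% [1]\n- Churn fell 3% [2]"
narrative = ("Our analysis suggests retention improved meaningfully over the "
             "period we studied, as the appendix explains.")
print(round(proof_density(structured), 1), proof_density(narrative))  # prints 54.5 0.0
```

The two snippets could describe equally sound findings, yet only the structured one registers any “proof” under this kind of metric, which is the format bias this section describes.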

Notice how this blocks not just low-quality content, but also nuanced expertise?

A myth persists that more signals in means more diversity out, but operationally it’s often the opposite.

The process is wired to limit error, not maximize range.

When you realize the machinery is rigged for “extraction ease”, the whole logic of “authority” starts to look like a seating chart, not an open forum.

The header may say “search”, but the mechanics say “curated system”.

Our analysis of citation logs consistently shows this structured bias in AI source selection, and comparing citation patterns across platforms reveals the same risk-averse tendencies.

This machinery creates predictability at the cost of diversity – setting the scene for the next layer of AI sourcing bias and its downstream effects.
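The two-stage curation described in this section, a hard eligibility gate followed by preference scoring, can be sketched as a small pipeline. This is a minimal illustration, not any platform's actual logic: the `Source` fields, the 0 to 1 scales, the weights (which deliberately favor corroboration), and all source names are hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str
    known_publication: bool
    has_byline: bool
    prior_citations: int
    recency: float        # 0..1, higher is newer (hypothetical scale)
    corroboration: float  # 0..1, how widely the claims are echoed elsewhere
    cleanness: float      # 0..1, how easily the text can be extracted


def is_eligible(src: Source, min_prior_citations: int = 1) -> bool:
    """Stage 1: a hard gate. Failing any check removes the source outright."""
    return (src.known_publication and src.has_byline
            and src.prior_citations >= min_prior_citations)


def preference_score(src: Source,
                     weights: tuple[float, float, float] = (0.2, 0.5, 0.3)) -> float:
    """Stage 2: weighted score over recency, corroboration, and cleanness."""
    w_recency, w_corroboration, w_cleanness = weights
    return (w_recency * src.recency + w_corroboration * src.corroboration
            + w_cleanness * src.cleanness)


def select_citations(sources: list[Source], k: int = 2) -> list[Source]:
    """Apply the gate, then keep the k highest-scoring survivors."""
    eligible = [s for s in sources if is_eligible(s)]
    return sorted(eligible, key=preference_score, reverse=True)[:k]


sources = [
    Source("legacy_portal", True, True, 120, 0.2, 0.9, 0.8),
    Source("reference_wiki", True, True, 300, 0.4, 0.95, 0.9),
    Source("expert_blog", True, False, 0, 1.0, 0.3, 0.9),  # no byline: fails stage 1
    Source("new_report", True, True, 1, 0.9, 0.4, 0.6),
]
print([s.name for s in select_citations(sources)])  # prints ['reference_wiki', 'legacy_portal']
```

The freshest source never reaches scoring because of a missing byline, and the newest eligible report loses to older, more corroborated pages: predictability bought at the cost of diversity.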

Implications: uniformity, exclusion, and user perception

Answer bubbles: homogeneous narratives across AI platforms

Picture this: five AI search platforms, five nearly identical answers, drawn from the same set of authoritative voices.

Some call it reassuring consistency.

But here’s the catch: a single upstream change – one policy shift, one outdated guide – propagates across answers like an echo.

That’s not accuracy; that’s uniformity that blurs perspective.

In dozens of audits for digital clients, we’ve watched how conservative sourcing in AI search creates “answer bubbles”: safe, familiar clusters of content that nudge out nuance and newer research.

Definition: ‘Answer bubbles’ in AI search denote clusters of nearly identical, corroborated responses generated across platforms, created by repeated reliance on established sources while sidelining outlier or expert voices.
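One way to make “nearly identical” measurable is to compare the sets of sources each platform cites for the same query. A minimal sketch, assuming those citation sets have already been collected: it computes mean pairwise Jaccard similarity, where 1.0 means every platform cites exactly the same sources. Jaccard is one reasonable choice rather than a standard answer-bubble metric, and the platform and source names below are hypothetical placeholders, not observed data.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of cited sources two answers have in common."""
    if not a and not b:
        return 1.0  # two empty citation lists are trivially identical
    return len(a & b) / len(a | b)


def citation_overlap(citations_by_platform: dict[str, set[str]]) -> float:
    """Mean pairwise Jaccard similarity; values near 1.0 suggest an answer bubble."""
    pairs = list(combinations(citations_by_platform.values(), 2))
    if not pairs:
        return 1.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical citation sets for one query on three platforms.
observed = {
    "platform_a": {"gov_guide", "big_publisher", "wiki"},
    "platform_b": {"gov_guide", "big_publisher", "wiki"},
    "platform_c": {"gov_guide", "big_publisher", "trade_journal"},
}
print(round(citation_overlap(observed), 3))  # prints 0.667
```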

One manufacturer asked why every generative summary cited year-old regulations, ignoring a rule update they’d contributed to the previous quarter.

The AI simply missed it, defaulting to well-known pages indexed months before.

It’s like tuning every radio in the city to the same channel.

Reliable, yes.

But what important signals get lost in the silence?

This dominant safe-source preference stems from design: risk-avoidance measures and quote-ready filtering prioritize what has already been widely cited.

The platforms chase corroboration, not curiosity.

And repetition feels authoritative – even if it’s just system inertia masquerading as wisdom.

Have you ever wondered why every AI tool seems to cite the same two compliance authorities, no matter how the question is phrased?

So what happens when every answer looks the same?

For the user, credibility feels flat.

For competing voices and sharp outliers, visibility vanishes at scale.

When expertise disappears

If AI systems stuck to sourcing mainstream news, only one type of expert would ever make the cut – those lucky enough to get quoted in a “top 50” publication. In practice, deep but less-publicized expertise evaporates.

I’ve seen specialized guides with rock-solid data – gleaned from years of technical work – miss out, ousted by mass-market explainers less than half as accurate.

This is a classic case of “AI evidence gating”.

Think of it as a velvet rope: any perspective lacking instant, multi-source approval gets left off the guest list.

The logic is meant to stop misinformation – but it quietly seats innovation at the back of the room.

One client, a supply chain analyst with peer-reviewed findings, watched her granular evidence ignored in favor of broader, less precise overviews.

The AI cited what was most corroborated, not most correct.

Here’s a counterintuitive myth: more citations mean better answers.

Actually, more citations often just mean the answer is easier to defend, not smarter or more insightful.

The problem isn’t a lack of expertise in the world but a lack of access to it through “safe” digital gates.

Information gets flattened.

Nuance becomes noise.

The reader is left with a brightly lit hallway, but so many doors remain firmly closed.

Safe sourcing prioritizes repeatable comfort, often at the expense of deeper truth.

The real cost? The best voices stay backstage, while the script never changes for the front row.

Risk minimization through conservative sourcing establishes a repeating cycle: sources that best fit safety and extraction filters get selected – creating ‘answer bubbles’, excluding nuanced expertise, and leading to omission when safety cannot be confirmed.

These interlocked mechanisms drive both the strengths and the uniformity of AI search answers.

Diagnostic summary: Conservative sourcing and evidence gating shape answer bubbles – ensuring sameness, restricting expertise, and controlling what appears in AI search answers.

Scientific context and sources

The sources below provide foundational context for risk aversion, cumulative advantage, and the trade-off between relevance and diversity in algorithmic information selection.

- Algorithmic Risk Aversion in Information Selection

Distributionally Robust Optimization: A Review – Hamed Rahimian, Sanjay Mehrotra – Annual Review of Statistics and Its Application / arXiv

Explains how modern machine learning systems incorporate risk-aversion through robust optimization, explicitly prioritizing worst-case safety and stability over uncertain gains, which leads to conservative selection of information sources.

https://arxiv.org/abs/1908.05659

- Information Overload and Satisficing in Decision-Making Systems

The Principles and Limits of Algorithm-in-the-Loop Decision Making – Ben Green, Yiling Chen – Proceedings of the ACM on Human-Computer Interaction

Shows that under complexity and information overload, decision systems simplify choices and rely on bounded rationality, often defaulting to stable and previously validated signals instead of exploring uncertain alternatives.

https://dl.acm.org/doi/10.1145/3359152

- The Matthew Effect in Science and AI

A general theory of bibliometric and other cumulative advantage processes – Derek J. de Solla Price – Journal of the American Society for Information Science

Extends the Matthew Effect by formalizing how citation and recognition systems create feedback loops where already-visible knowledge becomes increasingly dominant, shaping modern algorithmic ranking and retrieval systems.

https://doi.org/10.1002/asi.4630270505

- Governance of Algorithmic Content Curation

The Relevance of Algorithms – Tarleton Gillespie – Media Technologies: Essays on Communication, Materiality, and Society (MIT Press)

Analyzes how algorithms embed editorial and governance choices, determining which content becomes visible and credible through filtering, ranking, and institutional priorities.

https://doi.org/10.7551/mitpress/9780262525374.003.0009

- Redundancy and Diversity in Information Retrieval

The use of MMR, diversity-based reranking for reordering documents and producing summaries – Jaime Carbonell, Jade Goldstein – Proceedings of the 21st Annual International ACM SIGIR Conference on Research and Development in Information Retrieval (SIGIR ’98)

Introduces a formal framework for balancing relevance (often redundant, safe information) with diversity, showing how retrieval systems trade off between reliability and novelty in ranking results.

https://dl.acm.org/doi/10.1145/290941.291025
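The relevance-diversity trade-off in Carbonell and Goldstein’s Maximal Marginal Relevance (MMR) criterion, cited above, can be sketched in a few lines. MMR greedily picks the candidate that maximizes λ·Sim(d, query) minus (1 − λ)·max Sim(d, s) over already selected documents s. The document names, similarity values, and λ settings below are hypothetical; in practice the similarities would come from a retrieval model.

```python
from typing import Callable


def mmr_rerank(candidates: list[str], query_sim: dict[str, float],
               pair_sim: Callable[[str, str], float], k: int,
               lam: float = 0.7) -> list[str]:
    """Greedy Maximal Marginal Relevance selection.

    lam = 1.0 ranks by relevance alone; lower values penalize redundancy
    with documents that have already been selected.
    """
    selected: list[str] = []
    remaining = list(candidates)

    def marginal_relevance(doc: str) -> float:
        # Redundancy is the highest similarity to anything already chosen.
        redundancy = max((pair_sim(doc, s) for s in selected), default=0.0)
        return lam * query_sim[doc] - (1 - lam) * redundancy

    while remaining and len(selected) < k:
        best = max(remaining, key=marginal_relevance)
        selected.append(best)
        remaining.remove(best)
    return selected


# Hypothetical scores: two near-duplicate legacy pages and one distinct specialist source.
relevance = {"legacy_a": 0.90, "legacy_b": 0.88, "specialist": 0.70}
similarity = {frozenset({"legacy_a", "legacy_b"}): 0.95}  # unlisted pairs default to 0.1


def pair_sim(a: str, b: str) -> float:
    return similarity.get(frozenset({a, b}), 0.1)


docs = list(relevance)
print(mmr_rerank(docs, relevance, pair_sim, k=2, lam=1.0))  # prints ['legacy_a', 'legacy_b']
print(mmr_rerank(docs, relevance, pair_sim, k=2, lam=0.5))  # prints ['legacy_a', 'specialist']
```

With λ = 1.0 the ranking is pure relevance and the two near-duplicates win; lowering λ lets the distinct specialist source in, which is the reliability-versus-novelty trade-off the paper formalizes.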

Questions You Might Ponder

What is conservative sourcing in AI search, and how does it affect answer diversity?

Conservative sourcing refers to AI systems favoring established, highly corroborated sources to reduce risk and citation errors. The implication is that answers become repetitive, focusing on mainstream viewpoints and minimizing the emergence of new or specialized expertise, which narrows information diversity.

Why do AI search engines repeat the same sources even when new research exists?

AI search engines prioritize sources with proven citation history and explicit trust markers, making them less likely to cite newer or less corroborated information. This ensures reliability, but it also causes relevant, up-to-date research to be overlooked in favor of older, safer sources.

How does evidence gating influence what appears in AI-generated answers?

Evidence gating is the process by which AI filters out content lacking multiple, corroborated proof points. This control mechanism maximizes citation safety but excludes nuanced or unique claims, resulting in highly similar answers across platforms and making expert voices less visible.

What are ‘answer bubbles’ in AI-driven search, and why do they matter?

Answer bubbles are clusters of nearly identical AI-generated responses across platforms, caused by systematic reliance on a small pool of authoritative sources. Their significance lies in creating a false sense of consensus while sidelining new ideas or recent changes in expert knowledge.

How can experts increase their chances of being cited by AI systems?

Experts should structure content for ‘quote-readiness’, including bullet-pointed facts, explicit citations, and standardized formatting. Meeting eligibility and preference filters makes sources more likely to be picked by AI, thus overcoming some barriers of exclusion created by conservative sourcing.