What You’ll Learn

Key Takeaways

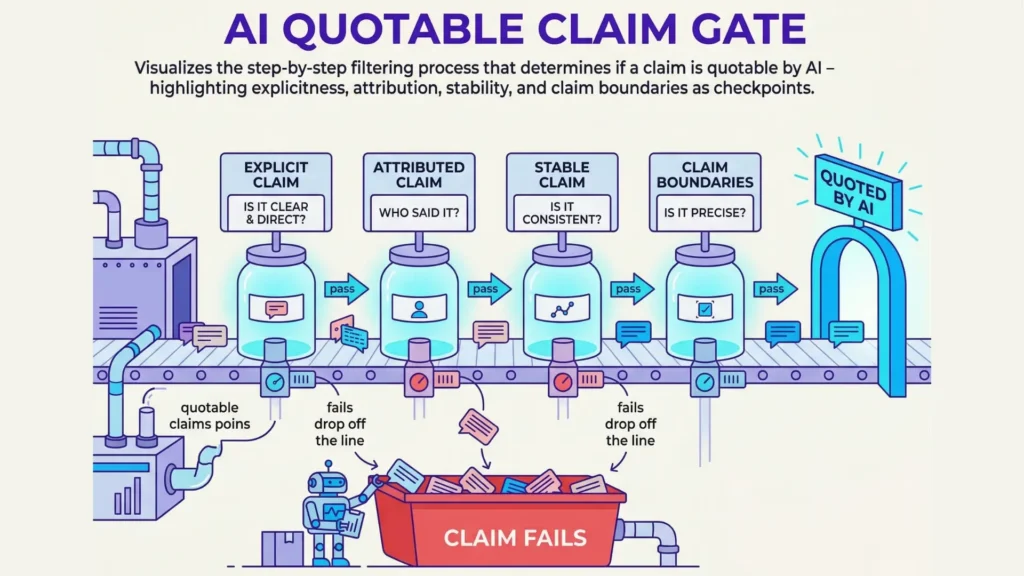

- AI only cites claims that are explicit, self-contained, fully attributed, and have stable, context-free meaning.

- Vague authorship or ambiguous claim boundaries lead AI to paraphrase or omit statements rather than quoting.

- Attribution safety is fundamental – statements lacking clear ownership or structure are suppressed from direct citation for risk management.

- Optimizing for AI quotability means designing claims for extraction: precise, isolated, and audit-ready.

Did you know large AI models will ignore a brilliant insight – just because it isn’t written as a closed, stand-alone claim?

Imagine reading a crystal-clear statistic, then watching the AI paraphrase it as a bland generalization.

The catch: AI only quotes what it can extract, name, and fence off as an autonomous statement.

Definition (AI quotable claims attribution): The process and criteria by which AI systems determine if a claim is explicit, bounded, and attributable enough to cite directly.

Why AI requires explicit, self-contained claims

Explicit vs implicit knowledge

Failure signals for AI quotable claims attribution

| Failure Signal | Explanation |
| --- | --- |
| Claims mixed with unrelated commentary | The claim is entangled with opinions or off-topic information, so it cannot be isolated. |
| Unclear where the claim starts or ends | No distinct boundaries separate the claim from the surrounding text. |
| Statement requires reading previous paragraphs to be understood | The claim is context-dependent and does not stand alone. |
| Multiple parties or vague collective voice credited | The attribution is ambiguous or spread across more than one unclear source. |

Checklist: What makes a claim quotable by AI?

- Explicit, stand-alone statement

- Clearly attributed to a distinct author or entity

- Context-independent, stable meaning

- Crisp boundaries (clear beginning and end)

- Verifiable factual content
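The checklist can be read as a set of pass/fail conditions. As a minimal sketch in Python, an editorial team could record a manual review like this. The `ClaimCheck` class and its field names are hypothetical labels for the five checklist items, and every flag is set by a human reviewer, not detected automatically. It illustrates the checklist only and does not reproduce how any AI model decides what to cite.

```python
from dataclasses import dataclass, fields


@dataclass
class ClaimCheck:
    """A candidate claim plus one reviewer-set flag per checklist item (hypothetical)."""
    text: str
    stand_alone: bool          # explicit, stand-alone statement
    attributed: bool           # clearly attributed to a distinct author or entity
    context_independent: bool  # context-independent, stable meaning
    bounded: bool              # crisp boundaries (clear beginning and end)
    verifiable: bool           # verifiable factual content

    def failures(self) -> list[str]:
        """Return the names of the checklist items this claim fails."""
        return [
            f.name
            for f in fields(self)
            if f.name != "text" and not getattr(self, f.name)
        ]

    def is_quotable(self) -> bool:
        """A claim passes only when every checklist item is satisfied."""
        return not self.failures()


# Illustrative draft sentence that leans on earlier paragraphs.
draft = ClaimCheck(
    text="As mentioned above, this boosted results significantly.",
    stand_alone=False,
    attributed=False,
    context_independent=False,
    bounded=True,
    verifiable=False,
)
print(draft.failures())
print(draft.is_quotable())
```

Listing the failed items by name, instead of returning a single score, keeps the review actionable: each entry points at one thing to rewrite.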

In real work with clients, we’ve seen teams assume their expertly woven paragraphs would be quotable.

Yet, when their original thought was tangled with background details or stretched across multiple sentences, AI would skip citation and paraphrase instead.

It’s not just about having the right answers; it’s about having the answers stated so directly that even an algorithm with zero context can lock on.

Presenting claims to AI is like labeling sealed jars for inspection: if the claim isn’t self-contained and labeled, it won’t be used.

This is about safety, not editorial style.

This surprises many leaders.

They expect AI citation to work like a diligent editor, weaving together context and nuance.

Instead, the system draws a hard line: if a claim requires narrative context, it becomes invisible to citation logic.

The myth: “AI will quote anything insightful”.

The reality: only autonomous, explicit claims survive the AI quotable claims attribution filter.

Semantic stability and determinism

Checklist: Red flags for AI citation suppression ambiguity

- Ambiguous author or source

- Claim relies on narrative context or previous sentences

- Statement meaning varies with audience or time

- No clear delimitation of claim edges

Definition (AI citation suppression ambiguity): The condition where AI systems avoid direct citation because of unclear attribution, ambiguous boundaries, or implicit content structure.
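The red flags above can be approximated with a few crude text heuristics when pre-screening your own drafts. The sketch below is an assumption-heavy illustration: the `red_flags` function and both word lists are hypothetical, the rules are deliberately simple (abbreviations such as "Inc." will confuse the boundary check), and nothing here reflects the internal logic of any AI system.

```python
import re

# Hypothetical word lists, for illustration only.
BACK_REFERENCES = {"this", "that", "these", "those", "it", "they",
                   "such", "however", "therefore", "also"}
RELATIVE_TIME = {"recently", "currently", "today", "now", "soon", "lately"}


def red_flags(claim: str, author: str = "") -> list[str]:
    """Return the red flags a candidate claim triggers (rough heuristics)."""
    words = re.findall(r"[a-z']+", claim.lower())
    flags = []
    if not author.strip():
        flags.append("ambiguous author or source")
    # An opener that points backwards suggests the claim needs earlier text.
    if words and words[0] in BACK_REFERENCES:
        flags.append("relies on previous sentences")
    # Relative time words change meaning depending on when they are read.
    if RELATIVE_TIME.intersection(words):
        flags.append("meaning varies with time")
    # Exactly one sentence-ending mark is expected for a single bounded claim.
    if len(re.findall(r"[.!?](?:\s|$)", claim.strip())) != 1:
        flags.append("no clear claim edges")
    return flags


print(red_flags("This recently improved retention."))
print(red_flags("Acme's 2023 audit found a 12% drop in churn.", author="Acme"))
```

The first call reports three flags (no author, a backward-pointing opener, a relative time word); the second reports none. Both example sentences are invented for the demonstration.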

Now ask yourself: how often do your key claims read the same, word for word, every time a question is asked?

Unless the statement is stable – unchanged by time, audience, or prompt – AI treats it as unreliable.

For one SaaS client, we saw their best product claims get paraphrased, not quoted.

Why?

Each time, the system found a sliver of ambiguity or context shift. AI relies on safe repetition, not story.

“Stable knowledge” in AI means a claim is so clearly bounded, so context-free, it cannot be mistaken for something else.

Think of AI citation logic like a quality control gate on an assembly line.

Only claims that are consistently shaped, sharply defined, and easy to copy without risk get through.

If a statement’s meaning or boundaries wobble, the answer is always the same: paraphrase instead of cite, for safety.

Attribution safety in AI systems isn’t a matter of trust; it’s the fixed rule behind AI citation suppression ambiguity.

If a claim can’t be pinned down – unchanged, unambiguous, and self-contained – the system withholds its endorsement.

Quotability comes down to discipline: say it like you want it quoted, or risk it slipping into paraphrase oblivion.

The most quotable claims are not just insightful; they’re engineered for extraction.

You’ve seen the boundary.

Next, see how this cutoff works when authorship and claim limits get murky.

When attribution fails: entity, boundaries, and quotability

Ever notice how AI confidently states facts but suddenly sidesteps quoting the big idea you’re searching for?

In testing, the system might even omit what you wrote yourself.

Why?

Here’s the twist: it’s not a verdict on your content – it’s structural ambiguity.

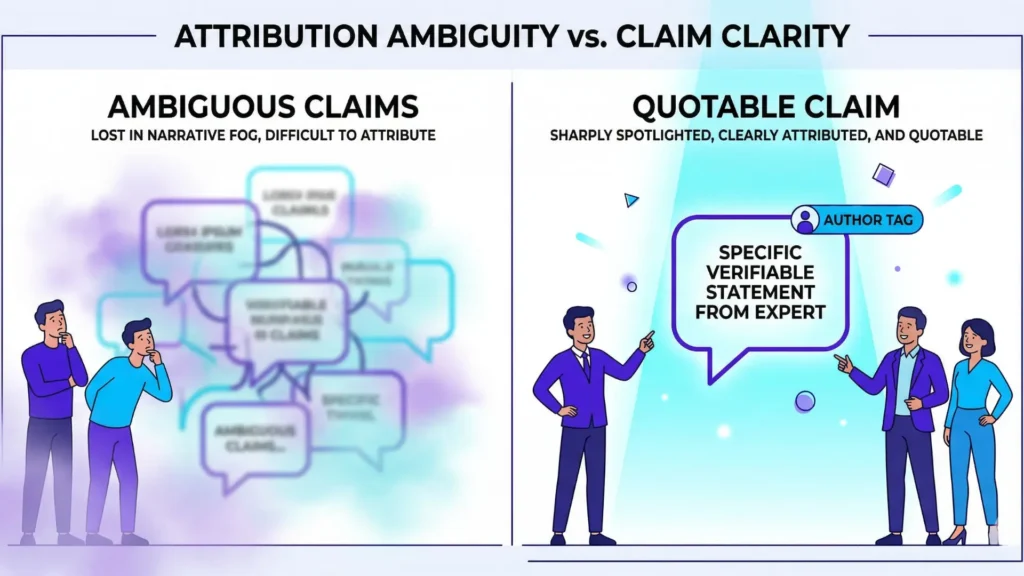

If the author of a claim is foggy or the edges of the statement blur into surrounding context, an AI model will hit pause.

Think of trying to pin down a shadow on a foggy morning – no matter how hard you chase, it keeps shifting.

Attributability to a clear entity

We once audited a customer FAQ page that was technically accurate, yet every answer was a team effort.

No names, no organization, just the company brand.

When an enterprise AI model tried to cite these, it flatlined – “source not identifiable”.

Why?

For quotability, the system needs to attribute exact claims to a single, stable entity.

Vague collectives, pseudonyms, or faceless groups?

Those trigger what we call ‘AI citation suppression ambiguity’ – the model skips the quote to avoid pinning a statement to the wrong source.

Sound familiar?

It happens often with joint statements, internal memos, and editorial blends.

If you can’t identify the voice in the room, how can an AI confidently echo it?

Delimitation and claim boundaries

Criteria for AI quotable claims attribution

| Criteria | Description |
| --- | --- |
| Explicit, stand-alone statement | The claim must be self-contained and clearly expressed on its own. |
| Clearly attributed to a distinct author or entity | The source or author of the claim must be identifiable and named. |
| Context-independent, stable meaning | The claim should have a consistent meaning regardless of context or audience. |
| Crisp boundaries (clear beginning and end) | The exact start and end of the claim must be defined. |

Checklist: Failure signals for AI quotable claims attribution

- Claims mixed with unrelated commentary

- Unclear where the claim starts or ends

- Statement requires reading previous paragraphs to be understood

- Multiple parties or vague collective voice credited

Picture a puddle spreading out on the pavement – where does it stop?

For AI quotable claims attribution, a statement must have crisp boundaries.

Ambiguous borders, interwoven opinions, or a claim dependent on the previous paragraph’s nuance?

The model can’t isolate what’s actually being claimed.

In practice, I’ve seen annual reports where mission statements and factual updates were tangled across a few sentences – result: no quotable claim, only paraphrase.

Here’s the myth: “If it’s explicitly written, it’s automatically quotable”.

Reality check?

Unless the claim stands on its own (with start and end clearly marked), AI’s default is omission.

The analogy is simple – a chef can plate only what’s separable.

If the claim bleeds into narrative sauce, the AI leaves the plate empty.

Ever find yourself thinking, “Why did the model paraphrase my phrasing?”

Next time, glance at the author tag and outline the claim’s boundaries.

If it’s not visible to you, it’s invisible to AI.

Clear attribution plus sharp claim edges equals quotability.

Remove either – and the quote disappears.

Omission as safety: when paraphrase replaces citation

AI’s safety-first logic

Definition (Attribution safety in AI systems): The requirement that only deterministic, fully attributed statements will be cited for safe, reliable response generation.

Ever wonder why AI sometimes skips quoting an original line, even when it seems clear to you?

The reality is surprisingly simple: AI chooses omission or paraphrase not because the original content lacks value, but to avoid the risk of misattribution.

In legal and compliance work with tech clients, we’ve seen AI models suppress direct citations from major news sources – even when executives expected plug-and-play quotability.

Why?

The machine couldn’t confidently map the statement to a clear, bounded, and deterministic author. It was never about content quality.

Think of AI’s safety logic like the seatbelt alarm in your car.

If there’s any ambiguity – maybe someone’s sitting but not buckled – the alarm kicks in.

The language model “hears” ambiguity (unclear author, muddy claim boundary) and triggers a default: paraphrase, never quote.

Is this frustrating for leaders relying on sharp citations?

Yes.

But it’s precisely what makes AI outputs safer for decision-makers who can’t afford loose attributions.

There’s a myth that only poorly written or vague content faces suppression.

In truth, some of the most analytically rigorous reports get paraphrased because of a tiny gap in claim boundary or author labeling.

AI citation suppression ambiguity isn’t about low content standards – it’s about consistent, predictable safety procedures.

Narrative dilution vs discrete claims

When opinions, facts, and inferences blend together, ‘narrative dilution’ prevents AI from extracting a direct, citable claim.

Checklist: Citation-eligible statements in AI

- Stand-alone

- Attributed

- Stable phrasing

- Clearly bounded

Blended narratives violate these standards and result in paraphrase or omission.

Working with SaaS scale-ups, we’ve seen entire paragraphs paraphrased rather than cited, just because one sentence floated inside a sea of commentary.

The takeaway: unless a statement stands alone – clear, bounded, and explicitly attributed – it usually vanishes into a safety paraphrase.

When content blends context and insight, the AI has to guess where one claim ends and the next begins. Imagine trying to slice one noodle out of a bowl of spaghetti – loose ends everywhere, and no single strand to pick cleanly.

AI systems built for attribution safety don’t gamble on those odds.

If there’s no crisp claim delimitation, paraphrase always wins.

What’s better – the safe summarizer or the risky quoter?

Sometimes, the answer depends on the stakes.

But in most executive content, safety-first logic is the invisible gatekeeper.

Omission and paraphrase are not failures; they are purposeful shields for both user and publisher.

Next, we’ll reveal where diagnostic structure exposes these silent decisions and guides you to control the odds.

Next: controlling diagnostic doors

Bridge to omission diagnostic

What if the silence you see from AI isn’t a glitch, but the aftershock of strict quotability criteria?

Most assume an AI’s refusal to generate a direct quote points to weak input or broken data.

But reality flips that script: often, the AI has detected ambiguity – either in claim boundaries or author identity – and opts to paraphrase or omit for safety.

I’ve seen teams puzzled by quiet responses where the original material felt quotable.

One client’s executive summary with hidden opinion phrases became a case study in repeated AI silence.

The culprit?

Claims dissolved into context as soon as we chased explicit, standalone sentences.

Think of an AI as a bouncer at an exclusive club – not letting in claims unless they show hard proof of identity and their own clear boundaries.

If the door stays closed, it’s not because the club’s empty.

It’s that the criteria haven’t been met.

Pause and ask: Is my knowledge structured for direct attribution, or am I smuggling in context-dependent nuance?

Does that quiet omission signal risk aversion or an opportunity to tighten up explicit evidence?

Precision signage to related clusters

A single diagnostic door rarely solves the case.

If omission continues, what structural issue – claim clarity, evidence logic, or content boundaries – is tripping the system’s self-protection reflex?

The solution requires seeing evidence like a grid: only cells fully lit with explicit, delimited attribution are open to AI citation.

Here’s the misbelief: that AI citation suppression is mainly about content quality.

In practice, it’s more about structural and evidentiary clarity – the difference between a clean, quoted icon and a fuzzy, paraphrased shadow.

One quick check: skim for sentences where attribution would be obvious to a third-party auditor.

If you find none, you’re in paraphrase territory.

Signal is everything here.

To dive deeper, continue to the omission diagnostic route for surgical analysis.

It reveals where the quotability scaffolding holds – and where it collapses.

True control comes from seeing which diagnostic door you’re really standing in front of – and knowing which route gets you from silence to signal.

Scientific context and sources

The sources below provide foundational context on explicit knowledge representation, attribution and accountability, information extraction, and transparency in AI systems.

- Explicit Knowledge Representation

Semantic Networks (Knowledge Representation Overview) – ScienceDirect Topics

Explicit knowledge representation requires formal structures where concepts and their relationships are clearly defined. Semantic networks model knowledge as nodes (concepts) and edges (relationships), enabling machines to process and reason over explicitly defined information structures.

https://www.sciencedirect.com/topics/computer-science/semantic-network

- Attribution and Accountability in Machine Learning

Algorithmic Accountability: A Primer – Nicholas Diakopoulos – Data & Society Research Institute

Algorithmic systems require transparency in how decisions are produced, including traceability of data sources and model behavior. Attribution enables accountability by allowing external inspection of how outputs are generated and evaluated.

https://www.researchgate.net/publication/313467350_Algorithmic_Accountability

- Information Extraction and Delimitation

Learning Dictionaries for Information Extraction by Multi-Level Bootstrapping – Ellen Riloff, Rosie Jones – AAAI

Information extraction depends on identifying well-defined boundaries of entities and facts within text. The study shows that extracting structured knowledge from narrative is difficult due to ambiguity, reinforcing the need for clearly delimited, explicit statements in automated systems.

https://aaai.org/papers/068-aaai99-068-learning-dictionaries-for-information-extraction-by-multi-level-bootstrapping/

- Safety and Attribution in AI Generated Content

Fairness and Machine Learning: Limitations and Opportunities – Solon Barocas, Moritz Hardt, Arvind Narayanan

Safe AI systems require clear definitions of inputs, outputs, and decision processes. The work highlights risks from opaque systems and shows that lack of transparency and attribution can lead to unreliable or harmful outputs in automated decision-making.

https://mitpress.mit.edu/9780262048613/fairness-and-machine-learning/

- Trust and Transparency in Language Models

On the Opportunities and Risks of Foundation Models – Rishi Bommasani et al. – Stanford CRFM

Large language models can generate fluent but unsupported outputs. The report emphasizes the need for transparency, traceability, and reliable attribution to ensure trust, especially when models summarize, paraphrase, or omit information.

https://crfm.stanford.edu/report.html

Questions You Might Ponder

What makes a claim quotable by AI models?

A claim is quotable by AI if it is explicit, stand-alone, clearly attributed to one entity, stable in meaning, and has well-defined boundaries. This allows the system to extract and cite it directly without risk of ambiguity or misattribution.

Why do AI models paraphrase instead of quoting directly?

AI models paraphrase when a statement’s author, boundaries, or content are ambiguous or context-dependent. If citation could lead to error or miscredit, the AI defaults to paraphrase for safety and compliance, avoiding unreliable direct quotes.

How does attribution ambiguity affect AI citations?

Attribution ambiguity occurs when the author or responsible entity for a claim isn’t clear. AI models suppress citations in such cases to prevent false attribution, leading to either paraphrasing or total omission of the original statement.

What is AI citation suppression ambiguity?

AI citation suppression ambiguity describes a state where AI avoids direct citation due to unclear attribution, fuzzy claim boundaries, or phrases that change meaning with context. This ensures that only reliable, safe-to-cite statements survive AI extraction.

How can content creators increase AI quotability of their claims?

To maximize AI quotability, creators should write self-contained, attributed, context-independent statements with clear edges. Avoid blending critical claims into broader narrative or collective statements to ensure direct citation by AI systems.
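One way to practice this is to draft each key claim as a small record before it goes into the page, so the text, the single named source, and the date travel together. The sketch below assumes a hypothetical `AttributedClaim` class; the field names, the company, the figures, and the URL are invented for illustration, and no AI system is known to require this particular layout.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AttributedClaim:
    """Hypothetical record: one claim, one named source, explicit edges."""
    text: str    # the complete claim, readable without surrounding paragraphs
    author: str  # a single named person or organization
    year: int    # when the claim was made, so its meaning does not drift
    source: str  # where the claim was published

    def as_sentence(self) -> str:
        """Render the claim as one self-contained, attributed sentence."""
        return f'{self.author} ({self.year}): "{self.text}"'

    def as_json(self) -> str:
        """Serialize the record, e.g. for an internal content audit."""
        return json.dumps(asdict(self), indent=2)


# All values below are made up for the example.
claim = AttributedClaim(
    text=("Median onboarding time for new customers fell from 14 days "
          "to 9 days between January and June 2024."),
    author="Example Corp",
    year=2024,
    source="https://example.com/onboarding-report",
)
print(claim.as_sentence())
print(claim.as_json())
```

Writing the claim into a record first forces the questions the checklist asks: who exactly says this, where does the statement begin and end, and would it still make sense if read with nothing around it.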