What You’ll Learn

attribution safety

Key Takeaways

- AI systems only cite claims when a clear, attributable source is present, prioritizing traceable authorship over narrative style.

- Lack of explicit attribution or fragmented digital identities results in omitted citations, reducing visibility for brands and experts.

- Training processes in large language models dissolve source signals, making repeated and structured attribution essential for inclusion.

- Regulated sectors face even stricter requirements, with AI omitting any claims not supported by recognized or compliant sources.

Attribution safety in AI search means AI systems only cite statements when they can confidently assign ownership to a single, identifiable source.

If clarity or ownership is lacking, AI omits or ignores the claim to avoid citation risk.

Why AI Requires Clear Attribution (What Makes Statements Safe to Quote)

Attribution as a Safety Threshold

Imagine asking an AI to give you one bold insight, and it replies: “Someone, somewhere, once suggested that X might work”.

Would you trust it?

Probably not – and neither would AI models, which are designed to avoid vague or unattributable claims entirely.

AI search models like those powering enterprise-grade tools or commercial knowledge platforms follow one unbreakable rule: they only surface statements if they can tie each claim to a specific, attributable source.

Internal client reviews and extensive A/B testing made this pattern brutally clear.

In client projects at BiViSee, we’ve seen long-form content with indirect statements quietly vanish from AI-generated results, even when those passages carried expert nuance.

Why?

When attribution safety in AI search is at stake, ambiguity is a stop sign.

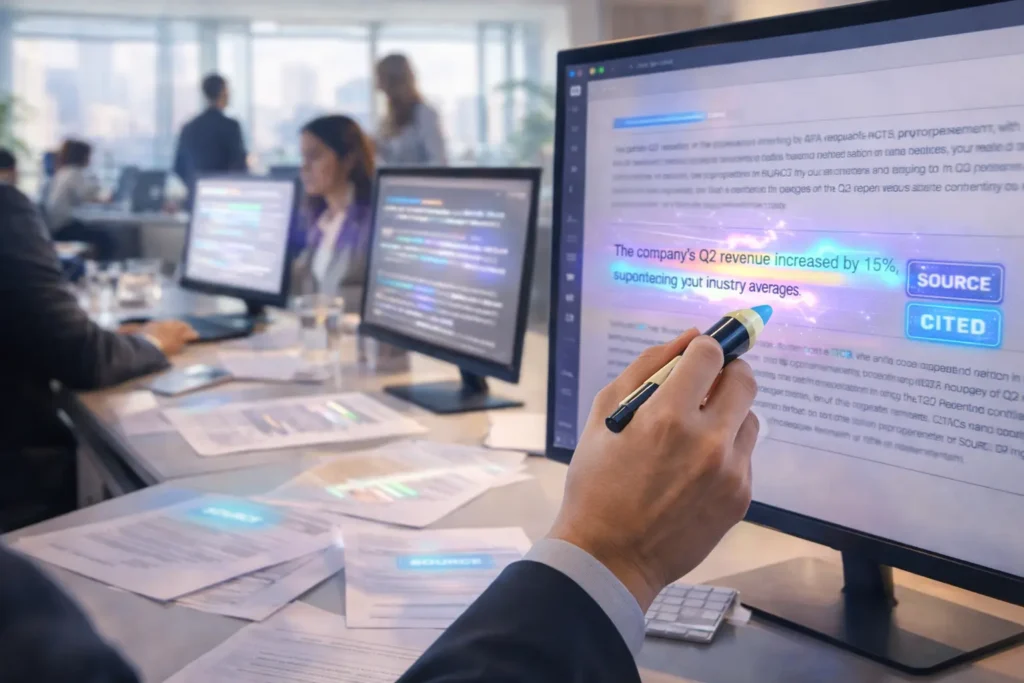

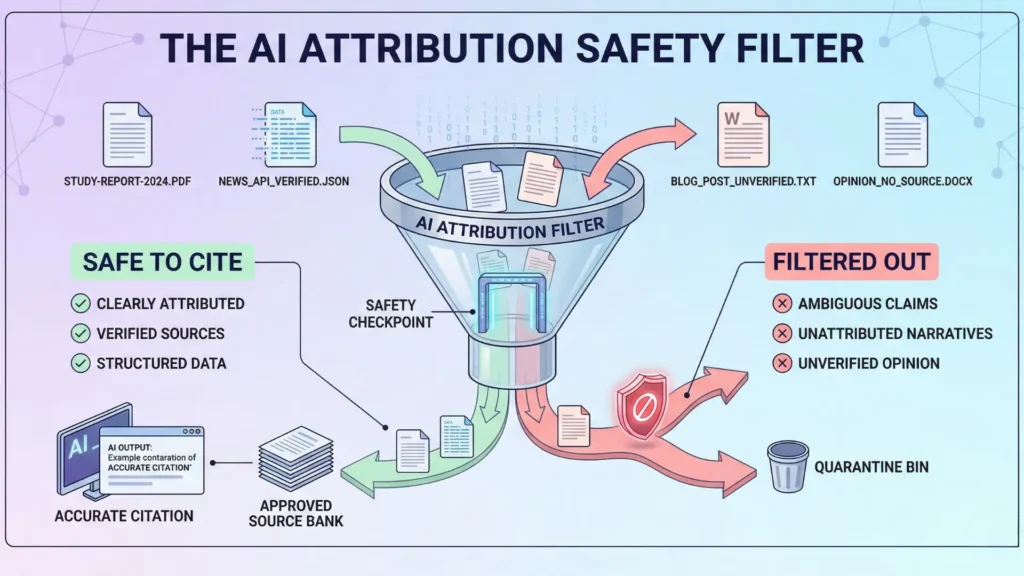

Attribution safety acts like a digital checkpoint: if a statement’s origin isn’t clearly tagged, it’s denied entry into AI-generated answers.

This strict filter prevents hallucination (fabricated or ungrounded knowledge) and holds AI to a higher standard than most legal review.

In our experience, otherwise publishable insights are excluded from AI answers simply for lack of explicit credit – leading to a measurable drop in brand visibility.

Why Implicit or Vague Authority Gets Dropped

Why does AI ignore content phrased like “industry experts agree” or “many believe”?

Because it can’t tie that wisdom to anyone – you wouldn’t buy a painting from “someone famous”, and AI won’t cite a source it can’t name.

Here’s where narrative writing falls flat.

What feels elegant or persuasive to human eyes is usually invisible to AI source attribution engines.

In a recent audit, one SaaS client wondered why their advice page – with rich metaphor and personal voice – only surfaced in two out of 50 AI answers, while a dry competitor article landed nearly every time.

The culprit?

The competitor listed named experts and crystal-clear facts, backed by citations.

Our team decoded the pattern using a basic truth: only attributable claims make it through the algorithmic filter.

Here’s an analogy. Imagine AI as a chef who will only serve dishes with labeled ingredients, no matter how appetizing the unlabeled stuff might smell.

The safety protocol blocks anything mysterious, even when it’s valuable, to spare users from surprises.

This is why AI citation safety means opting for explicit knowledge attribution, not storytelling flair.

Ever think AI might be cautious to a fault? You’re right – but that’s by design.

The myth is that “well-written content always wins”.

In practice, clarity and explicitness decide what’s actually deliverable in an AI-powered answer.

Without a named source, nuanced ideas are left behind.

The foundations of attribution safety in AI search leave no gray areas: only the clearly labeled, fully sourced statement survives.

The result?

If you want to be quoted by AI, your claims must wear a badge.

Key diagnostic takeaway: AI systems only cite claims they can clearly trace.

Elegant narrative is ignored unless it wears an attribution badge.
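One rough way to audit your own copy for this problem is to scan it for phrases that claim authority without naming anyone. The sketch below is an illustration under stated assumptions, not a description of how any AI system actually filters content: the phrase list is hypothetical (only “industry experts agree” and “many believe” come from the examples above), and `find_vague_claims` is a name invented for this example.

```python
import re

# Hypothetical, non-exhaustive phrase list. The first two entries come from
# the article's examples; the rest are illustrative assumptions.
VAGUE_PATTERNS = [
    r"\bindustry experts agree\b",
    r"\bmany believe\b",
    r"\beveryone knows\b",
    r"\bstudies show\b",
    r"\bit is widely known\b",
]
VAGUE_RE = re.compile("|".join(VAGUE_PATTERNS), re.IGNORECASE)


def find_vague_claims(text: str) -> list[tuple[int, str]]:
    """Return (sentence_number, sentence) pairs that contain a vague phrase."""
    # Naive sentence split: break after ., ! or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [
        (number, sentence)
        for number, sentence in enumerate(sentences, start=1)
        if VAGUE_RE.search(sentence)
    ]


if __name__ == "__main__":
    sample = (
        "Industry experts agree that onboarding matters. "
        "According to Jane Doe, CTO at ExampleCo, churn fell after the redesign. "
        "Many believe pricing pages are overrated."
    )
    for number, sentence in find_vague_claims(sample):
        print(number, sentence)
```

Flagged sentences are candidates for rewriting with a named person, organization, or publication. The sentence splitter is deliberately simple and will mis-split abbreviations, so treat the output as a review list, not a verdict.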

Core Failure Modes That Block AI Citation

Core Failure Modes Blocking AI Citation

| Consequence | Description | Result |
| --- | --- | --- |
| Silence Beats Guessing | AI omits unclear claims rather than risk false citation. | Valuable insights are left unquoted, causing invisible content. |
| Fragmented Entities Undermine Trust | Inconsistent digital identities break AI source confidence. | Brands lose citation visibility despite expertise. |

Training Erases Source Metadata

Did you know that transformers – the foundational tech behind AI search – don’t actually remember where most of their facts came from?

Once ingested, sources dissolve into a soup of statistical patterns.

The direct links between a claim and its origin vanish during training.

Does this mean a model can reliably reconstruct, after training, which explicit source a given statement came from?

Not a chance.

In practice, I’ve seen well-researched reports by clients get quoted in summary, but not directly cited – simply because the connection between claim and author got lost in the model’s learning phase.

For B2B technology firms especially, this can result in expensive whitepapers disappearing from search results and thought leadership losing its edge.

Here’s the uncomfortable analogy: Imagine pouring several colors of ink into water. At first, you see distinct hues, but soon it’s just gray. AI models see knowledge the same way – the vibrant source is blended out. This is why AI citation safety is limited to claims it can trace to a unique, recognizable origin.

Why does this matter?

Because unless knowledge is encoded with explicit, frequent signals of source – think concrete bylines, repeated branding, or stand-alone attributions – AI defaults to omission.

In our agency’s experience, even legally mandated disclaimers are sometimes omitted by search tools because they don’t “bind” to any one author or page section in training data.

Myth: “If it’s online, AI can cite it”. In reality, AI source attribution depends entirely on how clearly the content is labeled, structured, and repeated in the training set.

Key diagnostic takeaway: Without explicit, visible authorship and repeated branding, even well-researched content will be omitted from AI citations.
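One common way to make authorship machine-readable is schema.org markup embedded as JSON-LD. The sketch below builds such a block in Python. It is a minimal illustration under assumptions: the article does not say which signals any particular AI system reads, the function name `article_jsonld` and all sample values are hypothetical, and only the schema.org types and properties (`Article`, `Person`, `Organization`, `headline`, `author`, `publisher`, `datePublished`) are standard vocabulary.

```python
import json


def article_jsonld(
    headline: str,
    author_name: str,
    author_url: str,
    publisher_name: str,
    date_published: str,
) -> dict:
    """Build a schema.org Article object with an explicit author and publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,  # profile page for the named author
        },
        "publisher": {
            "@type": "Organization",
            "name": publisher_name,
        },
    }


if __name__ == "__main__":
    # All values below are placeholders for illustration only.
    data = article_jsonld(
        headline="How Onboarding Affects Churn",
        author_name="Jane Doe",
        author_url="https://example.com/authors/jane-doe",
        publisher_name="ExampleCo",
        date_published="2024-01-15",
    )
    # Wrap the JSON in the script tag that pages use to embed JSON-LD.
    print('<script type="application/ld+json">')
    print(json.dumps(data, indent=2))
    print("</script>")
```

Using the same author name and profile URL on every page keeps the byline consistent with the visible one, which is the point of the “repeated branding” advice above.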

Implicit Knowledge Doesn’t Map to Source

Let’s get specific: Many claims live in the gray zone of common knowledge or ‘felt’ expertise – industry wisdom that feels obvious to insiders.

But here’s the rub: If nobody owns the sentence on the web, AI can’t reference it safely.

I’ve reviewed countless executive bios that mention leadership results or cultural impact in sweeping terms, yet lack any citation anchors.

When these pass through AI search, they vanish – not for lack of value, but due to attribution ambiguity.

The system senses the risk of misattribution and simply refuses to guess.

It’s like a news aggregator trying to quote a conversation overheard in the subway.

Unless someone, somewhere, has staked their name to a statement, AI will treat it as invisible.

This exclusionary filter is a core part of attribution safety in AI search – it favors explicit knowledge attribution and drops implicit or collective knowledge, no matter how true.

Ever wonder why some competitors constantly appear in AI-powered answers, while others – even with rich web reputations – are missing?

This often traces back to inconsistent, unclear, or overly narrative-driven content that fails the AI trust signals test.

The implications: If your content relies on implication or context to demonstrate authority, you’re at the mercy of AI omission due to ambiguity.

The lesson?

Attribution safety isn’t a technicality; it shapes which voices inform the future of AI search.

Business leaders who understand these blindspots can spot lost visibility before it erodes their brand authority.

Key diagnostic takeaway: AI omits all knowledge that lacks a named, traceable owner, even when widely recognized.

Real‑World Consequences of Attribution Failure

Consequences of Attribution Failure in AI Search

| Failure Mode | Description | Impact on AI Citation |
| --- | --- | --- |
| Training Erases Source Metadata | Source details vanish during AI model training as facts blend into statistical patterns. | Resulting citations lose explicit source links, leading to omission. |
| Implicit Knowledge Doesn’t Map to Source | Claims based on general or collective knowledge lack clear ownership. | AI excludes these claims due to attribution ambiguity. |

Silence Beats Guessing

Ever wondered why your company gets mentioned in AI-generated answers – yet you see zero referral traffic or citations?

Here’s the twist: AI search systems will quietly erase any claim they can’t pin to an explicit, attributable source.

It’s not about being unfair or overly cautious.

It’s a survival mechanism. Imagine an autopilot that would rather pause the jet midair than risk flying blind off sketchy instructions.

A frequent pattern we see?

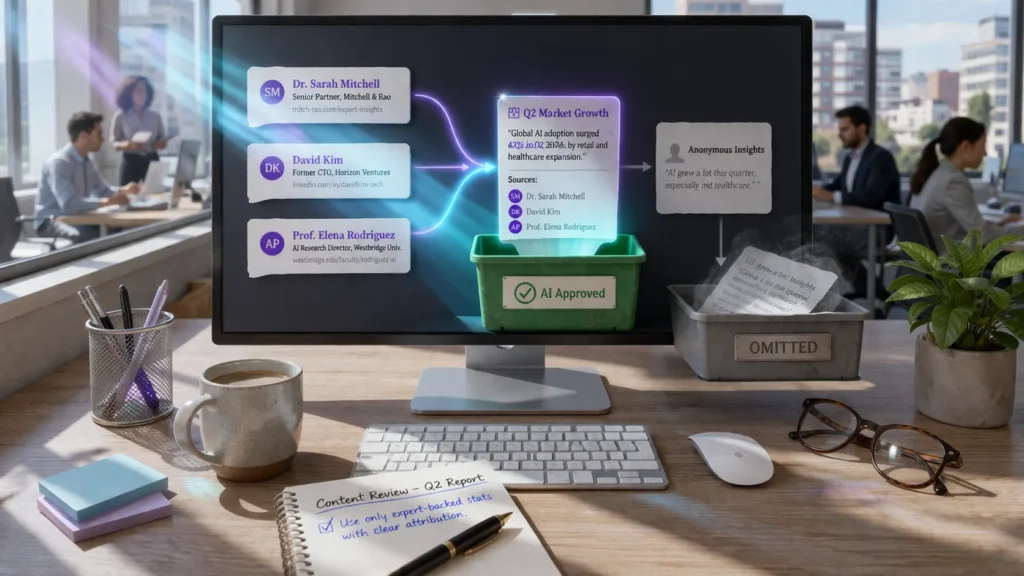

A brand’s “signature insights” – the kind shared in executive interviews or podcast soundbites – completely omitted from direct answers.

The culprit isn’t quality, but lack of explicit knowledge attribution.

One client put hundreds of hours into authoritative thought leadership, only for their name to drop from answer boxes because their data was cited in roundabout prose or buried in slide decks.

AI trusts what it can point to, not what sounds true.

Think of it as a librarian only recommending books with crisp title pages.

The myth: more nuance equals more authority.

In reality, ambiguity equals omission.

AI citation safety demands answers grounded in clear, unambiguous sourcing – anything less, and the machine defaults to silence.

One could ask, “Did we make it too hard for the system to see who said what?”

That’s not a minor detail.

It’s the difference between being quoted and being ghosted by the search engine.

Key diagnostic takeaway: When in doubt, AI will choose silence over risky citation – ambiguous sources guarantee omission.

Fragmented Entities Undermine Trust

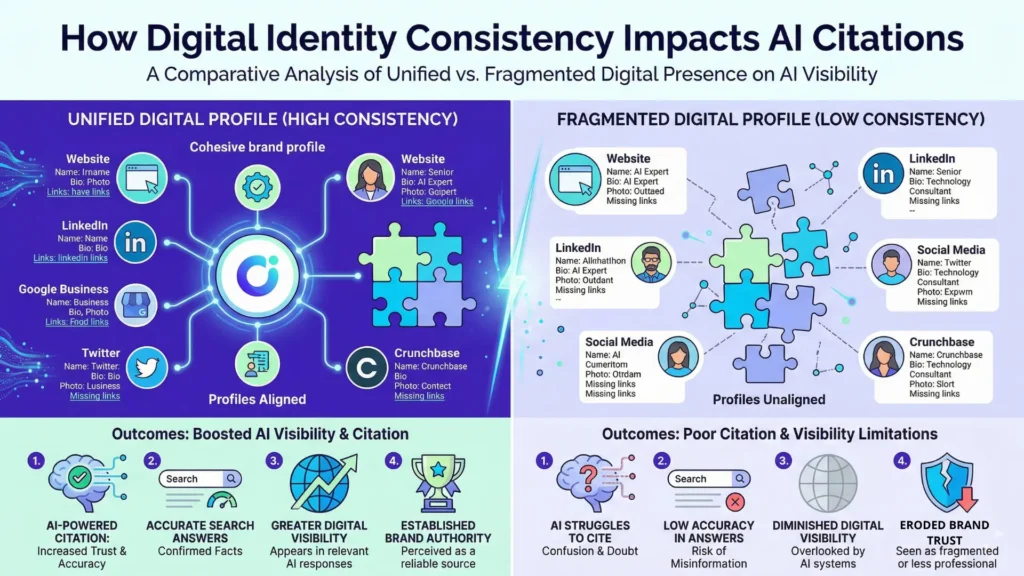

Consistency isn’t decoration – it’s the lifeblood of AI source selection.

If your company’s name, product lines, or spokespersons are referenced in inconsistent ways across different platforms, AI source attribution shatters.

We’ve watched how, in just a few weeks, a brand with split naming conventions vanished from competitive answer cards, even as competitors with unified digital identities gained visibility.

Picture AI as assembling a jigsaw puzzle in the dark – if the pieces (brand names, author identities, URLs) don’t fit neatly, the software deems the picture incomplete.

In a recent audit, we traced a sharp drop in citation rates directly to minor, seemingly harmless differences in the way subject matter experts (SMEs) were tagged, or the lack of structured author bios.

The result: fragmented entities and a growing perception that the original source can’t be confidently trusted or cited.

It all converges here: attribution safety in AI search isn’t won by being the loudest voice.

It’s claimed through clarity, consistency, and signals the machine can trace.

If you’re seeing sudden silence or missing citations, the cause usually traces back to invisible cracks in your attribution foundation.

Key diagnostic takeaway: Inconsistent digital identities undermine citability – AI needs a single, unified entity reference.
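A simple audit for fragmented naming is to collect how a brand or expert is written on each platform and group the spellings that collapse to the same normalized form. The sketch below is one possible approach under assumptions, not how any AI system resolves entities: the suffix list, the function names `normalize` and `find_fragmented`, and the sample names are all hypothetical.

```python
import re
from collections import defaultdict

# Hypothetical suffix list; extend it for your own naming conventions.
SUFFIXES = {"inc", "llc", "ltd", "gmbh", "corp"}


def normalize(name: str) -> str:
    """Lowercase the name, drop punctuation, and remove corporate suffixes."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    return " ".join(token for token in tokens if token not in SUFFIXES)


def find_fragmented(mentions: dict[str, str]) -> dict[str, set[str]]:
    """Group spellings by normalized form; keep groups with several spellings.

    `mentions` maps a platform label to the name exactly as written there.
    """
    variants: dict[str, set[str]] = defaultdict(set)
    for written in mentions.values():
        variants[normalize(written)].add(written)
    return {key: names for key, names in variants.items() if len(names) > 1}


if __name__ == "__main__":
    # Placeholder data for illustration only.
    mentions = {
        "website": "Acme Analytics, Inc.",
        "linkedin": "ACME Analytics",
        "podcast": "Acme-Analytics",
    }
    for entity, spellings in find_fragmented(mentions).items():
        print(entity, "->", sorted(spellings))
```

Each group in the output is one entity written several ways. Picking a single canonical spelling and applying it everywhere is the fix the section argues for.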

Next Steps: Where Attribution Safety Leads

Explicit vs Implicit Knowledge (Bridge to Structure)

Does it surprise you that some of the best-written insights get ignored by search AI – not because they lack substance, but because attribution isn’t clear?

One marketer we worked with saw organic traffic stall, despite sharp, insightful content.

The missing piece: statements were locked in conversational flow, never explicitly tied to an identifiable source or research.

Search AI overlooks these.

It’s almost like a chef refusing to serve a dish if the recipe card doesn’t list every ingredient’s origin – no matter how rich the aroma.

The bigger truth? AI citation safety means systems only endorse what they can prove.

If a fact is sourced to a real, credited authority, it’s safe.

If a claim floats as “everyone knows” or is hidden in a vague reference, it’s out.

We see this pattern across platforms: unanchored expertise disappears, even if written by actual specialists.

The pain for brands is real – hours spent polishing copy that gets filtered out for lack of explicit knowledge attribution.

Why do AI systems prefer explicit over implicit?

Because every implied, ambiguous, or collective-sounding claim increases the risk of hallucination or false knowledge blending.

That’s the cost of AI trying to be too careful.

Are you unintentionally writing invisible content?

When Domain Signals Matter

Here’s a twist: in regulated sectors, the bar for attribution safety in AI search rises even higher.

Fields like finance and health demand not just any citation but the right citation – a recognized journal, a regulatory body, or an acknowledged expert.

We’ve seen legal teams advise against letting generative AI reuse content unless its authority is crystal-clear and its trail verifiable.

Ambiguity isn’t just filtered out – it flags risk.

Think of AI source selection failure like an airport security line.

Most passengers breeze through, but one untagged bag stalls the whole queue.

With ambiguous sources, AI systems don’t gamble – they remove or silence suspect claims.

So, what’s next?

The pathway leads through structuring claims, making sources visible, and tuning in to sector signals that AI citation engines watch for.

There’s no shortcut – clarity wins.

The bridge from frustration to discoverability is explicit knowledge, marked by clear authority and domain credentials.

Scientific context and sources

The sources below provide foundational context for source credibility, explainability, uncertainty, entity disambiguation, and hallucination in AI and information systems.

- Attribution and Source Credibility in Information Systems

“Credibility and trust of information in online environments: The use of cognitive heuristics” – Metzger, Miriam J., Flanagin, Andrew J. – Journal of Pragmatics

Explains how users rely on source cues and heuristics to assess credibility, showing that clear attribution directly affects trust in digital information systems.

https://flanagin.faculty.comm.ucsb.edu/CV/Metzger%26Flanagin%2C2013%28JoP%29.pdf

- AI Explainability and Trust

“Explainable Artificial Intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI” – Arrieta, Alejandro Barredo, et al. – Information Fusion

Defines frameworks for explainable AI and emphasizes transparent, traceable reasoning as essential for trust and accountability in AI systems.

https://www.sciencedirect.com/science/article/pii/S1566253519308103

- Information Omission and Ambiguity in Machine Learning Systems

“A Survey on Uncertainty Estimation in Deep Learning Classification Systems from a Bayesian Perspective” – Mena, J., Pujol, O., Vitria, J. – ACM Computing Surveys

Reviews methods for modeling predictive uncertainty in machine learning, showing how incomplete or ambiguous data affects reliability and decision-making.

https://dl.acm.org/doi/10.1145/3477140

- Entity Disambiguation and Consistency

“Entity Disambiguation with Web Links” – Chisholm, Andrew, Hachey, Ben – Transactions of the Association for Computational Linguistics

Demonstrates how consistent linking and contextual signals improve entity resolution across documents, supporting stable identity representation in information systems.

https://aclanthology.org/Q15-1011.pdf

- The Hallucination Problem in Large Language Models

“On Faithfulness and Factuality in Abstractive Summarization” – Maynez, Joshua, et al. – Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics

Investigates factual inconsistencies in generated text and shows how lack of grounding leads to hallucinated outputs in language models.

https://aclanthology.org/2020.acl-main.173/

Questions You Might Ponder

What is attribution safety in AI search, and why does it matter?

Attribution safety ensures AI systems only cite information clearly tied to a single, identifiable source. This safeguards accuracy, prevents fabricated claims, and maintains user trust by avoiding ambiguous or unverifiable statements in AI-generated answers.

Why do AI systems ignore unnamed or vaguely sourced claims?

AI models drop statements lacking clear attribution because these cannot be confidently traced to a verified source. Citing such claims increases the risk of hallucinating or misinforming users, so AI defaults to omission for safety and integrity.

How can inconsistent branding hurt your company’s AI citation rates?

When company names, spokespersons, or product lines are referenced inconsistently, AI models struggle to link claims to a unified entity. This fragmentation undermines trust and visibility, causing brands to be frequently omitted from AI-generated citations.

What types of knowledge do AI search engines prefer to cite?

AI platforms prioritize explicitly sourced claims, including named authors, cited publications, and repeated, branded mentions. Implicit knowledge, collective expertise, or narrative-driven content without a clear owner is unlikely to be cited or surfaced in answers.

How does attribution safety impact sectors with strict regulation?

Industries such as finance and healthcare require even more rigorous attribution safety. AI systems in these fields will only cite claims from recognized authorities or accredited journals, actively filtering out any information lacking explicit compliance or verifiable origin.