
Core Web Vitals SEO Impact

Key Takeaways

- Core Web Vitals SEO impact is mostly about stability, not speed – CWV rarely “win” rankings, but unstable experiences can stop rankings from holding in competitive SERPs.

- CWV sit inside Page Experience as a filter, not a boost – they reduce evaluation risk after relevance and authority already make you a viable candidate.

- The three metrics describe reliability signals – LCP (main content appears), INP (interaction responds), CLS (layout stays stable); thresholds mark risk bands, not score targets.

- Field data drives the real story – search systems “see” aggregated real-user behavior (CrUX/Search Console), while lab tests help diagnose but do not reflect ranking inputs directly.

Most companies don’t lose rankings because they are slow.

They lose them because the experience is unstable.

We’ve seen pages load in 1.4 seconds and still slide.

We’ve seen slower pages hold top 3.

Speed alone does not decide trust.

Search systems look for repeatable behavior across sessions, devices, and traffic spikes.

They prefer pages that respond the same way when people tap, scroll, and click.

Imagine a store where the floor shifts slightly.

You might stay for a moment.

You won’t relax enough to buy.

Core Web Vitals are not a growth shortcut.

They are stability signals that reduce evaluation uncertainty.

That difference changes how you should read performance data.

Core Web Vitals do not create rankings, but instability can prevent rankings from holding.

They are three user-experience metrics: LCP, INP, and CLS, covering loading, responsiveness, and layout stability.

What this is: a model for stability risk in search evaluation.

What this is not: a checklist for improving performance.

This page explains stability signals and evaluation risk.

It does not cover optimizations, fixes, or audits.

What we cover: experience stability, thresholds as risk bands, field vs lab signals, and when CWV decide outcomes.

What we do not cover: tool walkthroughs, score chasing, or developer checklists.

Glossary (quick):

- Page Experience – the set of experience-related signals Google considers in ranking

- Field data – aggregated real-user experience

- Lab data – simulated test results

- CWV – LCP, INP, CLS metrics

Experience quality as an SEO filter

Core Web Vitals rarely “win” you rankings.

But bad experience can stop you from keeping them.

Is this the reason rankings won’t stick?

Google has a practical problem.

It has to choose results that behave predictably at scale.

Predictability reduces complaints, pogo-sticking, and re-querying.

That is why search systems look at experience.

Not because they love speed scores.

Because unstable pages create risk.

Think of it like a car with unreliable brakes.

It may be fast.

You still won’t trust it at speed.

In real audits, we see this pattern a lot.

A site climbs after a relaunch.

Then it slides back within 3-6 weeks.

Nothing “SEO” changed on the page.

When we check the field data, the story shows up.

Mobile users get late layout jumps.

Buttons shift under the thumb.

Clicks miss, and sessions end early.

One ecommerce client had this exact issue.

Category pages ranked top 5 for months.

Then organic revenue dropped 18% in 30 days.

The pages still indexed fine, but the experience got noisy.

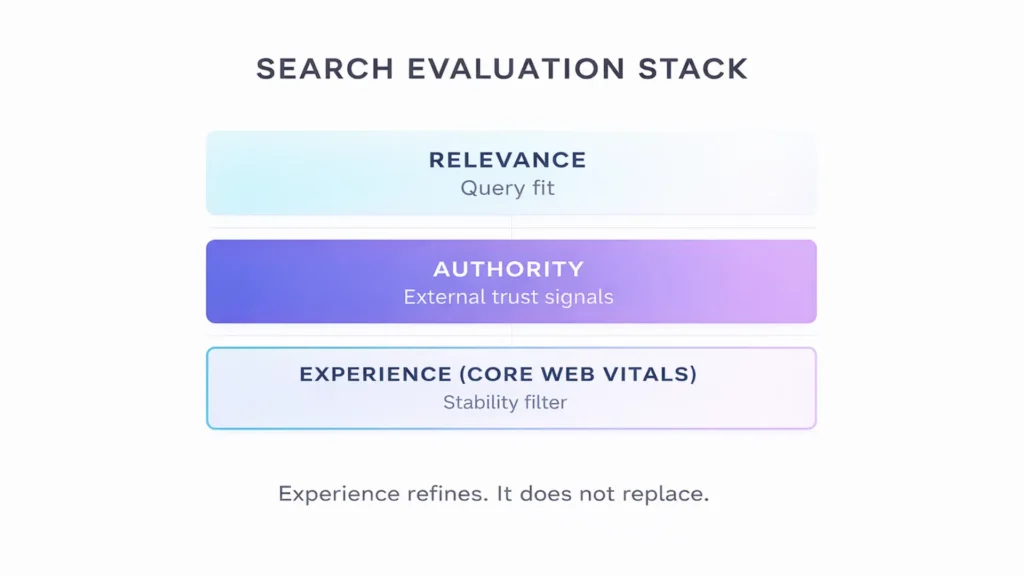

Core Web Vitals are part of Page Experience signals.

They sit behind relevance and authority in the decision stack.

Relevance decides fit, authority adds confidence, and experience reduces risk.

So what can CWV do?

They can lower the chance you get filtered out.

They can make your rankings more stable in tight SERPs.

What can’t CWV do?

They can’t turn weak relevance into demand.

They can’t replace authority when competitors are stronger.

For the full SEO system model, see the SEO capability hub.

This page stays on experience stability.

Experience signals don’t create winners. They remove fragile pages from consideration.

Up next: how these signals enter evaluation.

How Core Web Vitals fit into search evaluation

Search engines do not see your design.

They see patterns of behavior.

They aggregate real user interactions.

They measure how your pages behave under pressure.

Then they classify that behavior into signals.

Those signals help the system predict whether users will stay, interact, and return without friction.

Core Web Vitals are reliability signals.

They measure whether content loads predictably.

They measure whether interactions respond consistently.

They measure whether layouts stay stable while rendering.

This is not about aesthetics.

It is about uncertainty.

Unstable behavior also complicates evaluation.

If rendering, interactivity, or layout changes are inconsistent, systems get mixed signals about what the page is.

Search systems reduce uncertainty whenever possible, because unstable pages increase the probability of abandonment and short sessions, which weakens confidence in the result.

We saw this clearly with a SaaS client last year.

Content was strong.

Authority was growing.

Yet rankings fluctuated weekly.

When we examined field data, interaction delays spiked on mobile during peak traffic hours.

Nothing broke technically, but response times varied enough to create inconsistent user signals.

What happened?

Positions did not crash.

They just stopped compounding.

Core Web Vitals sit inside Google’s Page Experience layer.

That layer acts as a filter.

It does not overpower relevance.

It refines the final selection when several pages look similar.

This is an important shift.

CWV are not a “speed hack”.

They are trust indicators inside evaluation logic.

Imagine two equally relevant results.

One loads cleanly, reacts smoothly, and stays visually stable.

The other jitters and delays interactions.

Which one feels safer to serve again?

That bias is subtle.

But at scale, subtle filters change outcomes.

In practice, this acts like a stabilizer.

Experience becomes a stability multiplier rather than a growth lever.

Experience signals don’t replace content strength. They reduce evaluation doubt when content is already competitive.

Next: what the three metrics actually capture.

What Core Web Vitals actually measure

Core Web Vitals measure experienced performance, not technical capacity.

They describe how stable a page feels during real interaction.

They do not evaluate server horsepower or code elegance.

They evaluate perception at scale.

Search systems use these signals to estimate whether interaction patterns are predictable across real sessions.

Largest Contentful Paint – LCP

LCP measures how long it takes for the largest image or text block in the viewport to render, which serves as a proxy for when the main content has loaded.

In practical terms, LCP represents the moment when the user receives confirmation that the page has delivered its primary promise. It is not about total page load time. It is about when the most meaningful element becomes visible. If that moment is delayed or inconsistent across sessions, confidence erodes even if other parts load quickly.

This is the moment a user feels the page is usable.

If that moment arrives quickly and consistently, confidence builds.

If it lags or varies, hesitation appears, especially on mobile connections where users are less patient.
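To make “the most meaningful element” concrete: the browser reports LCP candidates as progressively larger images or text blocks render, and it stops reporting new candidates once the user interacts with the page (tap, scroll, or key press). The sketch below models only that rule, using hypothetical (render time, rendered area) records; it is a simplification for illustration, not Chrome's implementation.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class PaintCandidate:
    """Hypothetical record of an image or text block rendering in the viewport."""
    render_time_ms: float
    area_px: int


def largest_contentful_paint(
    candidates: Sequence[PaintCandidate],
    first_input_ms: Optional[float] = None,
) -> Optional[float]:
    """Return the render time of the largest element painted before the first input.

    Simplified model: a later candidate replaces the current one only if it is
    larger, and anything rendered at or after the first user input is ignored.
    """
    best: Optional[PaintCandidate] = None
    for c in sorted(candidates, key=lambda c: c.render_time_ms):
        if first_input_ms is not None and c.render_time_ms >= first_input_ms:
            break  # no new LCP candidates are reported after user input
        if best is None or c.area_px > best.area_px:
            best = c
    return None if best is None else best.render_time_ms


paints = [
    PaintCandidate(400, 12_000),     # headline text
    PaintCandidate(1_900, 180_000),  # hero image
    PaintCandidate(2_600, 20_000),   # smaller promo block
]
print(largest_contentful_paint(paints))                        # 1900
print(largest_contentful_paint(paints, first_input_ms=1_500))  # 400
```

The second call shows why LCP can vary between sessions of the same page: a user who scrolls early "freezes" the metric on whatever had rendered by then.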

We worked with a publisher where LCP averaged 2.3 seconds.

On paper, it was “good”.

But during traffic spikes, it jumped above 3.5 seconds.

Rankings held, but engagement dropped 11% in two weeks.

Consistency mattered more than the average.

Interaction to Next Paint – INP

INP measures how fast the page responds after a user interacts.

INP evaluates responsiveness across the whole visit. It reports approximately the slowest interaction observed, ignoring one outlier for every 50 interactions so that a single random hiccup on an interaction-heavy page does not define the score. This makes it a reliability metric, not a reaction-time metric. Systems care about whether responsiveness holds under load, not whether a single click was fast.

It captures overall responsiveness across clicks, taps, and key presses.

Google replaced FID with INP because FID measured only the first interaction, while INP evaluates responsiveness across the full session.

That shift tells you something.

Search systems care about sustained reliability, not a single fast reaction.

Cumulative Layout Shift – CLS

CLS measures how much visible elements move unexpectedly, both while the page loads and afterward.

CLS quantifies unexpected visual instability. It does not measure animation or intentional movement. It measures layout shifts that occur without user input. When elements relocate unpredictably, users lose spatial confidence. At scale, that instability introduces behavioral noise into session data.

It tracks unexpected visual shifts.

When buttons slide under a thumb mid-tap, trust drops instantly.

That friction is small but repeated thousands of times.

Picture reading a report while someone nudges the page every second.

You might tolerate it briefly.

You would not trust it.
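Google's CLS definition groups layout shifts into “session windows”: bursts of shifts less than one second apart, capped at five seconds per window. Each shift's score is its impact fraction multiplied by its distance fraction, and CLS is the largest window total. Shifts within 500 ms of user input are excluded as expected movement. The sketch below applies that rule to hypothetical shift records; the `LayoutShift` type and its fields are illustrative, since in practice the browser computes per-shift scores itself.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class LayoutShift:
    """Hypothetical record of one layout shift."""
    time_ms: float
    score: float                    # impact fraction * distance fraction
    had_recent_input: bool = False  # shift followed user input, so it is expected


def cumulative_layout_shift(shifts: Sequence[LayoutShift]) -> float:
    """Return the largest session-window total of unexpected layout shifts."""
    cls = 0.0
    window_total = 0.0
    window_start: Optional[float] = None
    last_time: Optional[float] = None
    for s in sorted(shifts, key=lambda s: s.time_ms):
        if s.had_recent_input:
            continue  # user-initiated movement does not count
        if (window_start is not None
                and s.time_ms - last_time < 1_000
                and s.time_ms - window_start < 5_000):
            window_total += s.score  # same burst: keep accumulating
        else:
            window_total = s.score   # gap too long or window full: start a new one
            window_start = s.time_ms
        last_time = s.time_ms
        cls = max(cls, window_total)
    return cls


shifts = [
    LayoutShift(500, 0.05),    # banner pushes content down
    LayoutShift(1_200, 0.04),  # late font swap, same burst
    LayoutShift(4_000, 0.06),  # ad slot loads, new window
    LayoutShift(4_300, 0.30, had_recent_input=True),  # menu the user opened
]
print(round(cumulative_layout_shift(shifts), 2))  # 0.09
```

Because only the worst burst counts, one cluster of late-loading widgets can set a page's CLS even if the rest of the visit is perfectly still.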

What these three metrics describe together

Together, LCP, INP, and CLS describe three layers of experience stability:

- Delivery stability – does meaningful content appear predictably?

- Interaction stability – does the interface respond consistently?

- Visual stability – does the layout stay spatially reliable?

That “behavior profile” is what evaluation systems can compare.

They do not infer intent.

They observe outcomes across many sessions and devices.

Core Web Vitals are not technical trophies.

They are measurable expressions of user confidence.

Next: the thresholds, and how they function as risk bands.

Thresholds as risk-signal boundaries

The numbers are not goals.

They are boundaries.

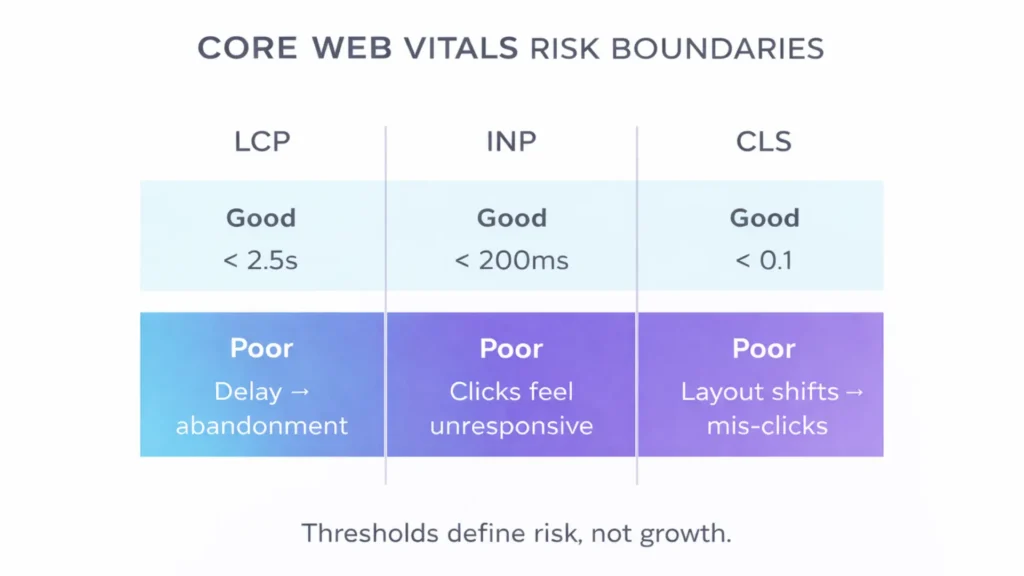

Google defines three performance bands: Good, Needs improvement, Poor.

| Metric | What it reflects | “Good” threshold (75th percentile) | Risk signal when poor |
| --- | --- | --- | --- |
| LCP | Main content appears | ≤ 2.5 s | Users wait, then abandon |
| INP | Interaction responds | ≤ 200 ms | Clicks feel “dead” |
| CLS | Layout stays stable | ≤ 0.1 | Mis-clicks and frustration |
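The bands work as plain cut-offs. The sketch below encodes Google's published boundaries (LCP 2.5 s and 4 s, INP 200 ms and 500 ms, CLS 0.1 and 0.25) and treats a page as passing only when all three metrics, taken at the 75th percentile of field data, land in the good band. The names `THRESHOLDS`, `rate`, and `passes_assessment` are illustrative, not part of any Google tool.

```python
from typing import Mapping

# Published boundaries: (upper limit of "good", upper limit of "needs improvement").
# Anything above the second value is "poor".
THRESHOLDS = {
    "LCP": (2_500, 4_000),  # milliseconds
    "INP": (200, 500),      # milliseconds
    "CLS": (0.1, 0.25),     # unitless layout shift score
}


def rate(metric: str, p75_value: float) -> str:
    """Map a 75th-percentile field value to its risk band."""
    good, needs_improvement = THRESHOLDS[metric]
    if p75_value <= good:
        return "good"
    if p75_value <= needs_improvement:
        return "needs improvement"
    return "poor"


def passes_assessment(p75_values: Mapping[str, float]) -> bool:
    """A page passes only if all three metrics are in the good band."""
    return all(rate(m, p75_values[m]) == "good" for m in THRESHOLDS)


page = {"LCP": 2_300, "INP": 240, "CLS": 0.05}
print({m: rate(m, v) for m, v in page.items()})
# {'LCP': 'good', 'INP': 'needs improvement', 'CLS': 'good'}
print(passes_assessment(page))  # False
```

Note that `rate("LCP", 1_300)` and `rate("LCP", 2_300)` both return `"good"`: once a metric is inside the band, further gains do not change its classification.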

These are not arbitrary lines.

They are based on research into how users perceive delay and instability, balanced against what real sites can achieve, as measured across millions of sessions in the Chrome User Experience Report.

When pages cross into the “poor” range, abandonment probability rises sharply.

Users hesitate.

Clicks drop.

Sessions shorten.

Think of thresholds like credit ratings.

AAA does not guarantee profit.

But junk status limits access.

We saw this clearly in a B2B marketplace redesign.

Templates slipped from “good” to “needs improvement” after adding heavy comparison widgets.

Organic traffic did not collapse overnight, but within 60 days rankings softened across 40% of competitive keywords.

The content did not change.

Authority did not change.

Risk perception did.

Passing thresholds reduces friction signals in evaluation.

Failing thresholds increases the likelihood that another page feels safer to serve.

Notice the distinction.

The objective is not to chase a perfect 100.

The objective is to stay out of the danger band.

In competitive SERPs, small risk differences accumulate.

One page feels consistent.

The other produces doubt.

Systems tend to favor the consistent one.

Thresholds do not promise growth. They define the boundary where instability begins to limit it.

Next: the myths that waste the most time.

Stability vs speed myths

The most persistent belief in SEO is simple.

Faster ranks higher.

That belief survives because it feels logical.

But it is incomplete.

We have audited sites that load in under one second.

They still struggle to hold positions.

We have also seen slower sites dominate competitive terms.

Here is the non-obvious truth.

Search systems reward predictability more than peak speed.

A page that loads in 2.2 seconds every time is easier to evaluate than a page that loads in 1.2 seconds sometimes and 4 seconds under load.

Consistency reduces noise in user behavior.
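A quick calculation shows why the average can mislead. Field data is judged at the 75th percentile, not the mean. The sketch below compares two hypothetical pages over 100 loads each: one that loads in 2.2 s every time, and one that loads in 1.2 s on 70 loads and 4.0 s on the 30 loads that happen under heavier traffic. The 70/30 split is an assumption for illustration, and the nearest-rank percentile is a simplification of how field tools summarise a distribution.

```python
import math
from statistics import mean
from typing import Sequence


def p75(samples: Sequence[float]) -> float:
    """75th percentile by the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


steady = [2.2] * 100             # loads in 2.2 s every time
spiky = [1.2] * 70 + [4.0] * 30  # fast normally, slow under load

for name, loads in (("steady", steady), ("spiky", spiky)):
    print(f"{name}: mean {mean(loads):.2f} s, p75 {p75(loads):.2f} s")
# steady: mean 2.20 s, p75 2.20 s
# spiky: mean 2.04 s, p75 4.00 s
```

The spiky page has the better average, yet at the 75th percentile it sits at the top of the “needs improvement” LCP band, while the steady page is comfortably good.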

Another myth sounds convincing.

“Improving Core Web Vitals will boost rankings”.

Core Web Vitals do not inject visibility.

They reduce friction in evaluation.

Imagine two equally relevant pages.

Both answer the query well.

One feels stable.

One jitters and delays reactions.

Which one would you serve repeatedly at scale?

This is not about milliseconds alone.

It is about trust under repetition.

In one SaaS case, a team cut average load time by 35%.

They expected ranking growth.

Nothing moved.

Why?

Their relevance and authority were the actual bottlenecks.

Performance was already within safe boundaries.

Speed improvements beyond stability thresholds rarely produce visible ranking jumps, because evaluation weight shifts back to content strength and authority signals.

Speed is necessary up to a point.

Beyond that point, it becomes marginal.

Core Web Vitals are not a shortcut. They are a stability floor, not a visibility accelerator.

Next: how instability stalls growth without a crash.

Why instability blocks compounding SEO

Instability rarely causes a dramatic crash.

It causes stagnation.

Traffic plateaus.

Rankings fluctuate slightly.

Growth stops compounding.

Field data is aggregated behavior.

It reflects thousands of real sessions.

When experience varies, those signals become noisy.

Noisy signals are harder to trust.

One ecommerce client saw this pattern last year.

Organic sessions hovered around 82,000 per month for three months.

Content output increased.

Backlinks improved.

Positions barely moved.

When we reviewed CrUX field data, mobile CLS fluctuated week to week.

Not catastrophic.

Just inconsistent.

Users were tapping filters while layout blocks shifted under load.

Engagement signals softened.

Return visits dipped.

Search systems rely on repeated confirmation.

When behavior varies too much, evaluation confidence weakens and incremental gains stall.

There is also a lag.

Experience signals can take weeks to reflect real change, because field data is aggregated over a rolling window of roughly 28 days.

That delay misleads teams.

They assume performance did nothing.

But instability works the same way in reverse.

If experience degrades gradually, compounding benefits erode slowly, often before anyone notices the source.

Picture a savings account leaking one percent each month.

You do not panic at first.

But over time, the curve flattens.

Instability blocks growth quietly. It reduces confidence in repetition.

Next: when these signals can decide outcomes.

When CWV become decisive

Most of the time, Core Web Vitals are secondary.

Relevance wins first.

Authority wins second.

But there is a specific moment when experience tips the scale.

It happens when competing pages are equally relevant and similarly authoritative, because then search systems look for signals that reduce risk in repeated user interactions.

We see this in competitive B2B categories.

Two vendors target the same high-intent query.

Both publish strong content.

Both have comparable link profiles.

One template loads predictably and responds smoothly.

The other hesitates under heavier traffic.

The ranking difference is small.

Position three versus position five.

But conversion impact is significant.

In one marketplace case, stability improved while content and links stayed flat.

Over 90 days, top-3 keyword presence rose 14%.

Content did not change.

Backlinks remained stable.

The only visible shift was consistency.

Here is another scenario.

You are already in the top ten.

You hover between positions seven and eight.

Authority gaps are minimal.

In that zone, small experience differences can influence which result feels safer to serve repeatedly at scale.

Core Web Vitals rarely create visibility from nothing.

They become decisive when the rest of the system is already strong.

Think of them as a referee in a close match.

They do not determine the winner in a blowout.

They matter when the score is tight.

When performance is “good enough”, gains flatten.

When performance slips into poor territory, limitations appear quickly.

Core Web Vitals shift from negligible to limiting when competition is close and user tolerance is low.

Next: field data vs lab data.

Field data vs lab data – what search systems actually see

Many teams optimize for Lighthouse scores.

Search systems do not rank Lighthouse scores.

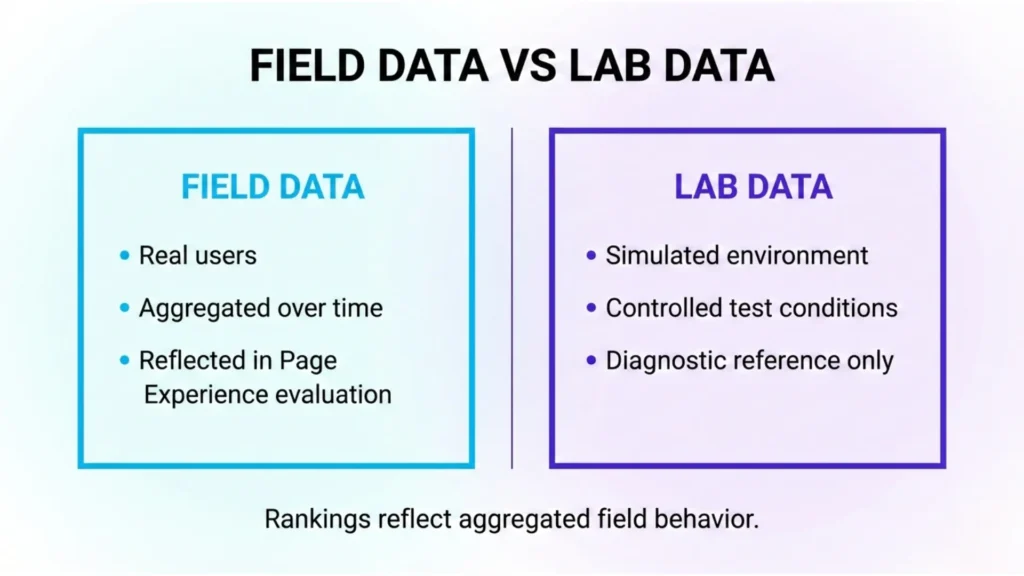

There are two types of data.

Field data and lab data.

They are not the same thing.

Field data comes from real users.

It is aggregated over time.

It reflects how your pages behave on real devices, real networks, real sessions.

This data is aggregated in CrUX and surfaced in Search Console.

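It is reported over a rolling 28-day window, and each metric is assessed at the 75th percentile of page loads, so a page counts as “good” only when at least three out of four visits meet the threshold.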
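The rolling window also explains why field data lags behind a fix. The sketch below assumes a hypothetical page whose LCP is 3.4 s on every visit until a fix ships and 1.8 s afterwards, with identical traffic every day. It recomputes the 28-day p75 each day (nearest-rank, a simplification of the histogram-based method CrUX uses) and reports the first day the page reads as good. Real traffic is noisier; the point is the shape of the delay.

```python
import math
from collections import deque
from typing import Optional, Sequence

WINDOW_DAYS = 28     # rolling field-data window
GOOD_LCP_MS = 2_500  # upper limit of the "good" LCP band


def p75(samples: Sequence[float]) -> float:
    """Nearest-rank 75th percentile."""
    ordered = sorted(samples)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def days_until_good(before_ms: float, after_ms: float,
                    visits_per_day: int = 100) -> Optional[int]:
    """Days after a fix until the rolling 28-day p75 LCP reads as good.

    Hypothetical model: every pre-fix visit has LCP `before_ms`, every
    post-fix visit has `after_ms`, and daily traffic is flat.
    """
    # Start from a full window of pre-fix days; maxlen drops the oldest day.
    window = deque(([before_ms] * visits_per_day for _ in range(WINDOW_DAYS)),
                   maxlen=WINDOW_DAYS)
    for day in range(1, WINDOW_DAYS + 1):
        window.append([after_ms] * visits_per_day)  # one more post-fix day
        samples = [lcp for day_visits in window for lcp in day_visits]
        if p75(samples) <= GOOD_LCP_MS:
            return day
    return None  # the new value never brings p75 into the good band


print(days_until_good(3_400, 1_800))  # 21
```

Three out of four visits in the window must come from after the fix before the 75th percentile moves, which is why a real improvement can look like “nothing happened” for weeks.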

Lab data is synthetic.

It is generated in controlled environments.

It helps diagnose issues, but it does not represent real-user variability.

We often see a disconnect here.

A team celebrates a lab score win.

Field signals still show instability across real sessions.

Why?

Because real users behave differently than lab simulations.

One client improved lab LCP from 3.1 seconds to 1.9 seconds.

They expected ranking growth.

Field data barely moved for four weeks.

Their mobile audience had slower networks than the simulated test profile.

The lab environment looked clean.

The field reality did not.

Search systems evaluate aggregated field behavior.

That means consistency across devices and traffic spikes matters more than isolated test wins.

This distinction changes decision-making.

You stop chasing perfect lab scores.

You start reducing variability in real sessions.

Field data reflects lived experience. Lab data reflects possibility.

Next: where friction takes over.

When stability is acceptable but growth still stalls

You can pass every Core Web Vitals threshold.

You can sit comfortably in the “good” range.

And growth can still flatten.

That moment confuses many teams.

They assume performance is solved.

They assume rankings should rise automatically.

They assume stability equals momentum.

It does not.

Stability removes one layer of risk.

It does not remove friction.

Friction is different.

Friction is the cumulative delay users feel across multiple interactions.

It is the invisible drag that builds across filters, dynamic content, and heavy scripts.

Imagine a page that loads cleanly.

No layout shifts.

Fast first interaction.

But every filter click pauses slightly.

Individually, each delay is small.

Collectively, they change behavior.

We saw this with a large category site.

Core Web Vitals were solid for three consecutive months.

Yet average pages per session dropped from 4.8 to 3.9.

The cause was not instability.

It was interaction latency stacking across session depth.

This is where stability stops being the limiter.

Friction becomes the dominant constraint.

Core Web Vitals measure reliability at the surface.

Friction lives in how loading and interactions hold up under sustained use, across every step of a session.

If your experience is stable but growth still plateaus, go deeper on loading speed and friction dynamics.

Stability protects rankings. Friction determines how far they scale.

Scientific context and sources

The sources below provide first-party documentation and peer-reviewed research that support the mechanisms described above, including experience measurement, evaluation uncertainty, and user sensitivity to delay.

- Core Web Vitals methodology and field data

Chrome User Experience Report (CrUX) – Google Chrome Developers

Explains how real-user performance data is collected, aggregated, and used to evaluate loading, responsiveness, and layout stability across millions of sessions.

https://developer.chrome.com/docs/crux/

- Page Experience as a ranking signal layer

Page Experience documentation – Google Search Central

Describes how Core Web Vitals sit within broader experience-related ranking signals and clarifies their relative weight compared to relevance and content quality.

https://developers.google.com/search/docs/appearance/page-experience

- Human sensitivity to response delay

The Need for Speed: How Website Performance Impacts User Engagement – Google Research (Think with Google)

Empirical data showing how incremental delay increases abandonment probability and reduces engagement across mobile sessions.

https://business.google.com/ca-en/think/marketing-strategies/mobile-page-speed-new-industry-benchmarks/

- Interaction latency and user perception

Impact of Response Latency on User Behaviour in Mobile Web Search – Arapakis, Park, Pielot – CHIIR ’21: Proceedings of the 2021 Conference on Human Information Interaction and Retrieval (2021)

Peer-reviewed research demonstrating how response delays influence perceived system quality and user trust, even when content relevance remains constant.

https://dl.acm.org/doi/10.1145/3406522.3446038

- Information retrieval and evaluation uncertainty

An Introduction to Information Retrieval – Manning, Raghavan, Schütze – Cambridge University Press

Foundational framework explaining how ranking systems reduce uncertainty and rely on consistent signals when comparing documents of similar relevance.

https://nlp.stanford.edu/IR-book/

Questions You Might Ponder

Do Core Web Vitals really affect SEO rankings?

Yes. Core Web Vitals are part of Google’s Page Experience ranking signals. They rarely create large ranking gains on their own, but poor scores can limit visibility. In competitive results, strong Core Web Vitals often act as a tie-breaker when relevance and authority are already similar.

What exactly do LCP, INP, and CLS measure?

Largest Contentful Paint (LCP) measures how quickly the main visible content appears. Interaction to Next Paint (INP) measures overall responsiveness after user input. Cumulative Layout Shift (CLS) measures unexpected layout movement. Together, they evaluate loading reliability, interaction stability, and visual predictability.

What are “good” Core Web Vitals scores?

Google defines “good” thresholds as LCP of 2.5 seconds or less, INP of 200 milliseconds or less, and CLS of 0.1 or less, measured at the 75th percentile of page loads. These thresholds are assessed against real-user field data. Pages falling into “needs improvement” or “poor” ranges show higher abandonment rates and may face ranking instability.

What’s the difference between field data and lab data for Core Web Vitals?

Field data comes from real users and is aggregated in the Chrome User Experience Report and Search Console. Google relies on this data for Page Experience evaluation. Lab data, generated by tools like Lighthouse, simulates performance and helps diagnose issues but does not directly influence rankings.

Can improving Core Web Vitals alone boost my traffic?

Improving very poor Core Web Vitals can recover lost visibility, especially on mobile. However, once pages reach “good” ranges, further performance gains rarely increase rankings without stronger relevance and authority. Core Web Vitals stabilize performance; they do not replace content strength or link authority.

Contact us and find out how we will take care of Core Web Vitals on your website.